Differences between revisions of “Joint entropy”

(Moved page from wikipedia:en:Joint entropy (history)) |

m (Moved page from wikipedia:en:Joint entropy (history)) |

||

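| Line 1: | Line 1: | ||

| − | This entry was machine-translated by Caiyun Xiaoyi (彩云小译) and has not yet been manually edited or proofread; we apologize for any inconvenience in reading. | + | This entry was machine-translated by Caiyun Xiaoyi (彩云小译) and has not yet been manually edited or proofread; we apologize for any inconvenience in reading. |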

| + | {{Short description|Measure of information in probability and information theory}} | ||

{{Information theory}} | {{Information theory}} | ||

| Line 7: | Line 7: | ||

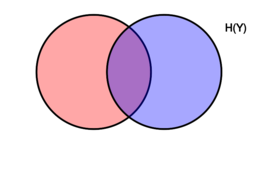

| + | [[Image:Entropy-mutual-information-relative-entropy-relation-diagram.svg|thumb|256px|right|A misleading<ref>{{Cite book|author=D.J.C. Mackay|title= Information theory, inferences, and learning algorithms}}{{rp|141}}</ref> [[Venn diagram]] showing additive, and subtractive relationships between various [[Quantities of information|information measures]] associated with correlated variables X and Y. The area contained by both circles is the [[joint entropy]] H(X,Y). The circle on the left (red and violet) is the [[Entropy (information theory)|individual entropy]] H(X), with the red being the [[conditional entropy]] H(X{{!}}Y). The circle on the right (blue and violet) is H(Y), with the blue being H(Y{{!}}X). The violet is the [[mutual information]] I(X;Y).]] | ||

| + | [[Image:Entropy-mutual-information-relative-entropy-relation-diagram.svg|thumb|256px|right|A misleading | ||


| Line 23: | Line 17: | ||

In [[information theory]], '''joint [[entropy (information theory)|entropy]]''' is a measure of the uncertainty associated with a set of [[random variables|variables]].<ref name=korn>{{cite book |author1=Theresa M. Korn |author2=Korn, Granino Arthur |title=Mathematical Handbook for Scientists and Engineers: Definitions, Theorems, and Formulas for Reference and Review |publisher=Dover Publications |location=New York |year= |isbn=0-486-41147-8 |oclc= |doi=}}</ref> | In [[information theory]], '''joint [[entropy (information theory)|entropy]]''' is a measure of the uncertainty associated with a set of [[random variables|variables]].<ref name=korn>{{cite book |author1=Theresa M. Korn |author2=Korn, Granino Arthur |title=Mathematical Handbook for Scientists and Engineers: Definitions, Theorems, and Formulas for Reference and Review |publisher=Dover Publications |location=New York |year= |isbn=0-486-41147-8 |oclc= |doi=}}</ref> | ||

| − | + | <math>\Eta(X,Y) \leq \Eta(X) + \Eta(Y)</math> | |


| Line 33: | Line 25: | ||

==Definition== | ==Definition== | ||

| − | + | <math>\Eta(X_1,\ldots, X_n) \leq \Eta(X_1) + \ldots + \Eta(X_n)</math> | |


The joint [[Shannon entropy]] (in [[bit]]s) of two discrete [[random variable|random variables]] <math>X</math> and <math>Y</math> with images <math>\mathcal X</math> and <math>\mathcal Y</math> is defined as<ref name=cover1991>{{cite book |author1=Thomas M. Cover |author2=Joy A. Thomas |title=Elements of Information Theory |publisher=Wiley |location=Hoboken, New Jersey |year= |isbn=0-471-24195-4}}</ref>{{rp|16}} | The joint [[Shannon entropy]] (in [[bit]]s) of two discrete [[random variable|random variables]] <math>X</math> and <math>Y</math> with images <math>\mathcal X</math> and <math>\mathcal Y</math> is defined as<ref name=cover1991>{{cite book |author1=Thomas M. Cover |author2=Joy A. Thomas |title=Elements of Information Theory |publisher=Wiley |location=Hoboken, New Jersey |year= |isbn=0-471-24195-4}}</ref>{{rp|16}} | ||


{{Equation box 1 | {{Equation box 1 | ||


|indent = | |indent = | ||

| − | + | Joint entropy is used in the definition of conditional entropy | |


|title= | |title= | ||


|equation = {{NumBlk||<math>\Eta(X,Y) = -\sum_{x\in\mathcal X} \sum_{y\in\mathcal Y} P(x,y) \log_2[P(x,y)]</math>|{{EquationRef|Eq.1}}}} | |equation = {{NumBlk||<math>\Eta(X,Y) = -\sum_{x\in\mathcal X} \sum_{y\in\mathcal Y} P(x,y) \log_2[P(x,y)]</math>|{{EquationRef|Eq.1}}}} | ||

| − | | | + | <math>\Eta(X|Y) = \Eta(X,Y) - \Eta(Y)\,</math>, |


|cellpadding= 6 | |cellpadding= 6 | ||


|border | |border | ||

| − | | | + | and <math display="block">\Eta(X_1,\dots,X_n) = \sum_{k=1}^n \Eta(X_k|X_{k-1},\dots, X_1)</math>It is also used in the definition of mutual information |


|border colour = #0073CF | |border colour = #0073CF | ||


|background colour=#F5FFFA}} | |background colour=#F5FFFA}} | ||

| − | + | <math>\operatorname{I}(X;Y) = \Eta(X) + \Eta(Y) - \Eta(X,Y)\,</math> | |


| Line 101: | Line 69: | ||

where <math>x</math> and <math>y</math> are particular values of <math>X</math> and <math>Y</math>, respectively, <math>P(x,y)</math> is the [[joint probability]] of these values occurring together, and <math>P(x,y) \log_2[P(x,y)]</math> is defined to be 0 if <math>P(x,y)=0</math>. | where <math>x</math> and <math>y</math> are particular values of <math>X</math> and <math>Y</math>, respectively, <math>P(x,y)</math> is the [[joint probability]] of these values occurring together, and <math>P(x,y) \log_2[P(x,y)]</math> is defined to be 0 if <math>P(x,y)=0</math>. | ||

| − | + | In quantum information theory, the joint entropy is generalized into the joint quantum entropy. | |


For more than two random variables <math>X_1, ..., X_n</math> this expands to | For more than two random variables <math>X_1, ..., X_n</math> this expands to | ||


| Line 121: | Line 81: | ||

{{Equation box 1 | {{Equation box 1 | ||

| − | + | A python package for computing all multivariate joint entropies, mutual informations, conditional mutual information, total correlations, information distance in a dataset of n variables is available. | |


|indent = | |indent = | ||


|title= | |title= | ||


{{Equation box 1 | {{Equation box 1 | ||

| Line 411: | Line 93: | ||

| − | | | + | |equation = {{NumBlk||<math>\Eta(X_1, ..., X_n) =

|indent = | |indent = | ||

| − | | | + | -\sum_{x_1 \in\mathcal X_1} ... \sum_{x_n \in\mathcal X_n} P(x_1, ..., x_n) \log_2[P(x_1, ..., x_n)]</math>|{{EquationRef|Eq.2}}}}

|title= | |title= | ||

| − | | | + | |cellpadding= 6

|equation = }} | |equation = }} | ||

| − | | | + | |border

|cellpadding= 6 | |cellpadding= 6 | ||

| − | |border | + | |border colour = #0073CF

|border | |border | ||

| Line 441: | Line 123: | ||

| − | | | + | |background colour=#F5FFFA}}

|border colour = #0073CF | |border colour = #0073CF | ||

| Line 447: | Line 129: | ||

|background colour=#F5FFFA}} | |background colour=#F5FFFA}} | ||

| + | where <math>x_1,...,x_n</math> are particular values of <math>X_1,...,X_n</math>, respectively, <math>P(x_1, ..., x_n)</math> is the probability of these values occurring together, and <math>P(x_1, ..., x_n) \log_2[P(x_1, ..., x_n)]</math> is defined to be 0 if <math>P(x_1, ..., x_n)=0</math>. | ||

| Line 459: | Line 141: | ||

For more than two continuous random variables <math>X_1, ..., X_n</math> the definition is generalized to: | For more than two continuous random variables <math>X_1, ..., X_n</math> the definition is generalized to: | ||


| + | ==Properties== | ||

| Line 469: | Line 149: | ||

{{Equation box 1 | {{Equation box 1 | ||

| − | + | ===Nonnegativity=== | |

|indent = | |indent = | ||

|title= | |title= | ||

| − | + | The joint entropy of a set of random variables is a nonnegative number. | |

|equation = }} | |equation = }} | ||

|cellpadding= 6 | |cellpadding= 6 | ||

| − | + | :<math>\Eta(X,Y) \geq 0</math> | |

|border | |border | ||

| Line 503: | Line 181: | ||

|border colour = #0073CF | |border colour = #0073CF | ||

| Line 509: | Line 187: | ||

| − | + | :<math>\Eta(X_1,\ldots, X_n) \geq 0</math> | |

|background colour=#F5FFFA}} | |background colour=#F5FFFA}} | ||

| + | ===Greater than individual entropies=== | ||

| + | The integral is taken over the support of <math>f</math>. It is possible that the integral does not exist in which case we say that the differential entropy is not defined. | ||


| − | + | The joint entropy of a set of variables is greater than or equal to the maximum of all of the individual entropies of the variables in the set. | |


As in the discrete case the joint differential entropy of a set of random variables is smaller or equal than the sum of the entropies of the individual random variables: | As in the discrete case the joint differential entropy of a set of random variables is smaller or equal than the sum of the entropies of the individual random variables: | ||

| Line 537: | Line 211: | ||

| − | :<math> | + | :<math>\Eta(X,Y) \geq \max \left[\Eta(X),\Eta(Y) \right]</math> |

<math>h(X_1,X_2, \ldots,X_n) \le \sum_{i=1}^n h(X_i)</math> | <math>h(X_1,X_2, \ldots,X_n) \le \sum_{i=1}^n h(X_i)</math> | ||


| − | + | :<math>\Eta \bigl(X_1,\ldots, X_n \bigr) \geq \max_{1 \le i \le n} | |


The following chain rule holds for two random variables: | The following chain rule holds for two random variables: | ||

| Line 553: | Line 225: | ||

| − | + | \Bigl\{ \Eta\bigl(X_i\bigr) \Bigr\}</math> | |

<math>h(X,Y) = h(X|Y) + h(Y)</math> | <math>h(X,Y) = h(X|Y) + h(Y)</math> | ||


In the case of more than two random variables this generalizes to: | In the case of more than two random variables this generalizes to: | ||

| Line 565: | Line 237: | ||

| − | + | ===Less than or equal to the sum of individual entropies=== | |

<math>h(X_1,X_2, \ldots,X_n) = \sum_{i=1}^n h(X_i|X_1,X_2, \ldots,X_{i-1})</math> | <math>h(X_1,X_2, \ldots,X_n) = \sum_{i=1}^n h(X_i|X_1,X_2, \ldots,X_{i-1})</math> | ||


Joint differential entropy is also used in the definition of the mutual information between continuous random variables: | Joint differential entropy is also used in the definition of the mutual information between continuous random variables: | ||

| Line 577: | Line 249: | ||

| − | + | The joint entropy of a set of variables is less than or equal to the sum of the individual entropies of the variables in the set. This is an example of [[subadditivity]]. This inequality is an equality if and only if <math>X</math> and <math>Y</math> are [[statistically independent]].<ref name=cover1991 />{{rp|30}} | |

<math>\operatorname{I}(X,Y)=h(X)+h(Y)-h(X,Y)</math> | <math>\operatorname{I}(X,Y)=h(X)+h(Y)-h(X,Y)</math> | ||


| + | :<math>\Eta(X,Y) \leq \Eta(X) + \Eta(Y)</math> | ||


| − | + | :<math>\Eta(X_1,\ldots, X_n) \leq \Eta(X_1) + \ldots + \Eta(X_n)</math> | |


Category:Entropy and information | Category:Entropy and information | ||

| Line 607: | Line 269: | ||

| + | ==Relations to other entropy measures== | ||


de:Bedingte Entropie#Blockentropie | de:Bedingte Entropie#Blockentropie | ||

Revision as of 16:06, 25 October 2020 (Sunday)

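This entry was machine-translated by Caiyun Xiaoyi (彩云小译) and has not yet been manually edited or proofread; we apologize for any inconvenience in reading.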

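[[Image:Entropy-mutual-information-relative-entropy-relation-diagram.svg|thumb|256px|right|A misleading[1] Venn diagram showing additive and subtractive relationships between various information measures associated with correlated variables X and Y. The area contained by both circles is the joint entropy H(X,Y). The circle on the left (red and violet) is the individual entropy H(X), with the red being the conditional entropy H(X|Y). The circle on the right (blue and violet) is H(Y), with the blue being H(Y|X). The violet is the mutual information I(X;Y).]]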

In information theory, joint entropy is a measure of the uncertainty associated with a set of variables.[2]


Definition


The joint Shannon entropy (in bits) of two discrete random variables [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] with images [math]\displaystyle{ \mathcal X }[/math] and [math]\displaystyle{ \mathcal Y }[/math] is defined as[3]:16

[math]\displaystyle{ \Eta(X,Y) = -\sum_{x\in\mathcal X} \sum_{y\in\mathcal Y} P(x,y) \log_2[P(x,y)] }[/math] (Eq.1)

where [math]\displaystyle{ x }[/math] and [math]\displaystyle{ y }[/math] are particular values of [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math], respectively, [math]\displaystyle{ P(x,y) }[/math] is the joint probability of these values occurring together, and [math]\displaystyle{ P(x,y) \log_2[P(x,y)] }[/math] is defined to be 0 if [math]\displaystyle{ P(x,y)=0 }[/math].

Joint entropy is used in the definition of conditional entropy

[math]\displaystyle{ \Eta(X|Y) = \Eta(X,Y) - \Eta(Y)\, }[/math],

and

[math]\displaystyle{ \Eta(X_1,\dots,X_n) = \sum_{k=1}^n \Eta(X_k|X_{k-1},\dots, X_1) }[/math]

It is also used in the definition of mutual information

[math]\displaystyle{ \operatorname{I}(X;Y) = \Eta(X) + \Eta(Y) - \Eta(X,Y)\, }[/math]
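Eq.1 can be evaluated directly from a table of joint probabilities. The following is a minimal sketch, assuming the joint distribution is supplied as a Python dictionary keyed by value pairs; the function name `joint_entropy` and both example distributions are hypothetical and chosen only for illustration.

```python
import math

def joint_entropy(joint_pmf):
    """Joint Shannon entropy in bits of a joint pmf given as {(x, y): P(x, y)}."""
    total = 0.0
    for p in joint_pmf.values():
        if p > 0:  # convention from the definition: P log2 P is taken to be 0 when P = 0
            total -= p * math.log2(p)
    return total

# Hypothetical example: two fair, independent bits.
fair_bits = {(0, 0): 0.25, (0, 1): 0.25, (1, 0): 0.25, (1, 1): 0.25}
# Hypothetical example: a fair bit and an exact copy of it.
copied_bit = {(0, 0): 0.5, (0, 1): 0.0, (1, 0): 0.0, (1, 1): 0.5}

h_fair = joint_entropy(fair_bits)     # 2.0 bits
h_copied = joint_entropy(copied_bit)  # 1.0 bit
```

The copied bit adds no uncertainty beyond the first bit, so its joint entropy equals the entropy of a single fair bit.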

In quantum information theory, the joint entropy is generalized into the joint quantum entropy.


For more than two random variables [math]\displaystyle{ X_1, ..., X_n }[/math], Eq.1 expands to

[math]\displaystyle{ \Eta(X_1, ..., X_n) = -\sum_{x_1 \in\mathcal X_1} ... \sum_{x_n \in\mathcal X_n} P(x_1, ..., x_n) \log_2[P(x_1, ..., x_n)] }[/math] (Eq.2)

where [math]\displaystyle{ x_1,...,x_n }[/math] are particular values of [math]\displaystyle{ X_1,...,X_n }[/math], respectively, [math]\displaystyle{ P(x_1, ..., x_n) }[/math] is the probability of these values occurring together, and [math]\displaystyle{ P(x_1, ..., x_n) \log_2[P(x_1, ..., x_n)] }[/math] is defined to be 0 if [math]\displaystyle{ P(x_1, ..., x_n)=0 }[/math].

A Python package for computing all multivariate joint entropies, mutual informations, conditional mutual informations, total correlations, and information distances in a dataset of n variables is available.
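For a dataset of n discrete variables, Eq.2 can be estimated by replacing each probability with the observed relative frequency of the corresponding tuple. The sketch below assumes the data arrive as a list of equal-length tuples; `empirical_joint_entropy` is a hypothetical helper written for illustration and is not the API of any particular package.

```python
import math
from collections import Counter

def empirical_joint_entropy(rows):
    """Plug-in estimate (bits) of H(X_1, ..., X_n) from a list of equal-length tuples."""
    counts = Counter(tuple(row) for row in rows)
    n_rows = len(rows)
    entropy = 0.0
    for count in counts.values():
        p = count / n_rows  # empirical P(x_1, ..., x_n); unseen tuples contribute 0
        entropy -= p * math.log2(p)
    return entropy

# Hypothetical dataset: three binary variables, the third being the XOR of the first two.
rows = [(a, b, a ^ b) for a in (0, 1) for b in (0, 1)]
h_xor = empirical_joint_entropy(rows)  # four equally likely triples, so 2.0 bits
```

The third variable is determined by the other two, so the three variables jointly carry only two bits. On small samples a plug-in estimate of this kind tends to underestimate the true entropy, so it is best read as a rough illustration.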

Properties

Nonnegativity

The joint entropy of a set of random variables is a nonnegative number.

- [math]\displaystyle{ \Eta(X,Y) \geq 0 }[/math]

- [math]\displaystyle{ \Eta(X_1,\ldots, X_n) \geq 0 }[/math]

Greater than individual entropies

The joint entropy of a set of variables is greater than or equal to the maximum of all of the individual entropies of the variables in the set.

- [math]\displaystyle{ \Eta(X,Y) \geq \max \left[\Eta(X),\Eta(Y) \right] }[/math]

- [math]\displaystyle{ \Eta \bigl(X_1,\ldots, X_n \bigr) \geq \max_{1 \le i \le n} \Bigl\{ \Eta\bigl(X_i\bigr) \Bigr\} }[/math]

Less than or equal to the sum of individual entropies

The joint entropy of a set of variables is less than or equal to the sum of the individual entropies of the variables in the set. This is an example of subadditivity. This inequality is an equality if and only if [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] are statistically independent.[3]:30

- [math]\displaystyle{ \Eta(X,Y) \leq \Eta(X) + \Eta(Y) }[/math]

- [math]\displaystyle{ \Eta(X_1,\ldots, X_n) \leq \Eta(X_1) + \ldots + \Eta(X_n) }[/math]

Joint differential entropy

For more than two continuous random variables [math]\displaystyle{ X_1, ..., X_n }[/math] with joint probability density function [math]\displaystyle{ f }[/math], the definition is generalized to:

[math]\displaystyle{ h(X_1,\ldots,X_n) = -\int f(x_1,\ldots,x_n) \log f(x_1,\ldots,x_n)\, dx_1 \ldots dx_n }[/math]

The integral is taken over the support of [math]\displaystyle{ f }[/math]. It is possible that the integral does not exist, in which case we say that the differential entropy is not defined.

As in the discrete case, the joint differential entropy of a set of random variables is less than or equal to the sum of the entropies of the individual random variables:

[math]\displaystyle{ h(X_1,X_2, \ldots,X_n) \le \sum_{i=1}^n h(X_i) }[/math]

The following chain rule holds for two random variables:

[math]\displaystyle{ h(X,Y) = h(X|Y) + h(Y) }[/math]

In the case of more than two random variables this generalizes to:

[math]\displaystyle{ h(X_1,X_2, \ldots,X_n) = \sum_{i=1}^n h(X_i|X_1,X_2, \ldots,X_{i-1}) }[/math]

Joint differential entropy is also used in the definition of the mutual information between continuous random variables:

[math]\displaystyle{ \operatorname{I}(X;Y)=h(X)+h(Y)-h(X,Y) }[/math]
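The three properties of discrete joint entropy stated above (nonnegativity, the lower bound by the largest individual entropy, and subadditivity) can be checked numerically on small examples. This is a sketch under stated assumptions: the helper names `entropy`, `marginal` and `check`, and both joint distributions, are hypothetical and serve only as illustration.

```python
import math

def entropy(pmf):
    """Shannon entropy in bits of a pmf given as {outcome: probability}."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

def marginal(joint_pmf, axis):
    """Marginal pmf of coordinate `axis` from a joint pmf {(x, y): P(x, y)}."""
    out = {}
    for outcome, p in joint_pmf.items():
        out[outcome[axis]] = out.get(outcome[axis], 0.0) + p
    return out

# Hypothetical dependent pair (values chosen for illustration only).
dependent = {(0, 0): 0.5, (0, 1): 0.25, (1, 0): 0.0, (1, 1): 0.25}
# Product of the marginals P(X=0)=0.75 and P(Y=0)=0.5, so X and Y are independent.
independent = {(0, 0): 0.375, (0, 1): 0.375, (1, 0): 0.125, (1, 1): 0.125}

def check(joint_pmf, tol=1e-12):
    h_xy = entropy(joint_pmf)
    h_x = entropy(marginal(joint_pmf, 0))
    h_y = entropy(marginal(joint_pmf, 1))
    return {
        "nonnegative": h_xy >= 0,
        "at_least_max": h_xy >= max(h_x, h_y) - tol,
        "subadditive": h_xy <= h_x + h_y + tol,
        "gap": h_x + h_y - h_xy,  # vanishes (up to rounding) when X and Y are independent
    }

dep = check(dependent)
ind = check(independent)
```

For the dependent pair the gap between the sum of the individual entropies and the joint entropy is strictly positive, while for the independent pair it vanishes up to floating-point rounding, matching the equality condition for subadditivity.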

Category:Entropy and information


Relations to other entropy measures
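The identities [math]\displaystyle{ \Eta(X|Y) = \Eta(X,Y) - \Eta(Y) }[/math] and [math]\displaystyle{ \operatorname{I}(X;Y) = \Eta(X) + \Eta(Y) - \Eta(X,Y) }[/math] from this article can be turned into a short computation once the joint distribution is known. The sketch below assumes a hypothetical noisy binary channel; the helper names and the numbers are illustrative and not taken from the source.

```python
import math

def entropy(pmf):
    """Shannon entropy in bits of a pmf given as {outcome: probability}."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

def marginal(joint_pmf, axis):
    """Marginal pmf of coordinate `axis` from a joint pmf {(x, y): P(x, y)}."""
    out = {}
    for outcome, p in joint_pmf.items():
        out[outcome[axis]] = out.get(outcome[axis], 0.0) + p
    return out

# Hypothetical channel: X is a fair bit and Y equals X with probability 0.75.
joint = {(0, 0): 0.375, (0, 1): 0.125, (1, 0): 0.125, (1, 1): 0.375}

h_xy = entropy(joint)
h_x = entropy(marginal(joint, 0))
h_y = entropy(marginal(joint, 1))

h_x_given_y = h_xy - h_y        # conditional entropy H(X|Y) = H(X,Y) - H(Y)
mutual_info = h_x + h_y - h_xy  # mutual information I(X;Y) = H(X) + H(Y) - H(X,Y)
```

Here both marginals are fair bits, so the conditional entropy is the binary entropy of the flip probability 0.25 (about 0.811 bits) and the mutual information is the remaining part of the one bit carried by X (about 0.189 bits).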

de:Bedingte Entropie#Blockentropie


This page was moved from wikipedia:en:Joint entropy. Its edit history can be viewed at 联合熵/edithistory

- [1] D.J.C. MacKay. Information Theory, Inference, and Learning Algorithms. p. 141.

- [2] Theresa M. Korn; Granino Arthur Korn. Mathematical Handbook for Scientists and Engineers: Definitions, Theorems, and Formulas for Reference and Review. New York: Dover Publications. ISBN 0-486-41147-8.

- [3] Thomas M. Cover; Joy A. Thomas. Elements of Information Theory. Hoboken, New Jersey: Wiley. ISBN 0-471-24195-4.