Joint entropy

Revision as of 15:47, 3 November 2020 (Tuesday)

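This entry was machine-translated by Caiyun Xiaoyi and has not yet been manually edited or proofread; we apologize for any inconvenience.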

In information theory, joint entropy is a measure of the uncertainty associated with a set of variables.[1]

Definition

The joint Shannon entropy (in bits) of two discrete random variables [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] with images [math]\displaystyle{ \mathcal X }[/math] and [math]\displaystyle{ \mathcal Y }[/math] is defined as[2]:16

[math]\displaystyle{ \Eta(X,Y) = -\sum_{x\in\mathcal X} \sum_{y\in\mathcal Y} P(x,y) \log_2[P(x,y)] }[/math] (Eq.1)

where [math]\displaystyle{ x }[/math] and [math]\displaystyle{ y }[/math] are particular values of [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math], respectively, [math]\displaystyle{ P(x,y) }[/math] is the joint probability of these values occurring together, and [math]\displaystyle{ P(x,y) \log_2[P(x,y)] }[/math] is defined to be 0 if [math]\displaystyle{ P(x,y)=0 }[/math].
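Eq.1 can be evaluated directly from a table of joint probabilities. The following is a minimal sketch, assuming the joint distribution is supplied as a nested list in which `joint[i][j]` holds the probability of the i-th value of X occurring together with the j-th value of Y; the function name `joint_entropy` and the example table are illustrative and not taken from the cited sources.

```python
import math

def joint_entropy(joint):
    """Joint Shannon entropy in bits of a joint probability table.

    joint[i][j] is assumed to hold P(x_i, y_j). Entries equal to zero are
    skipped, which implements the convention that P log2 P = 0 when P = 0.
    """
    return -sum(p * math.log2(p) for row in joint for p in row if p > 0)

# Hypothetical joint distribution of two binary variables.
P = [[0.5, 0.25],
     [0.0, 0.25]]

print(joint_entropy(P))  # 1.5
```

Skipping the zero entries rather than evaluating `math.log2(0)` avoids a domain error while matching the stated convention.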

For more than two random variables [math]\displaystyle{ X_1, ..., X_n }[/math] this expands to

[math]\displaystyle{ \Eta(X_1, ..., X_n) = -\sum_{x_1 \in\mathcal X_1} ... \sum_{x_n \in\mathcal X_n} P(x_1, ..., x_n) \log_2[P(x_1, ..., x_n)] }[/math] (Eq.2)

where [math]\displaystyle{ x_1,...,x_n }[/math] are particular values of [math]\displaystyle{ X_1,...,X_n }[/math], respectively, [math]\displaystyle{ P(x_1, ..., x_n) }[/math] is the probability of these values occurring together, and [math]\displaystyle{ P(x_1, ..., x_n) \log_2[P(x_1, ..., x_n)] }[/math] is defined to be 0 if [math]\displaystyle{ P(x_1, ..., x_n)=0 }[/math].
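The n-variable form in Eq.2 is the same sum taken over tuples of values. A minimal sketch, assuming the joint distribution is given as a dictionary that maps each tuple of values to its probability (the name `joint_entropy_n` and the three-coin example are illustrative assumptions):

```python
import math
from itertools import product

def joint_entropy_n(pmf):
    """Joint Shannon entropy in bits of n discrete variables.

    pmf is assumed to map each tuple (x_1, ..., x_n) to P(x_1, ..., x_n).
    Zero-probability tuples are skipped, following the 0 log 0 = 0 convention.
    """
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

# Three independent fair coins: 8 equally likely outcomes.
pmf = {outcome: 1 / 8 for outcome in product((0, 1), repeat=3)}

print(joint_entropy_n(pmf))  # 3.0
```

A dictionary keyed by tuples avoids allocating a full n-dimensional table when most combinations of values have probability zero.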

Properties

Nonnegativity

The joint entropy of a set of random variables is a nonnegative number.

- [math]\displaystyle{ \Eta(X,Y) \geq 0 }[/math]

- [math]\displaystyle{ \Eta(X_1,\ldots, X_n) \geq 0 }[/math]

Greater than or equal to individual entropies

The joint entropy of a set of variables is greater than or equal to the maximum of all of the individual entropies of the variables in the set.

- [math]\displaystyle{ \Eta(X,Y) \geq \max \left[\Eta(X),\Eta(Y) \right] }[/math]

- [math]\displaystyle{ \Eta \bigl(X_1,\ldots, X_n \bigr) \geq \max_{1 \le i \le n} \Bigl\{ \Eta\bigl(X_i\bigr) \Bigr\} }[/math]

Less than or equal to the sum of individual entropies

The joint entropy of a set of variables is less than or equal to the sum of the individual entropies of the variables in the set. This is an example of subadditivity. This inequality is an equality if and only if [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] are statistically independent.[2]:30

- [math]\displaystyle{ \Eta(X,Y) \leq \Eta(X) + \Eta(Y) }[/math]

- [math]\displaystyle{ \Eta(X_1,\ldots, X_n) \leq \Eta(X_1) + \ldots + \Eta(X_n) }[/math]
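The lower and upper bounds above can be checked numerically for two variables. The sketch below assumes a joint table with `joint[i][j]` holding P(x_i, y_j); the helper names and both example tables are hypothetical. It compares one dependent and one independent distribution, and the gap between the sum of the marginal entropies and the joint entropy vanishes (up to rounding) only in the independent case.

```python
import math

def entropy(probs):
    """Shannon entropy in bits; zero-probability terms contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def entropies(joint):
    """Return (H(X), H(Y), H(X,Y)) for a table with joint[i][j] = P(x_i, y_j)."""
    p_x = [sum(row) for row in joint]        # marginal of X: row sums
    p_y = [sum(col) for col in zip(*joint)]  # marginal of Y: column sums
    h_xy = entropy(p for row in joint for p in row)
    return entropy(p_x), entropy(p_y), h_xy

def satisfies_bounds(joint, tol=1e-12):
    """Check max(H(X), H(Y)) <= H(X,Y) <= H(X) + H(Y) up to a tolerance."""
    h_x, h_y, h_xy = entropies(joint)
    return max(h_x, h_y) - tol <= h_xy <= h_x + h_y + tol

dependent = [[0.5, 0.25], [0.0, 0.25]]
independent = [[px * py for py in (0.6, 0.4)] for px in (0.3, 0.7)]

for table in (dependent, independent):
    h_x, h_y, h_xy = entropies(table)
    # Gap H(X) + H(Y) - H(X,Y): positive when dependent, about 0 when independent.
    print(satisfies_bounds(table), h_x + h_y - h_xy)
```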

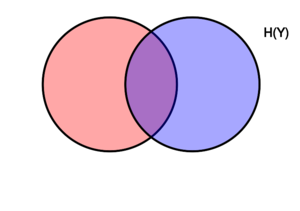

Relations to other entropy measures

Joint entropy is used in the definition of conditional entropy[2]:22

- [math]\displaystyle{ \Eta(X|Y) = \Eta(X,Y) - \Eta(Y)\, }[/math],

and [math]\displaystyle{ \Eta(X_1,\dots,X_n) = \sum_{k=1}^n \Eta(X_k|X_{k-1},\dots, X_1) }[/math].

It is also used in the definition of mutual information[2]:21

- [math]\displaystyle{ \operatorname{I}(X;Y) = \Eta(X) + \Eta(Y) - \Eta(X,Y)\, }[/math]
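Both identities can be illustrated on a small joint table. In this sketch, `conditional_entropy` uses the identity above, while `conditional_entropy_direct` uses the standard definition of conditional entropy as the P(y)-weighted average of the entropies of the conditional distributions P(x | y), which is not spelled out in this article and is included here only as a cross-check. All function names and the example table are assumptions made for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy in bits; zero-probability terms contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def conditional_entropy(joint):
    """H(X|Y) = H(X,Y) - H(Y) for a table with joint[i][j] = P(x_i, y_j)."""
    p_y = [sum(col) for col in zip(*joint)]
    return entropy(p for row in joint for p in row) - entropy(p_y)

def conditional_entropy_direct(joint):
    """H(X|Y) as the P(y)-weighted average of the entropies of P(x | y)."""
    total = 0.0
    for col in zip(*joint):  # one column per value of Y
        p_y = sum(col)
        if p_y > 0:
            total += p_y * entropy(p / p_y for p in col)
    return total

def mutual_information(joint):
    """I(X;Y) = H(X) + H(Y) - H(X,Y)."""
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    return entropy(p_x) + entropy(p_y) - entropy(p for row in joint for p in row)

P = [[0.5, 0.25],
     [0.0, 0.25]]  # hypothetical joint distribution

print(conditional_entropy(P))         # 0.5
print(conditional_entropy_direct(P))  # 0.5
print(mutual_information(P))
```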

In quantum information theory, the joint entropy is generalized into the joint quantum entropy.

Applications

A Python package is available for computing all multivariate joint entropies, mutual informations, conditional mutual informations, total correlations, and information distances in a dataset of n variables.[3]

Joint differential entropy

Definition

The above definition is for discrete random variables; an analogous definition holds for continuous random variables. The continuous version of discrete joint entropy is called joint differential (or continuous) entropy. Let [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] be continuous random variables with a joint probability density function [math]\displaystyle{ f(x,y) }[/math]. The differential joint entropy [math]\displaystyle{ h(X,Y) }[/math] is defined as[2]:249

[math]\displaystyle{ h(X,Y) = -\int_{\mathcal X , \mathcal Y} f(x,y)\log f(x,y)\,dx dy }[/math] (Eq.3)

For more than two continuous random variables [math]\displaystyle{ X_1, ..., X_n }[/math] the definition is generalized to:

[math]\displaystyle{ h(X_1, \ldots,X_n) = -\int f(x_1, \ldots,x_n)\log f(x_1, \ldots,x_n)\,dx_1 \ldots dx_n }[/math] (Eq.4)

The integral is taken over the support of [math]\displaystyle{ f }[/math]. It is possible that the integral does not exist, in which case the differential entropy is said to be undefined.
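As an illustration of the two-variable integral, the sketch below approximates it with a Riemann sum for a zero-mean bivariate Gaussian density and compares the result with the standard closed form for a Gaussian, h = ½ log((2πe)² det Σ), which is a textbook result not stated in this article. The natural logarithm is assumed, so the result is in nats. The function names, the truncation of the plane to a square of half-width 8, and the grid size are illustrative choices.

```python
import math

def gaussian_joint_entropy(var_x, var_y, cov_xy):
    """Closed-form h(X,Y) in nats for a bivariate Gaussian (standard result)."""
    det = var_x * var_y - cov_xy ** 2
    return 0.5 * math.log((2 * math.pi * math.e) ** 2 * det)

def numeric_joint_entropy(var_x, var_y, cov_xy, half_width=8.0, points=201):
    """Riemann-sum approximation of -integral f log f over a truncated square."""
    det = var_x * var_y - cov_xy ** 2
    # Entries of the inverse covariance matrix.
    a, b, c = var_y / det, -cov_xy / det, var_x / det
    log_norm = math.log(2 * math.pi * math.sqrt(det))
    step = 2 * half_width / (points - 1)
    total = 0.0
    for i in range(points):
        x = -half_width + i * step
        for j in range(points):
            y = -half_width + j * step
            # log f(x, y) for the zero-mean Gaussian density.
            log_f = -0.5 * (a * x * x + 2 * b * x * y + c * y * y) - log_norm
            total -= math.exp(log_f) * log_f
    return total * step * step

print(gaussian_joint_entropy(1.0, 1.0, 0.5))
print(numeric_joint_entropy(1.0, 1.0, 0.5))
```

Working with log f directly, rather than taking the logarithm of the computed density, avoids evaluating log(0) where the density underflows far from the mean.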

Properties

As in the discrete case, the joint differential entropy of a set of random variables is less than or equal to the sum of the entropies of the individual random variables:

- [math]\displaystyle{ h(X_1,X_2, \ldots,X_n) \le \sum_{i=1}^n h(X_i) }[/math][2]:253

The following chain rule holds for two random variables:

- [math]\displaystyle{ h(X,Y) = h(X|Y) + h(Y) }[/math]

In the case of more than two random variables this generalizes to:[2]:253

- [math]\displaystyle{ h(X_1,X_2, \ldots,X_n) = \sum_{i=1}^n h(X_i|X_1,X_2, \ldots,X_{i-1}) }[/math]

Joint differential entropy is also used in the definition of the mutual information between continuous random variables:

- [math]\displaystyle{ \operatorname{I}(X;Y)=h(X)+h(Y)-h(X,Y) }[/math]
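For jointly Gaussian variables these relations can be evaluated in closed form. The sketch below assumes the standard Gaussian entropy formulas h(X) = ½ log(2πe σ²) and h(X,Y) = ½ log((2πe)² det Σ), in nats, neither of which is stated in this article. It then applies the chain rule and the mutual-information identity above, and for correlation coefficient ρ the mutual information reduces to −½ log(1 − ρ²). All function names are hypothetical.

```python
import math

def h_gauss_1d(var):
    """Differential entropy in nats of a Gaussian with variance var."""
    return 0.5 * math.log(2 * math.pi * math.e * var)

def h_gauss_2d(var_x, var_y, cov_xy):
    """Joint differential entropy in nats of a bivariate Gaussian."""
    det = var_x * var_y - cov_xy ** 2
    return 0.5 * math.log((2 * math.pi * math.e) ** 2 * det)

def h_x_given_y(var_x, var_y, cov_xy):
    """Chain rule rearranged: h(X|Y) = h(X,Y) - h(Y)."""
    return h_gauss_2d(var_x, var_y, cov_xy) - h_gauss_1d(var_y)

def gaussian_mutual_information(var_x, var_y, cov_xy):
    """I(X;Y) = h(X) + h(Y) - h(X,Y)."""
    return h_gauss_1d(var_x) + h_gauss_1d(var_y) - h_gauss_2d(var_x, var_y, cov_xy)

rho = 0.5  # correlation of two unit-variance Gaussians
print(gaussian_mutual_information(1.0, 1.0, rho))
print(-0.5 * math.log(1 - rho ** 2))  # same value up to rounding
```

Because the mutual information is nonnegative here, the same computation also illustrates the subadditivity bound h(X,Y) ≤ h(X) + h(Y).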

References

- [1] Theresa M. Korn; Granino Arthur Korn. Mathematical Handbook for Scientists and Engineers: Definitions, Theorems, and Formulas for Reference and Review. New York: Dover Publications. ISBN 0-486-41147-8.

- [2] Thomas M. Cover; Joy A. Thomas. Elements of Information Theory. Hoboken, New Jersey: Wiley. ISBN 0-471-24195-4.

- [3] "InfoTopo: Topological Information Data Analysis. Deep statistical unsupervised and supervised learning - File Exchange - Github". github.com/pierrebaudot/infotopopy/. Retrieved 26 September 2020.