Difference between revisions of "Affective computing"

| Line 418: | Line 418: | ||

| Contempt || R12A+R14A | | Contempt || R12A+R14A | ||

|} | |} | ||

| + | <nowiki>|- | Happiness || 6 + 12 |- | Sadness || 1 + 4 + 15 |- | Surprise || 1 + 2 + 5B + 26 |- | Fear || 1 + 2 + 4 + 5 + 20 + 26 |- | Anger || 4 + 5 + 7 + 23 |- | Disgust || 9 + 15 + 16 |- | Contempt || R12A + R14A |}</nowiki> | ||

| − | |||

| − | |||

| − | |||

====Challenges in facial detection==== | ====Challenges in facial detection==== | ||

| − | + | ||

As with every computational practice, affect detection by facial processing faces obstacles that must be overcome to unlock the full potential of the algorithm or method employed. In the early days of almost every kind of AI-based detection (speech recognition, face recognition, affect recognition), the accuracy of modeling and tracking was an issue. As hardware evolves, as more data are collected, and as new discoveries are made and new practices introduced, this lack of accuracy fades, leaving behind noise issues. However, methods for noise removal exist, including neighborhood averaging, linear Gaussian smoothing, median filtering, and newer methods such as the Bacterial Foraging Optimization Algorithm (Clever Algorithms. "Bacterial Foraging Optimization Algorithm – Swarm Algorithms – Clever Algorithms". Clever Algorithms. Retrieved 21 March 2011; "Soft Computing". Soft Computing. Retrieved 18 March 2011). | As with every computational practice, affect detection by facial processing faces obstacles that must be overcome to unlock the full potential of the algorithm or method employed. In the early days of almost every kind of AI-based detection (speech recognition, face recognition, affect recognition), the accuracy of modeling and tracking was an issue. As hardware evolves, as more data are collected, and as new discoveries are made and new practices introduced, this lack of accuracy fades, leaving behind noise issues. However, methods for noise removal exist, including neighborhood averaging, linear Gaussian smoothing, median filtering, and newer methods such as the Bacterial Foraging Optimization Algorithm (Clever Algorithms. "Bacterial Foraging Optimization Algorithm – Swarm Algorithms – Clever Algorithms". Clever Algorithms. Retrieved 21 March 2011; "Soft Computing". Soft Computing. Retrieved 18 March 2011). | ||
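
Two of the noise-removal methods named above, neighborhood averaging and median filtering, can be sketched in a few lines. This is a minimal illustration, assuming a grayscale image stored as a list of rows of numbers; the function names and the `radius` parameter are hypothetical and not taken from any cited source.

```python
from statistics import median


def _neighborhood(image, row, col, radius):
    """Collect the pixel values in a square window, clipped at the image border."""
    rows, cols = len(image), len(image[0])
    return [
        image[r][c]
        for r in range(max(0, row - radius), min(rows, row + radius + 1))
        for c in range(max(0, col - radius), min(cols, col + radius + 1))
    ]


def mean_filter(image, radius=1):
    """Neighborhood averaging: replace each pixel by the mean of its window."""
    return [
        [
            sum(_neighborhood(image, r, c, radius)) / len(_neighborhood(image, r, c, radius))
            for c in range(len(image[0]))
        ]
        for r in range(len(image))
    ]


def median_filter(image, radius=1):
    """Median filtering: replace each pixel by the median of its window."""
    return [
        [median(_neighborhood(image, r, c, radius)) for c in range(len(image[0]))]
        for r in range(len(image))
    ]


if __name__ == "__main__":
    # A flat 5x5 patch with one isolated noisy pixel in the middle.
    noisy = [[10] * 5 for _ in range(5)]
    noisy[2][2] = 255
    print(median_filter(noisy)[2][2])  # the outlier is discarded entirely
    print(mean_filter(noisy)[2][2])    # the outlier is only spread out
```

The median filter removes an isolated outlier completely, while averaging smears it over the neighborhood, which is one reason median filtering is often preferred for impulse ("salt-and-pepper") noise.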

| Line 458: | Line 456: | ||

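Gestures can be used effectively as a means of detecting a particular emotional state of the user, especially in conjunction with speech and face recognition. Depending on the specific action, gestures can be simple reflexive responses, such as raising your shoulders when you do not know the answer to a question, or they can be complex and meaningful, as when communicating in sign language. Without using any object or the surrounding environment, we can wave, clap, or beckon. When using objects, on the other hand, we can point at them, move them, touch them, or handle them. A computer should be able to recognize these gestures, analyze the context, and respond in a meaningful way in order to be used effectively for human–computer interaction. | Gestures can be used effectively as a means of detecting a particular emotional state of the user, especially in conjunction with speech and face recognition. Depending on the specific action, gestures can be simple reflexive responses, such as raising your shoulders when you do not know the answer to a question, or they can be complex and meaningful, as when communicating in sign language. Without using any object or the surrounding environment, we can wave, clap, or beckon. When using objects, on the other hand, we can point at them, move them, touch them, or handle them. A computer should be able to recognize these gestures, analyze the context, and respond in a meaningful way in order to be used effectively for human–computer interaction. | ||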

| − | There are many proposed methods<ref name="JK">J. K. Aggarwal, Q. Cai, Human Motion Analysis: A Review, Computer Vision and Image Understanding, Vol. 73, No. 3, 1999</ref> to detect the body gesture. Some literature differentiates 2 different approaches in gesture recognition: a 3D model based and an appearance-based.<ref name="Vladimir">{{cite journal | first1 = Vladimir I. | last1 = Pavlovic | first2 = Rajeev | last2 = Sharma | first3 = Thomas S. | last3 = Huang | url = http://www.cs.rutgers.edu/~vladimir/pub/pavlovic97pami.pdf | title = Visual Interpretation of Hand Gestures for Human–Computer Interaction: A Review | journal = [[IEEE Transactions on Pattern Analysis and Machine Intelligence]] | volume = 19 | issue = 7 | pages = 677–695 | year = 1997 | doi = 10.1109/34.598226 }}</ref> The foremost method makes use of 3D information of key elements of the body parts in order to obtain several important parameters, like palm position or joint angles. On the other hand, appearance-based systems use images or videos to for direct interpretation. Hand gestures have been a common focus of body gesture detection methods.<ref name="Vladimir"/> | + | There are many proposed methods<ref name="JK">J. K. Aggarwal, Q. Cai, Human Motion Analysis: A Review, Computer Vision and Image Understanding, Vol. 73, No. 3, 1999</ref> to detect the body gesture. Some literature differentiates 2 different approaches in gesture recognition: a 3D model based and an appearance-based.<ref name="Vladimir">{{cite journal | first1 = Vladimir I. | last1 = Pavlovic | first2 = Rajeev | last2 = Sharma | first3 = Thomas S. 
| last3 = Huang | url = http://www.cs.rutgers.edu/~vladimir/pub/pavlovic97pami.pdf | title = Visual Interpretation of Hand Gestures for Human–Computer Interaction: A Review | journal = [[IEEE Transactions on Pattern Analysis and Machine Intelligence]] | volume = 19 | issue = 7 | pages = 677–695 | year = 1997 | doi = 10.1109/34.598226 }}</ref> The foremost method makes use of 3D information of key elements of the body parts in order to obtain several important parameters, like palm position or joint angles. On the other hand, appearance-based systems use images or videos to for direct interpretation. Hand gestures have been a common focus of body gesture detection methods.<ref name="Vladimir" /> |

There are many proposed methods (J. K. Aggarwal, Q. Cai, Human Motion Analysis: A Review, Computer Vision and Image Understanding, Vol. 73, No. 3, 1999) to detect body gestures. Some literature distinguishes two approaches to gesture recognition: 3D-model-based and appearance-based. The former uses 3D information about key elements of the body parts to obtain several important parameters, such as palm position or joint angles. Appearance-based systems, on the other hand, use images or videos for direct interpretation. Hand gestures have been a common focus of body gesture detection methods. | There are many proposed methods (J. K. Aggarwal, Q. Cai, Human Motion Analysis: A Review, Computer Vision and Image Understanding, Vol. 73, No. 3, 1999) to detect body gestures. Some literature distinguishes two approaches to gesture recognition: 3D-model-based and appearance-based. The former uses 3D information about key elements of the body parts to obtain several important parameters, such as palm position or joint angles. Appearance-based systems, on the other hand, use images or videos for direct interpretation. Hand gestures have been a common focus of body gesture detection methods. | ||

| Line 471: | Line 469: | ||

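This can be used to detect a user's affective state by monitoring and analyzing their physiological signs. These signs range from changes in heart rate and skin conductance to minute contractions of the facial muscles and changes in facial blood flow. The field is gaining momentum, and real products that implement these techniques are now appearing. The four main physiological signs usually analyzed are blood volume pulse, galvanic skin response, facial electromyography, and facial color patterns. | This can be used to detect a user's affective state by monitoring and analyzing their physiological signs. These signs range from changes in heart rate and skin conductance to minute contractions of the facial muscles and changes in facial blood flow. The field is gaining momentum, and real products that implement these techniques are now appearing. The four main physiological signs usually analyzed are blood volume pulse, galvanic skin response, facial electromyography, and facial color patterns. | ||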

| − | |||

| − | |||

| − | + | Blood volume pulse | |

| − | |||

| − | |||

| − | |||

| − | |||

| − | |||

| + | =====Overview===== | ||

A subject's blood volume pulse (BVP) can be measured by a process called photoplethysmography, which produces a graph indicating blood flow through the extremities.<ref name="Picard, Rosalind 1998">Picard, Rosalind (1998). Affective Computing. MIT.</ref> Each peak of the wave marks a cardiac cycle in which the heart has pumped blood to the extremities. If the subject experiences fear or is startled, their heart usually 'jumps' and beats quickly for some time, so the heart rate increases. On a photoplethysmograph this can be seen as a decrease in the distance between the trough and the peak of the wave. As the subject calms down, and as the body's inner core expands, allowing more blood to flow back to the extremities, the cycle returns to normal. | A subject's blood volume pulse (BVP) can be measured by a process called photoplethysmography, which produces a graph indicating blood flow through the extremities.<ref name="Picard, Rosalind 1998">Picard, Rosalind (1998). Affective Computing. MIT.</ref> Each peak of the wave marks a cardiac cycle in which the heart has pumped blood to the extremities. If the subject experiences fear or is startled, their heart usually 'jumps' and beats quickly for some time, so the heart rate increases. On a photoplethysmograph this can be seen as a decrease in the distance between the trough and the peak of the wave. As the subject calms down, and as the body's inner core expands, allowing more blood to flow back to the extremities, the cycle returns to normal. | ||
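
The two quantities discussed above, the spacing of the peaks (heart rate) and the trough-to-peak distance (pulse amplitude), can be read off a sampled BVP trace with simple local-extremum detection. The sketch below is only an illustration under stated assumptions: the signal is already clean and uniformly sampled, and the function names and the synthetic sine-wave input are hypothetical. Real photoplethysmograph data would need filtering first.

```python
import math


def find_peaks(signal):
    """Indices of local maxima (a flat two-sample top is counted once)."""
    return [i for i in range(1, len(signal) - 1)
            if signal[i - 1] < signal[i] >= signal[i + 1]]


def find_troughs(signal):
    """Indices of local minima (a flat two-sample bottom is counted once)."""
    return [i for i in range(1, len(signal) - 1)
            if signal[i - 1] > signal[i] <= signal[i + 1]]


def heart_rate_bpm(signal, sample_rate_hz):
    """Mean heart rate from the average time between successive peaks."""
    peaks = find_peaks(signal)
    if len(peaks) < 2:
        return None  # not enough beats to estimate a rate
    intervals = [(b - a) / sample_rate_hz for a, b in zip(peaks, peaks[1:])]
    return 60.0 / (sum(intervals) / len(intervals))


def pulse_amplitudes(signal):
    """Trough-to-peak distance for each trough that is followed by a peak."""
    peaks = find_peaks(signal)
    amplitudes = []
    for trough in find_troughs(signal):
        later_peaks = [p for p in peaks if p > trough]
        if later_peaks:
            amplitudes.append(signal[later_peaks[0]] - signal[trough])
    return amplitudes


if __name__ == "__main__":
    # Synthetic stand-in for a BVP trace: 10 s at 50 Hz, 1.2 beats per second.
    rate_hz = 50
    trace = [math.sin(2 * math.pi * 1.2 * i / rate_hz) for i in range(10 * rate_hz)]
    print(heart_rate_bpm(trace, rate_hz))
    print(pulse_amplitudes(trace)[:3])
```

Tracking `pulse_amplitudes` over time is one way to notice the shrinking trough-to-peak distance described in the paragraph, and `heart_rate_bpm` captures the accompanying rise in heart rate.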

| Line 489: | Line 481: | ||

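A subject's blood volume pulse (BVP) can be measured by a technique called photoplethysmography, which produces a graph showing blood flow through the extremities [38]. Each peak of the wave marks a cardiac cycle in which the heart pumps blood to the extremities. If the subject feels fear or is startled, their heart usually 'jumps' and beats quickly for some time, so the heart rate increases. On a photoplethysmograph this can be seen as a decrease in the distance between the trough and the peak of the wave. As the subject calms down and the body's inner core expands, allowing more blood to flow back to the extremities, the cycle returns to normal. | A subject's blood volume pulse (BVP) can be measured by a technique called photoplethysmography, which produces a graph showing blood flow through the extremities [38]. Each peak of the wave marks a cardiac cycle in which the heart pumps blood to the extremities. If the subject feels fear or is startled, their heart usually 'jumps' and beats quickly for some time, so the heart rate increases. On a photoplethysmograph this can be seen as a decrease in the distance between the trough and the peak of the wave. As the subject calms down and the body's inner core expands, allowing more blood to flow back to the extremities, the cycle returns to normal. | ||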

| − | |||

| − | |||

| − | = = = = Methodology = = = | + |

| + | |||

| + | =====Methodology===== | ||

| + | |||

Infra-red light is shone on the skin by special sensor hardware, and the amount of light reflected is measured. The amount of reflected and transmitted light correlates with the BVP because light is absorbed by hemoglobin, which is abundant in the bloodstream. | Infra-red light is shone on the skin by special sensor hardware, and the amount of light reflected is measured. The amount of reflected and transmitted light correlates with the BVP because light is absorbed by hemoglobin, which is abundant in the bloodstream. | ||

| Line 501: | Line 494: | ||

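Infra-red light is shone on the skin by special sensor hardware, and the amount of light reflected by the skin is measured. The amount of reflected and transmitted light correlates with the BVP because light is absorbed by hemoglobin, which is abundant in the blood. | Infra-red light is shone on the skin by special sensor hardware, and the amount of light reflected by the skin is measured. The amount of reflected and transmitted light correlates with the BVP because light is absorbed by hemoglobin, which is abundant in the blood. | ||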

| − | |||

| − | |||

| − | = = = = Disadvantages = = = = | + | =====Disadvantages===== |

| + | |||

It can be cumbersome to ensure that the sensor shining an infra-red light and monitoring the reflected light is always pointing at the same extremity, especially seeing as subjects often stretch and readjust their position while using a computer. | It can be cumbersome to ensure that the sensor shining an infra-red light and monitoring the reflected light is always pointing at the same extremity, especially seeing as subjects often stretch and readjust their position while using a computer. | ||

| Line 535: | Line 527: | ||

{{Main|Galvanic skin response}} | {{Main|Galvanic skin response}} | ||

| − | Galvanic skin response (GSR) is an outdated term for a more general phenomenon known as [Electrodermal Activity] or EDA. EDA is a general phenomena whereby the skin's electrical properties change. The skin is innervated by the [sympathetic nervous system], so measuring its resistance or conductance provides a way to quantify small changes in the sympathetic branch of the autonomic nervous system. As the sweat glands are activated, even before the skin feels sweaty, the level of the EDA can be captured (usually using conductance) and used to discern small changes in autonomic arousal. The more aroused a subject is, the greater the skin conductance tends to be.<ref name="Picard, Rosalind 1998"/> | + | Galvanic skin response (GSR) is an outdated term for a more general phenomenon known as [Electrodermal Activity] or EDA. EDA is a general phenomena whereby the skin's electrical properties change. The skin is innervated by the [sympathetic nervous system], so measuring its resistance or conductance provides a way to quantify small changes in the sympathetic branch of the autonomic nervous system. As the sweat glands are activated, even before the skin feels sweaty, the level of the EDA can be captured (usually using conductance) and used to discern small changes in autonomic arousal. The more aroused a subject is, the greater the skin conductance tends to be.<ref name="Picard, Rosalind 1998" /> |

Galvanic skin response (GSR) is an outdated term for a more general phenomenon known as electrodermal activity (EDA), in which the skin's electrical properties change. The skin is innervated by the sympathetic nervous system, so measuring its resistance or conductance provides a way to quantify small changes in the sympathetic branch of the autonomic nervous system. As the sweat glands are activated, even before the skin feels sweaty, the level of EDA can be captured (usually as conductance) and used to discern small changes in autonomic arousal. The more aroused a subject is, the greater the skin conductance tends to be. | Galvanic skin response (GSR) is an outdated term for a more general phenomenon known as electrodermal activity (EDA), in which the skin's electrical properties change. The skin is innervated by the sympathetic nervous system, so measuring its resistance or conductance provides a way to quantify small changes in the sympathetic branch of the autonomic nervous system. As the sweat glands are activated, even before the skin feels sweaty, the level of EDA can be captured (usually as conductance) and used to discern small changes in autonomic arousal. The more aroused a subject is, the greater the skin conductance tends to be. | ||

| Line 547: | Line 539: | ||

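Skin conductance is usually measured by placing small silver-silver chloride electrodes somewhere on the skin and applying a small voltage between them. To maximize comfort and reduce irritation, the electrodes can be placed on the wrist, legs, or feet, which leaves the hands completely free for daily activity. | Skin conductance is usually measured by placing small silver-silver chloride electrodes somewhere on the skin and applying a small voltage between them. To maximize comfort and reduce irritation, the electrodes can be placed on the wrist, legs, or feet, which leaves the hands completely free for daily activity. | ||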
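
Given a known applied voltage and the current measured between the two electrodes, conductance follows from Ohm's law as G = I / V. The sketch below shows that arithmetic plus a simple change-from-baseline measure. It is an illustration only: the function names, the 0.5 V example voltage, and the choice of the first samples as the resting baseline are assumptions, not values from the source.

```python
def conductance_microsiemens(applied_voltage_v, measured_current_a):
    """Skin conductance G = I / V, reported in microsiemens."""
    if applied_voltage_v <= 0:
        raise ValueError("applied voltage must be positive")
    return measured_current_a / applied_voltage_v * 1e6


def relative_change(samples_us, baseline_count):
    """Change of each conductance sample relative to the mean of the first
    `baseline_count` samples, which are assumed to reflect a resting state."""
    baseline = sum(samples_us[:baseline_count]) / baseline_count
    return [(sample - baseline) / baseline for sample in samples_us]


if __name__ == "__main__":
    # Hypothetical readings: 0.5 V applied, current in amperes.
    currents = [2.5e-6, 2.5e-6, 2.6e-6, 3.5e-6]
    conductances = [conductance_microsiemens(0.5, i) for i in currents]
    print(conductances)
    print(relative_change(conductances, baseline_count=2))
```

A rising relative change corresponds to the pattern described in the text, where greater arousal tends to produce greater skin conductance.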

| − | + | ||

====Facial color==== | ====Facial color==== | ||

| − | |||

=====Overview===== | =====Overview===== | ||

| − | |||

| − | |||

| − | |||

The surface of the human face is innervated with a large network of blood vessels. Blood flow variations in these vessels yield visible color changes on the face. Whether or not facial emotions activate facial muscles, variations in blood flow, blood pressure, glucose levels, and other changes occur. Also, the facial color signal is independent from that provided by facial muscle movements.<ref name="face">Carlos F. Benitez-Quiroz, Ramprakash Srinivasan, Aleix M. Martinez, [https://www.pnas.org/content/115/14/3581 Facial color is an efficient mechanism to visually transmit emotion], PNAS. April 3, 2018 115 (14) 3581–3586; first published March 19, 2018 https://doi.org/10.1073/pnas.1716084115.</ref> | The surface of the human face is innervated with a large network of blood vessels. Blood flow variations in these vessels yield visible color changes on the face. Whether or not facial emotions activate facial muscles, variations in blood flow, blood pressure, glucose levels, and other changes occur. Also, the facial color signal is independent from that provided by facial muscle movements.<ref name="face">Carlos F. Benitez-Quiroz, Ramprakash Srinivasan, Aleix M. Martinez, [https://www.pnas.org/content/115/14/3581 Facial color is an efficient mechanism to visually transmit emotion], PNAS. April 3, 2018 115 (14) 3581–3586; first published March 19, 2018 https://doi.org/10.1073/pnas.1716084115.</ref> | ||

| Line 567: | Line 555: | ||

=====Methodology===== | =====Methodology===== | ||

| − | |||

| − | |||

| − | |||

| − | Approaches are based on facial color changes. Delaunay triangulation is used to create the triangular local areas. Some of these triangles which define the interior of the mouth and eyes (sclera and iris) are removed. Use the left triangular areas’ pixels to create feature vectors.<ref name="face"/> It shows that converting the pixel color of the standard RGB color space to a color space such as oRGB color space<ref name="orgb">M. Bratkova, S. Boulos, and P. Shirley, [https://ieeexplore.ieee.org/document/4736456 oRGB: a practical opponent color space for computer graphics], IEEE Computer Graphics and Applications, 29(1):42–55, 2009.</ref> or LMS channels perform better when dealing with faces.<ref name="mec">Hadas Shahar, [[Hagit Hel-Or]], [http://openaccess.thecvf.com/content_ICCVW_2019/papers/CVPM/Shahar_Micro_Expression_Classification_using_Facial_Color_and_Deep_Learning_Methods_ICCVW_2019_paper.pdf Micro Expression Classification using Facial Color and Deep Learning Methods], The IEEE International Conference on Computer Vision (ICCV), 2019, pp. 0–0.</ref> So, map the above vector onto the better color space and decompose into red-green and yellow-blue channels. Then use deep learning methods to find equivalent emotions. | + | Approaches are based on facial color changes. Delaunay triangulation is used to create the triangular local areas. Some of these triangles which define the interior of the mouth and eyes (sclera and iris) are removed. Use the left triangular areas’ pixels to create feature vectors.<ref name="face" /> It shows that converting the pixel color of the standard RGB color space to a color space such as oRGB color space<ref name="orgb">M. Bratkova, S. Boulos, and P. 
Shirley, [https://ieeexplore.ieee.org/document/4736456 oRGB: a practical opponent color space for computer graphics], IEEE Computer Graphics and Applications, 29(1):42–55, 2009.</ref> or LMS channels perform better when dealing with faces.<ref name="mec">Hadas Shahar, [[Hagit Hel-Or]], [http://openaccess.thecvf.com/content_ICCVW_2019/papers/CVPM/Shahar_Micro_Expression_Classification_using_Facial_Color_and_Deep_Learning_Methods_ICCVW_2019_paper.pdf Micro Expression Classification using Facial Color and Deep Learning Methods], The IEEE International Conference on Computer Vision (ICCV), 2019, pp. 0–0.</ref> So, map the above vector onto the better color space and decompose into red-green and yellow-blue channels. Then use deep learning methods to find equivalent emotions. |

Approaches are based on facial color changes. Delaunay triangulation is used to create triangular local areas. The triangles that define the interior of the mouth and eyes (sclera and iris) are removed. The pixels of the remaining triangular areas are used to create feature vectors. It has been shown that converting pixel colors from the standard RGB color space to a color space such as oRGB (M. Bratkova, S. Boulos, and P. Shirley, oRGB: a practical opponent color space for computer graphics, IEEE Computer Graphics and Applications, 29(1):42–55, 2009) or to LMS channels performs better when dealing with faces (Hadas Shahar, Hagit Hel-Or, Micro Expression Classification using Facial Color and Deep Learning Methods, The IEEE International Conference on Computer Vision (ICCV), 2019, pp. 0–0). The feature vector is therefore mapped onto the better-performing color space and decomposed into red-green and yellow-blue channels. Deep learning methods are then used to classify the corresponding emotions. | Approaches are based on facial color changes. Delaunay triangulation is used to create triangular local areas. The triangles that define the interior of the mouth and eyes (sclera and iris) are removed. The pixels of the remaining triangular areas are used to create feature vectors. It has been shown that converting pixel colors from the standard RGB color space to a color space such as oRGB (M. Bratkova, S. Boulos, and P. Shirley, oRGB: a practical opponent color space for computer graphics, IEEE Computer Graphics and Applications, 29(1):42–55, 2009) or to LMS channels performs better when dealing with faces (Hadas Shahar, Hagit Hel-Or, Micro Expression Classification using Facial Color and Deep Learning Methods, The IEEE International Conference on Computer Vision (ICCV), 2019, pp. 0–0). The feature vector is therefore mapped onto the better-performing color space and decomposed into red-green and yellow-blue channels. Deep learning methods are then used to classify the corresponding emotions. | ||
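
The channel decomposition step can be illustrated with a deliberately simplified opponent-color transform. This sketch is not the oRGB transform from the cited paper: it uses a generic red-green and yellow-blue difference, assumes RGB values in the range 0 to 1, and assumes the triangular regions have already been extracted as lists of pixels (the triangulation and the deep learning classifier are out of scope). All function names are hypothetical.

```python
def to_opponent(rgb):
    """Map an (r, g, b) pixel to (luma, red_green, yellow_blue).

    Simplified opponent channels, used here only for illustration:
    red_green is positive for reddish pixels, yellow_blue for yellowish ones.
    """
    r, g, b = rgb
    luma = 0.299 * r + 0.587 * g + 0.114 * b
    red_green = r - g
    yellow_blue = 0.5 * (r + g) - b
    return luma, red_green, yellow_blue


def region_features(pixels):
    """Mean red-green and yellow-blue value over one region's pixels."""
    converted = [to_opponent(p) for p in pixels]
    count = len(converted)
    mean_rg = sum(c[1] for c in converted) / count
    mean_yb = sum(c[2] for c in converted) / count
    return [mean_rg, mean_yb]


def feature_vector(regions):
    """Concatenate the per-region chromatic means into one flat vector."""
    vector = []
    for pixels in regions:
        vector.extend(region_features(pixels))
    return vector


if __name__ == "__main__":
    # Two hypothetical facial regions: one reddish, one bluish.
    regions = [
        [(1.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
        [(0.0, 0.0, 1.0)],
    ]
    print(feature_vector(regions))
```

A vector like this, computed per video frame, is the kind of input a downstream classifier could consume; which color space and which classifier work best is an empirical question addressed by the cited studies, not by this sketch.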

| Line 585: | Line 570: | ||

==Potential applications== | ==Potential applications== | ||

| − | === Education === | + | ===Education=== |

Affect influences learners' learning state. Using affective computing technology, computers can judge learners' affect and learning state by recognizing their facial expressions. In education, the teacher can use the analysis results to understand a student's ability to learn and absorb material, and then formulate reasonable teaching plans. At the same time, teachers can pay attention to students' inner feelings, which benefits students' psychological health. Especially in distance education, the separation of time and space removes the emotional incentive for two-way communication between teachers and students. Without the atmosphere of traditional classroom learning, students are easily bored, which harms learning outcomes. Applying affective computing in distance education systems can effectively improve this situation. | Affect influences learners' learning state. Using affective computing technology, computers can judge learners' affect and learning state by recognizing their facial expressions. In education, the teacher can use the analysis results to understand a student's ability to learn and absorb material, and then formulate reasonable teaching plans. At the same time, teachers can pay attention to students' inner feelings, which benefits students' psychological health. Especially in distance education, the separation of time and space removes the emotional incentive for two-way communication between teachers and students. Without the atmosphere of traditional classroom learning, students are easily bored, which harms learning outcomes. Applying affective computing in distance education systems can effectively improve this situation. | ||

<ref>http://www.learntechlib.org/p/173785/</ref> | <ref>http://www.learntechlib.org/p/173785/</ref> | ||

| Line 607: | Line 592: | ||

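Affective computing is also being applied to the development of communication technologies for use by people with autism [46]. The affective component of text is also attracting increasing attention, particularly its role in the so-called emotional or emotive Internet [47]. | Affective computing is also being applied to the development of communication technologies for use by people with autism [46]. The affective component of text is also attracting increasing attention, particularly its role in the so-called emotional or emotive Internet [47]. | ||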

| − | |||

| − | === Video games === | + | ===Video games=== |

| − | |||

Affective video games can access their players' emotional states through [[biofeedback]] devices.<ref>{{cite conference |title=Affective Videogames and Modes of Affective Gaming: Assist Me, Challenge Me, Emote Me |first1=Kiel Mark |last1=Gilleade |first2=Alan |last2=Dix |first3=Jen |last3=Allanson |year=2005 |conference=Proc. [[Digital Games Research Association|DiGRA]] Conf. |url=http://comp.eprints.lancs.ac.uk/1057/1/Gilleade_Affective_Gaming_DIGRA_2005.pdf |access-date=2016-12-10 |archive-url=https://web.archive.org/web/20150406200454/http://comp.eprints.lancs.ac.uk/1057/1/Gilleade_Affective_Gaming_DIGRA_2005.pdf |archive-date=2015-04-06 |url-status=dead }}</ref> A particularly simple form of biofeedback is available through [[gamepad]]s that measure the pressure with which a button is pressed: this has been shown to correlate strongly with the players' level of [[arousal]];<ref>{{Cite conference| doi = 10.1145/765891.765957| title = Affective gaming: Measuring emotion through the gamepad| conference = CHI '03 Extended Abstracts on Human Factors in Computing Systems| year = 2003| last1 = Sykes | first1 = Jonathan| last2 = Brown | first2 = Simon| isbn = 1581136374| citeseerx = 10.1.1.92.2123}}</ref> at the other end of the scale are [[brain–computer interface]]s.<ref>{{Cite journal | doi = 10.1016/j.entcom.2009.09.007| title = Turning shortcomings into challenges: Brain–computer interfaces for games| journal = Entertainment Computing| volume = 1| issue = 2| pages = 85–94| year = 2009| last1 = Nijholt | first1 = Anton| last2 = Plass-Oude Bos | first2 = Danny| last3 = Reuderink | first3 = Boris| bibcode = 2009itie.conf..153N| url = http://wwwhome.cs.utwente.nl/~anijholt/artikelen/intetain_bci_2009.pdf}}</ref><ref>{{Cite conference| doi = 10.1007/978-3-642-02315-6_23| title = Affective Pacman: A Frustrating Game for Brain–Computer Interface Experiments| conference = Intelligent Technologies for Interactive Entertainment (INTETAIN)| pages = 221–227| year = 2009| 
last1 = Reuderink | first1 = Boris| last2 = Nijholt | first2 = Anton| last3 = Poel | first3 = Mannes| isbn = 978-3-642-02314-9}}</ref> Affective games have been used in medical research to support the emotional development of [[autism|autistic]] children.<ref>{{Cite journal | Affective video games can access their players' emotional states through [[biofeedback]] devices.<ref>{{cite conference |title=Affective Videogames and Modes of Affective Gaming: Assist Me, Challenge Me, Emote Me |first1=Kiel Mark |last1=Gilleade |first2=Alan |last2=Dix |first3=Jen |last3=Allanson |year=2005 |conference=Proc. [[Digital Games Research Association|DiGRA]] Conf. |url=http://comp.eprints.lancs.ac.uk/1057/1/Gilleade_Affective_Gaming_DIGRA_2005.pdf |access-date=2016-12-10 |archive-url=https://web.archive.org/web/20150406200454/http://comp.eprints.lancs.ac.uk/1057/1/Gilleade_Affective_Gaming_DIGRA_2005.pdf |archive-date=2015-04-06 |url-status=dead }}</ref> A particularly simple form of biofeedback is available through [[gamepad]]s that measure the pressure with which a button is pressed: this has been shown to correlate strongly with the players' level of [[arousal]];<ref>{{Cite conference| doi = 10.1145/765891.765957| title = Affective gaming: Measuring emotion through the gamepad| conference = CHI '03 Extended Abstracts on Human Factors in Computing Systems| year = 2003| last1 = Sykes | first1 = Jonathan| last2 = Brown | first2 = Simon| isbn = 1581136374| citeseerx = 10.1.1.92.2123}}</ref> at the other end of the scale are [[brain–computer interface]]s.<ref>{{Cite journal | doi = 10.1016/j.entcom.2009.09.007| title = Turning shortcomings into challenges: Brain–computer interfaces for games| journal = Entertainment Computing| volume = 1| issue = 2| pages = 85–94| year = 2009| last1 = Nijholt | first1 = Anton| last2 = Plass-Oude Bos | first2 = Danny| last3 = Reuderink | first3 = Boris| bibcode = 2009itie.conf..153N| url = 
http://wwwhome.cs.utwente.nl/~anijholt/artikelen/intetain_bci_2009.pdf}}</ref><ref>{{Cite conference| doi = 10.1007/978-3-642-02315-6_23| title = Affective Pacman: A Frustrating Game for Brain–Computer Interface Experiments| conference = Intelligent Technologies for Interactive Entertainment (INTETAIN)| pages = 221–227| year = 2009| last1 = Reuderink | first1 = Boris| last2 = Nijholt | first2 = Anton| last3 = Poel | first3 = Mannes| isbn = 978-3-642-02314-9}}</ref> Affective games have been used in medical research to support the emotional development of [[autism|autistic]] children.<ref>{{Cite journal | ||

| Line 628: | Line 611: | ||

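Affective video games can access their players' emotional states through biofeedback devices [48]. A particularly simple form of biofeedback is available through gamepads that measure the pressure with which a button is pressed: this has been shown to correlate strongly with the players' level of arousal [49]; at the other end of the scale are brain–computer interfaces [50][51]. Affective games have been used in medical research to support the emotional development of autistic children [52]. | Affective video games can access their players' emotional states through biofeedback devices [48]. A particularly simple form of biofeedback is available through gamepads that measure the pressure with which a button is pressed: this has been shown to correlate strongly with the players' level of arousal [49]; at the other end of the scale are brain–computer interfaces [50][51]. Affective games have been used in medical research to support the emotional development of autistic children [52]. | ||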

| − | |||

| − | === Other applications === | + | ===Other applications=== |

| − | |||

Other potential applications are centered around social monitoring. For example, a car can monitor the emotion of all occupants and engage in additional safety measures, such as alerting other vehicles if it detects the driver to be angry.<ref>{{cite web|url=https://gizmodo.com/in-car-facial-recognition-detects-angry-drivers-to-prev-1543709793|title=In-Car Facial Recognition Detects Angry Drivers To Prevent Road Rage|date=30 August 2018|website=Gizmodo}}</ref> Affective computing has potential applications in [[human computer interaction|human–computer interaction]], such as affective mirrors allowing the user to see how he or she performs; emotion monitoring agents sending a warning before one sends an angry email; or even music players selecting tracks based on mood.<ref>{{cite journal|last1=Janssen|first1=Joris H.|last2=van den Broek|first2=Egon L.|date=July 2012|title=Tune in to Your Emotions: A Robust Personalized Affective Music Player|journal=User Modeling and User-Adapted Interaction|volume=22|issue=3|pages=255–279|doi=10.1007/s11257-011-9107-7|doi-access=free}}</ref> | Other potential applications are centered around social monitoring. 
For example, a car can monitor the emotion of all occupants and engage in additional safety measures, such as alerting other vehicles if it detects the driver to be angry.<ref>{{cite web|url=https://gizmodo.com/in-car-facial-recognition-detects-angry-drivers-to-prev-1543709793|title=In-Car Facial Recognition Detects Angry Drivers To Prevent Road Rage|date=30 August 2018|website=Gizmodo}}</ref> Affective computing has potential applications in [[human computer interaction|human–computer interaction]], such as affective mirrors allowing the user to see how he or she performs; emotion monitoring agents sending a warning before one sends an angry email; or even music players selecting tracks based on mood.<ref>{{cite journal|last1=Janssen|first1=Joris H.|last2=van den Broek|first2=Egon L.|date=July 2012|title=Tune in to Your Emotions: A Robust Personalized Affective Music Player|journal=User Modeling and User-Adapted Interaction|volume=22|issue=3|pages=255–279|doi=10.1007/s11257-011-9107-7|doi-access=free}}</ref> | ||

| Line 652: | Line 633: | ||

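Affective state recognition can also be used to judge the impact of a TV advertisement, through a real-time video recording of a viewer and subsequent study of his or her facial expressions. By averaging the results obtained from a large group of subjects, one can tell whether the commercial (or movie) has the desired effect and which elements interest viewers most. | Affective state recognition can also be used to judge the impact of a TV advertisement, through a real-time video recording of a viewer and subsequent study of his or her facial expressions. By averaging the results obtained from a large group of subjects, one can tell whether the commercial (or movie) has the desired effect and which elements interest viewers most. | ||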

| − | |||

==Cognitivist vs. interactional approaches== | ==Cognitivist vs. interactional approaches== | ||

| − | |||

| − | |||

| − | |||

Within the field of [[human–computer interaction]], Rosalind Picard's [[cognitivism (psychology)|cognitivist]] or "information model" concept of emotion has been criticized by and contrasted with the "post-cognitivist" or "interactional" [[pragmatism|pragmatist]] approach taken by Kirsten Boehner and others which views emotion as inherently social.<ref>{{cite journal|last1=Battarbee|first1=Katja|last2=Koskinen|first2=Ilpo|title=Co-experience: user experience as interaction|journal=CoDesign|date=2005|volume=1|issue=1|pages=5–18|url=http://www2.uiah.fi/~ikoskine/recentpapers/mobile_multimedia/coexperience_reprint_lr_5-18.pdf|doi=10.1080/15710880412331289917|citeseerx=10.1.1.294.9178|s2cid=15296236}}</ref> | Within the field of [[human–computer interaction]], Rosalind Picard's [[cognitivism (psychology)|cognitivist]] or "information model" concept of emotion has been criticized by and contrasted with the "post-cognitivist" or "interactional" [[pragmatism|pragmatist]] approach taken by Kirsten Boehner and others which views emotion as inherently social.<ref>{{cite journal|last1=Battarbee|first1=Katja|last2=Koskinen|first2=Ilpo|title=Co-experience: user experience as interaction|journal=CoDesign|date=2005|volume=1|issue=1|pages=5–18|url=http://www2.uiah.fi/~ikoskine/recentpapers/mobile_multimedia/coexperience_reprint_lr_5-18.pdf|doi=10.1080/15710880412331289917|citeseerx=10.1.1.294.9178|s2cid=15296236}}</ref> | ||

| Line 664: | Line 641: | ||

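Within the field of human–computer interaction, Rosalind Picard's cognitivist or "information model" concept of emotion has been criticized by and contrasted with the "post-cognitivist" or "interactional" pragmatist approach taken by Kirsten Boehner and others [56]. | Within the field of human–computer interaction, Rosalind Picard's cognitivist or "information model" concept of emotion has been criticized by and contrasted with the "post-cognitivist" or "interactional" pragmatist approach taken by Kirsten Boehner and others [56]. | ||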

| − | Picard's focus is human–computer interaction, and her goal for affective computing is to "give computers the ability to recognize, express, and in some cases, 'have' emotions".<ref name="Affective Computing"/> In contrast, the interactional approach seeks to help "people to understand and experience their own emotions"<ref name="How emotion is made and measured"/> and to improve computer-mediated interpersonal communication. It does not necessarily seek to map emotion into an objective mathematical model for machine interpretation, but rather let humans make sense of each other's emotional expressions in open-ended ways that might be ambiguous, subjective, and sensitive to context.<ref name="How emotion is made and measured"/>{{rp|284}}{{example needed|date=September 2018}} | + | Picard's focus is human–computer interaction, and her goal for affective computing is to "give computers the ability to recognize, express, and in some cases, 'have' emotions".<ref name="Affective Computing" /> In contrast, the interactional approach seeks to help "people to understand and experience their own emotions"<ref name="How emotion is made and measured">{{cite journal|last1=Boehner|first1=Kirsten|last2=DePaula|first2=Rogerio|last3=Dourish|first3=Paul|last4=Sengers|first4=Phoebe|title=How emotion is made and measured|journal=International Journal of Human–Computer Studies|date=2007|volume=65|issue=4|pages=275–291|doi=10.1016/j.ijhcs.2006.11.016}}</ref> and to improve computer-mediated interpersonal communication. It does not necessarily seek to map emotion into an objective mathematical model for machine interpretation, but rather let humans make sense of each other's emotional expressions in open-ended ways that might be ambiguous, subjective, and sensitive to context.<ref name="How emotion is made and measured" />{{rp|284}}{{example needed|date=September 2018}} |

Picard's focus is human–computer interaction, and her goal for affective computing is to "give computers the ability to recognize, express, and in some cases, 'have' emotions". In contrast, the interactional approach seeks to help "people to understand and experience their own emotions" and to improve computer-mediated interpersonal communication. It does not necessarily seek to map emotion into an objective mathematical model for machine interpretation, but rather let humans make sense of each other's emotional expressions in open-ended ways that might be ambiguous, subjective, and sensitive to context. | Picard's focus is human–computer interaction, and her goal for affective computing is to "give computers the ability to recognize, express, and in some cases, 'have' emotions". In contrast, the interactional approach seeks to help "people to understand and experience their own emotions" and to improve computer-mediated interpersonal communication. It does not necessarily seek to map emotion into an objective mathematical model for machine interpretation, but rather let humans make sense of each other's emotional expressions in open-ended ways that might be ambiguous, subjective, and sensitive to context. | ||

| 第670行: | 第647行: | ||

皮卡德的研究重点是人机交互,她研究情感计算的目标是“赋予计算机识别、表达、在某些情况下‘拥有’情感的能力”【4】。相比之下,交互式的方法旨在帮助“人们理解和体验他们自己的情绪”【57】,并改善以电脑为媒介的人际沟通。它不一定寻求将情感映射到机器解释的客观数学模型中,而是让人类以可能含糊不清、主观且对上下文敏感的开放式方式理解彼此的情感表达【57】。 | 皮卡德的研究重点是人机交互,她研究情感计算的目标是“赋予计算机识别、表达、在某些情况下‘拥有’情感的能力”【4】。相比之下,交互式的方法旨在帮助“人们理解和体验他们自己的情绪”【57】,并改善以电脑为媒介的人际沟通。它不一定寻求将情感映射到机器解释的客观数学模型中,而是让人类以可能含糊不清、主观且对上下文敏感的开放式方式理解彼此的情感表达【57】。 | ||

| − | Picard's critics describe her concept of emotion as "objective, internal, private, and mechanistic". They say it reduces emotion to a discrete psychological signal occurring inside the body that can be measured and which is an input to cognition, undercutting the complexity of emotional experience.<ref name="How emotion is made and measured"/>{{rp|280}}<ref name="How emotion is made and measured"/>{{rp|278}} | + | Picard's critics describe her concept of emotion as "objective, internal, private, and mechanistic". They say it reduces emotion to a discrete psychological signal occurring inside the body that can be measured and which is an input to cognition, undercutting the complexity of emotional experience.<ref name="How emotion is made and measured" />{{rp|280}}<ref name="How emotion is made and measured" />{{rp|278}} |

Picard's critics describe her concept of emotion as "objective, internal, private, and mechanistic". They say it reduces emotion to a discrete psychological signal occurring inside the body that can be measured and which is an input to cognition, undercutting the complexity of emotional experience. | Picard's critics describe her concept of emotion as "objective, internal, private, and mechanistic". They say it reduces emotion to a discrete psychological signal occurring inside the body that can be measured and which is an input to cognition, undercutting the complexity of emotional experience. | ||

| 第676行: | 第653行: | ||

皮卡德的批评者将她的情感概念描述为“客观的、内在的、私人的和机械的”。他们认为它把情绪简化为发生在身体内部的一个离散的心理信号,这个信号可以被测量,并且是认知的输入,削弱了情绪体验的复杂性。 | 皮卡德的批评者将她的情感概念描述为“客观的、内在的、私人的和机械的”。他们认为它把情绪简化为发生在身体内部的一个离散的心理信号,这个信号可以被测量,并且是认知的输入,削弱了情绪体验的复杂性。 | ||

| − | The interactional approach asserts that though emotion has biophysical aspects, it is "culturally grounded, dynamically experienced, and to some degree constructed in action and interaction".<ref name="How emotion is made and measured"/>{{rp|276}} Put another way, it considers "emotion as a social and cultural product experienced through our interactions".<ref>{{cite journal|last1=Boehner|first1=Kirsten|last2=DePaula|first2=Rogerio|last3=Dourish|first3=Paul|last4=Sengers|first4=Phoebe|title=Affection: From Information to Interaction|journal=Proceedings of the Aarhus Decennial Conference on Critical Computing|date=2005|pages=59–68}}</ref><ref name="How emotion is made and measured" | + | The interactional approach asserts that though emotion has biophysical aspects, it is "culturally grounded, dynamically experienced, and to some degree constructed in action and interaction".<ref name="How emotion is made and measured" />{{rp|276}} Put another way, it considers "emotion as a social and cultural product experienced through our interactions".<ref>{{cite journal|last1=Boehner|first1=Kirsten|last2=DePaula|first2=Rogerio|last3=Dourish|first3=Paul|last4=Sengers|first4=Phoebe|title=Affection: From Information to Interaction|journal=Proceedings of the Aarhus Decennial Conference on Critical Computing|date=2005|pages=59–68}}</ref><ref name="How emotion is made and measured" /><ref>{{cite journal|last1=Hook|first1=Kristina|last2=Staahl|first2=Anna|last3=Sundstrom|first3=Petra|last4=Laaksolahti|first4=Jarmo|title=Interactional empowerment|journal=Proc. CHI|date=2008|pages=647–656|url=http://research.microsoft.com/en-us/um/cambridge/projects/hci2020/pdf/interactional%20empowerment%20final%20Jan%2008.pdf}}</ref> |

The interactional approach asserts that though emotion has biophysical aspects, it is "culturally grounded, dynamically experienced, and to some degree constructed in action and interaction". Put another way, it considers "emotion as a social and cultural product experienced through our interactions". | The interactional approach asserts that though emotion has biophysical aspects, it is "culturally grounded, dynamically experienced, and to some degree constructed in action and interaction". Put another way, it considers "emotion as a social and cultural product experienced through our interactions". | ||

互动方法断言,虽然情绪具有生物物理方面,但它是“以文化为基础的,动态体验的,并在某种程度上构建于行动和互动中”【57】。换句话说,它认为“情感是一种通过我们的互动体验到的社会和文化产品”【57】【58】【59】。 | 互动方法断言,虽然情绪具有生物物理方面,但它是“以文化为基础的,动态体验的,并在某种程度上构建于行动和互动中”【57】。换句话说,它认为“情感是一种通过我们的互动体验到的社会和文化产品”【57】【58】【59】。 | ||

| + | |||

| + | |||

==See also== | ==See also== | ||

| 第696行: | 第675行: | ||

* [[Wearable computer]]}} | * [[Wearable computer]]}} | ||

| − | |||

| − | |||

| − | |||

| − | |||

| − | |||

| − | + | ==General sources== | |

| − | |||

| + | * {{cite journal | last = Hudlicka | first = Eva | title = To feel or not to feel: The role of affect in human–computer interaction | journal = International Journal of Human–Computer Studies | volume = 59 | issue = 1–2 | year = 2003 | pages = 1–32 | citeseerx = 10.1.1.180.6429 | doi=10.1016/s1071-5819(03)00047-8}} | ||

| + | *{{cite book | last1 = Scherer |first1=Klaus R |last2=Bänziger |first2= Tanja |last3=Roesch |first3=Etienne B | title = A Blueprint for Affective Computing: A Sourcebook and Manual | location = Oxford | publisher = Oxford University Press | year = 2010 }} | ||

| − | |||

| − | |||

| − | |||

==External links== | ==External links== | ||

* [http://affect.media.mit.edu/ Affective Computing Research Group at the MIT Media Laboratory] | * [http://affect.media.mit.edu/ Affective Computing Research Group at the MIT Media Laboratory] | ||

| − | * [http://emotions.usc.edu/ Computational Emotion Group at USC] | + | *[http://emotions.usc.edu/ Computational Emotion Group at USC] |

| − | * [http://emoshape.com/ Emotion Processing Unit – EPU] | + | *[http://emoshape.com/ Emotion Processing Unit – EPU] |

| − | * [http://sites.google.com/site/memphisemotivecomputing/ Emotive Computing Group at the University of Memphis] | + | *[http://sites.google.com/site/memphisemotivecomputing/ Emotive Computing Group at the University of Memphis] |

| − | * [https://web.archive.org/web/20180411230402/http://www.acii2011.org/ 2011 International Conference on Affective Computing and Intelligent Interaction] | + | *[https://web.archive.org/web/20180411230402/http://www.acii2011.org/ 2011 International Conference on Affective Computing and Intelligent Interaction] |

| − | * [https://web.archive.org/web/20091024081211/http://www.eecs.tufts.edu/~agirou01/workshop/ Brain, Body and Bytes: Psychophysiological User Interaction] ''CHI 2010 Workshop'' (10–15, April 2010) | + | *[https://web.archive.org/web/20091024081211/http://www.eecs.tufts.edu/~agirou01/workshop/ Brain, Body and Bytes: Psychophysiological User Interaction] ''CHI 2010 Workshop'' (10–15, April 2010) |

| − | * [https://web.archive.org/web/20110201001124/http://www.computer.org/portal/web/tac IEEE Transactions on Affective Computing] ''(TAC)'' | + | *[https://web.archive.org/web/20110201001124/http://www.computer.org/portal/web/tac IEEE Transactions on Affective Computing] ''(TAC)'' |

| − | * [http://opensmile.sourceforge.net/ openSMILE: popular state-of-the-art open-source toolkit for large-scale feature extraction for affect recognition and computational paralinguistics] | + | *[http://opensmile.sourceforge.net/ openSMILE: popular state-of-the-art open-source toolkit for large-scale feature extraction for affect recognition and computational paralinguistics] |

* Affective Computing Research Group at the MIT Media Laboratory | * Affective Computing Research Group at the MIT Media Laboratory | ||

| 第731行: | 第703行: | ||

| − | * MIT 媒体实验室情感计算研究小组 | + | * MIT 媒体实验室情感计算研究小组 |

| − | * USC 计算情感小组 | + | * USC 计算情感小组 |

| − | * 情感处理单元-EPU | + | * 情感处理单元-EPU |

| − | * 曼菲斯大学情感计算小组 | + | * 曼菲斯大学情感计算小组 |

| − | * 2011年国际情感计算和智能交互会议 | + | * 2011年国际情感计算和智能交互会议 |

| − | * 大脑,身体和字节: 精神生理学用户交互 CHI 2010研讨会(10-15,2010年4月) | + | * 大脑,身体和字节: 精神生理学用户交互 CHI 2010研讨会(10-15,2010年4月) |

| − | * IEEE 情感计算会刊(TAC) | + | * IEEE 情感计算会刊(TAC) |

* openSMILE: 流行的最先进的开源工具包,用于大规模的情感识别和计算语言学特征提取 | * openSMILE: 流行的最先进的开源工具包,用于大规模的情感识别和计算语言学特征提取 | ||

| 第749行: | 第721行: | ||

{{DEFAULTSORT:Affective Computing}} | {{DEFAULTSORT:Affective Computing}} | ||

| − | [[ | + | [[index.php?title=分类:Affective computing| ]] |

<noinclude> | <noinclude> | ||

| − | <small>This page was moved from [[wikipedia:en:Affective computing]]. Its edit history can be viewed at [[情感计算/edithistory]]</small> | + | <small>This page was moved from [[wikipedia:en:Affective computing]]. Its edit history can be viewed at [[情感计算/edithistory]]</small> |

| + | |||

| + | |||

| − | |} | + | |

| + | ==Citations== | ||

| + | {{Reflist|2}} | ||

| + | |||

| + | |||

| + | |||

| + | |||

| + | |||

| + | {{DEFAULTSORT:Affective Computing}} | ||

| + | [[index.php?title=分类:Affective computing| ]] | ||

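Revision as of 18:17, 28 July 2021 (Wednesday)

This entry was machine-translated by Caiyun Xiaoyi (5,068 characters translated) and has not yet been manually edited or proofread; we apologize for any inconvenience.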

Affective computing is the study and development of systems and devices that can recognize, interpret, process, and simulate human affects. It is an interdisciplinary field spanning computer science, psychology, and cognitive science.[1] While some core ideas in the field may be traced as far back as early philosophical inquiries into emotion,[2] the more modern branch of computer science originated with Rosalind Picard's 1995 paper[3] on affective computing and her book Affective Computing[4] published by MIT Press.[5][6] One of the motivations for the research is the ability to give machines emotional intelligence, including the ability to simulate empathy. The machine should interpret the emotional state of humans and adapt its behavior to them, giving an appropriate response to those emotions.


情感计算 Affective computing (也被称为人工情感智能或情感AI)是基于系统和设备的研究和开发来识别、理解、处理和模拟人的情感。这是一个融合计算机科学、心理学和认知科学的跨学科领域[1]。虽然该领域的一些核心思想可以追溯到早期对情感[2]的哲学研究,但计算机科学的更现代分支起源于罗莎琳德·皮卡德1995年关于情感计算的论文【3】和她的由麻省理工出版社【5】【6】出版的《情感计算》【4】。这项研究的动机之一是赋予机器情商的能力,包括模拟移情。机器应该解读人类的情绪状态,并使其行为适应人类的情绪,对这些情绪作出适当的反应。

Areas


Detecting and recognizing emotional information

Detecting emotional information usually begins with passive sensors that capture data about the user's physical state or behavior without interpreting the input. The data gathered is analogous to the cues humans use to perceive emotions in others. For example, a video camera might capture facial expressions, body posture, and gestures, while a microphone might capture speech. Other sensors detect emotional cues by directly measuring physiological data, such as skin temperature and galvanic resistance.[7]


检测情感信息通常从被动传感器开始,这些传感器捕捉关于用户身体状态或行为的数据,而不解释输入信息。收集的数据类似于人类用来感知他人情感的线索。例如,摄像机可以捕捉面部表情、身体姿势和手势,而麦克风可以捕捉语音。其他传感器通过直接测量生理数据(如皮肤温度和电流电阻)来探测情感信号【7】。

Recognizing emotional information requires the extraction of meaningful patterns from the gathered data. This is done using machine learning techniques that process different modalities, such as speech recognition, natural language processing, or facial expression detection. The goal of most of these techniques is to produce labels that would match the labels a human perceiver would give in the same situation: For example, if a person makes a facial expression furrowing their brow, then the computer vision system might be taught to label their face as appearing "confused" or as "concentrating" or "slightly negative" (as opposed to positive, which it might say if they were smiling in a happy-appearing way). These labels may or may not correspond to what the person is actually feeling.


识别情感信息需要从收集到的数据中提取出有意义的模式。这是通过处理不同模式的机器学习技术完成的,如语音识别、自然语言处理或面部表情检测。大多数这些技术的目标是产生与人类感知者在相同情况下给出的标签相匹配的标签: 例如,如果一个人做出皱眉的面部表情,那么计算机视觉系统可能会被教导将他们的脸标记为看起来“困惑”、“专注”或“轻微消极”(与积极相反,它可能会说,如果他们正在以一种快乐的方式微笑)。这些标签可能与人们的真实感受相符,也可能不相符。

Emotion in machines

Another area within affective computing is the design of computational devices that are proposed either to exhibit innate emotional capabilities or to simulate emotions convincingly. A more practical approach, based on current technological capabilities, is the simulation of emotions in conversational agents in order to enrich and facilitate interactivity between human and machine.[8]


情感计算的另一个领域是计算设备的设计,旨在展示先天的情感能力或能够令人信服地模拟情感。基于当前的技术能力,一个更加实用的方法是模拟会话代理中的情绪,以丰富和促进人与机器之间的互动【8】。

Marvin Minsky, one of the pioneering computer scientists in artificial intelligence, relates emotions to the broader issues of machine intelligence, stating in The Emotion Machine that emotion is "not especially different from the processes that we call 'thinking.'"[9]


人工智能领域的计算机科学先驱之一马文•明斯基(Marvin Minsky)在《情绪机器》(The Emotion Machine)一书中将情绪与更广泛的机器智能问题联系起来。他在书中表示,情绪“与我们所谓的‘思考’过程并没有特别的不同。'"【9】

Technologies

In psychology, cognitive science, and neuroscience, there have been two main approaches for describing how humans perceive and classify emotion: continuous and categorical. The continuous approach tends to use dimensions such as negative vs. positive and calm vs. aroused.


在心理学、认知科学和神经科学中,描述人类如何感知和分类情绪的方法主要有两种: 连续的和分类的。连续的方法倾向于使用诸如消极与积极、平静与激动之类的维度。

The categorical approach tends to use discrete classes such as happy, sad, angry, fearful, surprised, and disgusted. Different kinds of machine learning regression and classification models can be used to have machines produce continuous or discrete labels. Sometimes models are also built that allow combinations across the categories, e.g. a happy-surprised face or a fearful-surprised face.[10]


分类方法倾向于使用离散的类别,如快乐,悲伤,愤怒,恐惧,惊讶,厌恶。不同类型的机器学习回归和分类模型可以用于让机器产生连续或离散的标签。有时还会构建允许跨类别组合的模型,例如 一张高兴而惊讶的脸或一张害怕而惊讶的脸【10】。

The following sections consider many of the kinds of input data used for the task of emotion recognition.


接下来的部分将讨论用于情感识别任务的各种输入数据。

Emotional speech

Various changes in the autonomic nervous system can indirectly alter a person's speech, and affective technologies can leverage this information to recognize emotion. For example, speech produced in a state of fear, anger, or joy becomes fast, loud, and precisely enunciated, with a higher and wider range in pitch, whereas emotions such as tiredness, boredom, or sadness tend to generate slow, low-pitched, and slurred speech.[11] Some emotions have been found to be more easily computationally identified, such as anger[12] or approval.[13]


自主神经系统的各种变化可以间接地改变一个人的语言,情感技术可以利用这些信息来识别情绪。例如,在恐惧、愤怒或高兴的状态下发言变得快速、响亮、清晰,音调变得越来越高、越来越宽,而诸如疲倦、厌倦或悲伤等情绪往往会产生缓慢、低沉、含糊不清的发言【11】。有些情绪更容易被计算识别,比如愤怒【12】或赞同【13】。

Emotional speech processing technologies recognize the user's emotional state using computational analysis of speech features. Vocal parameters and prosodic features such as pitch variables and speech rate can be analyzed through pattern recognition techniques.[12][14]


情感语音处理技术通过对语音特征的计算分析来识别用户的情感状态。通过模式识别技术【12】【14】可以分析声音参数和韵律特征,如音高变量和语速等。
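As a rough illustration of how one prosodic feature, pitch, might be computed from a speech frame, the sketch below estimates the fundamental frequency from the peak of the autocorrelation function. The function name `estimate_pitch_hz`, the 50–400 Hz search range, and the synthetic test tone are assumptions made for this example; they do not come from the cited studies, and practical systems typically use more robust pitch trackers.

```python
import math

def estimate_pitch_hz(samples, sample_rate, fmin=50.0, fmax=400.0):
    """Estimate the fundamental frequency of one frame via autocorrelation.

    fmin and fmax (hypothetical defaults) bound the search to a plausible
    range for speech.
    """
    min_lag = int(sample_rate / fmax)   # shortest period considered
    max_lag = int(sample_rate / fmin)   # longest period considered
    best_lag, best_score = None, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Unnormalized autocorrelation of the frame at this lag.
        score = sum(samples[i] * samples[i + lag]
                    for i in range(len(samples) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag

# Synthetic 200 Hz tone standing in for a voiced speech frame (0.1 s at 8 kHz).
sample_rate = 8000
tone = [math.sin(2 * math.pi * 200 * n / sample_rate) for n in range(800)]
pitch = estimate_pitch_hz(tone, sample_rate)
print(f"estimated pitch: {pitch:.1f} Hz")
```

Statistics of such per-frame estimates (mean, range, variance) are the kind of "pitch variables" a pattern recognition stage could then consume.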

Speech analysis is an effective method of identifying affective state, having an average reported accuracy of 70 to 80% in recent research.[15][16] These systems tend to outperform average human accuracy (approximately 60%[12]) but are less accurate than systems which employ other modalities for emotion detection, such as physiological states or facial expressions.[17] However, since many speech characteristics are independent of semantics or culture, this technique is considered to be a promising route for further research.[18]


语音分析是一种有效的情感状态识别方法,在最近的研究中,语音分析的平均报告准确率为70%-80%【15】【16】 。这些系统往往比人类的平均准确率(大约60%【12】)更高,但是不如使用其他情绪检测方式的系统准确,比如生理状态或面部表情【17】。然而,由于许多言语特征是独立于语义或文化的,这种技术被认为是一个很有前途的研究路线【18】。

Algorithms


The process of speech/text affect detection requires the creation of a reliable database, knowledge base, or vector space model,[19] broad enough to fit every need for its application, as well as the selection of a successful classifier which will allow for quick and accurate emotion identification.


语音/文本影响检测的过程需要创建一个可靠的数据库、知识库或者向量空间模型【19】,这些数据库的范围足以满足其应用的所有需要,同时还需要选择一个成功的分类器,这样才能快速准确地识别情感。

Currently, the most frequently used classifiers are linear discriminant classifiers (LDC), k-nearest neighbor (k-NN), Gaussian mixture model (GMM), support vector machines (SVM), artificial neural networks (ANN), decision tree algorithms and hidden Markov models (HMMs).[20] Various studies showed that choosing the appropriate classifier can significantly enhance the overall performance of the system.[17] The list below gives a brief description of each algorithm:


目前常用的分类器有线性判别分类器(LDC)、 k- 近邻分类器(k-NN)、高斯混合模型(GMM)、支持向量机(SVM)、人工神经网络(ANN)、决策树算法和隐马尔可夫模型(HMMs)【20】。各种研究表明,选择合适的分类器可以显著提高系统的整体性能。下面的列表给出了每个算法的简要描述:

- LDC – Classification happens based on the value obtained from the linear combination of the feature values, which are usually provided in the form of vector features.

- k-NN – Classification happens by locating the object in the feature space, and comparing it with the k nearest neighbors (training examples). The majority vote decides on the classification.

- GMM – is a probabilistic model used for representing the existence of subpopulations within the overall population. Each sub-population is described using the mixture distribution, which allows for classification of observations into the sub-populations.[21]

- SVM – is a type of (usually binary) linear classifier which decides into which of two (or more) possible classes each input falls.

- ANN – is a mathematical model, inspired by biological neural networks, that can better grasp possible non-linearities of the feature space.

- Decision tree algorithms – work based on following a decision tree in which leaves represent the classification outcome, and branches represent the conjunction of subsequent features that lead to the classification.

- HMMs – a statistical Markov model in which the states and state transitions are not directly available to observation. Instead, the series of outputs dependent on the states are visible. In the case of affect recognition, the outputs represent the sequence of speech feature vectors, which allow the deduction of states' sequences through which the model progressed. The states can consist of various intermediate steps in the expression of an emotion, and each of them has a probability distribution over the possible output vectors. The states' sequences allow us to predict the affective state which we are trying to classify, and this is one of the most commonly used techniques within the area of speech affect detection.
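To make the k-NN entry in the list above concrete, here is a minimal sketch of majority voting among the k nearest training examples. The two features (mean pitch in Hz, speech rate in syllables per second), the sample values, and the labels are invented for illustration only and are not taken from any of the cited corpora.

```python
import math
from collections import Counter

def knn_classify(query, training_examples, k=3):
    """Label `query` by majority vote among its k nearest training examples.

    training_examples is a list of (feature_vector, label) pairs.
    """
    neighbors = sorted(training_examples,
                       key=lambda example: math.dist(query, example[0]))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Hypothetical training data: (mean pitch in Hz, syllables per second).
training = [
    ((220.0, 5.5), "anger"),
    ((230.0, 6.0), "anger"),
    ((210.0, 5.8), "anger"),
    ((120.0, 3.0), "sadness"),
    ((110.0, 2.8), "sadness"),
    ((125.0, 3.2), "sadness"),
]

label = knn_classify((215.0, 5.6), training, k=3)
print(label)
```

In this toy setup the pitch values dominate the Euclidean distance because the features are not scaled; a real pipeline would normally normalize features before computing distances.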


- LDC-根据特征值的线性组合值进行分类,特征值通常以矢量特征的形式提供。

- k-NN-分类是通过在特征空间中定位目标,并与 k 个最近邻(训练样本)进行比较来实现的。多数票决定分类。

- GMM-是一种概率模型,用于表示总体中是否存在亚群。 每个子群都使用混合分布来描述,这允许将观察结果分类到子群中【21】。

- SVM-是一种(通常为二进制)线性分类器,它决定每个输入可能属于两个(或多个)可能类别中的哪一个。

- ANN-是一种受生物神经网络启发的数学模型,能够更好地把握特征空间可能存在的非线性。

- 决策树算法——基于遵循决策树的工作,其中叶子代表分类结果,而分支代表导致分类的后续特征的结合

- HMMs-一个统计马尔可夫模型,其中的状态和状态转变不能直接用于观测。相反,依赖于状态的一系列输出是可见的。在情感识别的情况下,输出表示语音特征向量的序列,这样可以推导出模型所经过的状态序列。这些状态可以由表达情绪的各种中间步骤组成,每个概率分布都有一个可能的输出向量。状态序列允许我们预测我们试图分类的情感状态,这是语音情感检测领域最常用的技术之一。
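The HMM entry describes deducing a hidden state sequence from a visible output sequence. One standard way to do this is the Viterbi algorithm, sketched below for a toy model. The two states, the quantized "low"/"high" pitch symbols, and all probabilities are hypothetical; real speech affect systems work with continuous feature vectors and trained parameters.

```python
def viterbi(observations, states, start_p, trans_p, emit_p):
    """Return the most probable hidden state sequence for the observations."""
    # best[t][s] = (probability of the best path ending in s at time t,
    #               predecessor state on that path)
    best = [{s: (start_p[s] * emit_p[s][observations[0]], None) for s in states}]
    for obs in observations[1:]:
        column = {}
        for s in states:
            prob, prev = max(
                (best[-1][p][0] * trans_p[p][s] * emit_p[s][obs], p)
                for p in states
            )
            column[s] = (prob, prev)
        best.append(column)
    # Backtrack from the most probable final state.
    last = max(states, key=lambda s: best[-1][s][0])
    path = [last]
    for column in reversed(best[1:]):
        path.append(column[path[-1]][1])
    path.reverse()
    return path

# Hypothetical toy model: all numbers below are made up for illustration.
states = ("calm", "aroused")
start_p = {"calm": 0.6, "aroused": 0.4}
trans_p = {
    "calm": {"calm": 0.7, "aroused": 0.3},
    "aroused": {"calm": 0.4, "aroused": 0.6},
}
emit_p = {
    "calm": {"low": 0.8, "high": 0.2},
    "aroused": {"low": 0.1, "high": 0.9},
}

path = viterbi(["low", "low", "high", "high"], states, start_p, trans_p, emit_p)
print(path)  # ['calm', 'calm', 'aroused', 'aroused']
```

For long sequences, an implementation would usually work with log-probabilities to avoid numerical underflow; plain products are kept here for readability.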

It has been shown that, given enough acoustic evidence, the emotional state of a person can be classified by a set of majority-voting classifiers. The proposed set of classifiers is based on three main classifiers: kNN, C4.5 and SVM with an RBF kernel. This set achieves better performance than each basic classifier taken separately. It is compared with two other sets of classifiers: a one-against-all (OAA) multiclass SVM with hybrid kernels, and a set consisting of two basic classifiers, C5.0 and a neural network. The proposed variant achieves better performance than the other two sets of classifiers.[22]


事实证明,如果有足够的声学证据,可以通过一组多数投票分类器对一个人的情绪状态进行分类。该分类器集合基于三个主要分类器: kNN、 C4.5和 SVM-RBF 核。该分类器比单独采集的基本分类器具有更好的分类性能。将其与其他两组分类器进行比较:具有混合内核的一对多 (OAA) 多类 SVM 和由以下两个基本分类器组成的分类器组:C5.0 和神经网络。所提出的变体比其他两组分类器获得了更好的性能【22】。
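The majority-voting idea described above can be sketched as follows. The three base classifiers here are trivial threshold rules standing in for the kNN, C4.5 and SVM models named in the text; their names, thresholds, and the feature dictionary are assumptions made purely so that the example is self-contained, and they do not reproduce the cited study.

```python
from collections import Counter

def majority_vote(classifiers, features):
    """Return the label predicted by most of the base classifiers."""
    votes = Counter(classify(features) for classify in classifiers)
    return votes.most_common(1)[0][0]

# Hypothetical stand-ins for trained base classifiers.
def pitch_rule(features):
    return "anger" if features["pitch_hz"] > 180.0 else "sadness"

def rate_rule(features):
    return "anger" if features["syllables_per_s"] > 4.0 else "sadness"

def energy_rule(features):
    return "anger" if features["energy"] > 0.5 else "sadness"

ensemble = [pitch_rule, rate_rule, energy_rule]
utterance = {"pitch_hz": 215.0, "syllables_per_s": 5.6, "energy": 0.3}

decision = majority_vote(ensemble, utterance)
print(decision)  # two of the three rules vote "anger"
```

Using an odd number of base classifiers on a two-class problem avoids ties; with more classes, a tie-breaking rule would have to be chosen explicitly.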

Databases


The vast majority of present systems are data-dependent. This creates one of the biggest challenges in detecting emotions based on speech, as it involves choosing an appropriate database with which to train the classifier. Most of the currently available data was obtained from actors and is thus a representation of archetypal emotions. Those so-called acted databases are usually based on the Basic Emotions theory (by Paul Ekman), which assumes the existence of six basic emotions (anger, fear, disgust, surprise, joy, sadness), the others simply being a mix of the former ones.[23] Nevertheless, these still offer high audio quality and balanced classes (although often too few), which contribute to high success rates in recognizing emotions.


绝大多数现有系统都依赖于数据。 这造成了基于语音检测情绪的最大挑战之一,因为它涉及选择用于训练分类器的合适数据库。 目前拥有的大部分数据都是从演员那里获得的,因此是原型情感的代表。这些所谓的行为数据库通常是基于基本情绪理论(保罗 · 埃克曼) ,该理论假定存在六种基本情绪(愤怒、恐惧、厌恶、惊讶、喜悦、悲伤) ,其他情绪只是前者的混合体【23】。尽管如此,这些仍然提供高音质和平衡的类别(尽管通常太少),有助于提高识别情绪的成功率。

However, for real-life applications, naturalistic data is preferred. A naturalistic database can be produced by observation and analysis of subjects in their natural context. Ultimately, such a database should allow the system to recognize emotions based on their context as well as work out the goals and outcomes of the interaction. The nature of this type of data allows for authentic real-life implementation, because it describes states naturally occurring during human–computer interaction (HCI).


然而,对于现实生活应用,自然数据是首选的。自然数据库可以通过在自然环境中观察和分析对象来产生。最终,这样的数据库应该允许系统根据他们的上下文识别情绪,并制定交互的目标和结果。此类数据的性质允许真实的现实生活实施,因为它描述了人机交互 (HCI) 期间自然发生的状态。

Despite the numerous advantages that naturalistic data has over acted data, it is difficult to obtain and usually has low emotional intensity. Moreover, data obtained in a natural context has lower signal quality, owing to ambient noise and the distance of the subjects from the microphone. The first attempt to produce such a database was the FAU Aibo Emotion Corpus for CEICES (Combining Efforts for Improving Automatic Classification of Emotional User States), which was developed from a realistic context of children (aged 10–13) playing with Sony's Aibo robot pet.[24][25] Likewise, producing one standard database for all emotion research would provide a method of evaluating and comparing different affect recognition systems.

Speech descriptors

The complexity of the affect recognition process increases with the number of classes (affects) and speech descriptors used within the classifier. It is therefore crucial to select only the most relevant features, both to ensure that the model can successfully identify emotions and to improve performance, which is particularly significant for real-time detection. The range of possible choices is vast, with some studies mentioning the use of over 200 distinct features.[20] Identifying those that are redundant or undesirable optimizes the system and increases the success rate of correct emotion detection. The most common speech characteristics are categorized into the following groups.[24][25]

- Frequency characteristics:[26]
  - Accent shape – affected by the rate of change of the fundamental frequency.
  - Average pitch – describes how high or low the speaker's voice is relative to normal speech.
  - Contour slope – describes the tendency of the frequency change over time; it can be rising, falling, or level.
  - Final lowering – the amount by which the frequency falls at the end of an utterance.
  - Pitch range – measures the spread between the maximum and minimum frequency of an utterance.

- Time-related features:
  - Speech rate – describes the rate of words or syllables uttered over a unit of time.
  - Stress frequency – measures the rate of occurrence of pitch-accented utterances.

- Voice quality parameters and energy descriptors:
  - Breathiness – measures the aspiration noise in speech.
  - Brilliance – describes the dominance of high or low frequencies in the speech.
  - Loudness – measures the amplitude of the speech waveform, which translates to the energy of an utterance.
  - Pause discontinuity – describes the transitions between sound and silence.
  - Pitch discontinuity – describes the transitions of the fundamental frequency.
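
As a rough illustration of how a few of the descriptors above might be computed, and how redundant ones might be filtered out, the following Python sketch uses NumPy only. It is a minimal sketch under stated assumptions, not the method of any cited study: the function names, the 20% "tail" window used for final lowering, the use of RMS amplitude as a stand-in for loudness, and the 0.95 correlation threshold are all hypothetical choices made for the example. The pitch contour is assumed to be already extracted as one fundamental-frequency value per voiced frame.

```python
import numpy as np


def frequency_descriptors(f0_hz, frame_rate_hz, tail_fraction=0.2):
    """Summarise a pitch contour (one F0 value in Hz per voiced frame)."""
    f0 = np.asarray(f0_hz, dtype=float)
    times = np.arange(f0.size) / frame_rate_hz
    # Contour slope: least-squares line through the contour, in Hz per second.
    slope, _intercept = np.polyfit(times, f0, 1)
    # Final lowering (assumed definition): mean F0 of the earlier frames
    # minus mean F0 of the last `tail_fraction` of frames.
    tail = max(1, int(f0.size * tail_fraction))
    return {
        "average_pitch": float(f0.mean()),
        "pitch_range": float(f0.max() - f0.min()),
        "contour_slope": float(slope),
        "final_lowering": float(f0[:-tail].mean() - f0[-tail:].mean()),
    }


def loudness_rms(samples):
    """Root-mean-square amplitude, used here as a simple loudness proxy."""
    x = np.asarray(samples, dtype=float)
    return float(np.sqrt(np.mean(x ** 2)))


def speech_rate(n_syllables, duration_s):
    """Syllables uttered per second."""
    return n_syllables / duration_s


def drop_redundant(features, names, threshold=0.95):
    """Greedy filter: keep a feature only if its absolute correlation with
    every feature kept so far is below `threshold`.

    `features` has one row per utterance and one column per descriptor.
    """
    corr = np.abs(np.corrcoef(np.asarray(features, dtype=float), rowvar=False))
    kept = []
    for j in range(corr.shape[0]):
        if all(corr[j, k] < threshold for k in kept):
            kept.append(j)
    return [names[j] for j in kept]
```

A correlation filter is only one of many possible ways to discard redundant descriptors; it is used here because it is short and does not depend on emotion labels.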

Facial affect detection

Facial expressions are detected and processed through various methods, such as optical flow, hidden Markov models, neural network processing, or active appearance models. Multiple modalities can be combined or fused (multimodal recognition, e.g. facial expressions and speech prosody,[27] facial expressions and hand gestures,[28] or facial expressions with speech and text for multimodal data and metadata analysis) to provide a more robust estimation of the subject's emotional state. Affectiva is a company (co-founded by Rosalind Picard and Rana El Kaliouby) that works directly in affective computing and aims to investigate solutions and software for facial affect detection.
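
One simple way to combine modalities is decision-level ("late") fusion, in which each modality's classifier outputs a probability for every emotion and the outputs are averaged. The Python sketch below illustrates that idea only; the text above does not say which fusion scheme any particular system uses, and the function name `late_fusion`, the modality names, and the optional weights are assumptions made for the example.

```python
import numpy as np

# The six basic emotions, used here as the shared class order (an assumption).
EMOTIONS = ["anger", "disgust", "fear", "happiness", "sadness", "surprise"]


def late_fusion(probs_by_modality, weights=None):
    """Fuse per-modality class probabilities by a weighted average.

    probs_by_modality: dict mapping a modality name to a sequence of
        len(EMOTIONS) probabilities from that modality's classifier.
    weights: optional dict mapping modality name to a non-negative weight;
        equal weights are used when omitted.
    """
    names = list(probs_by_modality)
    probs = np.array([probs_by_modality[m] for m in names], dtype=float)
    if weights is None:
        w = np.ones(len(names))
    else:
        w = np.array([weights[m] for m in names], dtype=float)
    fused = w @ probs              # weighted sum over modalities
    fused = fused / fused.sum()    # renormalise to a probability vector
    return EMOTIONS[int(np.argmax(fused))], fused


# Hypothetical classifier outputs for one video frame and its audio segment.
face = [0.10, 0.10, 0.10, 0.50, 0.10, 0.10]
speech = [0.30, 0.05, 0.05, 0.40, 0.10, 0.10]
label, fused = late_fusion({"face": face, "speech": speech})
```

Weighting lets a more reliable modality dominate, for example when the audio is noisy; feature-level fusion, where descriptors from all modalities feed a single classifier, is an alternative that this sketch does not cover.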

Facial expression databases

Creating an emotion database is a difficult and time-consuming task. However, it is an essential step in building a system that will recognize human emotions. Most publicly available emotion databases include posed facial expressions only. In posed expression databases, participants are asked to display different basic emotional expressions, while in spontaneous expression databases, the expressions are natural. Spontaneous emotion elicitation requires significant effort in selecting proper stimuli that can lead to a rich display of the intended emotions. In addition, the process involves manual tagging of emotions by trained individuals, which makes the databases highly reliable. Since the perception of expressions and their intensity is subjective in nature, annotation by experts is essential for validation.

Researchers work with three types of databases: databases of peak expression images only, databases of image sequences portraying an emotion from neutral to its peak, and video clips with emotional annotations. Many facial expression databases have been created and made public for expression recognition purposes. Two widely used databases are CK+ and JAFFE.

Emotion classification

Through cross-cultural research among the Fore tribesmen of Papua New Guinea at the end of the 1960s, Paul Ekman proposed that facial expressions of emotion are not culturally determined, but universal. He thus suggested that they are biological in origin and can therefore be safely and correctly categorized.[23] In 1972, he officially put forth six basic emotions:[29]

- Anger

- Disgust

- Fear

- Happiness

- Sadness

- Surprise

However, in the 1990s Ekman expanded his list of basic emotions to include a range of positive and negative emotions, not all of which are encoded in facial muscles.[30] The newly included emotions are:

- Amusement

- Contempt

- Contentment

- Embarrassment

- Excitement

- Guilt

- Pride in achievement

- Relief

- Satisfaction

- Sensory pleasure

- Shame

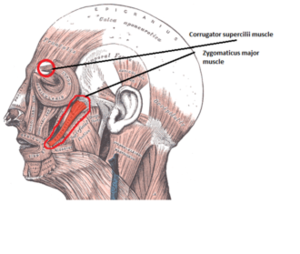

Facial Action Coding System

Psychologists have conceived a system to formally categorize the physical expression of emotions on faces. The central concept of the Facial Action Coding System, or FACS, created by Paul Ekman and Wallace V. Friesen in 1978 based on earlier work by Carl-Herman Hjortsjö,[31] is the action unit (AU). An action unit is, basically, a contraction or a relaxation of one or more muscles. Psychologists have proposed the following classification of six basic emotions according to their action units ("+" here means "and"):