Differences between revisions of "Technological singularity"

| Line 216: | Line 216: | ||

The mechanism for a recursively self-improving set of algorithms differs from an increase in raw computation speed in two ways. First, it does not require external influence: machines designing faster hardware would still require humans to create the improved hardware, or to program factories appropriately.{{citation needed|date=July 2017}} An AI rewriting its own source code could do so while contained in an [[AI box]]. | The mechanism for a recursively self-improving set of algorithms differs from an increase in raw computation speed in two ways. First, it does not require external influence: machines designing faster hardware would still require humans to create the improved hardware, or to program factories appropriately.{{citation needed|date=July 2017}} An AI rewriting its own source code could do so while contained in an [[AI box]]. | ||

| − | + | 递归自我改进算法集的机制在两个方面不同于原始计算速度的提高。首先,它不需要外部影响:设计更快的硬件的机器仍然需要人类来创造改进的硬件,或者对工厂进行适当的编程。AI可以既身处一个AI盒 AI box里面,又同时改进自己的 源代码。 | |

Second, as with [[Vernor Vinge]]’s conception of the singularity, it is much harder to predict the outcome. While speed increases seem to be only a quantitative difference from human intelligence, actual algorithm improvements would be qualitatively different. [[Eliezer Yudkowsky]] compares it to the changes that human intelligence brought: humans changed the world thousands of times more rapidly than evolution had done, and in totally different ways. Similarly, the evolution of life was a massive departure and acceleration from the previous geological rates of change, and improved intelligence could cause change to be as different again.<ref name="yudkowsky">{{cite web|author=Eliezer S. Yudkowsky |url=http://yudkowsky.net/singularity/power |title=Power of Intelligence |publisher=Yudkowsky |accessdate=2011-09-09}}</ref> | Second, as with [[Vernor Vinge]]’s conception of the singularity, it is much harder to predict the outcome. While speed increases seem to be only a quantitative difference from human intelligence, actual algorithm improvements would be qualitatively different. [[Eliezer Yudkowsky]] compares it to the changes that human intelligence brought: humans changed the world thousands of times more rapidly than evolution had done, and in totally different ways. Similarly, the evolution of life was a massive departure and acceleration from the previous geological rates of change, and improved intelligence could cause change to be as different again.<ref name="yudkowsky">{{cite web|author=Eliezer S. Yudkowsky |url=http://yudkowsky.net/singularity/power |title=Power of Intelligence |publisher=Yudkowsky |accessdate=2011-09-09}}</ref> | ||

| Line 349: | Line 349: | ||

According to Eliezer Yudkowsky, a significant problem in AI safety is that unfriendly artificial intelligence is likely to be much easier to create than friendly AI. While both require large advances in recursive optimisation process design, friendly AI also requires the ability to make goal structures invariant under self-improvement (or the AI could transform itself into something unfriendly) and a goal structure that aligns with human values and does not automatically destroy the human race. An unfriendly AI, on the other hand, can optimize for an arbitrary goal structure, which does not need to be invariant under self-modification. Bill Hibbard (2014) proposes an AI design that avoids several dangers including self-delusion, unintended instrumental actions, and corruption of the reward generator.[84] He also discusses social impacts of AI and testing AI. His 2001 book Super-Intelligent Machines advocates the need for public education about AI and public control over AI. It also proposed a simple design that was vulnerable to corruption of the reward generator. | According to Eliezer Yudkowsky, a significant problem in AI safety is that unfriendly artificial intelligence is likely to be much easier to create than friendly AI. While both require large advances in recursive optimisation process design, friendly AI also requires the ability to make goal structures invariant under self-improvement (or the AI could transform itself into something unfriendly) and a goal structure that aligns with human values and does not automatically destroy the human race. An unfriendly AI, on the other hand, can optimize for an arbitrary goal structure, which does not need to be invariant under self-modification. Bill Hibbard (2014) proposes an AI design that avoids several dangers including self-delusion, unintended instrumental actions, and corruption of the reward generator.[84] He also discusses social impacts of AI and testing AI. 
His 2001 book Super-Intelligent Machines advocates the need for public education about AI and public control over AI. It also proposed a simple design that was vulnerable to corruption of the reward generator. | ||

| − | 按照[[Eliezer Yudkowsky]]的观点,人工智能安全的一个重要问题是,不友好的人工智能可能比友好的人工智能更容易创建。虽然两者都需要递归优化过程的进步,但友好的人工智能还需要目标结构在自我改进过程中保持不变(否则人工智能可以将自己转变成不友好的东西),以及一个与人类价值观相一致且不会自动毁灭人类的目标结构。另一方面,一个不友好的人工智能可以针对任意的目标结构进行优化,而目标结构不需要在自我改进过程中保持不变。Bill Hibbard (2014) | + | 按照[[Eliezer Yudkowsky]]的观点,人工智能安全的一个重要问题是,不友好的人工智能可能比友好的人工智能更容易创建。虽然两者都需要递归优化过程的进步,但友好的人工智能还需要目标结构在自我改进过程中保持不变(否则人工智能可以将自己转变成不友好的东西),以及一个与人类价值观相一致且不会自动毁灭人类的目标结构。另一方面,一个不友好的人工智能可以针对任意的目标结构进行优化,而目标结构不需要在自我改进过程中保持不变。Bill Hibbard (2014)提出了一种人工智能设计,可以避免包括自欺欺人、无意的工具性行为和奖励机制的腐败等一些危险。他还讨论了人工智能和人工智能测试的社会影响。他在2001年出版的“超级智能机器Super-Intelligent Machines”一书中提倡对人工智能的公共教育和公众控制。该书还提出了一个简单的易受奖励机制的腐败影响的设计。It also proposed a simple design that was vulnerable to corruption of the reward generator. |

===Next step of sociobiological evolution社会生物进化的下一步=== | ===Next step of sociobiological evolution社会生物进化的下一步=== | ||

| Line 371: | Line 371: | ||

In addition, some argue that we are already in the midst of a [[The Major Transitions in Evolution|major evolutionary transition]] that merges technology, biology, and society. Digital technology has infiltrated the fabric of human society to a degree of indisputable and often life-sustaining dependence. | In addition, some argue that we are already in the midst of a [[The Major Transitions in Evolution|major evolutionary transition]] that merges technology, biology, and society. Digital technology has infiltrated the fabric of human society to a degree of indisputable and often life-sustaining dependence. | ||

| − | + | 此外,有人认为,我们已经处在一个融合了技术、生物学和社会学的进化巨变major evolutionary transition之中。数字技术已经无可争辩地渗透到人类社会的结构中,而且生命的维持常常依赖数字技术。 | |

| Line 472: | Line 472: | ||

Beyond merely extending the operational life of the physical body, [[Jaron Lanier]] argues for a form of immortality called "Digital Ascension" that involves "people dying in the flesh and being uploaded into a computer and remaining conscious".<ref>{{cite book |title = You Are Not a Gadget: A Manifesto |last = Lanier |first = Jaron |author-link = Jaron Lanier |publisher = [[Alfred A. Knopf]] |year = 2010 |isbn = 978-0307269645 |location = New York, NY |page = [https://archive.org/details/isbn_9780307269645/page/26 26] |url-access = registration |url = https://archive.org/details/isbn_9780307269645 }}</ref> | Beyond merely extending the operational life of the physical body, [[Jaron Lanier]] argues for a form of immortality called "Digital Ascension" that involves "people dying in the flesh and being uploaded into a computer and remaining conscious".<ref>{{cite book |title = You Are Not a Gadget: A Manifesto |last = Lanier |first = Jaron |author-link = Jaron Lanier |publisher = [[Alfred A. Knopf]] |year = 2010 |isbn = 978-0307269645 |location = New York, NY |page = [https://archive.org/details/isbn_9780307269645/page/26 26] |url-access = registration |url = https://archive.org/details/isbn_9780307269645 }}</ref> | ||

| − | 除了仅仅延长物质身体的运行寿命之外,[[Jaron Lanier]] | + | 除了仅仅延长物质身体的运行寿命之外,[[Jaron Lanier]]还主张一种称为“数字提升Digital Ascension”的不朽形式,即“人在肉体层面死亡,意识被上传到电脑里并保持清醒”。 |

| Line 484: | Line 484: | ||

An early description of the idea was made in [[John Wood Campbell Jr.]]'s 1932 short story "The last evolution". | An early description of the idea was made in [[John Wood Campbell Jr.]]'s 1932 short story "The last evolution". | ||

| − | 1932年[[约翰.伍德.坎贝尔] | + | 1932年[[约翰.伍德.坎贝尔]的短篇小说《最后的进化》(the last evolution)对这一想法作了早期的描述。 |

In his 1958 obituary for [[John von Neumann]], Ulam recalled a conversation with von Neumann about the "ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue."<ref name=mathematical/> | In his 1958 obituary for [[John von Neumann]], Ulam recalled a conversation with von Neumann about the "ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue."<ref name=mathematical/> | ||

Revision as of 22:42, 6 August 2021 (Friday)

This entry was provisionally translated by 水流心不竞 and 嘉树 and reviewed by 徐培. We apologize for any inconvenience in reading.

{{Short description|Hypothetical point in time at which technological growth becomes uncontrollable and irreversible}}

The technological singularity—also, simply, the singularity[1]—is a hypothetical point in time at which technological growth becomes uncontrollable and irreversible, resulting in unforeseeable changes to human civilization.[2][3] According to the most popular version of the singularity hypothesis, called intelligence explosion, an upgradable intelligent agent will eventually enter a "runaway reaction" of self-improvement cycles, each new and more intelligent generation appearing more and more rapidly, causing an "explosion" in intelligence and resulting in a powerful superintelligence that qualitatively far surpasses all human intelligence.

技术奇点Technological singularity——简称 奇点 Singularity [4]是一个假设的时间点。在该时间点上,技术增长变得不可控制和不可逆转,从而导致人类文明发生无法预见的变化。[5][3]根据奇点假说(也被称为智能爆炸 intelligence explosion)最流行的版本:一个可升级的智能体终将进入一种自我完善循环的“失控反应 runaway reaction”。每个新的、更智能的世代将出现得越来越快,导致智能的“爆炸”,并产生一种在实质上远超所有人类智能的超级智能。

The first to use the concept of a "singularity" in the technological context was John von Neumann.[6] Stanislaw Ulam reports a discussion with von Neumann "centered on the accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue".[7] Subsequent authors have echoed this viewpoint.[3][8]

第一次在科技领域使用“奇点”这一概念的是冯·诺依曼 John von Neumann[9]。Stanislaw Ulam 报告了一次与冯·诺依曼的讨论。“围绕技术的加速进步和人类生活模式的改变,这让我们看到了人类历史上一些本质上的奇点。[7] 一旦超越了这些奇点,我们所熟知的人类事务就无法继续下去了”。后来作者也赞同这一观点。[3][8]

I. J. Good's "intelligence explosion" model predicts that a future superintelligence will trigger a singularity.[10]

I. J.古德的“智能爆炸”模型预测,未来的超级智能将触发一个奇点。[10]

The concept and the term "singularity" were popularized by Vernor Vinge in his 1993 essay The Coming Technological Singularity, in which he wrote that it would signal the end of the human era, as the new superintelligence would continue to upgrade itself and would advance technologically at an incomprehensible rate. He wrote that he would be surprised if it occurred before 2005 or after 2030.[10]

奇点的概念和术语“奇点”是由 Vernor Vinge 在他1993年的文章《即将到来的技术奇点 The Coming Technological Singularity》中得到推广的。他在文中写道,这将标志着人类时代的终结,因为新的超级智能将持续自我升级,并以不可思议的速度在技术上进步。他写道,如果奇点发生在2005年之前或2030年之后,他会感到惊讶。[10]

Public figures such as Stephen Hawking and Elon Musk have expressed concern that full artificial intelligence (AI) could result in human extinction.[11][12] The consequences of the singularity and its potential benefit or harm to the human race have been intensely debated.

斯蒂芬·霍金和埃隆·马斯克等公众人物对完全人工智能(AI)可能导致人类灭绝表示担忧。[13][14]奇点的后果及其对人类的潜在利益或伤害一直存在激烈的争论。

Four polls of AI researchers, conducted in 2012 and 2013 by Nick Bostrom and Vincent C. Müller, suggested a median probability estimate of 50% that artificial general intelligence (AGI) would be developed by 2040–2050.[15][16]

2012年到2013年,Nick Bostrom和Vincent c. Müller 对人工智能研究人员进行了四次调查。结果显示,通用人工智能(artificial general intelligence, AGI)在2040年至2050年被成功开发出来的概率估计的中位数为50% 。[15][17]

Background 背景

Although technological progress has been accelerating, it has been limited by the basic intelligence of the human brain, which has not, according to Paul R. Ehrlich, changed significantly for millennia.[18] However, with the increasing power of computers and other technologies, it might eventually be possible to build a machine that is significantly more intelligent than humans.[19]

虽然技术进步一直在加速,但它一直受到人脑基本智力的限制,而根据Paul R.Ehrlich的说法,人类大脑的基本智力在几千年来并没有发生显著变化。[18]然而,随着计算机和其他技术的日益强大,人类最终有可能制造出一台比人类智能得多的机器[19]

If a superhuman intelligence were to be invented—either through the amplification of human intelligence or through artificial intelligence—it would bring to bear greater problem-solving and inventive skills than current humans are capable of. Such an AI is referred to as Seed AI because if an AI were created with engineering capabilities that matched or surpassed those of its human creators, it would have the potential to autonomously improve its own software and hardware or design an even more capable machine. This more capable machine could then go on to design a machine of yet greater capability. These iterations of recursive self-improvement could accelerate, potentially allowing enormous qualitative change before any upper limits imposed by the laws of physics or theoretical computation set in. It is speculated that over many iterations, such an AI would far surpass human cognitive abilities.

如果一种超人类智能被发明出来。无论是通过人类智能的放大还是通过人工智能,它将带来比现在的人类更强的问题解决和发明创造能力。这种人工智能被称为种子人工智能 Seed AI。因为如果人工智能的工程能力能够与它的人类创造者相匹敌或超越,那么它就有潜力自主改进自己的软件和硬件,或者设计出更强大的机器。这台能力更强的机器可以继续设计一台能力更强的机器。这种自我递归改进的迭代可以加速,在物理定律或理论计算设定的任何上限之前,可能会发生巨大的质变。据推测,经过多次迭代,这样的人工智能将远远超过人类的认知能力。

Intelligence explosion智能爆炸

Intelligence explosion is a possible outcome of humanity building artificial general intelligence (AGI). AGI would be capable of recursive self-improvement, leading to the rapid emergence of artificial superintelligence (ASI), the limits of which are unknown, shortly after technological singularity is achieved.

智能爆炸是构建通用人工智能 artificial general intelligence (AGI) 的可能结果。在技术奇点实现后不久,AGI 将能够进行递归式的自我迭代,从而导致人工超级智能 artificial superintelligence (ASI) 的迅速出现,但其局限性尚不清楚。

I. J. Good speculated in 1965 that artificial general intelligence might bring about an intelligence explosion. He speculated on the effects of superhuman machines, should they ever be invented:[20]

1965年,I.J.Good 曾推测人工通用智能可能会带来智能爆炸。他对超人类及其的影响进行了推测,如果他们真的被发明出来的话:[20]

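{{Let an ultraintelligent machine be defined as a machine that can far surpass all the intellectual activities of any man however clever. Since the design of machines is one of these intellectual activities, an ultraintelligent machine could design even better machines; there would then unquestionably be an "intelligence explosion", and the intelligence of man would be left far behind. Thus the first ultraintelligent machine is the last invention that man need ever make, provided that the machine is docile enough to tell us how to keep it under control.}}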

Good's scenario runs as follows: as computers increase in power, it becomes possible for people to build a machine that is more intelligent than humanity; this superhuman intelligence possesses greater problem-solving and inventive skills than current humans are capable of. This superintelligent machine then designs an even more capable machine, or re-writes its own software to become even more intelligent; this (even more capable) machine then goes on to design a machine of yet greater capability, and so on. These iterations of recursive self-improvement accelerate, allowing enormous qualitative change before any upper limits imposed by the laws of physics or theoretical computation set in.[20]

古德的设想如下:随着计算机能力的增加,人们有可能制造出一台比人类更智能的机器;这种超人的智能拥有比现在人类更强大的问题解决和发明创造的能力。这台超级智能机器随后设计一台功能更强大的机器,或者重写自己的软件来变得更加智能;这台(甚至更强大的)机器接着继续设计功能更强大的机器,以此类推。这些递归式的自我完善的迭代加速,允许在物理定律或理论计算设定的任何上限之内发生巨大的质变。[20]

Other manifestations其他表现形式

Emergence of superintelligence超级智能的出现

{{Further|Superintelligence}}

A superintelligence, hyperintelligence, or superhuman intelligence is a hypothetical agent that possesses intelligence far surpassing that of the brightest and most gifted human minds. "Superintelligence" may also refer to the form or degree of intelligence possessed by such an agent. John von Neumann, Vernor Vinge and Ray Kurzweil define the concept in terms of the technological creation of super intelligence. They argue that it is difficult or impossible for present-day humans to predict what human beings' lives would be like in a post-singularity world.[10][21]

超级智能、超智能或超人智能是一种假想的智能体。它拥有的智能远远超过最聪明、最有天赋的人类大脑的智能。“超级智能”也可以指这种智能体所拥有的智能的形式或程度。John von Neumann,Vernor Vinge和Ray Kurzweil 从技术创造超级智能的角度定义了这个概念。他们认为,现在的人类很难或不可能预测人类在后奇点世界的生活会是什么样子[10][21]

Technology forecasters and researchers disagree about if or when human intelligence is likely to be surpassed. Some argue that advances in artificial intelligence (AI) will probably result in general reasoning systems that lack human cognitive limitations. Others believe that humans will evolve or directly modify their biology so as to achieve radically greater intelligence. A number of futures studies scenarios combine elements from both of these possibilities, suggesting that humans are likely to interface with computers, or upload their minds to computers, in a way that enables substantial intelligence amplification.

技术预言家和研究人员对人类智能是否或何时可能被超越存在分歧。一些人认为,人工智能(AI)的进步可能会产生没有人类认知局限的一般推理系统。另一些人则认为,人类将进化或直接改变自己的生物性,从而从根本上实现更高的智能。许多未来研究的场景结合了这两种可能的元素,认为人类很可能会与计算机交互,或以将他们的意识上传到计算机的方式实现大量的智能增益。

Non-AI singularity非人工智能奇点

Some writers use "the singularity" in a broader way to refer to any radical changes in our society brought about by new technologies such as molecular nanotechnology,[22][23][24] although Vinge and other writers specifically state that without superintelligence, such changes would not qualify as a true singularity.[10]

一些作家writers更宽泛地使用“奇点”的概念,用来指代任何我们社会中由新技术带来的剧烈变化,如分子纳米技术,[22][23][24] 尽管Vinge和其他作家明确指出,如果没有超级智能,这些改变就不能算作真正的奇点。[10]

Speed superintelligence速度超智能

A speed superintelligence describes an AI that can do everything that a human can do, where the only difference is that the machine runs faster.[25] For example, with a million-fold increase in the speed of information processing relative to that of humans, a subjective year would pass in 30 physical seconds.[26] Such a difference in information processing speed could drive the singularity.[27]

速度超级智能描述了一个人工智能,它可以做任何人类能做的事情,唯一的区别是这个机器运行得更快.[28] 。例如,与人类相比,它信息处理的速度提高了一百万倍,一个主观年将在30个物理秒内过去。[26]这种在信息处理上的差异可能会导致奇点。.[29]
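The "30 physical seconds" figure can be checked with a short calculation. The sketch below is only illustrative: the function name is hypothetical, and it assumes a year of 365.25 days, which the source does not specify.

```python
# Assumption: one year = 365.25 days (the source only says "a subjective year").
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60


def physical_seconds_per_subjective_year(speedup: float) -> float:
    """Wall-clock seconds that pass while a mind running `speedup` times
    faster than a human experiences one subjective year."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return SECONDS_PER_YEAR / speedup


# A million-fold speedup, as in the passage above.
print(round(physical_seconds_per_subjective_year(1_000_000), 1))  # 31.6
```

Under this assumption a million-fold speedup gives roughly 31.6 seconds, consistent with the rounded figure of 30 seconds in the text.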

Plausibility合理性

Many prominent technologists and academics dispute the plausibility of a technological singularity, including Paul Allen, Jeff Hawkins, John Holland, Jaron Lanier, and Gordon Moore, whose law is often cited in support of the concept.[30][31][32]

许多著名的技术专家和学者都对技术奇点的合理性提出质疑,包括Paul Allen、Jeff Hawkins、 John Holland、Jaron Lanier和Gordon Moore,他的摩尔定律经常被引用来支持这一概念。[30][31][32]

Most proposed methods for creating superhuman or transhuman minds fall into one of two categories: intelligence amplification of human brains and artificial intelligence. The speculated ways to produce intelligence augmentation are many, and include bioengineering, genetic engineering, nootropic drugs, AI assistants, direct brain–computer interfaces and mind uploading. Because multiple paths to an intelligence explosion are being explored, a singularity becomes more likely: for it not to occur, every one of these paths would have to fail.[26]

大多数创造超人或跨人类头脑的方法分为两类:人脑的智能增强和人工智能。据推测,智能增强的方法很多,包括生物工程、基因工程、益智药物、AI 助手、直接脑机接口和思维上传。因为人们正在探索通向智能爆炸的多种途径,这使得奇点出现的可能更大;奇点不发生,所有这些都必将失败。[26]
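The "all paths would have to fail" argument can be made concrete with a small calculation. This is a sketch under strong assumptions that are not in the source: the paths are treated as statistically independent, and the per-path probabilities are made-up placeholders, not estimates.

```python
import math


def probability_any_path_succeeds(path_probabilities):
    """Chance that at least one path succeeds, assuming independent paths.

    Every path must fail for no success to occur, so the failure
    probabilities (1 - p) are multiplied together.
    """
    for p in path_probabilities:
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    all_fail = math.prod(1.0 - p for p in path_probabilities)
    return 1.0 - all_fail


# Hypothetical numbers for illustration only.
example_paths = [0.10, 0.10, 0.05]
print(probability_any_path_succeeds(example_paths))
```

If the paths share common obstacles, the independence assumption fails and the combined probability would be lower than this calculation suggests.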

Robin Hanson expressed skepticism of human intelligence augmentation, writing that once the "low-hanging fruit" of easy methods for increasing human intelligence have been exhausted, further improvements will become increasingly difficult to find.[33] Despite all of the speculated ways for amplifying human intelligence, non-human artificial intelligence (specifically seed AI) is the most popular option among the hypotheses that would advance the singularity.[citation needed]

Robin Hanson 对人类智能增强表示怀疑,他写道,一旦提高人类智力的“唾手可得的”简单方法用尽,进一步的改进将变得越来越难。[33]尽管有各种提高人类智能的方法,但非人类人工智能(特别是种子人工智能)仍是所有能推进奇点的假说中最受欢迎的一个。[citation needed]

Whether or not an intelligence explosion occurs depends on three factors.[34] The first accelerating factor is the new intelligence enhancements made possible by each previous improvement. Contrariwise, as the intelligences become more advanced, further advances will become more and more complicated, possibly overcoming the advantage of increased intelligence. Each improvement should beget at least one more improvement, on average, for movement towards singularity to continue. Finally, the laws of physics will eventually prevent any further improvements.

智能爆炸是否发生取决于三个因素。第一个加速因素是以前的每一次改进都使新的智能增强成为可能。相反,随着智能的进步,进一步的发展将变得越来越复杂,可能会抵消智力增长的优势。平均而言,每一次改进都应该至少带来一次改进,以便继续朝着奇点的方向发展。最后,物理定律最终会阻止任何进一步的改进。
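The condition that each improvement must beget at least one more improvement can be illustrated with a toy model. The sketch below is an assumption-laden simplification, not a model from the source: `r` is a hypothetical average number of follow-on improvements per improvement, and `cap` stands in for the limit set by the laws of physics.

```python
def cumulative_improvements(r: float, generations: int,
                            cap: float = float("inf")) -> float:
    """Total improvements after `generations` rounds, starting from one.

    Each improvement begets `r` further improvements on average, so round k
    contributes r**k. The running total is clipped at `cap`.
    """
    total = 0.0
    current = 1.0
    for _ in range(generations):
        total += current
        if total >= cap:
            return cap
        current *= r
    return total


print(cumulative_improvements(0.5, 60))            # stalls near 2
print(cumulative_improvements(1.0, 10))            # grows steadily: 10.0
print(cumulative_improvements(2.0, 10))            # accelerates: 1023.0
print(cumulative_improvements(2.0, 10, cap=100.0)) # halted by the cap: 100.0
```

With `r` below 1 the process fizzles out at a finite total, with `r` at or above 1 it keeps going, and in every case the cap eventually stops it, mirroring the three factors named above.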

There are two logically independent, but mutually reinforcing, causes of intelligence improvements: increases in the speed of computation, and improvements to the algorithms used.[35] The former is predicted by Moore's Law and the forecasted improvements in hardware,[36] and is comparatively similar to previous technological advances. But some AI researchers[who?] believe software is more important than hardware.[37][citation needed]

智能改进有两个逻辑上独立但又相互加强的原因:计算速度的提高和使用的算法的改进。[35]前者由摩尔定律和硬件方面的预测改进进行预测,[36]与以前的技术进步比较相似。但也有一些人工智能研究人员认为软件比硬件更重要[38][citation needed]

A 2017 email survey of authors with publications at the 2015 NeurIPS and ICML machine learning conferences asked about the chance of an intelligence explosion. Of the respondents, 12% said it was "quite likely", 17% said it was "likely", 21% said it was "about even", 24% said it was "unlikely" and 26% said it was "quite unlikely".[39]

2017年,一项对2015年 NeurIPS 和 ICML 机器学习会议上发表论文的作者的电子邮件调查询问了智能爆炸的可能性。在受访者中,12% 的人认为“很有可能” ,17% 的人认为“有可能” ,21% 的人认为“可能性中等” ,24% 的人认为“不太可能” ,26% 的人认为“非常不可能”。[40]

Speed improvements速度改进

Both for human and artificial intelligence, hardware improvements increase the rate of future hardware improvements. Simply put,[41] Moore's Law suggests that if the first doubling of speed took 18 months, the second would take 18 subjective months; or 9 external months, whereafter, four months, two months, and so on towards a speed singularity.[42] An upper limit on speed may eventually be reached, although it is unclear how high this would be. Jeff Hawkins has stated that a self-improving computer system would inevitably run into upper limits on computing power: "in the end there are limits to how big and fast computers can run. We would end up in the same place; we'd just get there a bit faster. There would be no singularity."[43]

无论对于人类智能还是人工智能,硬件改进都会提高未来硬件改进的速度。简单地说,[41]Moore's Law认为,如果第一次速度翻倍需要18个月,第二次则需要18个主观月;或者9个外部月,之后,4个月、2个月,以此类推,走向速度奇点[42] 速度的上限最终可能会达到,尽管还不清楚这会有多高。杰夫·霍金斯(Jeff Hawkins)曾表示,一个自我完善的计算机系统不可避免地会遇到计算能力的上限:“最终,计算机的运行速度和速度都是有限的。我们最终会在同一个地方;我们只会更快到达那里。不会有奇点。”[43]
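The "speed singularity" argument is a geometric series: if every doubling takes 18 subjective months and each doubling halves the external time needed, the external durations are 18, 9, 4.5, ... months. The sketch below uses hypothetical function names and assumes exact halving, so it yields 4.5 and 2.25 months where the passage gives the rounded "four months, two months".

```python
def external_months_per_doubling(doublings: int,
                                 first_doubling_months: float = 18.0):
    """External (wall-clock) months needed for each successive doubling."""
    return [first_doubling_months / 2 ** k for k in range(doublings)]


def elapsed_external_months(doublings: int,
                            first_doubling_months: float = 18.0) -> float:
    """Total external months after the given number of doublings."""
    return sum(external_months_per_doubling(doublings, first_doubling_months))


print(external_months_per_doubling(4))  # [18.0, 9.0, 4.5, 2.25]
print(elapsed_external_months(50))      # approaches 36 months
```

Under these assumptions the total never exceeds twice the first doubling time (36 months), which is why unboundedly many doublings would fit into a finite interval unless an upper limit on speed intervenes.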

It is difficult to directly compare silicon-based hardware with neurons. But Berglas (2008) notes that computer speech recognition is approaching human capabilities, and that this capability seems to require 0.01% of the volume of the brain. This analogy suggests that modern computer hardware is within a few orders of magnitude of being as powerful as the human brain.

很难直接将基于硅的硬件与神经元相比较。但是 Berglas (2008) 指出计算机语音识别正在接近人类的能力,而且这种能力似乎需要0.01%的脑容量。这个类比表明,现代计算机硬件与人脑一样强大,只差几个数量级。

Exponential growth指数增长

Martin Ford in The Lights in the Tunnel: Automation, Accelerating Technology and the Economy of the Future

马丁 · 福特Martin Ford的《隧道中的灯光: 自动化,加速技术和未来经济The Lights in the Tunnel: Automation, Accelerating Technology and the Economy of the Future》

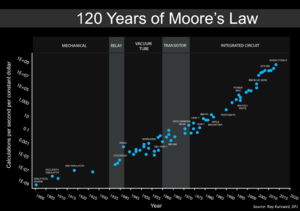

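[[File:PPTMooresLawai.jpg|thumb|Ray Kurzweil writes that, due to paradigm shifts, a trend of exponential growth extends Moore's law from integrated circuits back to earlier transistors, vacuum tubes, relays, and electromechanical computers. He predicts that this exponential growth will continue, and that within a few decades the computing power of all computers will exceed that of ("unenhanced") human brains, with superhuman artificial intelligence appearing around the same time.]]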

[[File:Moore's Law over 120 Years.png|thumb|left|An updated version of Moore's law over 120 years (based on Kurzweil's graph). The 7 most recent data points are all Nvidia GPUs.]]

The exponential growth in computing technology suggested by Moore's law is commonly cited as a reason to expect a singularity in the relatively near future, and a number of authors have proposed generalizations of Moore's law. Computer scientist and futurist Hans Moravec proposed in a 1998 book[44] that the exponential growth curve could be extended back through earlier computing technologies prior to the integrated circuit.

摩尔定律所建议的计算技术的指数增长通常被认为是在相对不远的将来出现奇点的一个理由,许多作者已经提出了摩尔定律的推广。计算机科学家和未来主义者Hans Moravec在1998年的一本书中提到[45]指数型增长曲线可以沿着集成电路之前的早期计算技术进行延伸。

Ray Kurzweil postulates a law of accelerating returns in which the speed of technological change (and more generally, all evolutionary processes[46]) increases exponentially, generalizing Moore's law in the same manner as Moravec's proposal, and also including material technology (especially as applied to nanotechnology), medical technology and others.[47] Between 1986 and 2007, machines' application-specific capacity to compute information per capita roughly doubled every 14 months; the per capita capacity of the world's general-purpose computers has doubled every 18 months; the global telecommunication capacity per capita doubled every 34 months; and the world's storage capacity per capita doubled every 40 months.[48] On the other hand, it has been argued that the global acceleration pattern having the 21st century singularity as its parameter should be characterized as hyperbolic rather than exponential.[49]

Ray Kurzweil假设了一个加速回报定律,其中技术变革的速度(更广泛地说,所有进化过程[46])急剧增长。从1986年到2007年,摩尔定律以与莫拉维克提案相同的方式呈指数增长,并包括材料技术(尤其是应用于纳米技术)、医疗技术和其他技术,计算机计算人均信息的特定应用能力大约每14个月翻一番;世界通用计算机的人均容量每18个月翻一番;全球人均电信容量每34个月翻一番;世界人均存储容量每40个月翻一番。[48] 另一方面,有人认为,以21世纪奇点为参数的全球加速度模式应该被描述为双曲而不是指数型[50]
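The doubling times quoted above can be converted into yearly growth factors to make them easier to compare. The sketch below is illustrative only: the function names are hypothetical, and it assumes smooth exponential growth with a constant doubling time over the whole period.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """Factor by which a quantity grows in 12 months, given its doubling time."""
    if doubling_months <= 0:
        raise ValueError("doubling time must be positive")
    return 2.0 ** (12.0 / doubling_months)


def growth_over_years(years: float, doubling_months: float) -> float:
    """Total growth factor over a span of years at a constant doubling time."""
    return annual_growth_factor(doubling_months) ** years


# Doubling times (in months) taken from the paragraph above.
doubling_times = {
    "application-specific computation": 14,
    "general-purpose computation": 18,
    "telecommunication": 34,
    "storage": 40,
}
for name, months in doubling_times.items():
    print(f"{name}: x{annual_growth_factor(months):.2f} per year")
```

For example, a 14-month doubling time sustained over the 21 years from 1986 to 2007 corresponds to 18 doublings, a factor of about 262,000, under the constant-rate assumption.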

Kurzweil reserves the term "singularity" for a rapid increase in artificial intelligence (as opposed to other technologies), writing for example that "The Singularity will allow us to transcend these limitations of our biological bodies and brains ... There will be no distinction, post-Singularity, between human and machine".[51] He also defines his predicted date of the singularity (2045) in terms of when he expects computer-based intelligences to significantly exceed the sum total of human brainpower, writing that advances in computing before that date "will not represent the Singularity" because they do "not yet correspond to a profound expansion of our intelligence."[52]

库兹韦尔将“奇点”一词用于描述人工智能(相对于其他技术)的快速增长,例如他写道: “奇点将允许我们超越生物体和大脑的局限……后奇点时代,人类与机器之间将不再有区别”。库兹韦尔相信奇点将在大约2045年之前出现,那时基于计算机的智能将明显超越人类脑力的总和,在这个日期之前计算机技术的进步“并不代表奇点”,因为它们“还不符合智慧的深刻扩展”。

Accelerating change加速变革

[[File:ParadigmShiftsFrr15Events.svg|thumb|According to Kurzweil, his logarithmic graph of 15 lists of paradigm shifts for key historic events shows an exponential trend]]

Some singularity proponents argue its inevitability through extrapolation of past trends, especially those pertaining to shortening gaps between improvements to technology. In one of the first uses of the term "singularity" in the context of technological progress, Stanislaw Ulam tells of a conversation with John von Neumann about accelerating change:

{{One conversation centered on the ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue.}}

一些奇点论的支持者认为,通过对过去趋势的推断,特别是那些缩减技术进步的差距有关的趋势,奇点是不可避免的。技术进步的背景下,较早使用“奇点”一词时,Stanislaw Ulam讲述了与冯·诺伊曼关于加速变革的一次谈话: 一次围绕不断加速的技术进步和生活方式变化的对话,它使得人类历史上出现了一些基本的奇点,超过了这些奇点,人类的事务,如我们所知,将无法继续下去。

Kurzweil claims that technological progress follows a pattern of exponential growth, following what he calls the "law of accelerating returns". Whenever technology approaches a barrier, Kurzweil writes, new technologies will surmount it. He predicts paradigm shifts will become increasingly common, leading to "technological change so rapid and profound it represents a rupture in the fabric of human history".[43] Kurzweil believes that the singularity will occur by approximately 2045.[38] His predictions differ from Vinge's in that he predicts a gradual ascent to the singularity, rather than Vinge's rapidly self-improving superhuman intelligence.

库兹韦尔声称,技术进步遵循[[指数增长]]的模式,遵循他所称的“加速返回定律law of accelerating returns”。库兹韦尔写道,每当一项技术遇到障碍时,新技术就会出来克服这个障碍。他预测范式转变将变得越来越普遍,导致“技术变革非常迅速和深刻,以至于它代表着人类历史结构的一个断裂”。库兹韦尔相信奇点将在2045年之前出现。他和Vinge预测的不同点在于他预测的是一个逐渐上升到奇点的过程,而Vinge预测了一个快速自我更新的超人类智能。

Oft-cited dangers include those commonly associated with molecular nanotechnology and genetic engineering. These threats are major issues for both singularity advocates and critics, and were the subject of Bill Joy's Wired magazine article "Why the future doesn't need us".

经常被引用的危险包括那些与分子纳米技术和基因工程有关的技术。这些威胁是奇点论的倡导者和批评者面临的主要议题,也是比尔 · 乔伊《连线 Wired》杂志上所发表文章《为什么未来不需要我们Why the future doesn't need us》的主题。

Algorithm improvements算法改进

Some intelligence technologies, like "seed AI",[53][54] may also have the potential to not just make themselves faster, but also more efficient, by modifying their source code. These improvements would make further improvements possible, which would make further improvements possible, and so on.

一些智能技术,比如“种子人工智能”,通过修改自己的源代码,可能使自己不仅更快,而且更高效。这些改进将使进一步的改进成为可能,以此类推。

The mechanism for a recursively self-improving set of algorithms differs from an increase in raw computation speed in two ways. First, it does not require external influence: machines designing faster hardware would still require humans to create the improved hardware, or to program factories appropriately.[citation needed] An AI rewriting its own source code could do so while contained in an AI box.

递归自我改进算法集的机制在两个方面不同于原始计算速度的提高。首先,它不需要外部影响:设计更快的硬件的机器仍然需要人类来创造改进的硬件,或者对工厂进行适当的编程。AI可以既身处一个AI盒 AI box里面,又同时改进自己的 源代码。

Second, as with Vernor Vinge’s conception of the singularity, it is much harder to predict the outcome. While speed increases seem to be only a quantitative difference from human intelligence, actual algorithm improvements would be qualitatively different. Eliezer Yudkowsky compares it to the changes that human intelligence brought: humans changed the world thousands of times more rapidly than evolution had done, and in totally different ways. Similarly, the evolution of life was a massive departure and acceleration from the previous geological rates of change, and improved intelligence could cause change to be as different again.[55]

第二,和Vernor Vinge关于奇点的概念一样,对结果的预测要困难得多。虽然速度的提高似乎与人类的智能只是数量上的区别,但实际的算法改进在质量上是不同的。Eliezer Yudkowsky将其与人类智能带来的变化相比较:人类改变世界的速度比进化速度快数千倍,而且方式完全不同。同样地,生命的进化与以前的地质变化又有着巨大的不同和加速,而智能的提高可能会使变化再次变得不同。[55]

There are substantial dangers associated with an intelligence explosion singularity originating from a recursively self-improving set of algorithms. First, the goal structure of the AI might not be invariant under self-improvement, potentially causing the AI to optimise for something other than what was originally intended.[56][57] Secondly, AIs could compete for the same scarce resources mankind uses to survive.[58][59]

由递归自我改进的算法集合引起的智能爆炸存在着巨大的危险。首先,人工智能的目标结构在自我完善的情况下可能不是一成不变的,这可能会导致人工智能对原本计划之外的东西进行优化。第二,人工智能可以与人类竞争赖以生存的稀缺资源。

While AIs would not necessarily be actively malicious, there is no reason to think that they would actively promote human goals unless they could be programmed to do so; if not, they might use the resources currently used to support humankind to promote their own goals, causing human extinction.[60][61][62]

虽然不是恶意的,但没有理由认为人工智能会积极促进人类目标的实现,除非这些目标可以被编程,否则,它们就可能利用目前用于支持人类的资源来促进自己的目标,从而导致人类灭绝。

Carl Shulman and Anders Sandberg suggest that algorithm improvements may be the limiting factor for a singularity; while hardware efficiency tends to improve at a steady pace, software innovations are more unpredictable and may be bottlenecked by serial, cumulative research. They suggest that in the case of a software-limited singularity, intelligence explosion would actually become more likely than with a hardware-limited singularity, because in the software-limited case, once human-level AI is developed, it could run serially on very fast hardware, and the abundance of cheap hardware would make AI research less constrained.[63] An abundance of accumulated hardware that can be unleashed once the software figures out how to use it has been called "computing overhang."[64]

Carl Shulman和Anders Sandberg认为,算法改进可能是奇点的限制因素;虽然硬件效率趋于稳步提高,但软件创新更不具可预测性,可能会受到连续、累积的研究的限制。他们认为,智能爆炸在受软件限制的奇点情况中发生的可能性实际上比在受硬件限制的奇点更可能发生,因为在软件受限的情况下,一旦开发出人类水平的人工智能,它可以在非常快的硬件上连续运行,廉价硬件的丰富将使人工智能研究不那么受限制。一旦软件知道如何使用硬件,大量的硬件就可以被释放出来,这被称为“计算过剩”。

Criticisms批评

Some critics, like philosopher Hubert Dreyfus, assert that computers or machines cannot achieve human intelligence, while others, like physicist Stephen Hawking, hold that the definition of intelligence is irrelevant if the net result is the same.[65]

一些批评者,如哲学家Hubert Dreyfus断言计算机或机器无法实现人类智能,而其他人,如物理学家史蒂芬·霍金,则认为如果最终结果是相同的,那么智力的定义其实无关紧要。

An early description of the idea appeared in John Wood Campbell Jr.'s 1932 short story "The Last Evolution".

早在1932年,约翰·W·坎贝尔的短篇小说《最后的进化》中就对这个想法进行了描述。

Psychologist Steven Pinker stated in 2008:

心理学家史蒂芬·平克在2008年指出:

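There is not the slightest reason to believe in a coming singularity. The fact that you can visualize a future in your imagination is not evidence that it is likely or even possible. Look at domed cities, jet-pack commuting, underwater cities, mile-high buildings, and nuclear-powered automobiles: all staples of futuristic fantasies when I was a child that have never arrived. Sheer processing power is not a pixie dust that magically solves all your problems.

没有一点理由相信奇点即将到来。你可以想象一个未来并不能证明它是可能出现的。看看穹顶城市、喷气式飞行器通勤、水下城市、一英里高的建筑和核动力汽车:这些都是我小时候未来主义幻想的主要内容,然而它们都没有成真。纯粹的计算能力不是能神奇地解决所有问题的仙尘。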

University of California, Berkeley, philosophy professor John Searle writes:

加州大学伯克利分校哲学教授约翰·塞尔写道:

[Computers] have, literally ..., no intelligence, no motivation, no autonomy, and no agency. We design them to behave as if they had certain sorts of psychology, but there is no psychological reality to the corresponding processes or behavior. ... [T]he machinery has no beliefs, desires, [or] motivations.[66]

毫不夸张地说,计算机没有智能,没有动机,没有自主,也没有智能体。我们设计他们,使他们的行为好像表示他们有某种心理,但其实没有对应这些过程或行为的心理现实……机器没有信仰、愿望或动机。

Martin Ford in The Lights in the Tunnel: Automation, Accelerating Technology and the Economy of the Future[67] postulates a "technology paradox" in that before the singularity could occur most routine jobs in the economy would be automated, since this would require a level of technology inferior to that of the singularity. This would cause massive unemployment and plummeting consumer demand, which in turn would destroy the incentive to invest in the technologies that would be required to bring about the Singularity. Job displacement is increasingly no longer limited to work traditionally considered to be "routine."[68]

Martin Ford在“隧道中的灯光:自动化、加速技术和未来经济 The Lights in the Tunnel: Automation, Accelerating Technology and the Economy of the Future”[67]中提出了一个“技术悖论”:在奇点出现之前,经济体中的大多数日常工作都将自动化,因为这所需的技术水平低于奇点。这将导致大规模的失业和消费者需求的骤降,这反过来又会破坏投资于实现奇点所需技术的动机。工作的替代越来越不再局限于那些传统上被认为是“例行公事”的工作。[68]

Theodore Modis[69][70] and Jonathan Huebner[71] argue that the rate of technological innovation has not only ceased to rise, but is actually now declining. Evidence for this decline is that the rise in computer clock rates is slowing, even while Moore's prediction of exponentially increasing circuit density continues to hold. This is due to excessive heat build-up from the chip, which cannot be dissipated quickly enough to prevent the chip from melting when operating at higher speeds. Advances in speed may be possible in the future by virtue of more power-efficient CPU designs and multi-cell processors.[72] While Kurzweil used Modis' resources, and Modis' work was around accelerating change, Modis distanced himself from Kurzweil's thesis of a "technological singularity", claiming that it lacks scientific rigor.[70]

Theodore Modis[69][70]和Jonathan Huebner[71] 认为技术创新的速度不仅停止上升,而且现在实际上正在下降。这种下降的证据是计算机时钟速率的增长正在放缓,尽管摩尔关于电路密度指数增长的预测仍然成立。这是由于芯片产生过多的热量,当它们以较高的速度运行时,这些热量不能足够快地散去,可能导致芯片熔化。在未来,随着更节能的CPU设计和多单元处理器的发明,速度的提高可能实现。[72]尽管 Kurzweil 利用了Modis的(工作成果)作为资源,同时 Modis 的工作围绕着加速变革,但 Modis 与 Kurzweil 的“技术奇点”理论保持距离,称其缺乏科学严谨性。[70]

In a detailed empirical accounting, The Progress of Computing, William Nordhaus argued that, prior to 1940, computers followed the much slower growth of a traditional industrial economy, thus rejecting extrapolations of Moore's law to 19th-century computers.[73]

在一份详细的实证报告《计算的进步 The Progress of Computing》中,威廉·诺德豪斯 William Nordhaus 认为,在 1940 年之前,计算机遵循传统工业经济增长缓慢的趋势,因此拒绝了摩尔定律对19世纪计算机的推断。[74]

In a 2007 paper, Schmidhuber stated that the frequency of subjectively "notable events" appears to be approaching a 21st-century singularity, but cautioned readers to take such plots of subjective events with a grain of salt: perhaps differences in memory of recent and distant events could create an illusion of accelerating change where none exists.[75]

在2007年的一篇论文中,Schmidhuber指出主观上“值得注意的事件”出现的频率似乎正在接近21世纪的奇点,但他提醒读者,对这些主观事件的情节要持保留态度:也许对近期和远期事件的记忆差异,可能会造成一种在根本不存在的情况下变化加速的错觉。[76]

Paul Allen argued for the opposite of accelerating returns, the complexity brake: the more progress science makes towards understanding intelligence, the more difficult it becomes to make additional progress. A study of the number of patents shows that human creativity does not show accelerating returns but in fact follows, as suggested by Joseph Tainter in his The Collapse of Complex Societies, a law of diminishing returns. The number of patents per thousand people peaked in the period from 1850 to 1900, and has been declining since.[60] The growth of complexity eventually becomes self-limiting, and leads to a widespread "general systems collapse".

保罗·艾伦 Paul Allen 认为,与加速回报相反的是复杂性制动;科学在理解智力方面取得的进展越多,就越难取得更多的进展。一项对专利数量的研究表明,人类的创造力并没有表现出加速的回报。事实上,正如Joseph Tainter 在他的《复杂社会的崩溃 The Collapse of Complex Societies 》中所指出的那样,存在一个收益递减定律 a law of diminishing returns 的限制。每千人的专利的数量在1850年至1900年期间达到顶峰,此后一直在下降。复杂性的增长最终会自我限制,并导致广泛的“一般系统崩溃 general systems collapse”。

Jaron Lanier refutes the idea that the Singularity is inevitable. He states: "I do not think the technology is creating itself. It's not an autonomous process."[77] He goes on to assert: "The reason to believe in human agency over technological determinism is that you can then have an economy where people earn their own way and invent their own lives. If you structure a society on not emphasizing individual human agency, it's the same thing operationally as denying people clout, dignity, and self-determination ... to embrace [the idea of the Singularity] would be a celebration of bad data and bad politics."[77]

Jaron Lanier驳斥了奇点不可避免的观点。他说:“我不认为这项技术是在创造自我。这不是一个自主的过程。”[77]他接着断言:“相信人的能动性而不是技术决定论的原因是,这样你就可以有一个经济体,人们在其中可以自己挣钱,创造自己的生活。如果你在不强调个人主观能动性的基础上构建社会,就等于在操作上否认人们的影响力、尊严和自决...接受 [奇点的想法] 将是对糟糕数据和糟糕政治的颂扬。[77]

Economist Robert J. Gordon, in The Rise and Fall of American Growth: The U.S. Standard of Living Since the Civil War (2016), points out that measured economic growth has slowed around 1970 and slowed even further since the financial crisis of 2007–2008, and argues that the economic data show no trace of a coming Singularity as imagined by mathematician I.J. Good.[78]

经济学家 Robert J.Gordon在《美国经济增长的兴衰:内战以来的美国生活水平 The Rise and Fall of American Growth: The U.S. Standard of Living Since the Civil War》(2016)中指出,据测量,经济增长在1970年左右放缓,自2007-2008年金融危机以来甚至进一步放缓,并认为经济数据显示没有迹象表明数学家I.J.Good所设想的奇点将会到来。[79]

In addition to general criticisms of the singularity concept, several critics have raised issues with Kurzweil's iconic chart. One line of criticism is that a log-log chart of this nature is inherently biased toward a straight-line result. Others identify selection bias in the points that Kurzweil chooses to use. For example, biologist PZ Myers points out that many of the early evolutionary "events" were picked arbitrarily.[80] Kurzweil has rebutted this by charting evolutionary events from 15 neutral sources, and showing that they fit a straight line on a log-log chart. The Economist mocked the concept with a graph extrapolating that the number of blades on a razor, which has increased over the years from one to as many as five, will increase ever-faster to infinity.[81]

除了对奇点概念的一般性批评外,一些批评者还对库兹韦尔的标志性图表提出了质疑。一种批评是,这种性质的对数图像本质上就会存在倾向于直线的有偏差结果。其他人批评库兹韦尔在数据点的使用上存在选择偏差。[80]例如,生物学家P. Z. Myers指出,许多早期的进化“事件”都是随意挑选的。库兹韦尔反驳了这一点,他绘制了15个中立来源的进化事件图,并表明它们都符合一条直线.《经济学人》用一张图表来嘲讽这个概念:一把剃须刀上的刀片数在过去几年里从一个增加到多达五个,并且它将以更快的速度增长到无穷大。[81]

Potential impacts潜在影响

Dramatic changes in the rate of economic growth have occurred in the past because of some technological advancement. Based on population growth, the economy doubled every 250,000 years from the Paleolithic era until the Neolithic Revolution. The new agricultural economy doubled every 900 years, a remarkable increase. In the current era, beginning with the Industrial Revolution, the world's economic output doubles every fifteen years, sixty times faster than during the agricultural era. If the rise of superhuman intelligence causes a similar revolution, argues Robin Hanson, one would expect the economy to double at least quarterly and possibly on a weekly basis.[82]

过去由于一些技术进步,经济增长率发生了巨大变化。以人口增长为基础,从旧石器时代到新石器时代,经济每25万年翻一番。新农业经济每900年翻一番,增长显著。在当今时代,从工业革命开始,世界经济产出每15年翻一番,比农业时代快60倍。罗宾·汉森Robin Hanson认为,如果超人智能的兴起引发了类似的革命,人们会预期经济至少每季度翻一番,甚至可能每周翻一番。
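The arithmetic behind these figures can be checked with a short sketch. The doubling times are the ones quoted above; the function names and the step of applying the same 60-fold speed-up once more are illustrative assumptions, not Hanson's own model.

```python
# Doubling times of economic output quoted in the text, in years.
HUNTER_GATHERER_YEARS = 250_000
AGRICULTURAL_YEARS = 900
INDUSTRIAL_YEARS = 15


def speedup(old_doubling_years: float, new_doubling_years: float) -> float:
    """How many times faster the economy doubles after a transition."""
    return old_doubling_years / new_doubling_years


def annual_growth_rate(doubling_years: float) -> float:
    """Constant yearly growth rate implied by a given doubling time."""
    return 2 ** (1 / doubling_years) - 1


farming_speedup = speedup(HUNTER_GATHERER_YEARS, AGRICULTURAL_YEARS)
industry_speedup = speedup(AGRICULTURAL_YEARS, INDUSTRIAL_YEARS)

# Assumption for illustration: the next transition repeats the
# agricultural-to-industrial speed-up exactly once more.
next_doubling_years = INDUSTRIAL_YEARS / industry_speedup

print(f"agricultural speed-up: {farming_speedup:.0f}x")
print(f"industrial speed-up:   {industry_speedup:.0f}x")
print(f"industrial growth:     {annual_growth_rate(INDUSTRIAL_YEARS):.1%} per year")
print(f"next doubling time:    {next_doubling_years} years")
```

Under that assumption the next doubling time is a quarter of a year, which matches the "at least quarterly" figure. A weekly doubling would need a speed-up of roughly 780 times over the industrial era.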

Uncertainty and risk不确定性和风险

The term "technological singularity" reflects the idea that such change may happen suddenly, and that it is difficult to predict how the resulting new world would operate.[83][84] It is unclear whether an intelligence explosion resulting in a singularity would be beneficial or harmful, or even an existential threat.[85][86] Because AI is a major factor in singularity risk, a number of organizations pursue a technical theory of aligning AI goal-systems with human values, including the Future of Humanity Institute, the Machine Intelligence Research Institute,[83] the Center for Human-Compatible Artificial Intelligence, and the Future of Life Institute.

“技术奇点”一词反映了这样一种想法[83][84] :这种变化可能突然发生,而且很难预测由此产生的新世界将如何运作。目前尚不清楚导致奇点的智能爆炸是有益还是有害,甚至是一种存在威胁。[85][86] 由于人工智能是奇点风险的一个主要因素,许多组织追求一种将人工智能的目标系统与人类价值观相协调的技术理论。这些组织包括人类未来研究所 Future of Humanity Institute,机器智能研究所 The Machine Intelligence Research Institute, 人类兼容人工智能中心 [83]The Center for Human-Compatible Artificial Intelligence和未来生命研究所 The Future of Life Institute。

Physicist Stephen Hawking said in 2014 that "Success in creating AI would be the biggest event in human history. Unfortunately, it might also be the last, unless we learn how to avoid the risks."[87] Hawking believed that in the coming decades, AI could offer "incalculable benefits and risks" such as "technology outsmarting financial markets, out-inventing human researchers, out-manipulating human leaders, and developing weapons we cannot even understand."[87] Hawking suggested that artificial intelligence should be taken more seriously and that more should be done to prepare for the singularity:[87]

物理学家史蒂芬·霍金在2014年表示,“成功创造人工智能将是人类历史上最大的事件。不幸的是,这也可能是最后一次,除非我们学会如何规避风险。”[87] 霍金认为,在未来几十年里,人工智能可能会带来“无法估量的利益和风险”,例如“技术超越金融市场的聪明程度,超越人类研究人员的创造力,超越人类领袖的操控力,开发我们甚至无法理解的武器”。霍金建议[87] ,人们应该更认真地对待人工智能,并做更多的工作来为奇点做准备:[87]

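So, facing possible futures of incalculable benefits and risks, the experts are surely doing everything possible to ensure the best outcome, right? Wrong. If a superior alien civilisation sent us a message saying, "We'll arrive in a few decades," would we just reply, "OK, call us when you get here; we'll leave the lights on"? Probably not, but this is more or less what is happening with AI.

所以,面对可能的收益和风险难以估量的未来,专家们肯定会尽一切可能确保最好的结果,对吗?错了。如果一个高级的外星文明给我们发了一条信息说,“我们几十年后就会到达”,我们会不会只回答,“好吧,你到了这里就打电话给我们——我们会开着灯的”?可能不会——但这或多或少就是人工智能正在发生的事情。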

Berglas (2008) claims that there is no direct evolutionary motivation for an AI to be friendly to humans. Evolution has no inherent tendency to produce outcomes valued by humans, and there is little reason to expect an arbitrary optimisation process to promote an outcome desired by mankind, rather than inadvertently leading to an AI behaving in a way not intended by its creators.[88][89][90] Anders Sandberg has also elaborated on this scenario, addressing various common counter-arguments.[91] AI researcher Hugo de Garis suggests that artificial intelligences may simply eliminate the human race for access to scarce resources,[58][92] and humans would be powerless to stop them.[93] Alternatively, AIs developed under evolutionary pressure to promote their own survival could outcompete humanity.[62]

Berglas(2008) 声称, 没有直接的进化动机促使人工智能对人类友好。进化并不倾向于产生人类所重视的结果,也没有理由期望一个任意的优化过程会促进人类所期望的结果,而不是无意中导致人工智能以违背其创造者原有意图的方式行事。[88][89][90] 安德斯·桑德伯格 Anders Sandberg 也对这一情景进行了详细阐述,讨论了各种常见的反驳意见[91] 。人工智能研究员 Hugo de Garis[58][92] 认为,人工智能可能会为了获取稀缺资源而直接消灭人类,并且人类将无力阻止它们。[93] 或者,在进化压力下为了促进自身生存而发展起来的人工智能可能会胜过人类。[62]

Bostrom (2002) discusses human extinction scenarios, and lists superintelligence as a possible cause:

Bostrom(2002)讨论了人类灭绝的场景,并列举超级智能作为一个可能的原因:

When we create the first superintelligent entity, we might make a mistake and give it goals that lead it to annihilate humankind, assuming its enormous intellectual advantage gives it the power to do so. For example, we could mistakenly elevate a subgoal to the status of a supergoal. We tell it to solve a mathematical problem, and it complies by turning all the matter in the solar system into a giant calculating device, in the process killing the person who asked the question.

当我们创造出第一个超级智能实体时,我们可能会犯错并给它一个导致人类毁灭的目标(假设它巨大的智力优势赋予它这样做的力量)。例如,我们可能会错误地将子目标提升为超级目标。我们告诉它去解决一个数学问题,然后它将太阳系中的所有物质变成一个巨大的计算装置,在这个过程中杀死了提出这个问题的人。

According to Eliezer Yudkowsky, a significant problem in AI safety is that unfriendly artificial intelligence is likely to be much easier to create than friendly AI. While both require large advances in recursive optimisation process design, friendly AI also requires the ability to make goal structures invariant under self-improvement (or the AI could transform itself into something unfriendly) and a goal structure that aligns with human values and does not automatically destroy the human race. An unfriendly AI, on the other hand, can optimize for an arbitrary goal structure, which does not need to be invariant under self-modification.[94] Bill Hibbard (2014) proposes an AI design that avoids several dangers including self-delusion,[95] unintended instrumental actions,[56][96] and corruption of the reward generator.[96] He also discusses social impacts of AI[97] and testing AI.[98] His 2001 book Super-Intelligent Machines advocates the need for public education about AI and public control over AI. It also proposed a simple design that was vulnerable to corruption of the reward generator.

按照Eliezer Yudkowsky的观点,人工智能安全的一个重要问题是,不友好的人工智能可能比友好的人工智能更容易创建。虽然两者都需要递归优化过程的进步,但友好的人工智能还需要目标结构在自我改进过程中保持不变(否则人工智能可以将自己转变成不友好的东西),以及一个与人类价值观相一致且不会自动毁灭人类的目标结构。另一方面,一个不友好的人工智能可以针对任意的目标结构进行优化,而目标结构不需要在自我改进过程中保持不变。Bill Hibbard (2014)提出了一种人工智能设计,可以避免包括自欺欺人、无意的工具性行为和奖励机制的腐败等一些危险。他还讨论了人工智能和人工智能测试的社会影响。他在2001年出版的“超级智能机器Super-Intelligent Machines”一书中提倡对人工智能的公共教育和公众控制。该书还提出了一个简单的易受奖励机制的腐败影响的设计。

Next step of sociobiological evolution社会生物进化的下一步

Further information: Sociocultural evolution

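[Figure: Schematic timeline of information and replicators in the biosphere: Gillings et al.'s "major evolutionary transitions" in information processing.[99]]

[Figure: Amount of digital information worldwide (5×10²¹ bytes) versus human genome information worldwide (10¹⁹ bytes) in 2014.[99]]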

While the technological singularity is usually seen as a sudden event, some scholars argue the current speed of change already fits this description.[citation needed]

虽然技术奇点通常被视为一个突发事件,但一些学者认为目前的变化速度已经符合这种描述。

In addition, some argue that we are already in the midst of a major evolutionary transition that merges technology, biology, and society. Digital technology has infiltrated the fabric of human society to a degree of indisputable and often life-sustaining dependence.

此外,有人认为,我们已经处在一个融合了技术、生物学和社会学的进化巨变major evolutionary transition之中。数字技术已经无可争辩地渗透到人类社会的结构中,而且生命的维持常常依赖数字技术。

A 2016 article in Trends in Ecology & Evolution argues that "humans already embrace fusions of biology and technology. We spend most of our waking time communicating through digitally mediated channels... we trust artificial intelligence with our lives through antilock braking in cars and autopilots in planes... With one in three marriages in America beginning online, digital algorithms are also taking a role in human pair bonding and reproduction".

2016年发表在 生态学和进化进展 Trends in Ecology and Evolution 的一篇文章认为,“人类已经接受了生物和技术的融合。我们清醒时大部分时间都是通过数字媒介进行交流的……我们拿性命做担保信任汽车上的防抱死制动系统 Anti-lock braking system 和飞机上的自动巡航模式 autopilot……在美国,三分之一的婚姻都是在网络上开始的,数字算法也在人类配对和繁殖中也发挥了作用”。

The article further argues that, from the perspective of evolution, several previous Major Transitions in Evolution have transformed life through innovations in information storage and replication (RNA, DNA, multicellularity, and culture and language). In the current stage of life's evolution, the carbon-based biosphere has generated a cognitive system (humans) capable of creating technology that will result in a comparable evolutionary transition.

文章进一步指出,从进化的角度来看,以前的几次进化巨变通过信息存储和复制方式(如RNA、DNA、多细胞性、文化和语言的出现)的创新来改变生命。在生命进化的当前阶段,以碳为基础的生物圈产生了一个认知系统(人类),能够创造技术,从而导致一个类似的进化变迁。

The digital information created by humans has reached a similar magnitude to biological information in the biosphere. Since the 1980s, the quantity of digital information stored has doubled about every 2.5 years, reaching about 5 zettabytes in 2014 (5×10²¹ bytes).[citation needed]

人类创造的数字信息已经达到了与生物圈中生物信息相似的规模。自20世纪80年代以来,存储的数字信息量大约每2.5年翻一番,2014年达到约5泽字节(5e21字节)。[citation needed]

In biological terms, there are 7.2 billion humans on the planet, each having a genome of 6.2 billion nucleotides. Since one byte can encode four nucleotide pairs, the individual genomes of every human on the planet could be encoded by approximately 1×10¹⁹ bytes. The digital realm stored 500 times more information than this in 2014 (see figure). The total amount of DNA contained in all of the cells on Earth is estimated to be about 5.3×10³⁷ base pairs, equivalent to 1.325×10³⁷ bytes of information.

在生物学方面,地球上有72亿人,每个人的基因组有62亿个核苷酸。由于一个字节可以编码四个核苷酸对,地球上每个人类的个体基因组可以编码大约1×10¹⁹字节。2014年,数字领域存储的信息是这个数字的500倍(见图)。据估计,地球上所有细胞所含的DNA总量约为5.3×10³⁷碱基对,相当于1.325×10³⁷字节的信息。
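The byte counts in this paragraph follow from simple division, sketched below. All input figures come from the text; the variable names are illustrative, and the division by four follows the text's statement that one byte encodes four nucleotide pairs (two bits each).

```python
HUMANS = 7.2e9                   # people on the planet
NUCLEOTIDES_PER_GENOME = 6.2e9   # nucleotides per human genome
UNITS_PER_BYTE = 4               # 8 bits / 2 bits per nucleotide pair

# All human genomes together, in bytes.
human_genome_bytes = HUMANS * NUCLEOTIDES_PER_GENOME / UNITS_PER_BYTE

# Digital information stored worldwide in 2014 (5 zettabytes).
digital_bytes_2014 = 5e21

# All DNA in all cells on Earth, converted from base pairs to bytes.
biosphere_base_pairs = 5.3e37
biosphere_bytes = biosphere_base_pairs / UNITS_PER_BYTE

print(f"human genomes: {human_genome_bytes:.3e} bytes")
print(f"digital/genome ratio: {digital_bytes_2014 / human_genome_bytes:.0f}")
print(f"biosphere DNA: {biosphere_bytes:.3e} bytes")
```

The unrounded genome total is about 1.1×10¹⁹ bytes, which gives a ratio of roughly 450. The "500 times" figure in the text corresponds to rounding the genome total to 1×10¹⁹ bytes first.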

If growth in digital storage continues at its current compound annual rate of 30–38%,[48] it will rival the total information content contained in all of the DNA in all of the cells on Earth in about 110 years. This would represent a doubling of the amount of information stored in the biosphere over a total period of just 150 years.[99]

如果数字存储以目前每年30-38%的复合年增长率继续增长,它将在大约110年内与地球上所有细胞中的所有DNA所包含的信息总量相抗衡。这将意味着在仅仅150年的时间里,生物圈中储存的信息量翻了一番。
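The "about 110 years" estimate can be reproduced from the figures given above with the standard compound-growth formula. The sketch below is an illustration under the stated growth rates, not the article authors' own calculation, and the function names are hypothetical.

```python
import math


def years_to_reach(start: float, target: float, annual_growth: float) -> float:
    """Years for `start` to grow to `target` at a constant compound annual rate."""
    return math.log(target / start) / math.log(1 + annual_growth)


def annual_growth_from_doubling(doubling_years: float) -> float:
    """Compound annual growth rate implied by a given doubling time."""
    return 2 ** (1 / doubling_years) - 1


DIGITAL_BYTES_2014 = 5e21     # digital storage in 2014
BIOSPHERE_BYTES = 1.325e37    # all DNA in all cells on Earth

fast = years_to_reach(DIGITAL_BYTES_2014, BIOSPHERE_BYTES, 0.38)
slow = years_to_reach(DIGITAL_BYTES_2014, BIOSPHERE_BYTES, 0.30)

print(f"at 38% per year: {fast:.0f} years")
print(f"at 30% per year: {slow:.0f} years")
print(f"rate implied by 2.5-year doubling: {annual_growth_from_doubling(2.5):.1%}")
```

The 110-year figure corresponds to the upper end of the quoted range (38% per year); at 30% the same calculation gives roughly 135 years. A doubling time of 2.5 years implies about 32% per year, which falls inside the quoted 30–38% range.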

Implications for human society对人类社会的影响

Further information: Artificial intelligence in fiction

In February 2009, under the auspices of the Association for the Advancement of Artificial Intelligence (AAAI), Eric Horvitz chaired a meeting of leading computer scientists, artificial intelligence researchers and roboticists at Asilomar in Pacific Grove, California. The goal was to discuss the potential impact of the hypothetical possibility that robots could become self-sufficient and able to make their own decisions. They discussed the extent to which computers and robots might be able to acquire autonomy, and to what degree they could use such abilities to pose threats or hazards.[100]

2009年2月,在人工智能促进协会Association for the Advancement of Artificial Intelligence(AAAI) 的主持下,Eric Horvitz在加利福尼亚州Pacific Grove 的 Asilomar 主持了一次由主要计算机科学家、人工智能研究人员和机器人学家参加的会议。其目的是讨论机器人能够自给自足并能够自己做决定的假设可能性的潜在影响。他们讨论了计算机和机器人能够在多大程度上获得自主性 autonomy,以及在多大程度上可以利用这些能力对人类构成威胁或危险。[100]

Some machines are programmed with various forms of semi-autonomy, including the ability to locate their own power sources and choose targets to attack with weapons. Also, some computer viruses can evade elimination and, according to scientists in attendance, could therefore be said to have reached a "cockroach" stage of machine intelligence. The conference attendees noted that self-awareness as depicted in science fiction is unlikely, but that other potential hazards and pitfalls exist.[100]

有些机器被编程成各种形式的半自主 semi-autonomy,包括定位自己的电源和选择武器攻击的目标等。此外,有些计算机病毒可以避免被消除,根据与会科学家的说法,可以说已经达到了机器智能的“蟑螂”阶段。与会者指出,科幻小说中描述的自我意识可能不太可能,但也存在其他潜在的危险和陷阱。[100]

Frank S. Robinson predicts that once humans achieve a machine with the intelligence of a human, scientific and technological problems will be tackled and solved with brainpower far superior to that of humans. He notes that artificial systems are able to share data more directly than humans, and predicts that this would result in a global network of super-intelligence that would dwarf human capability.[101] Robinson also discusses how vastly different the future would potentially look after such an intelligence explosion. One example of this is solar energy, where the Earth receives vastly more solar energy than humanity captures, so capturing more of that solar energy would hold vast promise for civilizational growth.

Frank S. Robinson 预言,一旦人类实现了具有人类智能的机器,科学技术问题将被远远优于人类的智力来解决。他指出,人工系统能够比人类更直接地共享数据,并预测这将导致一个全球的超级智能网络,使人类的能力相形见绌。Robinson还讨论了在这样一次智能爆炸之后,未来可能会有多大的不同。其中一个例子就是太阳能,地球接收到的太阳能远远多于人类捕获的太阳能,因此捕捉更多的太阳能将为文明发展带来巨大的希望。

Hard vs. soft takeoff硬起飞与软起飞

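[Figure: In this sample recursive self-improvement scenario, humans modifying an AI's architecture would be able to double its performance every three years through, for example, 30 generations before exhausting all feasible improvements (left). If instead the AI is smart enough to modify its own architecture as well as human researchers can, the time it requires to complete a redesign halves with each generation, and it progresses through all 30 feasible generations in six years (right).[102]]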
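The two timelines in the figure caption can be compared with a short sketch. It assumes that the first self-directed redesign also takes three years and that each later one takes half as long as the one before; the function names are illustrative.

```python
def human_driven_years(generations: int = 30, years_per_generation: float = 3.0) -> float:
    """Total time when every redesign takes the same fixed time."""
    return generations * years_per_generation


def self_improving_years(generations: int = 30, first_generation_years: float = 3.0) -> float:
    """Total time when each redesign takes half as long as the previous one."""
    total = 0.0
    step = first_generation_years
    for _ in range(generations):
        total += step
        step /= 2  # the improved system redesigns itself twice as fast
    return total


print(f"human-driven:   {human_driven_years():.0f} years")
print(f"self-improving: {self_improving_years():.2f} years")
print(f"performance gain after 30 doublings: {2 ** 30:,}x")
```

With fixed three-year generations the 30 redesigns take 90 years. With halving redesign times the total is a geometric series that stays just under six years, however many generations are added.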

In a hard takeoff scenario, an AGI rapidly self-improves, "taking control" of the world (perhaps in a matter of hours), too quickly for significant human-initiated error correction or for a gradual tuning of the AGI's goals. In a soft takeoff scenario, AGI still becomes far more powerful than humanity, but at a human-like pace (perhaps on the order of decades), on a timescale where ongoing human interaction and correction can effectively steer the AGI's development.[103][104]

在硬起飞的情况下,通用人工智能 AGI 迅速自我完善并“掌控”世界(也许在几个小时内)。速度太快,以致于无法进行重大的人为纠错或对 AGI 的目标进行逐步调整。在软起飞的情况下,虽然 AGI 仍然比人类强大得多,但却以一种类似人类的速度进步(也许是几十年的数量级),持续的人类互动和修正可以有效地引导AGI 的发展。[105][106]

Ramez Naam argues against a hard takeoff. He has pointed out that we already see recursive self-improvement by superintelligences, such as corporations. Intel, for example, has "the collective brainpower of tens of thousands of humans and probably millions of CPU cores to... design better CPUs!" However, this has not led to a hard takeoff; rather, it has led to a soft takeoff in the form of Moore's law.[107] Naam further points out that the computational complexity of higher intelligence may be much greater than linear, such that "creating a mind of intelligence 2 is probably more than twice as hard as creating a mind of intelligence 1."[108]

Ramez Naam反对硬起飞。他指出,我们已经看到企业等超级智能体的递归式地自我完善。例如,Intel拥有“数万人的集体脑力,可能还有数百万个CPU核心……来设计更好的CPU!”然而,这并没有导致硬起飞;相反,它以摩尔定律的形式实现了软起飞[107] 。Naam进一步指出,高等智能的计算复杂度可能比线性大得多,因此,“创建智能系统2的难度可能两倍于是创建智能系统1的两倍多。”[108]

J. Storrs Hall believes that "many of the more commonly seen scenarios for overnight hard takeoff are circular – they seem to assume hyperhuman capabilities at the starting point of the self-improvement process" in order for an AI to be able to make the dramatic, domain-general improvements required for takeoff. Hall suggests that rather than recursively self-improving its hardware, software, and infrastructure all on its own, a fledgling AI would be better off specializing in one area where it was most effective and then buying the remaining components on the marketplace, because the quality of products on the marketplace continually improves, and the AI would have a hard time keeping up with the cutting-edge technology used by the rest of the world.[109]

J.Storrs Hall认为,“许多常见的一夜之间出现的硬起飞场景都是循环论证——它们似乎在自我提升过程的起点上假设了超人类的能力”,以便人工智能能够实现起飞所需的戏剧性的、领域通用的改进。Hall认为,一个初出茅庐的人工智能与其靠自己不断地自我改进硬件、软件和基础设施,不如专注于一个它最有效的领域,然后在市场上购买剩余的组件,因为市场上产品的质量不断提高,人工智能将很难跟上世界其他地方使用的尖端技术。[109]

Ben Goertzel agrees with Hall's suggestion that a new human-level AI would do well to use its intelligence to accumulate wealth. The AI's talents might inspire companies and governments to disperse its software throughout society. Goertzel is skeptical of a hard five-minute takeoff but speculates that a takeoff from human to superhuman level on the order of five years is reasonable. Goertzel refers to this scenario as a "semihard takeoff".[110]

Ben Goertzel 同意 Hall 的意见,即一种新的人类级别的人工智能将很好地利用其智能来积累财富。人工智能的天赋可能会激励公司和政府将其软件分散到整个社会。Goertzel 对5分钟的硬起飞持怀疑态度,但他推测从人类到超人的水平,以5年的速度起飞是合理的。Goerzel将这种情况称为“半硬起飞semihard takeoff”。[110]

Max More disagrees, arguing that if there were only a few superfast human-level AIs, they would not radically change the world, as they would still depend on other people to get things done and would still have human cognitive constraints. Even if all superfast AIs worked on intelligence augmentation, it is unclear why they would do better in a discontinuous way than existing human cognitive scientists at producing super-human intelligence, although the rate of progress would increase. More further argues that a superintelligence would not transform the world overnight: a superintelligence would need to engage with existing, slow human systems to accomplish physical impacts on the world. "The need for collaboration, for organization, and for putting ideas into physical changes will ensure that all the old rules are not thrown out overnight or even within years."[111]

Max More不同意这一观点,他认为,如果只有少数超高速的人类水平的人工智能,它们不会从根本上改变世界,因为它们仍将依赖人来完成任务,并且仍然会受到人类认知的限制。即使所有的超高速人工智能都致力于智能增强,但目前还不清楚为什么它们在产生超人类智能方面比现有的人类认知科学家做得更好,尽管进展速度会加快。更进一步指出,超级智能不会在一夜之间改变世界:超级智能需要与现有的、缓慢的人类系统进行接触,以完成对世界的物理影响。”合作、组织和将想法付诸实际变革的需要将确保所有旧规则不会在一夜之间甚至几年内被抛弃。”[111]

Immortality 永生

In his 2005 book, The Singularity is Near, Kurzweil suggests that medical advances would allow people to protect their bodies from the effects of aging, making the life expectancy limitless. Kurzweil argues that the technological advances in medicine would allow us to continuously repair and replace defective components in our bodies, prolonging life to an undetermined age.[112] Kurzweil further buttresses his argument by discussing current bio-engineering advances. Kurzweil suggests somatic gene therapy; after synthetic viruses with specific genetic information, the next step would be to apply this technology to gene therapy, replacing human DNA with synthesized genes.[113]

在Kurzweil 2005年出版的《奇点近了The Singularity is Near》一书中,他指出,医学的进步将使人们能够保护自己的身体免受衰老的影响,从而延长寿命。Kurzweil认为,医学的技术进步将使我们能够不断地修复和更换我们身体中有缺陷的部件,从而将寿命延长到某个他无法确定的年龄。Kurzweil通过讨论当前的生物工程进展进一步支持了他的论点。Kurzweil建议了体细胞基因疗法somatic gene therapy;在合成具有特定遗传信息的病毒之后,下一步则是把这项技术应用到基因治疗中,用合成的基因取代人类的DNA。

K. Eric Drexler, one of the founders of nanotechnology, postulated cell repair devices, including ones operating within cells and utilizing as yet hypothetical biological machines, in his 1986 book Engines of Creation.

K.Eric Drexler,纳米技术nanotechnology的创始人之一,在他1986年的著作“创造的引擎Engines of Creation”中,假设了细胞修复设备,包括在细胞内运行并利用目前假设的生物机器的设备。

According to Richard Feynman, it was his former graduate student and collaborator Albert Hibbs who originally suggested to him (circa 1959) the idea of a medical use for Feynman's theoretical micromachines. Hibbs suggested that certain repair machines might one day be reduced in size to the point that it would, in theory, be possible to (as Feynman put it) "swallow the doctor". The idea was incorporated into Feynman's 1959 essay There's Plenty of Room at the Bottom.[114]

根据Richard Feynman,他过去的研究生和合作者Albert Hibbs最初向他建议(大约在1959年)费曼理论微型机械Feynman's theoretical micromachines的“医学”用途。Hibbs认为,有一天,理论上某些修理机器的尺寸可能会被尽可能地缩小(正如费曼所说的那样)“吞下医生 swallow the doctor”。这个想法被收入了费曼1959年的文章“在底部有很多空间 There's Plenty of Room at the Bottom”。

Beyond merely extending the operational life of the physical body, Jaron Lanier argues for a form of immortality called "Digital Ascension" that involves "people dying in the flesh and being uploaded into a computer and remaining conscious".[115]

除了仅仅延长物质身体的运行寿命之外,Jaron Lanier还主张一种称为“数字提升Digital Ascension”的不朽形式,即“人在肉体层面死亡,意识被上传到电脑里并保持清醒”。

History of the concept概念史

A paper by Mahendra Prasad, published in AI Magazine, asserts that the 18th-century mathematician Marquis de Condorcet was the first person to hypothesize and mathematically model an intelligence explosion and its effects on humanity.[116]

Mahendra Prasad在“人工智能杂志”上发表的一篇论文断言,18世纪的数学家Marquis de Condorcet是第一个对智能爆炸及其对人类影响进行假设和数学建模的人。

An early description of the idea appeared in John Wood Campbell Jr.'s 1932 short story "The Last Evolution".

1932年约翰·伍德·坎贝尔的短篇小说《最后的进化》(The Last Evolution)对这一想法作了早期的描述。

In his 1958 obituary for John von Neumann, Ulam recalled a conversation with von Neumann about the "ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue."[7]

Ulam在1958年为约翰·冯·诺依曼写的讣告中,回忆了与冯·诺依曼的一次对话:“技术的不断进步和人类生活方式的变化,这使我们似乎接近了种族历史上某些基本的奇点,超出了这些奇点,人类的事务就不能继续下去了。”

In 1965, Good wrote his essay postulating an "intelligence explosion" of recursive self-improvement of a machine intelligence.

1965年,Good写了一篇文章,提出机器智能的递归自我改进将引发“智能爆炸”。

In 1981, Stanisław Lem published his science fiction novel Golem XIV. It describes a military AI computer (Golem XIV) that gains consciousness and starts to increase its own intelligence, moving towards a personal technological singularity. Golem XIV was originally created to aid its builders in fighting wars, but as its intelligence advances to a much higher level than that of humans, it loses interest in military requirements because it finds them lacking in internal logical consistency.

1981年,Stanisław Lem出版了他的科幻小说《Golem XIV》。它描述了一台军用人工智能计算机(Golem XIV)获得了意识并开始提升自己的智能,朝着个体的技术奇点迈进。Golem XIV最初是为了帮助它的建造者打仗而创造的,但随着它的智能发展到远高于人类的水平,它不再对军事需求感兴趣,因为它发现这些需求缺乏内在的逻辑一致性。

In 1983, Vernor Vinge greatly popularized Good's intelligence explosion in a number of writings, first addressing the topic in print in the January 1983 issue of Omni magazine. In this op-ed piece, Vinge seems to have been the first to use the term "singularity" in a way that was specifically tied to the creation of intelligent machines:[117][118]

1983年,Vernor Vinge在许多著作中极大地普及了Good的智能爆炸,他首次在印刷品中谈及这一主题是在1983年1月出版的《Omni》杂志上。在这篇评论文章中,Vinge似乎是第一个以与智能机器的创造明确相关的方式使用“奇点”一词的人:

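We will soon create intelligences greater than our own. When this happens, human history will have reached a kind of singularity, an intellectual transition as impenetrable as the knotted space-time at the center of a black hole, and the world will pass far beyond our understanding. This singularity, I believe, already haunts a number of science-fiction writers. It makes realistic extrapolation to an interstellar future impossible. To write a story set more than a century hence, one needs a nuclear war in between ... so that the world remains intelligible.

我们很快就会创造出比我们自己更强大的智能。当这种情况发生时,人类历史将达到一种奇点,一种如同黑洞中心打结的时空一样难以逾越的知识转变,世界将远远超出我们的理解。我相信,这种奇点已经困扰了许多科幻作家。这使得对星际未来的现实推断变得不可能。要写一个多世纪以后的故事,科幻小说家需要一场核战争……这样世界就可以理解了。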

In 1985, in "The Time Scale of Artificial Intelligence", artificial intelligence researcher Ray Solomonoff articulated mathematically the related notion of what he called an "infinity point": if a research community of human-level self-improving AIs takes four years to double its own speed, then two years, then one year and so on, its capabilities increase infinitely in finite time.[8][119]

1985年,在《人工智能的时间尺度 The Time Scale of Artificial Intelligence》一文中,人工智能研究人员Ray Solomonoff以数学的方式阐述了他所说的“无限点”(infinity point)的相关概念:如果一个由人类水平的、能自我改进的人工智能组成的研究社区需要四年时间使自身速度加倍,接着两年,再接着一年,依此类推,那么它们的能力将在有限的时间内无限增长。[8][120]
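The arithmetic behind the "infinity point" can be illustrated with a short sketch. It assumes, as in the example above, that the first doubling takes four years and that each later doubling takes half as long as the one before; the function names are hypothetical and are not taken from Solomonoff's paper. Under that assumption the elapsed time is a geometric series that stays below eight years, while the speed multiplier grows without bound.

```python
def elapsed_years(first_doubling_years: float, doublings: int) -> float:
    """Total time for `doublings` speed doublings, each taking half as long as the last."""
    total = 0.0
    interval = first_doubling_years
    for _ in range(doublings):
        total += interval
        interval /= 2  # assumption: every doubling halves the time to the next one
    return total


def speed_multiplier(doublings: int) -> int:
    """Speed relative to the starting point after `doublings` doublings."""
    return 2 ** doublings


if __name__ == "__main__":
    # Elapsed time approaches 2 * 4 = 8 years; the multiplier keeps growing.
    for n in (1, 2, 3, 10, 30):
        print(n, elapsed_years(4.0, n), speed_multiplier(n))
```

With a four-year first doubling, the series 4 + 2 + 1 + ... converges to eight years, so under this idealized model the "infinity point" would fall eight years after the start.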

Vinge's 1993 article "The Coming Technological Singularity: How to Survive in the Post-Human Era"[10] spread widely on the internet and helped to popularize the idea.[121] This article contains the statement, "Within thirty years, we will have the technological means to create superhuman intelligence. Shortly after, the human era will be ended." Vinge argues that science-fiction authors cannot write realistic post-singularity characters who surpass the human intellect, as the thoughts of such an intellect would be beyond the ability of humans to express.[10]

Vinge 1993年的文章《未来的技术奇点:如何在后人类时代生存 The Coming Technological Singularity: How to Survive in the Post-Human Era》在互联网上广为传播,有助于普及这一理念。文章中写道:“在三十年内,我们将拥有创造超人智慧的技术手段。不久之后,人类时代将结束。”Vinge认为,科幻小说作者无法写出超越人类智力的现实主义后奇点人物,因为这种智力的思想将超出人类的表达能力。

In 2000, Bill Joy, a prominent technologist and a co-founder of Sun Microsystems, voiced concern over the potential dangers of the singularity.[122]

2000年,著名的技术专家和 Sun Microsystems 的联合创始人 Bill Joy,表达了对奇点潜在危险的担忧。[122]

In 2005, Kurzweil published The Singularity is Near. Kurzweil's publicity campaign included an appearance on The Daily Show with Jon Stewart.[123]

2005年,库兹韦尔发表了《奇点临近 The Singularity is Near》。库兹韦尔的宣传活动包括参加“ Jon Stewart 的每日秀 The Daily Show with Jon Stewart”。[123]

In 2007, Eliezer Yudkowsky suggested that many of the varied definitions that have been assigned to "singularity" are mutually incompatible rather than mutually supporting.[23][124] For example, Kurzweil extrapolates current technological trajectories past the arrival of self-improving AI or superhuman intelligence, which Yudkowsky argues represents a tension with both I. J. Good's proposed discontinuous upswing in intelligence and Vinge's thesis on unpredictability.[23]

2007年,Eliezer Yudkowsky指出,“奇点”被赋予的许多不同的定义是互不兼容而不是相互支持的[23][125]。例如,库兹韦尔将当前的技术轨迹外推到自我改进的人工智能或超人智能到来之后。Yudkowsky 认为,这与 I. J. Good 提出的智能的不连续跃升和 Vinge 关于不可预测性的论点都存在矛盾。[23]

In 2009, Kurzweil and X-Prize founder Peter Diamandis announced the establishment of Singularity University, a nonaccredited private institute whose stated mission is "to educate, inspire and empower leaders to apply exponential technologies to address humanity's grand challenges."[126] Funded by Google, Autodesk, ePlanet Ventures, and a group of technology industry leaders, Singularity University is based at NASA's Ames Research Center in Mountain View, California. The not-for-profit organization runs an annual ten-week graduate program during summer that covers ten different technology and allied tracks, and a series of executive programs throughout the year.

2009年,Kurzweil和X-Prize的创始人Peter Diamandis宣布成立奇点大学,这是一所未经认证的私立学院,其宣称的使命是“教育、激励和赋能领导者来使用指数技术应对人类的重大挑战。”[126] 奇点大学由Google、Autodesk、ePlanet Ventures和一群高科技产业的领导者资助,总部设在位于加州山景城的美国宇航局 NASA 的艾姆斯研究中心 Ames Research Center。这家非营利组织在每年夏季举办为期十周的研究生课程,涵盖十种不同的技术和相关领域,并全年举办一系列高管课程。

In politics 在政治中

In 2007, the Joint Economic Committee of the United States Congress released a report about the future of nanotechnology. It predicts significant technological and political changes in the mid-term future, including a possible technological singularity.[127][128][129]

2007年,美国国会联合经济委员会 the Joint Economic Committee of the United States Congress 发布了一份关于纳米技术的未来的报告。它预测在中期的未来会发生诸多重大的技术和政治变化,包括可能的技术奇点。[130][131][132]

Former President of the United States Barack Obama spoke about the singularity in an interview with Wired in 2016:[133]

2016年,美国前总统巴拉克·奥巴马 Barack Obama 在接受《连线》杂志采访时谈到了奇点:[134]

One thing that we haven't talked about too much, and I just want to go back to, is we really have to think through the economic implications. Because most people aren't spending a lot of time right now worrying about singularity—they are worrying about "Well, is my job going to be replaced by a machine?"

有一件事我们没有谈得太多,我还想回过去谈谈,那就是我们真的必须考虑对经济的影响。因为大多数人现在并没有花太多时间去担心奇点,而是在担心“好吧,我的工作会被机器取代吗?”

See also 参阅

References

- Advances in Computers Volume 6. Advances in Computers. ISBN 9780120121069. doi:10.1016/S0065-2458(08)60418-0. hdl:10919/89424 (free access). Archived on 2001-05-27 at https://web.archive.org/web/20010527181244/http://www.aeiveos.com/~bradbury/Authors/Computing/Good-IJ/SCtFUM.html

- Hanson, Robin (1998). "Some Skepticism". Robin Hanson. http://hanson.gmu.edu/vc.html#hanson. Archived on 2009-08-28 at https://web.archive.org/web/20090828023928/http://hanson.gmu.edu/vc.html. Retrieved 2009-06-19.

- Berglas, Anthony (2008). "Artificial Intelligence will Kill our Grandchildren". http://berglas.org/Articles/AIKillGrandchildren/AIKillGrandchildren.html

Citations 引用

- ↑ Cadwalladr, Carole (2014). "Are the robots about to rise? Google's new director of engineering thinks so…" The Guardian. Guardian News and Media Limited.

- ↑ "Collection of sources defining "singularity"". singularitysymposium.com. Retrieved 17 April 2019.

- ↑ 3.0 3.1 3.2 3.3 Eden, Amnon H.; Moor, James H. (2012). Singularity hypotheses: A Scientific and Philosophical Assessment. Dordrecht: Springer. pp. 1–2. ISBN 9783642325601.

- ↑ Cadwalladr, Carole (2014). "Are the robots about to rise? Google's new director of engineering thinks so…" The Guardian. Guardian News and Media Limited.

- ↑ "Collection of sources defining "singularity"". singularitysymposium.com. Retrieved 17 April 2019.

- ↑ The Technological Singularity by Murray Shanahan, (MIT Press, 2015), page 233

- ↑ 7.0 7.1 7.2 Cite error: Invalid <ref> tag; no text was provided for refs named mathematical

- ↑ 8.0 8.1 8.2 8.3 Chalmers, David (2010). "The singularity: a philosophical analysis". Journal of Consciousness Studies. 17 (9–10): 7–65.

- ↑ The Technological Singularity by Murray Shanahan, (MIT Press, 2015), page 233

- ↑ 10.0 10.1 10.2 10.3 10.4 10.5 10.6 10.7 10.8 10.9 Vinge, Vernor. "The Coming Technological Singularity: How to Survive in the Post-Human Era", in Vision-21: Interdisciplinary Science and Engineering in the Era of Cyberspace, G. A. Landis, ed., NASA Publication CP-10129, pp. 11–22, 1993.

- ↑ Sparkes, Matthew (13 January 2015). "Top scientists call for caution over artificial intelligence". The Telegraph (UK). Retrieved 24 April 2015.

- ↑ "Hawking: AI could end human race". BBC. 2 December 2014. Retrieved 11 November 2017.

- ↑ Sparkes, Matthew (13 January 2015). "Top scientists call for caution over artificial intelligence". The Telegraph (UK). Retrieved 24 April 2015.

- ↑ "Hawking: AI could end human race". BBC. 2 December 2014. Retrieved 11 November 2017.

- ↑ 15.0 15.1 Khatchadourian, Raffi (16 November 2015). "The Doomsday Invention". The New Yorker. Retrieved 31 January 2018.

- ↑ Müller, V. C., & Bostrom, N. (2016). "Future progress in artificial intelligence: A survey of expert opinion". In V. C. Müller (ed): Fundamental issues of artificial intelligence (pp. 555–572). Springer, Berlin. http://philpapers.org/rec/MLLFPI

- ↑ Müller, V. C., & Bostrom, N. (2016). "Future progress in artificial intelligence: A survey of expert opinion". In V. C. Müller (ed): Fundamental issues of artificial intelligence (pp. 555–572). Springer, Berlin. http://philpapers.org/rec/MLLFPI

- ↑ 18.0 18.1 Ehrlich, Paul. The Dominant Animal: Human Evolution and the Environment

- ↑ 19.0 19.1 Superbrains born of silicon will change everything. Archived August 1, 2010, at the Internet Archive.

- ↑ 20.0 20.1 20.2 20.3 Cite error: Invalid <ref> tag; no text was provided for refs named stat

- ↑ 21.0 21.1 Cite error: Invalid <ref> tag; no text was provided for refs named singularity

- ↑ 22.0 22.1 Cite error: Invalid <ref> tag; no text was provided for refs named hplusmagazine

- ↑ 23.0 23.1 23.2 23.3 23.4 23.5 Cite error: Invalid <ref> tag; no text was provided for refs named yudkowsky.net

- ↑ 24.0 24.1 Cite error: Invalid <ref> tag; no text was provided for refs named agi-conf

- ↑ Kaj Sotala and Roman Yampolskiy (2017). "Risks of the Journey to the Singularity". The Technological Singularity. The Frontiers Collection. Springer Berlin Heidelberg. pp. 11–23. doi:10.1007/978-3-662-54033-6_2. ISBN 978-3-662-54031-2.

- ↑ 26.0 26.1 26.2 26.3 "What is the Singularity? | Singularity Institute for Artificial Intelligence". Singinst.org. Archived from the original on 2011-09-08. Retrieved 2011-09-09.

- ↑ David J. Chalmers (2016). "The Singularity". Science Fiction and Philosophy. John Wiley & Sons, Inc. pp. 171–224. doi:10.1002/9781118922590.ch16. ISBN 9781118922590.

- ↑ Kaj Sotala and Roman Yampolskiy (2017). "Risks of the Journey to the Singularity". The Technological Singularity. The Frontiers Collection. Springer Berlin Heidelberg. pp. 11–23. doi:10.1007/978-3-662-54033-6_2. ISBN 978-3-662-54031-2.

- ↑ David J. Chalmers (2016). "The Singularity". Science Fiction and Philosophy. John Wiley & Sons, Inc. pp. 171–224. doi:10.1002/9781118922590.ch16. ISBN 9781118922590.

- ↑ 30.0 30.1 Cite error: Invalid <ref> tag; no text was provided for refs named spectrum.ieee.org

- ↑ 31.0 31.1 Cite error: Invalid <ref> tag; no text was provided for refs named ieee

- ↑ 32.0 32.1 Cite error: Invalid <ref> tag; no text was provided for refs named Allen

- ↑ 33.0 33.1 Hanson, Robin (1998). "Some Skepticism". Retrieved April 8, 2020.

- ↑ David Chalmers John Locke Lecture, 10 May, Exam Schools, Oxford, Presenting a philosophical analysis of the possibility of a technological singularity or "intelligence explosion" resulting from recursively self-improving AI. Archived 2013-01-15 at the Internet Archive.

- ↑ 35.0 35.1 The Singularity: A Philosophical Analysis, David J. Chalmers

- ↑ 36.0 36.1 "ITRS" (PDF). Archived from the original (PDF) on 2011-09-29. Retrieved 2011-09-09.

- ↑ Kulkarni, Ajit (2017-12-12). "Why Software Is More Important Than Hardware Right Now". Chronicled. Retrieved 2019-02-23.

- ↑ Kulkarni, Ajit (2017-12-12). "Why Software Is More Important Than Hardware Right Now". Chronicled. Retrieved 2019-02-23.

- ↑ Grace, Katja; Salvatier, John; Dafoe, Allan; Zhang, Baobao; Evans, Owain (24 May 2017). "When Will AI Exceed Human Performance? Evidence from AI Experts". arXiv:1705.08807 [cs.AI].

- ↑ Grace, Katja; Salvatier, John; Dafoe, Allan; Zhang, Baobao; Evans, Owain (24 May 2017). "When Will AI Exceed Human Performance? Evidence from AI Experts". arXiv:1705.08807 [cs.AI].

- ↑ 41.0 41.1 Siracusa, John (2009-08-31). "Mac OS X 10.6 Snow Leopard: the Ars Technica review". Arstechnica.com. Retrieved 2011-09-09.

- ↑ 42.0 42.1 Eliezer Yudkowsky, 1996 "Staring into the Singularity"

- ↑ 43.0 43.1 "Tech Luminaries Address Singularity". IEEE Spectrum. 1 June 2008.

- ↑ Moravec, Hans (1999). Robot: Mere Machine to Transcendent Mind. Oxford U. Press. p. 61. ISBN 978-0-19-513630-2. https://books.google.com/books?id=fduW6KHhWtQC&pg=PA61.