Machine learning


Machine learning (ML) is the study of computer algorithms that improve automatically through experience.[1] It is seen as a subset of artificial intelligence. Machine learning algorithms build a mathematical model based on sample data, known as "training data", in order to make predictions or decisions without being explicitly programmed to do so.[2]:2 Machine learning algorithms are used in a wide variety of applications, such as email filtering and computer vision, where it is difficult or infeasible to develop conventional algorithms to perform the needed tasks.

Machine learning is closely related to computational statistics, which focuses on making predictions using computers. The study of mathematical optimization delivers methods, theory and application domains to the field of machine learning. Data mining is a related field of study, focusing on exploratory data analysis through unsupervised learning.[3] In its application across business problems, machine learning is also referred to as predictive analytics. As of 2020, deep learning has become the dominant approach for much ongoing work in the field of machine learning.

History and relationships to other fields

The term machine learning was coined in 1959 by Arthur Samuel, an American IBM employee and pioneer in the field of computer gaming and artificial intelligence.[4][5] A representative book of machine learning research during the 1960s was Nilsson's Learning Machines, which dealt mostly with machine learning for pattern classification.[6] Interest in pattern recognition continued into the 1970s, as described by Duda and Hart in 1973.[7] In 1981 a report was given on using teaching strategies so that a neural network learns to recognize 40 characters (26 letters, 10 digits, and 4 special symbols) from a computer terminal.[8]

Tom M. Mitchell provided a widely quoted, more formal definition of the algorithms studied in the machine learning field: "A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P if its performance at tasks in T, as measured by P, improves with experience E." This definition of the tasks with which machine learning is concerned offers a fundamentally operational definition rather than defining the field in cognitive terms. This follows Alan Turing's proposal in his paper "Computing Machinery and Intelligence", in which the question "Can machines think?" is replaced with the question "Can machines do what we (as thinking entities) can do?".[9]

Relation to artificial intelligence

As a scientific endeavor, machine learning grew out of the quest for artificial intelligence. In the early days of AI as an academic discipline, some researchers were interested in having machines learn from data. They attempted to approach the problem with various symbolic methods, as well as what were then termed "neural networks"; these were mostly perceptrons and other models that were later found to be reinventions of the generalized linear models of statistics.[10] Probabilistic reasoning was also employed, especially in automated medical diagnosis.[11]:488

However, an increasing emphasis on the logical, knowledge-based approach caused a rift between AI and machine learning. Probabilistic systems were plagued by theoretical and practical problems of data acquisition and representation.[11]:488 By 1980, expert systems had come to dominate AI, and statistics was out of favor.[12] Work on symbolic/knowledge-based learning did continue within AI, leading to inductive logic programming, but the more statistical line of research was now outside the field of AI proper, in pattern recognition and information retrieval.[11]:708–710; 755 Neural networks research had been abandoned by AI and computer science around the same time. This line, too, was continued outside the AI/CS field, as "connectionism", by researchers from other disciplines including Hopfield, Rumelhart and Hinton. Their main success came in the mid-1980s with the reinvention of backpropagation.[11]:25

Machine learning, reorganized as a separate field, started to flourish in the 1990s. The field changed its goal from achieving artificial intelligence to tackling solvable problems of a practical nature. It shifted focus away from the symbolic approaches it had inherited from AI, and toward methods and models borrowed from statistics and probability theory.[12] As of 2019, many sources continue to assert that machine learning remains a subfield of AI. Yet some practitioners, for example Dr Daniel Hulme, who both teaches AI and runs a company operating in the field, argue that machine learning and AI are separate.[13][14]

Relation to data mining

Machine learning and data mining often employ the same methods and overlap significantly, but while machine learning focuses on prediction, based on known properties learned from the training data, data mining focuses on the discovery of (previously) unknown properties in the data (this is the analysis step of knowledge discovery in databases). Data mining uses many machine learning methods, but with different goals; on the other hand, machine learning also employs data mining methods as "unsupervised learning" or as a preprocessing step to improve learner accuracy. Much of the confusion between these two research communities (which do often have separate conferences and separate journals, ECML PKDD being a major exception) comes from the basic assumptions they work with: in machine learning, performance is usually evaluated with respect to the ability to reproduce known knowledge, while in knowledge discovery and data mining (KDD) the key task is the discovery of previously unknown knowledge. Evaluated with respect to known knowledge, an uninformed (unsupervised) method will easily be outperformed by other supervised methods, while in a typical KDD task, supervised methods cannot be used due to the unavailability of training data.

Relation to optimization

Machine learning also has intimate ties to optimization: many learning problems are formulated as minimization of some loss function on a training set of examples. Loss functions express the discrepancy between the predictions of the model being trained and the actual problem instances (for example, in classification, one wants to assign a label to instances, and models are trained to correctly predict the pre-assigned labels of a set of examples). The difference between the two fields arises from the goal of generalization: while optimization algorithms can minimize the loss on a training set, machine learning is concerned with minimizing the loss on unseen samples.[15]
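
The distinction between minimizing loss on the training set and on unseen samples can be sketched in a few lines. This is a minimal illustration, not a method from the cited sources: the one-dimensional linear model, the squared loss, the synthetic rule `y = 2x + 1` with Gaussian noise, and all function names are assumptions chosen for brevity.

```python
import random

def squared_loss(w, b, data):
    """Mean squared discrepancy between predictions w*x + b and targets y."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def fit_least_squares(data):
    """Closed-form minimiser of the squared loss on the given examples."""
    n = len(data)
    mean_x = sum(x for x, _ in data) / n
    mean_y = sum(y for _, y in data) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in data)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in data)
    w = cov_xy / var_x
    b = mean_y - w * mean_x
    return w, b

rng = random.Random(0)

def sample(n):
    # Hypothetical data source: y = 2x + 1 plus Gaussian noise.
    return [(x, 2.0 * x + 1.0 + rng.gauss(0.0, 0.5))
            for x in (rng.uniform(-1.0, 1.0) for _ in range(n))]

train, unseen = sample(20), sample(1000)
w, b = fit_least_squares(train)
train_loss = squared_loss(w, b, train)    # the quantity the optimiser minimised
unseen_loss = squared_loss(w, b, unseen)  # the quantity the learner cares about
print(f"train loss {train_loss:.3f}, unseen loss {unseen_loss:.3f}")
```

The optimizer only ever sees `train`; the second number is an estimate of the loss on fresh samples from the same source, which is the quantity generalization is concerned with.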

Relation to statistics

Machine learning and statistics are closely related fields in terms of methods, but distinct in their principal goal: statistics draws population inferences from a sample, while machine learning finds generalizable predictive patterns.[16] According to Michael I. Jordan, the ideas of machine learning, from methodological principles to theoretical tools, have had a long pre-history in statistics.[17] He also suggested the term data science as a placeholder name for the overall field.[17]

Leo Breiman distinguished two statistical modeling paradigms: data model and algorithmic model,[18] wherein "algorithmic model" means more or less machine learning algorithms such as random forest.

Some statisticians have adopted methods from machine learning, leading to a combined field that they call statistical learning.[19]

Theory

A core objective of a learner is to generalize from its experience.[2][20] Generalization in this context is the ability of a learning machine to perform accurately on new, unseen examples/tasks after having experienced a learning data set. The training examples come from some generally unknown probability distribution (considered representative of the space of occurrences) and the learner has to build a general model about this space that enables it to produce sufficiently accurate predictions in new cases.

The computational analysis of machine learning algorithms and their performance is a branch of theoretical computer science known as computational learning theory. Because training sets are finite and the future is uncertain, learning theory usually does not yield guarantees of the performance of algorithms. Instead, probabilistic bounds on the performance are quite common. The bias–variance decomposition is one way to quantify generalization error.
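
For squared-error loss the decomposition can be written out explicitly. The notation here is an assumption introduced for illustration: targets are generated as y = f(x) + ε with zero-mean noise of variance σ², and \hat{f}(x; D) denotes the model learned from a randomly drawn training set D.

```latex
\mathbb{E}_{D,\varepsilon}\!\left[\bigl(y - \hat{f}(x; D)\bigr)^2\right]
= \underbrace{\bigl(\mathbb{E}_D[\hat{f}(x; D)] - f(x)\bigr)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}_D\!\left[\bigl(\hat{f}(x; D) - \mathbb{E}_D[\hat{f}(x; D)]\bigr)^2\right]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible noise}}
```

The first term measures how far the average learned model is from the true function, the second how much the learned model fluctuates with the choice of training set, and the third is noise that no model can remove.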

For the best performance in the context of generalization, the complexity of the hypothesis should match the complexity of the function underlying the data. If the hypothesis is less complex than the function, then the model has underfitted the data. If the complexity of the model is increased in response, then the training error decreases. But if the hypothesis is too complex, then the model is subject to overfitting and generalization will be poorer.[21]
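
The effect of hypothesis complexity can be explored with a small experiment. The sketch below is only an illustration under stated assumptions: the hypothesis class is a piecewise-constant function on [0, 1) whose number of bins serves as a stand-in for complexity, the underlying function is one period of a sine wave, and the names `fit_bins`, `mse` and `sample` are hypothetical.

```python
import math
import random

def fit_bins(data, n_bins):
    """Piecewise-constant model on [0, 1): one mean target value per bin."""
    sums, counts = [0.0] * n_bins, [0] * n_bins
    for x, y in data:
        i = min(int(x * n_bins), n_bins - 1)
        sums[i] += y
        counts[i] += 1
    fallback = sum(y for _, y in data) / len(data)  # used for empty bins
    return [s / c if c else fallback for s, c in zip(sums, counts)]

def mse(model, data):
    """Mean squared error of a fitted bin model on a data set."""
    n_bins = len(model)
    return sum((model[min(int(x * n_bins), n_bins - 1)] - y) ** 2
               for x, y in data) / len(data)

rng = random.Random(1)

def sample(n):
    # Hypothetical underlying function: a sine wave observed with noise.
    return [(x, math.sin(2 * math.pi * x) + rng.gauss(0.0, 0.3))
            for x in (rng.random() for _ in range(n))]

train, held_out = sample(40), sample(2000)
results = []
for n_bins in (1, 2, 4, 8, 16, 32, 64):
    model = fit_bins(train, n_bins)
    results.append((n_bins, mse(model, train), mse(model, held_out)))
    print(f"bins={n_bins:3d}  train={results[-1][1]:.3f}  held-out={results[-1][2]:.3f}")
```

Because each bin count refines the previous one, the training error can only stay level or fall as bins are added. The held-out column is the one to watch: with very few bins the model cannot follow the sine wave, and with very many bins it starts fitting the noise in the 40 training points.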

In addition to performance bounds, learning theorists study the time complexity and feasibility of learning. In computational learning theory, a computation is considered feasible if it can be done in polynomial time. There are two kinds of time complexity results. Positive results show that a certain class of functions can be learned in polynomial time. Negative results show that certain classes cannot be learned in polynomial time.

Approaches

Types of learning algorithms

The types of machine learning algorithms differ in their approach, the type of data they input and output, and the type of task or problem that they are intended to solve.

Supervised learning

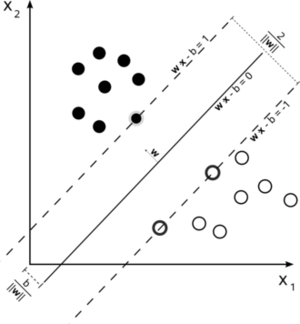

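A support vector machine is a supervised learning model that divides the data into regions separated by a linear boundary. Here, the linear boundary divides the black circles from the white ones.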

Supervised learning algorithms build a mathematical model of a set of data that contains both the inputs and the desired outputs.[22] The data is known as training data, and consists of a set of training examples. Each training example has one or more inputs and the desired output, also known as a supervisory signal. In the mathematical model, each training example is represented by an array or vector, sometimes called a feature vector, and the training data is represented by a matrix. Through iterative optimization of an objective function, supervised learning algorithms learn a function that can be used to predict the output associated with new inputs.[23] An optimal function will allow the algorithm to correctly determine the output for inputs that were not a part of the training data. An algorithm that improves the accuracy of its outputs or predictions over time is said to have learned to perform that task.[24]
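
As a minimal sketch of the description above, the following trains a linear model by gradient descent on a mean-squared-error objective. The data, the rule `y = 2*x1 + 3*x2 + 1` that generated it, the learning rate and the function names are all assumptions made for illustration; they do not come from the cited sources.

```python
# Training data as a matrix: one feature vector per row.
X = [[1.0, 2.0], [2.0, 0.0], [3.0, 1.0], [0.0, 3.0]]
# Desired outputs (supervisory signal), from the hypothetical rule y = 2*x1 + 3*x2 + 1.
y = [9.0, 5.0, 10.0, 10.0]

def predict(weights, bias, x):
    """Learned function: a weighted sum of the features plus a bias."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def objective(weights, bias):
    """Mean squared error over the training examples."""
    return sum((predict(weights, bias, x) - t) ** 2 for x, t in zip(X, y)) / len(X)

weights, bias, lr = [0.0, 0.0], 0.0, 0.05
for step in range(5000):
    # Errors are computed once per step, so all parameters update simultaneously.
    errors = [predict(weights, bias, x) - t for x, t in zip(X, y)]
    for j in range(len(weights)):
        grad_j = 2 * sum(e * x[j] for e, x in zip(errors, X)) / len(X)
        weights[j] -= lr * grad_j
    bias -= lr * 2 * sum(errors) / len(X)

new_input = [2.0, 2.0]  # not part of the training data
print(weights, bias, predict(weights, bias, new_input))
```

The final line applies the learned function to an input that was not in the training matrix, which is the use the paragraph above describes.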

Types of supervised learning algorithms include active learning, classification and regression.[25] Classification algorithms are used when the outputs are restricted to a limited set of values, and regression algorithms are used when the outputs may have any numerical value within a range. As an example, for a classification algorithm that filters emails, the input would be an incoming email, and the output would be the name of the folder in which to file the email.
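
The difference in output type can be seen by applying one simple technique to both tasks. The sketch below uses k-nearest neighbours, chosen here only because it is short; the single numeric feature, the folder names and the score values are invented for illustration.

```python
from collections import Counter

def nearest(train, x, k):
    """The k training pairs whose input is closest to x (1-D inputs for brevity)."""
    return sorted(train, key=lambda pair: abs(pair[0] - x))[:k]

def classify(train, x, k=3):
    """Classification: the output is one of a finite set of labels."""
    votes = Counter(label for _, label in nearest(train, x, k))
    return votes.most_common(1)[0][0]

def regress(train, x, k=3):
    """Regression: the output is a number, here the neighbours' mean target."""
    neighbours = nearest(train, x, k)
    return sum(target for _, target in neighbours) / len(neighbours)

# Hypothetical feature: number of exclamation marks in an email.
folders = [(0, "inbox"), (1, "inbox"), (2, "inbox"),
           (7, "spam"), (8, "spam"), (9, "spam")]
# Hypothetical numeric target for the same inputs (illustrative values only).
scores = [(0, 0.0), (1, 0.1), (2, 0.2), (7, 0.7), (8, 0.8), (9, 0.9)]

print(classify(folders, 8))  # a folder name
print(regress(scores, 8))    # a number
```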

Similarity learning is an area of supervised machine learning closely related to regression and classification, but the goal is to learn from examples using a similarity function that measures how similar or related two objects are. It has applications in ranking, recommendation systems, visual identity tracking, face verification, and speaker verification.

Unsupervised learning

Unsupervised learning algorithms take a set of data that contains only inputs, and find structure in the data, like grouping or clustering of data points. The algorithms therefore learn from data that has not been labeled, classified or categorized. Instead of responding to feedback, unsupervised learning algorithms identify commonalities in the data and react based on the presence or absence of such commonalities in each new piece of data. A central application of unsupervised learning is in the field of density estimation in statistics, such as finding the probability density function,[26] though unsupervised learning also encompasses other domains involving summarizing and explaining data features.

Cluster analysis is the assignment of a set of observations into subsets (called clusters) so that observations within the same cluster are similar according to one or more predesignated criteria, while observations drawn from different clusters are dissimilar. Different clustering techniques make different assumptions on the structure of the data, often defined by some similarity metric and evaluated, for example, by internal compactness, or the similarity between members of the same cluster, and separation, the difference between clusters. Other methods are based on estimated density and graph connectivity.
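
One widely used clustering technique that optimizes internal compactness is k-means (Lloyd's algorithm), which the text above does not name; it is shown here only as an example. The sketch takes the initial centroids as an explicit argument to stay deterministic, whereas practical implementations typically pick them at random. The points and function names are illustrative assumptions.

```python
def dist2(p, q):
    """Squared Euclidean distance, the similarity metric assumed here."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def mean(cluster):
    """Coordinate-wise mean of a non-empty list of points."""
    return tuple(sum(coords) / len(cluster) for coords in zip(*cluster))

def kmeans(points, centroids, max_iterations=100):
    """Alternate between assigning points and recomputing centroids."""
    centroids = list(centroids)
    for _ in range(max_iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: dist2(p, centroids[i]))
            clusters[nearest].append(p)
        # An empty cluster keeps its previous centroid.
        updated = [mean(c) if c else centroids[i] for i, c in enumerate(clusters)]
        if updated == centroids:  # assignments are stable: converged
            break
        centroids = updated
    return centroids, clusters

points = [(0, 0), (0, 1), (1, 0), (1, 1),
          (10, 10), (10, 11), (11, 10), (11, 11)]
centroids, clusters = kmeans(points, [points[0], points[-1]])
print(centroids)
```

The result depends on the starting centroids: a poor choice can leave the algorithm in a stable but unhelpful grouping, which is one reason implementations usually try several initializations.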

Semi-supervised learning

Semi-supervised learning falls between unsupervised learning (without any labeled training data) and supervised learning (with completely labeled training data). Some of the training examples are missing training labels, yet many machine-learning researchers have found that unlabeled data, when used in conjunction with a small amount of labeled data, can produce a considerable improvement in learning accuracy.

In weakly supervised learning, the training labels are noisy, limited, or imprecise; however, these labels are often cheaper to obtain, resulting in larger effective training sets.[27]

Reinforcement learning

Reinforcement learning is an area of machine learning concerned with how software agents ought to take actions in an environment so as to maximize some notion of cumulative reward. Due to its generality, the field is studied in many other disciplines, such as game theory, control theory, operations research, information theory, simulation-based optimization, multi-agent systems, swarm intelligence, statistics and genetic algorithms. In machine learning, the environment is typically represented as a Markov Decision Process (MDP). Many reinforcement learning algorithms use dynamic programming techniques.[28] Reinforcement learning algorithms do not assume knowledge of an exact mathematical model of the MDP, and are used when exact models are infeasible. Reinforcement learning algorithms are used in autonomous vehicles or in learning to play a game against a human opponent.
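
A small example of learning without a model of the MDP is tabular Q-learning, a standard algorithm that the text above does not name and that is used here purely as an illustration. The five-state corridor, the reward of 1 at the right-hand end, the step size and the discount factor are all assumptions of this sketch. The agent behaves at random and learns only from sampled transitions.

```python
import random

N_STATES, GOAL = 5, 4   # states 0..4 on a line; state 4 is terminal
ACTIONS = (-1, +1)      # index 0 moves left, index 1 moves right

def step(state, action):
    """Environment dynamics, seen by the learner only through samples."""
    nxt = min(max(state + action, 0), GOAL)
    reward = 1.0 if nxt == GOAL else 0.0
    return nxt, reward, nxt == GOAL

rng = random.Random(0)
alpha, gamma = 0.5, 0.9  # step size and discount factor
Q = [[0.0, 0.0] for _ in range(N_STATES)]

for episode in range(500):
    state, done = 0, False
    while not done:
        a = rng.randrange(2)  # purely exploratory behaviour
        nxt, reward, done = step(state, ACTIONS[a])
        # Bootstrapped target: reward plus discounted value of the next state.
        target = reward + (0.0 if done else gamma * max(Q[nxt]))
        Q[state][a] += alpha * (target - Q[state][a])
        state = nxt

policy = ["left" if q[0] > q[1] else "right" for q in Q[:GOAL]]
print(policy)
```

The update uses the same recursive value relationship that dynamic programming exploits, but replaces the exact transition model with observed samples.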

Self-learning

Self-learning as a machine learning paradigm was introduced in 1982 along with a neural network capable of self-learning named Crossbar Adaptive Array (CAA).[29] It is learning with no external rewards and no external teacher advice. The CAA self-learning algorithm computes, in a crossbar fashion, both decisions about actions and emotions (feelings) about consequence situations. The system is driven by the interaction between cognition and emotion.[30]


The self-learning algorithm updates a memory matrix W = ||w(a,s)|| such that each iteration executes the following machine learning routine:

In situation s perform action a;

Receive consequence situation s’;

Compute emotion of being in consequence situation v(s’);

Update crossbar memory w’(a,s) = w(a,s) + v(s’).

It is a system with only one input, situation s, and only one output, action (or behavior) a. There is neither a separate reinforcement input nor an advice input from the environment. The backpropagated value (secondary reinforcement) is the emotion toward the consequence situation. The CAA exists in two environments: the behavioral environment, where it behaves, and the genetic environment, from which it receives, initially and only once, initial emotions about situations to be encountered in the behavioral environment. After receiving the genome (species) vector from the genetic environment, the CAA learns a goal-seeking behavior in an environment that contains both desirable and undesirable situations.[31]

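The four-step routine (perform action a in situation s, receive s’, compute v(s’), update w(a,s)) can be sketched in a few lines. This is a loose illustration rather than the published CAA: the four-situation corridor, the `GENOME` values, the rule for choosing an action (largest memory entry, random ties), and the way `emotion` combines inherited and learned values are all assumptions introduced here.

```python
import random

GENOME = [-1.0, 0.0, 0.0, 1.0]   # hypothetical initial emotions, one per situation
N_ACTIONS = 2                     # 0 = step left, 1 = step right

def emotion(w, s):
    """Assumed emotion v(s): the inherited value if present, else the best learned value."""
    if GENOME[s] != 0.0:
        return GENOME[s]
    return max(w[a][s] for a in range(N_ACTIONS))

def run_caa(episodes=20, seed=0):
    rng = random.Random(seed)
    w = [[0.0] * len(GENOME) for _ in range(N_ACTIONS)]  # crossbar memory w[a][s]
    for _ in range(episodes):
        s = 1
        while 0 < s < len(GENOME) - 1:
            # In situation s perform action a (largest memory value, random ties).
            best = max(w[b][s] for b in range(N_ACTIONS))
            a = rng.choice([b for b in range(N_ACTIONS) if w[b][s] == best])
            # Receive consequence situation s'.
            s_next = s - 1 if a == 0 else s + 1
            # Compute emotion v(s') and update w'(a,s) = w(a,s) + v(s').
            w[a][s] += emotion(w, s_next)
            s = s_next
    return w

memory = run_caa()
print(memory)
```

No reward signal is passed in from outside: the only values that enter the update are the emotions attached to situations, which matches the description of a system with a single input and a single output.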

Feature learning

Several learning algorithms aim at discovering better representations of the inputs provided during training.[32] Classic examples include principal components analysis and cluster analysis. Feature learning algorithms, also called representation learning algorithms, often attempt to preserve the information in their input but also transform it in a way that makes it useful, often as a pre-processing step before performing classification or predictions. This technique allows reconstruction of the inputs coming from the unknown data-generating distribution, while not being necessarily faithful to configurations that are implausible under that distribution. This replaces manual feature engineering, and allows a machine to both learn the features and use them to perform a specific task.

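As a small illustration of learning a representation, the sketch below finds the first principal component of a set of 2-D points and replaces each point with a single learned feature, its projection onto that component. It uses power iteration on the sample covariance matrix, which is one simple way to obtain the leading eigenvector; the data values and the function name are made up for the example.

```python
import math

def first_principal_component(points, iterations=100):
    """Return the leading principal direction and each point's projection onto it."""
    n, dim = len(points), len(points[0])
    mean = [sum(p[j] for p in points) / n for j in range(dim)]
    centered = [[p[j] - mean[j] for j in range(dim)] for p in points]
    # Sample covariance matrix of the centered data.
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(dim)]
           for i in range(dim)]
    # Power iteration converges to the eigenvector with the largest eigenvalue,
    # provided the starting vector is not orthogonal to it.
    v = [1.0] * dim
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(dim)) for i in range(dim)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    projections = [sum(r[j] * v[j] for j in range(dim)) for r in centered]
    return v, projections

# Hypothetical data lying close to the line y = 2x.
data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.0), (5.0, 10.1)]
direction, features = first_principal_component(data)
print(direction, features)
```

The one-dimensional `features` keep most of the variation in the original two coordinates, which is the sense in which such a transform can serve as a pre-processing step before classification or prediction.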

Feature learning can be either supervised or unsupervised. In supervised feature learning, features are learned using labeled input data. Examples include artificial neural networks, multilayer perceptrons, and supervised dictionary learning. In unsupervised feature learning, features are learned with unlabeled input data. Examples include dictionary learning, independent component analysis, autoencoders, matrix factorization[33] and various forms of clustering.[34][35]


Manifold learning algorithms attempt to do so under the constraint that the learned representation is low-dimensional. Sparse coding algorithms attempt to do so under the constraint that the learned representation is sparse, meaning that the mathematical model has many zeros. Multilinear subspace learning algorithms aim to learn low-dimensional representations directly from tensor representations for multidimensional data, without reshaping them into higher-dimensional vectors.[36] Deep learning algorithms discover multiple levels of representation, or a hierarchy of features, with higher-level, more abstract features defined in terms of (or generating) lower-level features. It has been argued that an intelligent machine is one that learns a representation that disentangles the underlying factors of variation that explain the observed data.[37]


Feature learning is motivated by the fact that machine learning tasks such as classification often require input that is mathematically and computationally convenient to process. However, real-world data such as images, video, and sensory data has not yielded to attempts to algorithmically define specific features. An alternative is to discover such features or representations through examination, without relying on explicit algorithms.


Sparse dictionary learning

Sparse dictionary learning is a feature learning method where a training example is represented as a linear combination of basis functions, and the coefficients of that combination are assumed to be sparse. The problem is strongly NP-hard and difficult to solve approximately.[38] A popular heuristic method for sparse dictionary learning is the K-SVD algorithm. Sparse dictionary learning has been applied in several contexts. In classification, the problem is to determine the class to which a previously unseen example belongs. Given a dictionary already built for each class, a new example is associated with the class whose dictionary gives it the best sparse representation. Sparse dictionary learning has also been applied in image de-noising. The key idea is that a clean image patch can be sparsely represented by an image dictionary, but the noise cannot.[39]

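To make "a linear combination of basis functions with few non-zero coefficients" concrete, the sketch below performs only the sparse coding step against a fixed dictionary, using greedy matching pursuit. It does not learn the dictionary and is not K-SVD; the dictionary atoms (assumed to have unit norm) and the signal are invented for the example.

```python
import math

def matching_pursuit(signal, dictionary, n_nonzero):
    """Greedily approximate `signal` with at most `n_nonzero` unit-norm atoms."""
    residual = list(signal)
    coefficients = [0.0] * len(dictionary)
    for _ in range(n_nonzero):
        # Correlation of every atom with what is still unexplained.
        correlations = [sum(a * r for a, r in zip(atom, residual))
                        for atom in dictionary]
        k = max(range(len(dictionary)), key=lambda i: abs(correlations[i]))
        coefficients[k] += correlations[k]
        # Remove the chosen atom's contribution from the residual.
        residual = [r - correlations[k] * a
                    for r, a in zip(residual, dictionary[k])]
    return coefficients, residual

s = 1 / math.sqrt(2)
# Hypothetical over-complete dictionary: three axis atoms plus one diagonal atom.
atoms = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [s, s, 0.0]]
codes, leftover = matching_pursuit([2.0, 2.0, 1.0], atoms, n_nonzero=2)
print(codes, leftover)
```

Here the signal needs three coefficients in the axis basis but only two once the diagonal atom is available, which is the kind of economy a learned dictionary aims for.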

Anomaly detection

In data mining, anomaly detection, also known as outlier detection, is the identification of rare items, events or observations which raise suspicions by differing significantly from the majority of the data.[40] Typically, the anomalous items represent an issue such as bank fraud, a structural defect, medical problems or errors in a text. Anomalies are referred to as outliers, novelties, noise, deviations and exceptions.[41]


In particular, in the context of abuse and network intrusion detection, the interesting objects are often not rare objects, but unexpected bursts in activity. This pattern does not adhere to the common statistical definition of an outlier as a rare object, and many outlier detection methods (in particular, unsupervised algorithms) will fail on such data, unless it has been aggregated appropriately. Instead, a cluster analysis algorithm may be able to detect the micro-clusters formed by these patterns.[42]


Three broad categories of anomaly detection techniques exist.[43] Unsupervised anomaly detection techniques detect anomalies in an unlabeled test data set under the assumption that the majority of the instances in the data set are normal, by looking for instances that seem to fit least to the remainder of the data set. Supervised anomaly detection techniques require a data set that has been labeled as "normal" and "abnormal" and involve training a classifier (the key difference from many other statistical classification problems is the inherently unbalanced nature of outlier detection). Semi-supervised anomaly detection techniques construct a model representing normal behavior from a given normal training data set and then test the likelihood that a test instance was generated by the model.

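The semi-supervised variant can be sketched very simply: fit a model of normal behavior on data known to be normal, then score new instances against it. The example below assumes a one-dimensional Gaussian model and a three-standard-deviation cutoff; both choices, and the sensor-style readings, are illustrative assumptions rather than anything prescribed by the article.

```python
import statistics

def fit_normal_model(normal_values):
    """Summarize normal behavior by the sample mean and standard deviation."""
    return statistics.mean(normal_values), statistics.stdev(normal_values)

def is_anomalous(value, model, threshold=3.0):
    """Flag a value whose distance from the mean exceeds `threshold` deviations."""
    mean, std = model
    return abs(value - mean) / std > threshold

# Hypothetical readings collected while the system was known to be healthy.
normal_readings = [10.1, 9.8, 10.0, 10.3, 9.9, 10.2, 9.7, 10.0]
model = fit_normal_model(normal_readings)

flags = [is_anomalous(x, model) for x in (10.4, 12.0)]
print(flags)
```

A fixed threshold like this would miss the "burst of activity" pattern described earlier, since each burst member may look ordinary on its own; that case calls for aggregation or clustering first.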

Robot learning

In developmental robotics, robot learning algorithms generate their own sequences of learning experiences, also known as a curriculum, to cumulatively acquire new skills through self-guided exploration and social interaction with humans. These robots use guidance mechanisms such as active learning, maturation, motor synergies and imitation.


Association rules

Association rule learning is a rule-based machine learning method for discovering relationships between variables in large databases. It is intended to identify strong rules discovered in databases using some measure of "interestingness".[44]


Rule-based machine learning is a general term for any machine learning method that identifies, learns, or evolves "rules" to store, manipulate or apply knowledge. The defining characteristic of a rule-based machine learning algorithm is the identification and utilization of a set of relational rules that collectively represent the knowledge captured by the system. This is in contrast to other machine learning algorithms that commonly identify a singular model that can be universally applied to any instance in order to make a prediction.[45] Rule-based machine learning approaches include learning classifier systems, association rule learning, and artificial immune systems.


Based on the concept of strong rules, Rakesh Agrawal, Tomasz Imieliński and Arun Swami introduced association rules for discovering regularities between products in large-scale transaction data recorded by point-of-sale (POS) systems in supermarkets.[46] For example, the rule [math]\displaystyle{ \{\mathrm{onions, potatoes}\} \Rightarrow \{\mathrm{burger}\} }[/math] found in the sales data of a supermarket would indicate that if a customer buys onions and potatoes together, they are likely to also buy hamburger meat. Such information can be used as the basis for decisions about marketing activities such as promotional pricing or product placements. In addition to market basket analysis, association rules are employed today in application areas including Web usage mining, intrusion detection, continuous production, and bioinformatics. In contrast with sequence mining, association rule learning typically does not consider the order of items either within a transaction or across transactions.

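Two widely used measures of how strong a rule is are support (how often the items occur together) and confidence (how often the consequent appears when the antecedent does). The sketch below computes both for the onions-and-potatoes rule; the five transactions are invented for illustration, and the article itself does not say which interestingness measure is meant.

```python
def support(itemset, transactions):
    """Fraction of transactions that contain every item in `itemset`."""
    itemset = set(itemset)
    return sum(1 for t in transactions if itemset <= t) / len(transactions)

def confidence(antecedent, consequent, transactions):
    """Estimated probability of the consequent given the antecedent."""
    both = set(antecedent) | set(consequent)
    return support(both, transactions) / support(antecedent, transactions)

# Hypothetical point-of-sale baskets; item order inside a basket is ignored.
baskets = [
    {"onions", "potatoes", "burger"},
    {"onions", "potatoes", "burger", "milk"},
    {"onions", "potatoes"},
    {"milk", "bread"},
    {"onions", "milk"},
]

rule_support = support({"onions", "potatoes", "burger"}, baskets)
rule_confidence = confidence({"onions", "potatoes"}, {"burger"}, baskets)
print(rule_support, rule_confidence)
```

Representing each basket as a set reflects the point made above that association rule learning typically disregards the order of items within a transaction.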

The following are several common rule-based machine learning algorithms:

Learning classifier systems (LCS) are a family of rule-based machine learning algorithms that combine a discovery component, typically a genetic algorithm, with a learning component, performing either supervised learning, reinforcement learning, or unsupervised learning. They seek to identify a set of context-dependent rules that collectively store and apply knowledge in a piecewise manner in order to make predictions.[47]


Inductive logic programming (ILP) is an approach to rule-learning using logic programming as a uniform representation for input examples, background knowledge, and hypotheses. Given an encoding of the known background knowledge and a set of examples represented as a logical database of facts, an ILP system will derive a hypothesized logic program that entails all positive and no negative examples. Inductive programming is a related field that considers any kind of programming languages for representing hypotheses (and not only logic programming), such as functional programs.


Inductive logic programming is particularly useful in bioinformatics and natural language processing. Gordon Plotkin and Ehud Shapiro laid the initial theoretical foundation for inductive machine learning in a logical setting.[48][49][50] Shapiro built their first implementation (Model Inference System) in 1981: a Prolog program that inductively inferred logic programs from positive and negative examples.[51] The term inductive here refers to philosophical induction, suggesting a theory to explain observed facts, rather than mathematical induction, proving a property for all members of a well-ordered set.


Models

Performing machine learning involves creating a model, which is trained on some training data and then can process additional data to make predictions. Various types of models have been used and researched for machine learning systems.


Artificial neural networks

An artificial neural network is an interconnected group of nodes, akin to the vast network of neurons in a brain. Here, each circular node represents an artificial neuron and an arrow represents a connection from the output of one artificial neuron to the input of another.


Artificial neural networks (ANNs), or connectionist systems, are computing systems vaguely inspired by the biological neural networks that constitute animal brains. Such systems "learn" to perform tasks by considering examples, generally without being programmed with any task-specific rules.


An ANN is a model based on a collection of connected units or nodes called "artificial neurons", which loosely model the neurons in a biological brain. Each connection, like the synapses in a biological brain, can transmit information, a "signal", from one artificial neuron to another. An artificial neuron that receives a signal can process it and then signal additional artificial neurons connected to it. In common ANN implementations, the signal at a connection between artificial neurons is a real number, and the output of each artificial neuron is computed by some non-linear function of the sum of its inputs. The connections between artificial neurons are called "edges". Artificial neurons and edges typically have a weight that adjusts as learning proceeds. The weight increases or decreases the strength of the signal at a connection. Artificial neurons may have a threshold such that the signal is only sent if the aggregate signal crosses that threshold. Typically, artificial neurons are aggregated into layers. Different layers may perform different kinds of transformations on their inputs. Signals travel from the first layer (the input layer) to the last layer (the output layer), possibly after traversing the layers multiple times.

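The roles of weights, thresholds and layers can be seen in a tiny hand-wired network. The sketch below uses threshold neurons (output 1 only when the weighted sum of inputs crosses the threshold) arranged in two layers to compute exclusive-or. The weights and thresholds are fixed by hand for illustration; in practice they would be adjusted by a learning procedure, and most networks use smoother non-linear functions.

```python
def neuron(inputs, weights, threshold):
    """Fire (return 1) only if the weighted sum of inputs crosses the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > threshold else 0

def layer(inputs, weight_rows, thresholds):
    """Apply one neuron per row of weights to the same inputs."""
    return [neuron(inputs, w, t) for w, t in zip(weight_rows, thresholds)]

def xor_network(x1, x2):
    # Hidden layer: the first neuron acts as OR, the second as AND.
    hidden = layer([x1, x2], [[1, 1], [1, 1]], [0.5, 1.5])
    # Output layer: fires when OR is on and AND is off.
    return layer(hidden, [[1, -1]], [0.5])[0]

outputs = [xor_network(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(outputs)
```

A single threshold neuron cannot compute exclusive-or, so the example also shows why signals are passed through more than one layer.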

The original goal of the ANN approach was to solve problems in the same way that a human brain would. However, over time, attention moved to performing specific tasks, leading to deviations from biology. Artificial neural networks have been used on a variety of tasks, including computer vision, speech recognition, machine translation, social network filtering, playing board and video games and medical diagnosis.


Deep learning consists of multiple hidden layers in an artificial neural network. This approach tries to model the way the human brain processes light and sound into vision and hearing. Some successful applications of deep learning are computer vision and speech recognition.[52]


Decision trees

Decision tree learning uses a decision tree as a predictive model to go from observations about an item (represented in the branches) to conclusions about the item's target value (represented in the leaves). It is one of the predictive modeling approaches used in statistics, data mining and machine learning. Tree models where the target variable can take a discrete set of values are called classification trees; in these tree structures, leaves represent class labels and branches represent conjunctions of features that lead to those class labels. Decision trees where the target variable can take continuous values (typically real numbers) are called regression trees. In decision analysis, a decision tree can be used to visually and explicitly represent decisions and decision making. In data mining, a decision tree describes data, but the resulting classification tree can be an input for decision making.


Support vector machines

Support vector machines (SVMs), also known as support vector networks, are a set of related supervised learning methods used for classification and regression. Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that predicts whether a new example falls into one category or the other.[53] An SVM training algorithm is a non-probabilistic, binary, linear classifier, although methods such as Platt scaling exist to use SVM in a probabilistic classification setting. In addition to performing linear classification, SVMs can efficiently perform a non-linear classification using what is called the kernel trick, implicitly mapping their inputs into high-dimensional feature spaces.
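
As a rough illustration of the binary, linear case described above, the following sketch trains a linear SVM by batch subgradient descent on the regularized hinge loss. The optimizer, the hyperparameters (`lam`, `lr`, `epochs`), and the toy data are assumptions chosen for the example; production SVM libraries typically use different solvers.

```python
def train_linear_svm(X, y, lam=0.01, lr=0.01, epochs=5000):
    """Minimise lam/2 * ||w||^2 + mean hinge loss; labels must be -1 or +1."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        gw, gb = [lam * wj for wj in w], 0.0  # gradient of the regulariser
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            if margin < 1:  # inside the margin: hinge subgradient is -y * x
                for j in range(d):
                    gw[j] -= yi * xi[j] / n
                gb -= yi / n
        w = [wj - lr * gj for wj, gj in zip(w, gw)]
        b -= lr * gb
    return w, b

def classify(w, b, x):
    """Non-probabilistic decision: which side of the hyperplane x falls on."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1

# Hypothetical, linearly separable toy data with two categories.
X = [[1, 1], [2, 1], [1, 2], [4, 4], [5, 4], [4, 5]]
y = [-1, -1, -1, 1, 1, 1]
w, b = train_linear_svm(X, y)
print([classify(w, b, x) for x in X])
```

The classifier only reports a side of the hyperplane, not a probability, which is why techniques such as Platt scaling are needed when calibrated probabilities are required.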

Illustration of linear regression on a data set.

Regression analysis

Regression analysis encompasses a large variety of statistical methods to estimate the relationship between input variables and their associated features. Its most common form is linear regression, where a single line is drawn to best fit the given data according to a mathematical criterion such as ordinary least squares. The latter is often extended by regularization methods to mitigate overfitting and bias, as in ridge regression. When dealing with non-linear problems, go-to models include polynomial regression (for example, used for trendline fitting in Microsoft Excel[54]), logistic regression (often used in statistical classification) or even kernel regression, which introduces non-linearity by taking advantage of the kernel trick to implicitly map input variables to a higher-dimensional space.
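
For a single input variable, ordinary least squares has a closed-form solution, and a ridge penalty on the slope changes it only slightly. The sketch below assumes a one-dimensional problem with an unpenalized intercept; the function name `fit_line` and the data are illustrative.

```python
def fit_line(xs, ys, ridge=0.0):
    """Fit y = slope * x + intercept by least squares.

    With ridge > 0, an L2 penalty ridge * slope**2 is added to the squared
    error (the intercept is left unpenalised), which shrinks the slope.
    """
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / (sxx + ridge)
    intercept = my - slope * mx
    return slope, intercept

# Hypothetical data lying exactly on y = 2x + 1.
xs = [0, 1, 2, 3, 4]
ys = [1, 3, 5, 7, 9]
print(fit_line(xs, ys))            # (2.0, 1.0)
print(fit_line(xs, ys, ridge=10))  # (1.0, 3.0): the penalty pulls the slope toward 0
```

The second call shows the trade-off regularization makes: the fitted line no longer passes through the training points, in exchange for a smaller coefficient.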

Bayesian networks

A simple Bayesian network. Rain influences whether the sprinkler is activated, and both rain and the sprinkler influence whether the grass is wet.

A Bayesian network, belief network or directed acyclic graphical model is a probabilistic graphical model that represents a set of random variables and their conditional independence with a directed acyclic graph (DAG). For example, a Bayesian network could represent the probabilistic relationships between diseases and symptoms. Given symptoms, the network can be used to compute the probabilities of the presence of various diseases. Efficient algorithms exist that perform inference and learning. Bayesian networks that model sequences of variables, like speech signals or protein sequences, are called dynamic Bayesian networks. Generalizations of Bayesian networks that can represent and solve decision problems under uncertainty are called influence diagrams.
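
Inference in a small network can be done by enumerating the joint distribution. The sketch below uses the rain, sprinkler and wet-grass network as an example; the conditional probability values are illustrative assumptions, not figures taken from this article.

```python
from itertools import product

# Assumed conditional probability tables (illustrative numbers only).
P_RAIN = 0.2
P_SPRINKLER = {True: 0.01, False: 0.4}            # P(sprinkler on | rain)
P_WET = {(True, True): 0.99, (True, False): 0.9,  # P(wet | sprinkler, rain)
         (False, True): 0.8, (False, False): 0.0}

def joint(rain, sprinkler, wet):
    """Joint probability, factorised along the edges of the DAG."""
    p = P_RAIN if rain else 1 - P_RAIN
    ps = P_SPRINKLER[rain]
    p *= ps if sprinkler else 1 - ps
    pw = P_WET[(sprinkler, rain)]
    p *= pw if wet else 1 - pw
    return p

def prob_rain_given_wet():
    """P(rain | grass is wet), summing out the unobserved sprinkler variable."""
    num = sum(joint(True, s, True) for s in (True, False))
    den = sum(joint(r, s, True) for r, s in product((True, False), repeat=2))
    return num / den

print(round(prob_rain_given_wet(), 3))  # 0.358
```

Enumeration is exponential in the number of variables, so it only suits tiny networks; the efficient inference algorithms mentioned above exploit the graph structure to avoid summing over every combination.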

Genetic algorithms

A genetic algorithm (GA) is a search algorithm and heuristic technique that mimics the process of natural selection, using methods such as mutation and crossover to generate new genotypes in the hope of finding good solutions to a given problem. In machine learning, genetic algorithms were used in the 1980s and 1990s.[55][56] Conversely, machine learning techniques have been used to improve the performance of genetic and evolutionary algorithms.[57]
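
A minimal sketch of the mutation and crossover loop, assuming a toy "OneMax" problem in which the fitness of a bit-string genotype is simply its number of ones. The population size, mutation rate, truncation selection and elitism used here are illustrative choices, not a prescribed configuration.

```python
import random

def fitness(genome):
    """Toy objective (OneMax): count the ones in the bit string."""
    return sum(genome)

def evolve(n_bits=20, pop_size=30, generations=40, mutation_rate=0.02, seed=0):
    rng = random.Random(seed)  # seeded so runs are repeatable
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        history.append(fitness(pop[0]))
        parents = pop[: pop_size // 2]      # selection: keep the fitter half
        children = [pop[0][:]]              # elitism: best genotype survives unchanged
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_bits)  # single-point crossover
            child = a[:cut] + b[cut:]
            # Mutation: flip each bit with a small probability.
            child = [bit ^ 1 if rng.random() < mutation_rate else bit for bit in child]
            children.append(child)
        pop = children
    best = max(pop, key=fitness)
    history.append(fitness(best))
    return best, history

best, history = evolve()
print(fitness(best), history[0])
```

Because the best genotype is always copied into the next generation, the best fitness recorded in `history` never decreases, although reaching the optimum is not guaranteed.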

Training models

Machine learning models usually require a lot of data in order to perform well. When training a model, one typically needs to collect a large, representative sample of data for the training set. Data in the training set can be as varied as a corpus of text, a collection of images, or data collected from individual users of a service. Overfitting is something to watch out for when training a machine learning model.

Federated learning

Federated learning is an approach to training machine learning models that decentralizes the training process, so that users' privacy can be maintained because their data does not need to be sent to a centralized server. Spreading the training across many devices can also increase efficiency. For example, Gboard uses federated machine learning to train search query prediction models on users' mobile phones without having to send individual searches back to Google.[58]
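
One common way to realize this idea is federated averaging: each device trains on its own data and only the resulting model weights are sent to the server, which averages them. The sketch below is a simplified illustration under stated assumptions (a one-parameter model y = w * x, hypothetical client data, plain gradient descent) and does not describe how Gboard or any real system is implemented.

```python
def local_update(w, data, lr=0.01, steps=20):
    """Run a few gradient-descent steps for y = w * x on one client's private data."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def federated_average(clients, rounds=30):
    """Server loop: broadcast w, collect locally trained weights, average by data size."""
    w = 0.0
    total = sum(len(d) for d in clients)
    for _ in range(rounds):
        # Only the trained weight and the sample count leave each client.
        updates = [(local_update(w, d), len(d)) for d in clients]
        w = sum(wi * n for wi, n in updates) / total
    return w

# Hypothetical private datasets on three devices, all following y = 3x.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0)],
    [(0.5, 1.5), (1.5, 4.5), (2.5, 7.5)],
]
w_global = federated_average(clients)
print(round(w_global, 4))  # 3.0
```

The raw `(x, y)` pairs never appear in the server function; in practice additional measures such as secure aggregation are often layered on top, since model updates themselves can leak information.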

Applications

There are many applications for machine learning, including:

- Agriculture

- Anatomy

- Adaptive websites

- Affective computing

- Banking

- Bioinformatics

- Brain–machine interfaces

- Cheminformatics

- Citizen science

- Computer networks

- Computer vision

- Credit-card fraud detection

- Data quality

- DNA sequence classification

- Economics

- Financial market analysis[59]

- General game playing

- Handwriting recognition

- Insurance

- Internet fraud detection

- Linguistics

- Machine learning control

- Machine perception

- Machine translation

- Marketing

- Medical diagnosis

- Natural language processing

- Online advertising

- Optimization

- Recommender systems

- Robot locomotion

- Search engines

- Sentiment analysis

- Sequence mining

- Software engineering

- Speech recognition

- Structural health monitoring

- Theorem proving

- Time series forecasting

- User behavior analytics

In 2006, the media-services provider Netflix held the first "Netflix Prize" competition to find a program to better predict user preferences and improve the accuracy of its existing Cinematch movie recommendation algorithm by at least 10%. A joint team made up of researchers from AT&T Labs-Research in collaboration with the teams Big Chaos and Pragmatic Theory built an ensemble model to win the $1 million Grand Prize in 2009.[60] Shortly after the prize was awarded, Netflix realized that viewers' ratings were not the best indicators of their viewing patterns ("everything is a recommendation") and changed its recommendation engine accordingly.[61] In 2010, The Wall Street Journal wrote about the firm Rebellion Research and its use of machine learning to predict the financial crisis.[62] In 2012, Vinod Khosla, co-founder of Sun Microsystems, predicted that 80% of medical doctors' jobs would be lost in the next two decades to automated machine learning medical diagnostic software.[63] In 2014, it was reported that a machine learning algorithm had been applied in the field of art history to study fine art paintings, and that it may have revealed previously unrecognized influences among artists.[64] In 2019, Springer Nature published the first research book created using machine learning.[65]

Limitations

Although machine learning has been transformative in some fields, machine-learning programs often fail to deliver expected results.[66][67][68] Reasons for this are numerous: lack of (suitable) data, lack of access to the data, data bias, privacy problems, badly chosen tasks and algorithms, wrong tools and people, lack of resources, and evaluation problems.[69]