Negentropy

In information theory and statistics, negentropy is used as a measure of distance to normality. The concept and phrase "negative entropy" were introduced by Erwin Schrödinger in his 1944 popular-science book What is Life?[1] Later, Léon Brillouin shortened the phrase to negentropy.[2][3] In 1974, Albert Szent-Györgyi proposed replacing the term negentropy with syntropy. That term may have originated in the 1940s with the Italian mathematician Luigi Fantappiè, who tried to construct a unified theory of biology and physics. Buckminster Fuller tried to popularize this usage, but negentropy remains the more common term.

In a note to What is Life?, Schrödinger explained his use of this phrase:

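"... if I had been catering for them [physicists] alone I should have let the discussion turn on free energy instead. It is the more familiar notion in this context. But this highly technical term seemed linguistically too near to energy for making the average reader alive to the contrast between the two things."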

In 2009, Mahulikar & Herwig redefined negentropy of a dynamically ordered sub-system as the specific entropy deficit of the ordered sub-system relative to its surrounding chaos.[4] Thus, negentropy has SI units of (J kg−1 K−1) when defined based on specific entropy per unit mass, and (K−1) when defined based on specific entropy per unit energy. This definition enabled: i) scale-invariant thermodynamic representation of dynamic order existence, ii) formulation of physical principles exclusively for dynamic order existence and evolution, and iii) mathematical interpretation of Schrödinger's negentropy debt.

Information theory

In information theory and statistics, negentropy is used as a measure of distance to normality.[5][6][7] Out of all distributions with a given mean and variance, the normal or Gaussian distribution is the one with the highest entropy. Negentropy measures the difference in entropy between a given distribution and the Gaussian distribution with the same mean and variance. Thus, negentropy is always nonnegative, is invariant under any invertible linear change of coordinates, and vanishes if and only if the signal is Gaussian.

Negentropy is defined as

- [math]\displaystyle{ J(p_x) = S(\varphi_x) - S(p_x)\, }[/math]

where [math]\displaystyle{ S(\varphi_x) }[/math] is the differential entropy of the Gaussian density with the same mean and variance as [math]\displaystyle{ p_x }[/math] and [math]\displaystyle{ S(p_x) }[/math] is the differential entropy of [math]\displaystyle{ p_x }[/math]:

- [math]\displaystyle{ S(p_x) = - \int p_x(u) \log p_x(u) \, du }[/math]
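As a rough numerical illustration of the definition, the sketch below estimates the differential entropy with a simple midpoint rule and subtracts it from the entropy of the variance-matched Gaussian. The three test densities, the integration limits, and all function names are illustrative assumptions rather than part of the definition. Entropies are in nats.

```python
import math

def differential_entropy(pdf, lo, hi, n=100_000):
    """Midpoint-rule estimate of S(p) = -∫ p(u) log p(u) du on [lo, hi], in nats."""
    h = (hi - lo) / n
    total = 0.0
    for i in range(n):
        p = pdf(lo + (i + 0.5) * h)
        if p > 0.0:  # p log p tends to 0 as p tends to 0
            total -= p * math.log(p) * h
    return total

def gaussian_entropy(variance):
    """Differential entropy of a Gaussian with the given variance, in nats."""
    return 0.5 * math.log(2.0 * math.pi * math.e * variance)

def negentropy(pdf, variance, lo, hi):
    """J(p) = S(phi) - S(p), where phi is the Gaussian with the variance of p."""
    return gaussian_entropy(variance) - differential_entropy(pdf, lo, hi)

# Illustrative densities (hypothetical helpers, not taken from the article).
def uniform_pdf(u):   # uniform on [0, 1], variance 1/12
    return 1.0 if 0.0 <= u <= 1.0 else 0.0

def laplace_pdf(u):   # Laplace with unit scale, variance 2
    return 0.5 * math.exp(-abs(u))

def normal_pdf(u):    # standard normal, variance 1
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)

j_uniform = negentropy(uniform_pdf, 1.0 / 12.0, 0.0, 1.0)
j_laplace = negentropy(laplace_pdf, 2.0, -40.0, 40.0)
j_normal = negentropy(normal_pdf, 1.0, -12.0, 12.0)

print(f"uniform: {j_uniform:.4f}")
print(f"Laplace: {j_laplace:.4f}")
print(f"normal:  {j_normal:.4f}")
```

The uniform and Laplace densities should give strictly positive values, while the Gaussian should give a value that is zero up to numerical error.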

Negentropy is used in statistics and signal processing. It is related to network entropy, which is used in independent component analysis.[8][9]

The negentropy of a distribution is equal to the Kullback–Leibler divergence between [math]\displaystyle{ p_x }[/math] and a Gaussian distribution with the same mean and variance as [math]\displaystyle{ p_x }[/math] (see Differential entropy#Maximization in the normal distribution for a proof). In particular, it is always nonnegative.
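The equality with the Kullback–Leibler divergence can be checked numerically. The following sketch does so for one example, a Laplace density with unit scale. The choice of density, the truncation of the integrals to [-40, 40], and the helper names are assumptions made for illustration.

```python
import math

def integrate(f, lo, hi, n=100_000):
    """Midpoint-rule estimate of the integral of f over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(f(lo + (i + 0.5) * h) for i in range(n))

VARIANCE = 2.0  # variance of a unit-scale Laplace density

def log_p(u):
    """Log-density of the Laplace distribution with zero mean and unit scale."""
    return math.log(0.5) - abs(u)

def log_phi(u):
    """Log-density of the Gaussian with the same mean (0) and variance (2)."""
    return -u * u / (2.0 * VARIANCE) - 0.5 * math.log(2.0 * math.pi * VARIANCE)

LO, HI = -40.0, 40.0  # the tails beyond this range are negligible

# Kullback-Leibler divergence D(p || phi) = ∫ p log(p / phi) du
kl = integrate(lambda u: math.exp(log_p(u)) * (log_p(u) - log_phi(u)), LO, HI)

# Negentropy J(p) = S(phi) - S(p)
s_p = -integrate(lambda u: math.exp(log_p(u)) * log_p(u), LO, HI)
s_phi = 0.5 * math.log(2.0 * math.pi * math.e * VARIANCE)
j = s_phi - s_p

print(f"KL divergence: {kl:.6f}")
print(f"negentropy:    {j:.6f}")
```

The two printed values should agree to within the integration error. The agreement relies on the Gaussian log-density being quadratic in u, so its expectation depends only on the mean and variance, which the two distributions share.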

Correlation between statistical negentropy and Gibbs' free energy

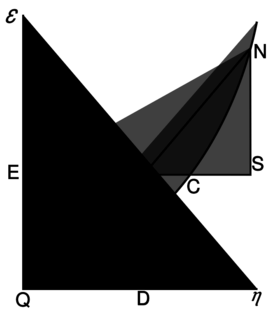

Willard Gibbs' 1873 available energy (free energy) graph, which shows a plane perpendicular to the axis of v (volume) and passing through point A, which represents the initial state of the body. MN is the section of the surface of dissipated energy. Qε and Qη are sections of the planes η = 0 and ε = 0, and therefore parallel to the axes of ε (internal energy) and η (entropy) respectively. AD and AE are the energy and entropy of the body in its initial state, AB and AC its available energy (Gibbs energy) and its capacity for entropy (the amount by which the entropy of the body can be increased without changing the energy of the body or increasing its volume) respectively.

There is a physical quantity closely linked to free energy (free enthalpy), with units of entropy and isomorphic to the negentropy known in statistics and information theory. In 1873, Willard Gibbs created a diagram illustrating the concept of free energy corresponding to free enthalpy. The diagram shows a quantity called the capacity for entropy: the amount by which the entropy of a body can be increased without changing its internal energy or increasing its volume.[10] In other words, it is the difference between the maximum entropy possible under the assumed conditions and the actual entropy. It corresponds exactly to the definition of negentropy adopted in statistics and information theory. A similar physical quantity was introduced in 1869 by Massieu for the isothermal process[11][12][13] (the two quantities differ only in sign) and later by Planck for the isothermal-isobaric process.[14] More recently, the Massieu–Planck thermodynamic potential, also known as free entropy, has been shown to play an important role in the so-called entropic formulation of statistical mechanics,[15] applied in, among other fields, molecular biology[16] and thermodynamic non-equilibrium processes.[17]

- [math]\displaystyle{ J = S_\max - S = -\Phi = -k \ln Z\, }[/math]

where:

- [math]\displaystyle{ S }[/math] is entropy

- [math]\displaystyle{ J }[/math] is negentropy (Gibbs "capacity for entropy")

- [math]\displaystyle{ \Phi }[/math] is the Massieu potential

- [math]\displaystyle{ Z }[/math] is the partition function

- [math]\displaystyle{ k }[/math] is the Boltzmann constant

In particular, mathematically the negentropy (the negative entropy function, in physics interpreted as free entropy) is the convex conjugate of LogSumExp (in physics interpreted as the free energy).
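The conjugacy can be probed numerically for a discrete distribution. The sketch below assumes a probability vector p with positive entries and checks that the quantity ⟨p, x⟩ − LogSumExp(x) reaches the negative entropy Σ pᵢ log pᵢ at x = log p, and that randomly drawn x do not exceed it. The vector p, the sampling range, and the function names are arbitrary illustrative choices.

```python
import math
import random

def logsumexp(x):
    """Numerically stable log(sum(exp(x_i)))."""
    m = max(x)
    return m + math.log(sum(math.exp(xi - m) for xi in x))

def negative_entropy(p):
    """sum_i p_i log p_i for a probability vector p with positive entries."""
    return sum(pi * math.log(pi) for pi in p)

def conjugate_objective(p, x):
    """The quantity <p, x> - LogSumExp(x), whose supremum over x is the conjugate."""
    return sum(pi * xi for pi, xi in zip(p, x)) - logsumexp(x)

p = [0.5, 0.3, 0.2]  # example probability vector (an arbitrary choice)
target = negative_entropy(p)

# The supremum is attained at x = log p (up to an additive constant).
at_optimum = conjugate_objective(p, [math.log(pi) for pi in p])

# No other x should do better; probe random points as a sanity check.
rng = random.Random(0)
best_random = max(
    conjugate_objective(p, [rng.uniform(-5.0, 5.0) for _ in p])
    for _ in range(10_000)
)

print(f"negative entropy:      {target:.6f}")
print(f"objective at x=log p:  {at_optimum:.6f}")
print(f"best of random probes: {best_random:.6f}")
```

A random search is only a sanity check, not a proof. The bound itself follows from Gibbs' inequality applied to p and the softmax of x.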

Brillouin's negentropy principle of information

In 1953, Léon Brillouin derived a general equation[18] stating that changing the value of one bit of information requires at least kT ln(2) of energy. This is the same energy as the work that Leó Szilárd's engine produces in the ideal case. In his book,[19] he explored this problem further, concluding that any cause of such a change in a bit's value (measurement, a decision about a yes/no question, erasure, display, etc.) requires the same amount of energy.
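To give a sense of scale, the bound kT ln(2) can be evaluated directly. The sketch below assumes a temperature of 300 K, which is an illustrative choice and not a value from the source. At that temperature the bound is roughly 2.87 × 10⁻²¹ J per bit, or about 0.018 eV.

```python
import math

BOLTZMANN_K = 1.380649e-23       # J/K, exact value in the SI since 2019
ELECTRON_VOLT = 1.602176634e-19  # J, exact value in the SI since 2019

def min_energy_per_bit(temperature_kelvin):
    """Lower bound k T ln(2), in joules, for changing one bit at temperature T."""
    return BOLTZMANN_K * temperature_kelvin * math.log(2.0)

T_ROOM = 300.0  # K, an assumed "room temperature" for illustration
energy_j = min_energy_per_bit(T_ROOM)

print(f"{energy_j:.3e} J per bit at {T_ROOM:.0f} K")
print(f"{energy_j / ELECTRON_VOLT:.4f} eV per bit")
```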

Notes

- Schrödinger, Erwin, What is Life – the Physical Aspect of the Living Cell, Cambridge University Press, 1944

- Brillouin, Leon: (1953) "Negentropy Principle of Information", J. of Applied Physics, v. 24(9), pp. 1152–1163

- Léon Brillouin, La science et la théorie de l'information, Masson, 1959

- Mahulikar, S.P. & Herwig, H.: (2009) "Exact thermodynamic principles for dynamic order existence and evolution in chaos", Chaos, Solitons & Fractals, v. 41(4), pp. 1939–1948

- Aapo Hyvärinen, Survey on Independent Component Analysis, node32: Negentropy, Helsinki University of Technology Laboratory of Computer and Information Science

- Aapo Hyvärinen and Erkki Oja, Independent Component Analysis: A Tutorial, node14: Negentropy, Helsinki University of Technology Laboratory of Computer and Information Science

- Ruye Wang, Independent Component Analysis, node4: Measures of Non-Gaussianity

- P. Comon, Independent Component Analysis – a new concept?, Signal Processing, 36 287–314, 1994.

- Didier G. Leibovici and Christian Beckmann, An introduction to Multiway Methods for Multi-Subject fMRI experiment, FMRIB Technical Report 2001, Oxford Centre for Functional Magnetic Resonance Imaging of the Brain (FMRIB), Department of Clinical Neurology, University of Oxford, John Radcliffe Hospital, Headley Way, Headington, Oxford, UK.

- Willard Gibbs, A Method of Geometrical Representation of the Thermodynamic Properties of Substances by Means of Surfaces, Transactions of the Connecticut Academy, 382–404 (1873)

- Massieu, M. F. (1869a). Sur les fonctions caractéristiques des divers fluides. C. R. Acad. Sci. LXIX:858–862.

- Massieu, M. F. (1869b). Addition au precedent memoire sur les fonctions caractéristiques. C. R. Acad. Sci. LXIX:1057–1061.

- Massieu, M. F. (1869), Compt. Rend. 69 (858): 1057.

- Planck, M. (1945). Treatise on Thermodynamics. Dover, New York.

- Antoni Planes, Eduard Vives, Entropic Formulation of Statistical Mechanics, Entropic variables and Massieu–Planck functions 2000-10-24 Universitat de Barcelona

- John A. Schellman, Temperature, Stability, and the Hydrophobic Interaction, Biophysical Journal 73 (December 1997), 2960–2964, Institute of Molecular Biology, University of Oregon, Eugene, Oregon 97403 USA

- Z. Hens and X. de Hemptinne, Non-equilibrium Thermodynamics approach to Transport Processes in Gas Mixtures, Department of Chemistry, Catholic University of Leuven, Celestijnenlaan 200 F, B-3001 Heverlee, Belgium

- Leon Brillouin, The negentropy principle of information, J. Applied Physics 24, 1152–1163 1953

- Leon Brillouin, Science and Information theory, Dover, 1956

Category:Entropy and information

Category:Negative concepts

Category:Statistical deviation and dispersion

Category:Thermodynamic entropy

This page was moved from wikipedia:en:Negentropy. Its edit history can be viewed at 负熵/edithistory