Conditional entropy

This entry was translated by Jie.

In information theory, the conditional entropy quantifies the amount of information needed to describe the outcome of a random variable [math]\displaystyle{ Y }[/math] given that the value of another random variable [math]\displaystyle{ X }[/math] is known. Here, information is measured in shannons, nats, or hartleys. The entropy of [math]\displaystyle{ Y }[/math] conditioned on [math]\displaystyle{ X }[/math] is written as [math]\displaystyle{ \Eta(Y|X) }[/math].

Definition

The conditional entropy of [math]\displaystyle{ Y }[/math] given [math]\displaystyle{ X }[/math] is defined as

[math]\displaystyle{ \Eta(Y|X) = -\sum_{x\in\mathcal X, y\in\mathcal Y}p(x,y)\log \frac {p(x,y)} {p(x)} }[/math] (Eq.1)

where [math]\displaystyle{ \mathcal X }[/math] and [math]\displaystyle{ \mathcal Y }[/math] denote the support sets of [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math].

Note: By convention, the expressions [math]\displaystyle{ 0 \log 0 }[/math] and [math]\displaystyle{ 0 \log c/0 }[/math] for fixed [math]\displaystyle{ c \gt 0 }[/math] are treated as equal to zero. This is because [math]\displaystyle{ \lim_{\theta\to0^+} \theta\, \log \,c/\theta = 0 }[/math] and [math]\displaystyle{ \lim_{\theta\to0^+} \theta\, \log \theta = 0 }[/math].[1]
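As a minimal sketch of how Eq.1 and the zero convention can be evaluated numerically, the following Python snippet computes the conditional entropy in bits from a joint probability table. The function name `conditional_entropy` and the example distribution are illustrative assumptions, not part of the source.

```python
import math

def conditional_entropy(joint):
    """H(Y|X) in bits from a joint pmf given as {(x, y): p(x, y)}, following Eq.1."""
    # Marginal p(x) = sum over y of p(x, y).
    p_x = {}
    for (x, _y), p in joint.items():
        p_x[x] = p_x.get(x, 0.0) + p

    total = 0.0
    for (x, _y), p in joint.items():
        # Convention 0 log 0 = 0: zero-probability pairs contribute nothing.
        if p > 0.0:
            total -= p * math.log2(p / p_x[x])
    return total

# Hypothetical joint distribution, chosen only for illustration.
joint = {(0, 0): 0.25, (0, 1): 0.25, (1, 0): 0.5, (1, 1): 0.0}
h = conditional_entropy(joint)
print(h)
```

In this example, Y is a fair coin when X = 0 and is fixed when X = 1, so the average uncertainty about Y once X is known is half a bit.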

Intuitive explanation of the definition: According to the definition, [math]\displaystyle{ \Eta(Y|X) = \mathbb{E}[f(X,Y)] }[/math], where [math]\displaystyle{ f : (x,y) \mapsto -\log p(y|x) }[/math]. The function [math]\displaystyle{ f }[/math] associates to [math]\displaystyle{ (x,y) }[/math] the information content of [math]\displaystyle{ (Y=y) }[/math] given [math]\displaystyle{ (X=x) }[/math], which is the amount of information needed to describe the event [math]\displaystyle{ (Y=y) }[/math] given [math]\displaystyle{ (X=x) }[/math]. By the law of large numbers, the arithmetic mean of a large number of independent realizations of [math]\displaystyle{ f(X,Y) }[/math] converges to [math]\displaystyle{ \Eta(Y|X) }[/math].
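The law-of-large-numbers reading can be illustrated with a small simulation. The sketch below assumes a hypothetical joint distribution, draws independent pairs from it with a fixed seed, and compares the sample mean of the information content with the exact expectation. All names are illustrative.

```python
import math
import random

# Hypothetical joint pmf p(x, y), chosen only for illustration.
joint = {(0, 0): 0.25, (0, 1): 0.25, (1, 0): 0.4, (1, 1): 0.1}

# Marginal p(x).
p_x = {}
for (x, _y), p in joint.items():
    p_x[x] = p_x.get(x, 0.0) + p

def f(x, y):
    """Information content of (Y = y) given (X = x), in bits: -log2 p(y|x)."""
    return -math.log2(joint[(x, y)] / p_x[x])

# Exact value: expectation of f(X, Y) under the joint distribution.
exact = sum(p * f(x, y) for (x, y), p in joint.items() if p > 0.0)

# Empirical value: arithmetic mean of f over independent draws of (X, Y).
rng = random.Random(0)
pairs = list(joint.keys())
weights = list(joint.values())
samples = rng.choices(pairs, weights=weights, k=200_000)
estimate = sum(f(x, y) for x, y in samples) / len(samples)

print(exact, estimate)
```

With this many samples the two numbers should agree to roughly two decimal places; the agreement tightens as the sample size grows.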

Motivation

Let [math]\displaystyle{ \Eta(Y|X=x) }[/math] be the entropy of the discrete random variable [math]\displaystyle{ Y }[/math] conditioned on the discrete random variable [math]\displaystyle{ X }[/math] taking a certain value [math]\displaystyle{ x }[/math]. Denote the support sets of [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] by [math]\displaystyle{ \mathcal X }[/math] and [math]\displaystyle{ \mathcal Y }[/math]. Let [math]\displaystyle{ Y }[/math] have probability mass function [math]\displaystyle{ p_Y{(y)} }[/math]. The unconditional entropy of [math]\displaystyle{ Y }[/math] is calculated as [math]\displaystyle{ \Eta(Y) := \mathbb{E}[\operatorname{I}(Y)] }[/math], i.e.

- [math]\displaystyle{ \Eta(Y) = \sum_{y\in\mathcal Y} {\mathrm{Pr}(Y=y)\,\mathrm{I}(y)} = -\sum_{y\in\mathcal Y} {p_Y(y) \log_2{p_Y(y)}}, }[/math]

where [math]\displaystyle{ \operatorname{I}(y) }[/math] is the information content of the outcome of [math]\displaystyle{ Y }[/math] taking the value [math]\displaystyle{ y }[/math]. The entropy of [math]\displaystyle{ Y }[/math] conditioned on [math]\displaystyle{ X }[/math] taking the value [math]\displaystyle{ x }[/math] is defined analogously by conditional expectation:

- [math]\displaystyle{ \Eta(Y|X=x) = -\sum_{y\in\mathcal Y} {\Pr(Y = y|X=x) \log_2{\Pr(Y = y|X=x)}}. }[/math]

Note that [math]\displaystyle{ \Eta(Y|X) }[/math] is the result of averaging [math]\displaystyle{ \Eta(Y|X=x) }[/math] over all possible values [math]\displaystyle{ x }[/math] that [math]\displaystyle{ X }[/math] may take. Also, if the above sum is taken over a sample [math]\displaystyle{ y_1, \dots, y_n }[/math], the expected value [math]\displaystyle{ E_X[ \Eta(y_1, \dots, y_n \mid X = x)] }[/math] is known in some domains as equivocation.[2]

Given discrete random variables [math]\displaystyle{ X }[/math] with image [math]\displaystyle{ \mathcal X }[/math] and [math]\displaystyle{ Y }[/math] with image [math]\displaystyle{ \mathcal Y }[/math], the conditional entropy of [math]\displaystyle{ Y }[/math] given [math]\displaystyle{ X }[/math] is defined as the weighted sum of [math]\displaystyle{ \Eta(Y|X=x) }[/math] for each possible value of [math]\displaystyle{ x }[/math], using [math]\displaystyle{ p(x) }[/math] as the weights:[3]:15

- [math]\displaystyle{ \begin{align} \Eta(Y|X)\ &\equiv \sum_{x\in\mathcal X}\,p(x)\,\Eta(Y|X=x)\\ & =-\sum_{x\in\mathcal X} p(x)\sum_{y\in\mathcal Y}\,p(y|x)\,\log\, p(y|x)\\ & =-\sum_{x\in\mathcal X}\sum_{y\in\mathcal Y}\,p(x,y)\,\log\,p(y|x)\\ & =-\sum_{x\in\mathcal X, y\in\mathcal Y}p(x,y)\log\,p(y|x)\\ & =-\sum_{x\in\mathcal X, y\in\mathcal Y}p(x,y)\log \frac {p(x,y)} {p(x)} \\ & = \sum_{x\in\mathcal X, y\in\mathcal Y}p(x,y)\log \frac {p(x)} {p(x,y)}. \end{align} }[/math]
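The equality between the weighted-sum form and the joint-sum form can be checked numerically. The following sketch assumes a hypothetical joint distribution and computes both sides in bits. The helper names are illustrative.

```python
import math

# Hypothetical joint pmf p(x, y) over X in {"a", "b"} and Y in {0, 1, 2}.
joint = {
    ("a", 0): 0.10, ("a", 1): 0.20, ("a", 2): 0.10,
    ("b", 0): 0.30, ("b", 1): 0.00, ("b", 2): 0.30,
}

def marginal_x(joint):
    """Marginal p(x) obtained by summing p(x, y) over y."""
    p_x = {}
    for (x, _y), p in joint.items():
        p_x[x] = p_x.get(x, 0.0) + p
    return p_x

def entropy_given_x(joint, x):
    """H(Y | X = x) in bits, from the conditional pmf p(y|x) = p(x, y) / p(x)."""
    px = marginal_x(joint)[x]
    h = 0.0
    for (xi, _y), p in joint.items():
        if xi == x and p > 0.0:
            h -= (p / px) * math.log2(p / px)
    return h

p_x = marginal_x(joint)

# First line of the derivation: sum over x of p(x) * H(Y | X = x).
weighted = sum(p_x[x] * entropy_given_x(joint, x) for x in p_x)

# Second-to-last line: -sum over (x, y) of p(x, y) * log(p(x, y) / p(x)).
direct = -sum(p * math.log2(p / p_x[x]) for (x, _y), p in joint.items() if p > 0.0)

print(weighted, direct)
```

Here H(Y|X = a) is 1.5 bits and H(Y|X = b) is 1 bit, so both forms give 0.4 × 1.5 + 0.6 × 1 = 1.2 bits.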

Properties

Conditional entropy equals zero

[math]\displaystyle{ \Eta(Y|X)=0 }[/math] if and only if the value of [math]\displaystyle{ Y }[/math] is completely determined by the value of [math]\displaystyle{ X }[/math].

Conditional entropy of independent random variables

Conversely, [math]\displaystyle{ \Eta(Y|X) = \Eta(Y) }[/math] if and only if [math]\displaystyle{ Y }[/math] and [math]\displaystyle{ X }[/math] are independent random variables.
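Both boundary cases can be illustrated with small examples. The sketch below assumes two hypothetical joint distributions: one in which Y is a function of X, and one built as a product of marginals. The helper names are illustrative.

```python
import math
from itertools import product

def entropy(pmf):
    """Entropy in bits of a pmf given as {value: probability}."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0.0)

def conditional_entropy(joint):
    """H(Y|X) in bits from a joint pmf {(x, y): p(x, y)}."""
    p_x = {}
    for (x, _y), p in joint.items():
        p_x[x] = p_x.get(x, 0.0) + p
    return -sum(p * math.log2(p / p_x[x]) for (x, _y), p in joint.items() if p > 0.0)

# Case 1: X uniform on {0, 1, 2, 3} and Y = X mod 2, so Y is determined by X.
deterministic = {(x, x % 2): 0.25 for x in range(4)}

# Case 2: X and Y independent, so p(x, y) = p(x) * p(y).
p_x = {0: 0.3, 1: 0.7}
p_y = {0: 0.5, 1: 0.25, 2: 0.25}
independent = {(x, y): p_x[x] * p_y[y] for x, y in product(p_x, p_y)}

h_det = conditional_entropy(deterministic)
h_ind = conditional_entropy(independent)
h_y = entropy(p_y)

print(h_det, h_ind, h_y)
```

In the first case knowing X leaves no uncertainty about Y; in the second, knowing X does not reduce the uncertainty about Y at all.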

Chain rule

Assume that the combined system determined by two random variables [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] has joint entropy [math]\displaystyle{ \Eta(X,Y) }[/math], that is, we need [math]\displaystyle{ \Eta(X,Y) }[/math] bits of information on average to describe its exact state. Now if we first learn the value of [math]\displaystyle{ X }[/math], we have gained [math]\displaystyle{ \Eta(X) }[/math] bits of information. Once [math]\displaystyle{ X }[/math] is known, we only need [math]\displaystyle{ \Eta(X,Y)-\Eta(X) }[/math] bits to describe the state of the whole system. This quantity is exactly [math]\displaystyle{ \Eta(Y|X) }[/math], which gives the chain rule of conditional entropy:

- [math]\displaystyle{ \Eta(Y|X)\, = \, \Eta(X,Y)- \Eta(X). }[/math][3]:17

The chain rule follows from the above definition of conditional entropy:

- [math]\displaystyle{ \begin{align} \Eta(Y|X) &= \sum_{x\in\mathcal X, y\in\mathcal Y}p(x,y)\log \left(\frac{p(x)}{p(x,y)} \right) \\[4pt] &= \sum_{x\in\mathcal X, y\in\mathcal Y}p(x,y)(\log (p(x))-\log (p(x,y))) \\[4pt] &= -\sum_{x\in\mathcal X, y\in\mathcal Y}p(x,y)\log (p(x,y)) + \sum_{x\in\mathcal X, y\in\mathcal Y}{p(x,y)\log(p(x))} \\[4pt] & = \Eta(X,Y) + \sum_{x \in \mathcal X} p(x)\log (p(x) ) \\[4pt] & = \Eta(X,Y) - \Eta(X). \end{align} }[/math]
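As a numerical sanity check of the chain rule, the sketch below assumes a hypothetical joint distribution, computes the conditional entropy directly from the conditional probabilities, and compares it with the difference of joint and marginal entropies. All names are illustrative.

```python
import math

def entropy(probabilities):
    """Entropy in bits of an iterable of probabilities."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0.0)

# Hypothetical joint pmf p(x, y), chosen only for illustration.
joint = {(0, 0): 0.125, (0, 1): 0.375, (1, 0): 0.25, (1, 1): 0.25}

# Marginal p(x).
p_x = {}
for (x, _y), p in joint.items():
    p_x[x] = p_x.get(x, 0.0) + p

h_xy = entropy(joint.values())   # joint entropy H(X, Y)
h_x = entropy(p_x.values())      # marginal entropy H(X)

# Conditional entropy computed directly from p(y|x) = p(x, y) / p(x).
h_y_given_x = -sum(
    p * math.log2(p / p_x[x]) for (x, _y), p in joint.items() if p > 0.0
)

print(h_y_given_x, h_xy - h_x)
```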

In general, a chain rule for multiple random variables holds:

- [math]\displaystyle{ \Eta(X_1,X_2,\ldots,X_n) = \sum_{i=1}^n \Eta(X_i | X_1, \ldots, X_{i-1}) }[/math][3]:22
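The general chain rule can be checked for three variables with a short sketch. The joint distribution below is a hypothetical one over three binary variables, and each conditional term is computed directly from conditional probabilities rather than as a difference of joint entropies. The helper names are illustrative.

```python
import math
from itertools import product

# Hypothetical joint pmf over three binary variables (weights normalized to sum to 1).
weights = [1, 2, 3, 4, 5, 6, 7, 8]
outcomes = list(product([0, 1], repeat=3))
joint = {o: w / sum(weights) for o, w in zip(outcomes, weights)}

def marginal(joint, k):
    """Marginal pmf of the first k coordinates (X_1, ..., X_k)."""
    out = {}
    for outcome, p in joint.items():
        out[outcome[:k]] = out.get(outcome[:k], 0.0) + p
    return out

def conditional_term(joint, i):
    """H(X_i | X_1, ..., X_{i-1}) in bits, from p(x_i | x_1, ..., x_{i-1})."""
    upto = marginal(joint, i)        # p(x_1, ..., x_i)
    before = marginal(joint, i - 1)  # p(x_1, ..., x_{i-1}); for i = 1 a single empty prefix
    return -sum(
        p * math.log2(p / before[prefix[:-1]])
        for prefix, p in upto.items()
        if p > 0.0
    )

joint_entropy = -sum(p * math.log2(p) for p in joint.values() if p > 0.0)
chain_sum = sum(conditional_term(joint, i) for i in range(1, 4))

print(joint_entropy, chain_sum)
```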

It has a similar form to the chain rule in probability theory, except that addition is used instead of multiplication.

Bayes' rule

Bayes' rule for conditional entropy states

- [math]\displaystyle{ \Eta(Y|X) \,=\, \Eta(X|Y) - \Eta(X) + \Eta(Y). }[/math]

Proof. [math]\displaystyle{ \Eta(Y|X) = \Eta(X,Y) - \Eta(X) }[/math] and [math]\displaystyle{ \Eta(X|Y) = \Eta(Y,X) - \Eta(Y) }[/math]. Symmetry entails [math]\displaystyle{ \Eta(X,Y) = \Eta(Y,X) }[/math]. Subtracting the two equations implies Bayes' rule.
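Written out step by step, the subtraction in the proof reads as follows. This is only a restatement of the argument above, using the chain rule and the symmetry of joint entropy:

- [math]\displaystyle{ \begin{align} \Eta(Y|X) - \Eta(X|Y) &= \bigl(\Eta(X,Y) - \Eta(X)\bigr) - \bigl(\Eta(Y,X) - \Eta(Y)\bigr) \\ &= \Eta(Y) - \Eta(X), \end{align} }[/math]

and adding [math]\displaystyle{ \Eta(X|Y) }[/math] to both sides gives [math]\displaystyle{ \Eta(Y|X) = \Eta(X|Y) - \Eta(X) + \Eta(Y) }[/math].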

If [math]\displaystyle{ Y }[/math] is conditionally independent of [math]\displaystyle{ Z }[/math] given [math]\displaystyle{ X }[/math] we have:

- [math]\displaystyle{ \Eta(Y|X,Z) \,=\, \Eta(Y|X). }[/math]
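The conditional-independence property can be illustrated by constructing a joint distribution of the form p(x, y, z) = p(x) p(y|x) p(z|x), which makes Y and Z conditionally independent given X by construction. The sketch below uses hypothetical probability tables and an illustrative helper `conditional_entropy` that conditions on arbitrary coordinates.

```python
import math
from itertools import product

# Hypothetical tables; the factorization below enforces Y independent of Z given X.
p_x = {0: 0.4, 1: 0.6}
p_y_given_x = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.2, 1: 0.8}}
p_z_given_x = {0: {0: 0.5, 1: 0.5}, 1: {0: 0.3, 1: 0.7}}

# Joint pmf over outcomes (x, y, z): p(x, y, z) = p(x) p(y|x) p(z|x).
joint = {
    (x, y, z): p_x[x] * p_y_given_x[x][y] * p_z_given_x[x][z]
    for x, y, z in product([0, 1], repeat=3)
}

def conditional_entropy(joint, target, given):
    """H(target | given) in bits; target and given are tuples of coordinate indices."""
    p_both, p_given = {}, {}
    for outcome, p in joint.items():
        g = tuple(outcome[i] for i in given)
        t = tuple(outcome[i] for i in target)
        p_both[(t, g)] = p_both.get((t, g), 0.0) + p
        p_given[g] = p_given.get(g, 0.0) + p
    return -sum(
        p * math.log2(p / p_given[g]) for (_t, g), p in p_both.items() if p > 0.0
    )

h_y_given_xz = conditional_entropy(joint, target=(1,), given=(0, 2))
h_y_given_x = conditional_entropy(joint, target=(1,), given=(0,))

print(h_y_given_xz, h_y_given_x)
```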

Other properties

For any [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math]:

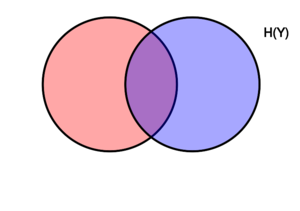

- [math]\displaystyle{ \begin{align} \Eta(Y|X) &\le \Eta(Y) \, \\ \Eta(X,Y) &= \Eta(X|Y) + \Eta(Y|X) + \operatorname{I}(X;Y),\qquad \\ \Eta(X,Y) &= \Eta(X) + \Eta(Y) - \operatorname{I}(X;Y),\, \\ \operatorname{I}(X;Y) &\le \Eta(X),\, \end{align} }[/math]

where [math]\displaystyle{ \operatorname{I}(X;Y) }[/math] is the mutual information between [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math].

For independent [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math]:

- [math]\displaystyle{ \Eta(Y|X) = \Eta(Y) }[/math] and [math]\displaystyle{ \Eta(X|Y) = \Eta(X) \, }[/math]

Although the specific-conditional entropy [math]\displaystyle{ \Eta(X|Y=y) }[/math] can be either less or greater than [math]\displaystyle{ \Eta(X) }[/math] for a given random variate [math]\displaystyle{ y }[/math] of [math]\displaystyle{ Y }[/math], [math]\displaystyle{ \Eta(X|Y) }[/math] can never exceed [math]\displaystyle{ \Eta(X) }[/math].
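A small example makes the distinction concrete. The sketch below assumes a hypothetical joint distribution in which one value of Y leaves X more uncertain than it was a priori, while the average over Y still does not exceed the unconditional entropy. All names are illustrative.

```python
import math

def entropy(probabilities):
    """Entropy in bits of an iterable of probabilities."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0.0)

# Hypothetical joint pmf p(x, y), keyed by (x, y).
joint = {(1, 1): 0.0, (2, 1): 0.75, (1, 2): 0.125, (2, 2): 0.125}

# Marginals p(x) and p(y).
p_x, p_y = {}, {}
for (x, y), p in joint.items():
    p_x[x] = p_x.get(x, 0.0) + p
    p_y[y] = p_y.get(y, 0.0) + p

h_x = entropy(p_x.values())

# Specific conditional entropies H(X | Y = y), one per value of y.
h_x_given = {
    y: entropy(joint[(x, y)] / p_y[y] for x in p_x)
    for y in p_y
}

# Conditional entropy H(X | Y): average of the specific values, weighted by p(y).
h_x_given_y = sum(p_y[y] * h_x_given[y] for y in p_y)

print(h_x, h_x_given, h_x_given_y)
```

Here H(X) is about 0.544 bits. Observing Y = 1 removes all uncertainty about X, observing Y = 2 raises it to 1 bit, and the average H(X|Y) is 0.25 bits.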

Conditional differential entropy

Definition

The above definition is for discrete random variables. The continuous version of discrete conditional entropy is called conditional differential (or continuous) entropy. Let [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] be continuous random variables with a joint probability density function [math]\displaystyle{ f(x,y) }[/math]. The conditional differential entropy [math]\displaystyle{ h(X|Y) }[/math] is defined as[3]:249

[math]\displaystyle{ h(X|Y) = -\int_{\mathcal X, \mathcal Y} f(x,y)\log f(x|y)\,dx dy }[/math] (Eq.2)

Properties

In contrast to the conditional entropy for discrete random variables, the conditional differential entropy may be negative.
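A strongly correlated Gaussian pair gives a simple case with a negative value. The sketch below assumes a zero-mean bivariate Gaussian with unit variances and correlation 0.99 (an illustrative choice), estimates the integral in Eq.2 by Monte Carlo with natural logarithms, and compares it with the standard closed form for a Gaussian conditional distribution.

```python
import math
import random

# Assumed model: (X, Y) zero-mean bivariate Gaussian, unit variances, correlation rho.
rho = 0.99
cond_var = 1.0 - rho ** 2  # Var(X | Y = y) when both variances are 1

def neg_log_cond_density(x, y):
    """-ln f(x|y), where X | Y = y is Gaussian with mean rho*y and variance cond_var."""
    return 0.5 * math.log(2.0 * math.pi * cond_var) + (x - rho * y) ** 2 / (2.0 * cond_var)

rng = random.Random(0)
n = 100_000
total = 0.0
for _ in range(n):
    y = rng.gauss(0.0, 1.0)
    x = rho * y + math.sqrt(cond_var) * rng.gauss(0.0, 1.0)  # draw X given Y = y
    total += neg_log_cond_density(x, y)

estimate = total / n  # Monte Carlo value of Eq.2, in nats
closed_form = 0.5 * math.log(2.0 * math.pi * math.e * cond_var)

print(estimate, closed_form)
```

Both numbers come out near -0.54 nats: once Y is known, X is concentrated on an interval much shorter than one unit, which is what a negative differential entropy reflects.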

As in the discrete case there is a chain rule for differential entropy:

- [math]\displaystyle{ h(Y|X)\,=\,h(X,Y)-h(X) }[/math][3]:253

Notice however that this rule may not be true if the involved differential entropies do not exist or are infinite.
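For a case where all the entropies exist, the chain rule can be checked with closed forms. The sketch below assumes a zero-mean bivariate Gaussian with illustrative parameters and uses the standard Gaussian entropy formulas in nats; the conditional term is computed from the conditional variance of Y given X, not as a difference.

```python
import math

# Assumed parameters of a zero-mean bivariate Gaussian (illustrative values).
sigma_x, sigma_y, rho = 2.0, 0.5, 0.6

two_pi_e = 2.0 * math.pi * math.e

# Determinant of the covariance matrix [[sx^2, rho*sx*sy], [rho*sx*sy, sy^2]].
det_cov = (sigma_x * sigma_y) ** 2 * (1.0 - rho ** 2)

h_xy = 0.5 * math.log(two_pi_e ** 2 * det_cov)   # joint differential entropy h(X, Y)
h_x = 0.5 * math.log(two_pi_e * sigma_x ** 2)    # marginal differential entropy h(X)

# Y | X = x is Gaussian with variance sigma_y^2 * (1 - rho^2), whatever the value of x.
h_y_given_x = 0.5 * math.log(two_pi_e * sigma_y ** 2 * (1.0 - rho ** 2))

print(h_y_given_x, h_xy - h_x)
```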

Conditional differential entropy is also used in the definition of the mutual information between continuous random variables:

- [math]\displaystyle{ \operatorname{I}(X;Y)=h(X)-h(X|Y)=h(Y)-h(Y|X) }[/math]

[math]\displaystyle{ h(X|Y) \le h(X) }[/math] with equality if and only if [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] are independent.[3]:253

Relation to estimator error

The conditional differential entropy yields a lower bound on the expected squared error of an estimator. For any random variable [math]\displaystyle{ X }[/math], observation [math]\displaystyle{ Y }[/math] and estimator [math]\displaystyle{ \widehat{X} }[/math], the following holds, with [math]\displaystyle{ h(X|Y) }[/math] measured in nats:[3]:255

- [math]\displaystyle{ \mathbb{E}\left[\bigl(X - \widehat{X}{(Y)}\bigr)^2\right] \ge \frac{1}{2\pi e}e^{2h(X|Y)} }[/math]

This is related to the uncertainty principle from quantum mechanics.
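The bound can be illustrated in the Gaussian case, where the conditional-mean estimator attains it with equality. The sketch below assumes a zero-mean bivariate Gaussian with illustrative parameters and restricts attention to linear estimators of the form a·Y, whose mean squared error has a closed form. All names are illustrative.

```python
import math

# Assumed parameters of a zero-mean bivariate Gaussian (illustrative values).
sigma_x, sigma_y, rho = 1.5, 2.0, 0.8

# h(X|Y) in nats: X | Y = y is Gaussian with variance sigma_x^2 * (1 - rho^2).
h_x_given_y = 0.5 * math.log(2.0 * math.pi * math.e * sigma_x ** 2 * (1.0 - rho ** 2))

# Right-hand side of the inequality.
bound = math.exp(2.0 * h_x_given_y) / (2.0 * math.pi * math.e)

def mse_linear(a):
    """E[(X - a*Y)^2] for the zero-mean Gaussian pair above."""
    return sigma_x ** 2 - 2.0 * a * rho * sigma_x * sigma_y + (a * sigma_y) ** 2

a_best = rho * sigma_x / sigma_y  # coefficient of the conditional-mean estimator E[X|Y]
candidates = [0.0, 0.3, a_best, 1.0]
errors = [mse_linear(a) for a in candidates]

print(bound, errors)
```

With these values the bound is 0.81, which equals the error of the conditional-mean estimator; the other coefficients give strictly larger errors.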

Generalization to quantum theory

In quantum information theory, the conditional entropy is generalized to the conditional quantum entropy. The latter can take negative values, unlike its classical counterpart.

See also

- Entropy (information theory)

- Mutual information

- Conditional quantum entropy

- Variation of information

- Entropy power inequality

- Likelihood function

References

- [1] "David MacKay: Information Theory, Pattern Recognition and Neural Networks: The Book". www.inference.org.uk. Retrieved 2019-10-25.

- [2] Hellman, M.; Raviv, J. (1970). "Probability of error, equivocation, and the Chernoff bound". IEEE Transactions on Information Theory. 16 (4): 368–372.

- [3] Cover, T.; Thomas, J. (1991). Elements of Information Theory. ISBN 0-471-06259-6. https://archive.org/details/elementsofinform0000cove.