Joint entropy

This entry was translated by Jie.

In information theory, joint entropy is a measure of the uncertainty associated with a set of random variables.[1]

Definition

The joint Shannon entropy (in bits) of two discrete random variables [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math], taking values in the sets [math]\displaystyle{ \mathcal X }[/math] and [math]\displaystyle{ \mathcal Y }[/math], is defined as[2]:16

[math]\displaystyle{ H(X,Y) = -\sum_{x\in\mathcal X} \sum_{y\in\mathcal Y} P(x,y) \log_2[P(x,y)] }[/math] (Eq.1)

where [math]\displaystyle{ x }[/math] and [math]\displaystyle{ y }[/math] are particular values of [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math], respectively, [math]\displaystyle{ P(x,y) }[/math] is the joint probability of these values occurring together, and [math]\displaystyle{ P(x,y) \log_2[P(x,y)] }[/math] is defined to be 0 if [math]\displaystyle{ P(x,y)=0 }[/math].
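As a minimal sketch of Eq.1, the following Python snippet sums over a joint probability table. The function name `joint_entropy`, the dictionary representation of the distribution, and the example probabilities are illustrative assumptions, not part of the cited sources.

```python
import math

def joint_entropy(joint_pmf):
    """Joint Shannon entropy in bits.

    joint_pmf maps each outcome tuple, e.g. (x, y), to its joint probability.
    """
    total = 0.0
    for p in joint_pmf.values():
        if p > 0:  # convention: P * log2(P) is taken to be 0 when P = 0
            total -= p * math.log2(p)
    return total

# Hypothetical example: two binary variables that agree 75% of the time.
pmf = {(0, 0): 0.375, (0, 1): 0.125, (1, 0): 0.125, (1, 1): 0.375}
h_xy = joint_entropy(pmf)

# Four equally likely outcomes carry exactly 2 bits.
uniform = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}
h_uniform = joint_entropy(uniform)
```

Because the keys are arbitrary tuples, the same function also handles outcome tuples of more than two values.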

For more than two random variables [math]\displaystyle{ X_1, ..., X_n }[/math] this expands to

[math]\displaystyle{ H(X_1, ..., X_n) = -\sum_{x_1 \in\mathcal X_1} ... \sum_{x_n \in\mathcal X_n} P(x_1, ..., x_n) \log_2[P(x_1, ..., x_n)] }[/math] (Eq.2)

where [math]\displaystyle{ x_1,...,x_n }[/math] are particular values of [math]\displaystyle{ X_1,...,X_n }[/math], respectively, [math]\displaystyle{ P(x_1, ..., x_n) }[/math] is the probability of these values occurring together, and [math]\displaystyle{ P(x_1, ..., x_n) \log_2[P(x_1, ..., x_n)] }[/math] is defined to be 0 if [math]\displaystyle{ P(x_1, ..., x_n)=0 }[/math].

Properties

Nonnegativity

The joint entropy of a set of random variables is a nonnegative number.

- [math]\displaystyle{ H(X,Y) \geq 0 }[/math]

- [math]\displaystyle{ H(X_1,\ldots, X_n) \geq 0 }[/math]

Greater than or equal to individual entropies

The joint entropy of a set of variables is greater than or equal to the maximum of the individual entropies of the variables in the set.

- [math]\displaystyle{ H(X,Y) \geq \max \left[H(X),H(Y) \right] }[/math]

- [math]\displaystyle{ H \bigl(X_1,\ldots, X_n \bigr) \geq \max_{1 \le i \le n} \Bigl\{H\bigl(X_i\bigr) \Bigr\} }[/math]

Less than or equal to the sum of individual entropies

The joint entropy of a set of variables is less than or equal to the sum of the individual entropies of the variables in the set. This is an example of subadditivity. For two variables, equality holds if and only if [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] are statistically independent.[2]:30

- [math]\displaystyle{ H(X,Y) \leq H(X) + H(Y) }[/math]

- [math]\displaystyle{ H(X_1,\ldots, X_n) \leq H(X_1) + \ldots + H(X_n) }[/math]
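The bounds in this section can be checked numerically. The sketch below is illustrative only: the helper names `entropy` and `marginal` and both example distributions are assumptions chosen for the demonstration.

```python
import math
from collections import defaultdict

def entropy(pmf):
    """Shannon entropy in bits; zero-probability outcomes contribute 0."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

def marginal(joint_pmf, axis):
    """Marginal distribution of the variable at position `axis` of each outcome tuple."""
    out = defaultdict(float)
    for outcome, p in joint_pmf.items():
        out[outcome[axis]] += p
    return dict(out)

# Hypothetical dependent pair (X, Y).
joint = {(0, 0): 0.5, (0, 1): 0.25, (1, 0): 0.0, (1, 1): 0.25}
h_xy = entropy(joint)
h_x = entropy(marginal(joint, 0))
h_y = entropy(marginal(joint, 1))

# 0 <= max(H(X), H(Y)) <= H(X,Y) <= H(X) + H(Y)
bounds_hold = 0 <= max(h_x, h_y) <= h_xy <= h_x + h_y

# An independent pair with the same marginals attains the upper bound.
p_x, p_y = marginal(joint, 0), marginal(joint, 1)
independent = {(x, y): p_x[x] * p_y[y] for x in p_x for y in p_y}
gap = h_x + h_y - entropy(independent)  # zero up to rounding
```

For the dependent table, the joint entropy is 1.5 bits, strictly between the largest marginal entropy (1 bit) and the sum of the marginal entropies.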

Relations to other entropy measures

Joint entropy is used in the definition of conditional entropy:[2]:22

- [math]\displaystyle{ H(X|Y) = H(X,Y) - H(Y)\, }[/math],

and [math]\displaystyle{ H(X_1,\dots,X_n) = \sum_{k=1}^n H(X_k|X_{k-1},\dots, X_1) }[/math]

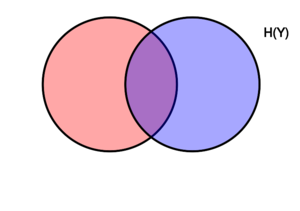

It is also used in the definition of mutual information:[2]:21

- [math]\displaystyle{ \operatorname{I}(X;Y) = H(X) + H(Y) - H(X,Y)\, }[/math]
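A short sketch of how these two identities can be evaluated from a joint probability table. The helper names and the example distribution are hypothetical; `conditional_entropy_direct` is included only to cross-check the identity [math]\displaystyle{ H(X|Y) = H(X,Y) - H(Y) }[/math] against the direct sum [math]\displaystyle{ -\sum P(x,y)\log_2 P(x|y) }[/math].

```python
import math
from collections import defaultdict

def entropy(pmf):
    """Shannon entropy in bits; zero-probability outcomes contribute 0."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

def marginal(joint_pmf, axis):
    """Marginal distribution of the variable at position `axis`."""
    out = defaultdict(float)
    for outcome, p in joint_pmf.items():
        out[outcome[axis]] += p
    return dict(out)

def conditional_entropy_direct(joint_pmf):
    """H(X|Y) computed directly as -sum P(x,y) * log2 P(x|y)."""
    p_y = marginal(joint_pmf, 1)
    total = 0.0
    for (x, y), p in joint_pmf.items():
        if p > 0:
            total -= p * math.log2(p / p_y[y])
    return total

# Hypothetical joint distribution of (X, Y).
joint = {(0, 0): 0.5, (0, 1): 0.25, (1, 0): 0.0, (1, 1): 0.25}
h_xy = entropy(joint)
h_x = entropy(marginal(joint, 0))
h_y = entropy(marginal(joint, 1))

h_x_given_y = h_xy - h_y               # H(X|Y) = H(X,Y) - H(Y)
mutual_information = h_x + h_y - h_xy  # I(X;Y) = H(X) + H(Y) - H(X,Y)
```

For this table the identity gives H(X|Y) = 0.5 bits, which matches the direct computation.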

In quantum information theory, the joint entropy is generalized into the joint quantum entropy.

Applications

A Python package is available for computing all multivariate joint entropies, mutual informations, conditional mutual informations, total correlations, and information distances in a dataset of n variables.[3]

Joint differential entropy

Definition

The above definition is for discrete random variables; an analogous definition applies to continuous random variables. The continuous version of discrete joint entropy is called joint differential (or continuous) entropy. Let [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] be continuous random variables with a joint probability density function [math]\displaystyle{ f(x,y) }[/math]. The differential joint entropy [math]\displaystyle{ h(X,Y) }[/math] is defined as[2]:249

[math]\displaystyle{ h(X,Y) = -\int_{\mathcal X , \mathcal Y} f(x,y)\log f(x,y)\,dx dy }[/math] (Eq.3)

For more than two continuous random variables [math]\displaystyle{ X_1, ..., X_n }[/math] the definition is generalized to:

[math]\displaystyle{ h(X_1, \ldots,X_n) = -\int f(x_1, \ldots,x_n)\log f(x_1, \ldots,x_n)\,dx_1 \ldots dx_n }[/math] (Eq.4)

The integral is taken over the support of [math]\displaystyle{ f }[/math]. The integral may fail to exist, in which case the differential entropy is said to be undefined.
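To make Eq.3 concrete, the sketch below approximates the double integral with a midpoint rule for a standard bivariate normal density and compares it with the well-known closed form [math]\displaystyle{ \tfrac{1}{2}\log\bigl((2\pi e)^2 (1-\rho^2)\bigr) }[/math] for unit variances. The choice of density, the natural logarithm (result in nats), the correlation value, the integration box, and the function names are all assumptions made for illustration.

```python
import math

def gaussian_density(x, y, rho):
    """Density of a bivariate normal with zero means, unit variances, correlation rho."""
    norm = 2 * math.pi * math.sqrt(1 - rho ** 2)
    quad = (x * x - 2 * rho * x * y + y * y) / (1 - rho ** 2)
    return math.exp(-0.5 * quad) / norm

def joint_differential_entropy(density, lo, hi, steps):
    """Midpoint-rule approximation of -∫∫ f log f dx dy over [lo, hi]^2, in nats."""
    d = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        x = lo + (i + 0.5) * d
        for j in range(steps):
            y = lo + (j + 0.5) * d
            f = density(x, y)
            if f > 0:  # points outside the support contribute nothing
                total -= f * math.log(f) * d * d
    return total

rho = 0.6  # hypothetical correlation coefficient
numeric = joint_differential_entropy(
    lambda x, y: gaussian_density(x, y, rho), -8.0, 8.0, 400
)
closed_form = 0.5 * math.log((2 * math.pi * math.e) ** 2 * (1 - rho ** 2))
```

The box [-8, 8]² truncates the support, which is acceptable here only because the Gaussian tails beyond it are negligible; a heavier-tailed density would need a different treatment.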

Properties

As in the discrete case, the joint differential entropy of a set of random variables is less than or equal to the sum of the entropies of the individual random variables:

- [math]\displaystyle{ h(X_1,X_2, \ldots,X_n) \le \sum_{i=1}^n h(X_i) }[/math][2]:253

The following chain rule holds for two random variables:

- [math]\displaystyle{ h(X,Y) = h(X|Y) + h(Y) }[/math]

In the case of more than two random variables this generalizes to:[2]:253

- [math]\displaystyle{ h(X_1,X_2, \ldots,X_n) = \sum_{i=1}^n h(X_i|X_1,X_2, \ldots,X_{i-1}) }[/math]

Joint differential entropy is also used in the definition of the mutual information between continuous random variables:

- [math]\displaystyle{ \operatorname{I}(X;Y)=h(X)+h(Y)-h(X,Y) }[/math]
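As a worked illustration of this identity (a standard textbook case, stated here under assumptions rather than taken from the text above), suppose [math]\displaystyle{ X }[/math] and [math]\displaystyle{ Y }[/math] are jointly Gaussian with variances [math]\displaystyle{ \sigma_X^2 }[/math] and [math]\displaystyle{ \sigma_Y^2 }[/math] and correlation coefficient [math]\displaystyle{ \rho }[/math] with [math]\displaystyle{ |\rho| \lt 1 }[/math], and that logarithms are natural:

- [math]\displaystyle{ h(X) = \tfrac{1}{2}\log\bigl(2\pi e\,\sigma_X^2\bigr), \quad h(Y) = \tfrac{1}{2}\log\bigl(2\pi e\,\sigma_Y^2\bigr) }[/math]

- [math]\displaystyle{ h(X,Y) = \tfrac{1}{2}\log\bigl((2\pi e)^2\,\sigma_X^2\sigma_Y^2\,(1-\rho^2)\bigr) }[/math]

- [math]\displaystyle{ \operatorname{I}(X;Y) = h(X)+h(Y)-h(X,Y) = -\tfrac{1}{2}\log\bigl(1-\rho^2\bigr) }[/math]

The result is nonnegative and equals zero exactly when [math]\displaystyle{ \rho = 0 }[/math], consistent with the subadditivity inequality above.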

References

- [1] Theresa M. Korn; Granino Arthur Korn. Mathematical Handbook for Scientists and Engineers: Definitions, Theorems, and Formulas for Reference and Review. New York: Dover Publications. ISBN 0-486-41147-8.

- [2] Thomas M. Cover; Joy A. Thomas. Elements of Information Theory. Hoboken, New Jersey: Wiley. ISBN 0-471-24195-4.

- [3] "InfoTopo: Topological Information Data Analysis. Deep statistical unsupervised and supervised learning - File Exchange - Github". github.com/pierrebaudot/infotopopy/. Retrieved 26 September 2020.