“信息论 Information theory”的版本间的差异

| 第1行: | 第1行: | ||

| − | + | {{distinguish|Information science}} | |

| 第187行: | 第187行: | ||

==Quantities of information== | ==Quantities of information== | ||

| − | + | 信息的度量 | |

{{Main|Quantities of information}} | {{Main|Quantities of information}} | ||

| 第199行: | 第199行: | ||

Information theory is based on [[probability theory]] and statistics. Information theory often concerns itself with measures of information of the distributions associated with random variables. Important quantities of information are entropy, a measure of information in a single random variable, and mutual information, a measure of information in common between two random variables. The former quantity is a property of the probability distribution of a random variable and gives a limit on the rate at which data generated by independent samples with the given distribution can be reliably compressed. The latter is a property of the joint distribution of two random variables, and is the maximum rate of reliable communication across a noisy [[Communication channel|channel]] in the limit of long block lengths, when the channel statistics are determined by the joint distribution. | Information theory is based on [[probability theory]] and statistics. Information theory often concerns itself with measures of information of the distributions associated with random variables. Important quantities of information are entropy, a measure of information in a single random variable, and mutual information, a measure of information in common between two random variables. The former quantity is a property of the probability distribution of a random variable and gives a limit on the rate at which data generated by independent samples with the given distribution can be reliably compressed. The latter is a property of the joint distribution of two random variables, and is the maximum rate of reliable communication across a noisy [[Communication channel|channel]] in the limit of long block lengths, when the channel statistics are determined by the joint distribution. | ||

| − | Information theory is based on probability theory and statistics. Information theory often concerns itself with measures of information of the distributions associated with random variables. Important quantities of information are entropy, a measure of information in a single random variable, and mutual information, a measure of information in common between two random variables. The former quantity is a property of the probability distribution of a random variable and gives a limit on the rate at which data generated by independent samples with the given distribution can be reliably compressed. The latter is a property of the joint distribution of two random variables, and is the maximum rate of reliable communication across a noisy channel in the limit of long block lengths, when the channel statistics are determined by the joint distribution. | + | Information theory is based on probability theory and statistics. Information theory often concerns itself with measures of information of the distributions associated with random variables. Important quantities of information are entropy, a measure of information in a single random variable, and mutual information, a measure of information in common between two random variables. The former quantity is a property of the probability distribution of a random variable and gives a limit on the rate at which data generated by independent samples with the given distribution can be reliably compressed. |

| + | The latter is a property of the joint distribution of two random variables, and is the maximum rate of reliable communication across a noisy channel in the limit of long block lengths, when the channel statistics are determined by the joint distribution. | ||

| − | + | 信息论基于概率论和统计学,其中经常涉及与随机变量相关的分布信息的度量。信息论中重要的信息量有:熵(两个随机变量中信息的度量)和互信息(两个随机变量之间共有的信息的度量)。熵是随机变量的概率分布的一个属性,它限制了由具有给定分布的独立样本生成的数据能够进行可靠压缩的速率。互信息是两个随机变量联合分布的一个属性,是当信道统计量由联合分布确定时,在长块长度的限制下通过噪声信道的可靠通信的最大速率。 | |

| 第211行: | 第212行: | ||

The choice of logarithmic base in the following formulae determines the unit of information entropy that is used. A common unit of information is the bit, based on the binary logarithm. Other units include the nat, which is based on the natural logarithm, and the decimal digit, which is based on the common logarithm. | The choice of logarithmic base in the following formulae determines the unit of information entropy that is used. A common unit of information is the bit, based on the binary logarithm. Other units include the nat, which is based on the natural logarithm, and the decimal digit, which is based on the common logarithm. | ||

| − | + | 在下列公式中,对数底数的选择决定了信息熵的单位。信息的常见单位是比特(基于二进制对数)。其他单位包括nat(自然对数)和十进制数字(常用对数)。 | |

| − | |||

| 第219行: | 第219行: | ||

In what follows, an expression of the form {{math|''p'' log ''p''}} is considered by convention to be equal to zero whenever {{math|1=''p'' = 0}}. This is justified because <math>\lim_{p \rightarrow 0+} p \log p = 0</math> for any logarithmic base. | In what follows, an expression of the form {{math|''p'' log ''p''}} is considered by convention to be equal to zero whenever {{math|1=''p'' = 0}}. This is justified because <math>\lim_{p \rightarrow 0+} p \log p = 0</math> for any logarithmic base. | ||

| − | In what follows, an expression of the form | + | In what follows, an expression of the form is considered by convention to be equal to zero whenever . This is justified because <math>\lim_{p \rightarrow 0+} p \log p = 0</math> for any logarithmic base. |

| − | + | 下文中,按惯例将形式的表达式视为等于零。这是合理的,因为<math>\lim_{p \rightarrow 0+} p \log p = 0</math>适用于任何对数底。 | |

| 第231行: | 第231行: | ||

===Entropy of an information source=== | ===Entropy of an information source=== | ||

| − | + | 信源的熵 | |

Based on the [[probability mass function]] of each source symbol to be communicated, the Shannon [[Entropy (information theory)|entropy]] {{math|''H''}}, in units of bits (per symbol), is given by | Based on the [[probability mass function]] of each source symbol to be communicated, the Shannon [[Entropy (information theory)|entropy]] {{math|''H''}}, in units of bits (per symbol), is given by | ||

Based on the probability mass function of each source symbol to be communicated, the Shannon entropy , in units of bits (per symbol), is given by | Based on the probability mass function of each source symbol to be communicated, the Shannon entropy , in units of bits (per symbol), is given by | ||

| + | <math>H = - \sum_{i} p_i \log_2 (p_i)</math> | ||

| − | + | 基于每个要通信的源符号的概率质量函数,香农熵(以比特为单位)由下式给出: | |

| − | |||

| − | |||

| − | |||

<math>H = - \sum_{i} p_i \log_2 (p_i)</math> | <math>H = - \sum_{i} p_i \log_2 (p_i)</math> | ||

| − | |||

where {{math|''p<sub>i</sub>''}} is the probability of occurrence of the {{math|''i''}}-th possible value of the source symbol. This equation gives the entropy in the units of "bits" (per symbol) because it uses a logarithm of base 2, and this base-2 measure of entropy has sometimes been called the [[Shannon (unit)|shannon]] in his honor. Entropy is also commonly computed using the natural logarithm (base [[E (mathematical constant)|{{mvar|e}}]], where {{mvar|e}} is Euler's number), which produces a measurement of entropy in nats per symbol and sometimes simplifies the analysis by avoiding the need to include extra constants in the formulas. Other bases are also possible, but less commonly used. For example, a logarithm of base {{nowrap|1=2<sup>8</sup> = 256}} will produce a measurement in [[byte]]s per symbol, and a logarithm of base 10 will produce a measurement in decimal digits (or hartleys) per symbol. | where {{math|''p<sub>i</sub>''}} is the probability of occurrence of the {{math|''i''}}-th possible value of the source symbol. This equation gives the entropy in the units of "bits" (per symbol) because it uses a logarithm of base 2, and this base-2 measure of entropy has sometimes been called the [[Shannon (unit)|shannon]] in his honor. Entropy is also commonly computed using the natural logarithm (base [[E (mathematical constant)|{{mvar|e}}]], where {{mvar|e}} is Euler's number), which produces a measurement of entropy in nats per symbol and sometimes simplifies the analysis by avoiding the need to include extra constants in the formulas. Other bases are also possible, but less commonly used. For example, a logarithm of base {{nowrap|1=2<sup>8</sup> = 256}} will produce a measurement in [[byte]]s per symbol, and a logarithm of base 10 will produce a measurement in decimal digits (or hartleys) per symbol. | ||

| 第249行: | 第246行: | ||

where is the probability of occurrence of the -th possible value of the source symbol. This equation gives the entropy in the units of "bits" (per symbol) because it uses a logarithm of base 2, and this base-2 measure of entropy has sometimes been called the shannon in his honor. Entropy is also commonly computed using the natural logarithm (base E (mathematical constant)|, where is Euler's number), which produces a measurement of entropy in nats per symbol and sometimes simplifies the analysis by avoiding the need to include extra constants in the formulas. Other bases are also possible, but less commonly used. For example, a logarithm of base will produce a measurement in bytes per symbol, and a logarithm of base 10 will produce a measurement in decimal digits (or hartleys) per symbol. | where is the probability of occurrence of the -th possible value of the source symbol. This equation gives the entropy in the units of "bits" (per symbol) because it uses a logarithm of base 2, and this base-2 measure of entropy has sometimes been called the shannon in his honor. Entropy is also commonly computed using the natural logarithm (base E (mathematical constant)|, where is Euler's number), which produces a measurement of entropy in nats per symbol and sometimes simplifies the analysis by avoiding the need to include extra constants in the formulas. Other bases are also possible, but less commonly used. For example, a logarithm of base will produce a measurement in bytes per symbol, and a logarithm of base 10 will produce a measurement in decimal digits (or hartleys) per symbol. | ||

| − | + | 其中{{math|''p<sub>i</sub>''}}是源符号的第{{math|''i''}}个可能值出现的概率。该方程式以比特(每个符号)为单位给出熵,因为它使用以2为底的对数,所以这个熵有时被称为香农熵以表纪念。熵的计算也通常使用自然对数(以[[E (mathematical constant)|{{mvar|e}}]]为底数,其中{{mvar|e}}是欧拉数,其他底数也是可行的,但不常用),这样就可以测量每个符号的熵值,有时在公式中可以通过避免额外的常量来简化分析。例如以{{nowrap|1=2<sup>8</sup> = 256}}为底的对数,每个符号将产生以的字节为单位的测量值。以10为底的对数,每个符号将产生以十进制数字(或哈特利)为单位的测量值。 | |

| − | |||

| − | |||

| − | |||

| 第259行: | 第253行: | ||

Intuitively, the entropy of a discrete random variable is a measure of the amount of uncertainty associated with the value of when only its distribution is known. | Intuitively, the entropy of a discrete random variable is a measure of the amount of uncertainty associated with the value of when only its distribution is known. | ||

| − | + | 直观的来看,离散型随机变量{{math|''X''}}的熵{{math|''H<sub>X</sub>''}}是不确定性度量,当只知道其分布时,它的值与{{math|''X''}}的值相关。 | |

| 第269行: | 第263行: | ||

The entropy of a source that emits a sequence of symbols that are independent and identically distributed (iid) is bits (per message of symbols). If the source data symbols are identically distributed but not independent, the entropy of a message of length will be less than . | The entropy of a source that emits a sequence of symbols that are independent and identically distributed (iid) is bits (per message of symbols). If the source data symbols are identically distributed but not independent, the entropy of a message of length will be less than . | ||

| − | + | 发出{{math|''N''}}个[[独立同分布]] (iid)的符号序列的源,其熵为{{math|''N'' ⋅ ''H''}}位(每个信息{{math|''N''}}符号)。如果源数据符号是同分布但不独立的,则长度为{{math|''N''}}的消息的熵将小于{{math|''N'' ⋅ ''H''}}。 | |

| 第279行: | 第273行: | ||

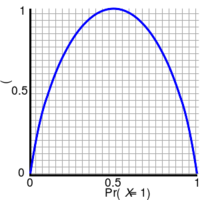

The entropy of a [[Bernoulli trial as a function of success probability, often called the , . The entropy is maximized at 1 bit per trial when the two possible outcomes are equally probable, as in an unbiased coin toss.]] | The entropy of a [[Bernoulli trial as a function of success probability, often called the , . The entropy is maximized at 1 bit per trial when the two possible outcomes are equally probable, as in an unbiased coin toss.]] | ||

| − | + | [[File:Binary entropy plot.svg|thumbnail|right|200px|以[[Bernoulli trial]]的熵作为成功概率的函数,通常称作{{em|[[binary entropy function]]}}, {{math|''H''<sub>b</sub>(''p'')}}。当两个可能的结果发生概率相等时(例如投掷无偏硬币),每次试验的熵最大为1比特。]] | |

| 第285行: | 第279行: | ||

| − | If one transmits 1000 bits (0s and 1s), and the value of each of these bits is known to the receiver (has a specific value with certainty) ahead of transmission, it is clear that no information is transmitted. If, however, each bit is independently equally likely to be 0 or 1, 1000 shannons of information (more often called bits) have been transmitted. Between these two extremes, information can be quantified as follows. If 𝕏 is the set of all messages {{math|{{mset|''x''<sub>1</sub>, ..., ''x''<sub>''n''</sub>}}}} that {{math|''X''}} could be, and {{math|''p''(''x'')}} is the probability of some <math>x \in \mathbb X</math>, then the entropy, {{math|''H''}}, of | + | If one transmits 1000 bits (0s and 1s), and the value of each of these bits is known to the receiver (has a specific value with certainty) ahead of transmission, it is clear that no information is transmitted. If, however, each bit is independently equally likely to be 0 or 1, 1000 shannons of information (more often called bits) have been transmitted. Between these two extremes, information can be quantified as follows. If 𝕏 is the set of all messages {{math|{{mset|''x''<sub>1</sub>, ..., ''x''<sub>''n''</sub>}}}} that {{math|''X''}} could be, and {{math|''p''(''x'')}} is the probability of some <math>x \in \mathbb X</math>, then the entropy, {{math|''H''}}, of is defined:<ref name = Reza>{{cite book | title = An Introduction to Information Theory | author = Fazlollah M. Reza | publisher = Dover Publications, Inc., New York | origyear = 1961| year = 1994 | isbn = 0-486-68210-2 | url = https://books.google.com/books?id=RtzpRAiX6OgC&pg=PA8&dq=intitle:%22An+Introduction+to+Information+Theory%22++%22entropy+of+a+simple+source%22}}</ref> |

| − | If one transmits 1000 bits (0s and 1s), and the value of each of these bits is known to the receiver (has a specific value with certainty) ahead of transmission, it is clear that no information is transmitted. If, however, each bit is independently equally likely to be 0 or 1, 1000 shannons of information (more often called bits) have been transmitted. Between these two extremes, information can be quantified as follows. If 𝕏 is the set of all messages }} that could be, and is the probability of some <math>x \in \mathbb X</math>, then the entropy, , of is defined: | + | If one transmits 1000 bits (0s and 1s), and the value of each of these bits is known to the receiver (has a specific value with certainty) ahead of transmission, it is clear that no information is transmitted. If, however, each bit is independently equally likely to be 0 or 1, 1000 shannons of information (more often called bits) have been transmitted. Between these two extremes, information can be quantified as follows. If 𝕏 is the set of all messages }} that could be, and is the probability of some <math>x \in \mathbb X</math>, then the entropy, , of is defined: |

| + | |||

| + | 如果一个人发送了1000比特(0s和1s),并且接收者在发送之前就已知这些比特中的每一个的值(具有确定性的特定值),显然就不会发送任何信息。但是,如果每个比特独立且等可能的为0或1时,则已经发送了1000香农信息(通常称为:比特)。在这两个极端之间,信息可以按以下方式进行量化。如果𝕏是{{math|''X''}}可能在的所有消息的集合{{math|{{mset|''x''<sub>1</sub>, ..., ''x''<sub>''n''</sub>}}}},且{{math|''p''(''x'')}}是<math>x \in \mathbb X</math>的概率,那么熵、{{math|''H''}}和{{math|''H''}}的定义如下: <ref name = Reza>{{cite book | title = An Introduction to Information Theory | author = Fazlollah M. Reza | publisher = Dover Publications, Inc., New York | origyear = 1961| year = 1994 | isbn = 0-486-68210-2 | url = https://books.google.com/books?id=RtzpRAiX6OgC&pg=PA8&dq=intitle:%22An+Introduction+to+Information+Theory%22++%22entropy+of+a+simple+source%22}}</ref> | ||

| − | |||

| 第299行: | 第294行: | ||

<math> H(X) = \mathbb{E}_{X} [I(x)] = -\sum_{x \in \mathbb{X}} p(x) \log p(x).</math> | <math> H(X) = \mathbb{E}_{X} [I(x)] = -\sum_{x \in \mathbb{X}} p(x) \log p(x).</math> | ||

| − | + | :<math> H(X) = \mathbb{E}_{X} [I(x)] = -\sum_{x \in \mathbb{X}} p(x) \log p(x).</math> | |

| 第305行: | 第300行: | ||

| − | (Here, | + | (Here, is the [[self-information]], which is the entropy contribution of an individual message, and {{math|''I''(''x'')}}{{math|𝔼<sub>''X''</sub>}} is the [[expected value]].) A property of entropy is that it is maximized when all the messages in the message space are equiprobable {{math|1=''p''(''x'') = 1/''n''}}; i.e., most unpredictable, in which case {{math|1=''H''(''X'') = log ''n''}}. |

(Here, is the self-information, which is the entropy contribution of an individual message, and is the expected value.) A property of entropy is that it is maximized when all the messages in the message space are equiprobable ; i.e., most unpredictable, in which case . | (Here, is the self-information, which is the entropy contribution of an individual message, and is the expected value.) A property of entropy is that it is maximized when all the messages in the message space are equiprobable ; i.e., most unpredictable, in which case . | ||

| − | ( | + | (其中:{{math|''I''(''x'')}}是[[自信息]],表示单个信息的熵贡献;{{math|''I''(''x'')}}{{math|𝔼<sub>''X''</sub>}}为{{math|''X''}}的期望。)熵的一个特性是,当消息空间中的所有消息都是等概率{{math|1=''p''(''x'') = 1/''n''}}时熵最大; 也就是说,在{{math|1=''H''(''X'') = log ''n''}}这种情况下,熵是最不可预测的。 |

| 第319行: | 第314行: | ||

The special case of information entropy for a random variable with two outcomes is the binary entropy function, usually taken to the logarithmic base 2, thus having the shannon (Sh) as unit: | The special case of information entropy for a random variable with two outcomes is the binary entropy function, usually taken to the logarithmic base 2, thus having the shannon (Sh) as unit: | ||

| − | + | 对于具有两个结果的随机变量的信息熵,其特殊情况为二进制熵函数(通常用以为底2对数,因此以香农(Sh)为单位): | |

| 第329行: | 第324行: | ||

<math>H_{\mathrm{b}}(p) = - p \log_2 p - (1-p)\log_2 (1-p).</math> | <math>H_{\mathrm{b}}(p) = - p \log_2 p - (1-p)\log_2 (1-p).</math> | ||

| − | (p)-p | + | :<math>H_{\mathrm{b}}(p) = - p \log_2 p - (1-p)\log_2 (1-p).</math> |

| 第345行: | 第340行: | ||

The of two discrete random variables and is merely the entropy of their pairing: . This implies that if and are independent, then their joint entropy is the sum of their individual entropies. | The of two discrete random variables and is merely the entropy of their pairing: . This implies that if and are independent, then their joint entropy is the sum of their individual entropies. | ||

| − | + | 两个离散的随机变量{{math|''X''}}和{{math|''Y''}}的联合熵大致是它们的组合熵: {{math|(''X'', ''Y'')}}。若{{math|''X''}}和{{math|''Y''}}是独立的,那么它们的联合熵就是其各自熵的总和。 | |

| 第355行: | 第350行: | ||

For example, if represents the position of a chess piece — the row and the column, then the joint entropy of the row of the piece and the column of the piece will be the entropy of the position of the piece. | For example, if represents the position of a chess piece — the row and the column, then the joint entropy of the row of the piece and the column of the piece will be the entropy of the position of the piece. | ||

| − | + | 例如:如果{{math|(''X'', ''Y'')}}代表棋子的位置({{math|''X''}} 表示行和{{math|''Y''}}表示列),那么棋子所在位置的熵就是棋子行、列的联合熵。 | |

| 第365行: | 第360行: | ||

<math>H(X, Y) = \mathbb{E}_{X,Y} [-\log p(x,y)] = - \sum_{x, y} p(x, y) \log p(x, y) \,</math> | <math>H(X, Y) = \mathbb{E}_{X,Y} [-\log p(x,y)] = - \sum_{x, y} p(x, y) \log p(x, y) \,</math> | ||

| − | + | :<math>H(X, Y) = \mathbb{E}_{X,Y} [-\log p(x,y)] = - \sum_{x, y} p(x, y) \log p(x, y) \,</math> | |

| 第375行: | 第370行: | ||

Despite similar notation, joint entropy should not be confused with . | Despite similar notation, joint entropy should not be confused with . | ||

| − | + | 尽管符号相似,注意联合熵与交叉熵不能混淆。 | |

| 第385行: | 第380行: | ||

===Conditional entropy (equivocation)=== | ===Conditional entropy (equivocation)=== | ||

| − | + | 条件熵(含糊度) | |

The {{em|[[conditional entropy]]}} or ''conditional uncertainty'' of {{math|''X''}} given random variable {{math|''Y''}} (also called the ''equivocation'' of {{math|''X''}} about {{math|''Y''}}) is the average conditional entropy over {{math|''Y''}}:<ref name=Ash>{{cite book | title = Information Theory | author = Robert B. Ash | publisher = Dover Publications, Inc. | origyear = 1965| year = 1990 | isbn = 0-486-66521-6 | url = https://books.google.com/books?id=ngZhvUfF0UIC&pg=PA16&dq=intitle:information+intitle:theory+inauthor:ash+conditional+uncertainty}}</ref> | The {{em|[[conditional entropy]]}} or ''conditional uncertainty'' of {{math|''X''}} given random variable {{math|''Y''}} (also called the ''equivocation'' of {{math|''X''}} about {{math|''Y''}}) is the average conditional entropy over {{math|''Y''}}:<ref name=Ash>{{cite book | title = Information Theory | author = Robert B. Ash | publisher = Dover Publications, Inc. | origyear = 1965| year = 1990 | isbn = 0-486-66521-6 | url = https://books.google.com/books?id=ngZhvUfF0UIC&pg=PA16&dq=intitle:information+intitle:theory+inauthor:ash+conditional+uncertainty}}</ref> | ||

The or conditional uncertainty of given random variable (also called the equivocation of about ) is the average conditional entropy over : | The or conditional uncertainty of given random variable (also called the equivocation of about ) is the average conditional entropy over : | ||

| + | 在给定随机变量{{math|''Y''}}下{{math|''X''}}的条件熵(或条件不确定性,也可称为{{math|''X''}}关于{{math|''Y''}}的含糊度))是{{math|''Y''}}上的条件平均熵: <ref name=Ash>{{cite book | title = Information Theory | author = Robert B. Ash | publisher = Dover Publications, Inc. | origyear = 1965| year = 1990 | isbn = 0-486-66521-6 | url = https://books.google.com/books?id=ngZhvUfF0UIC&pg=PA16&dq=intitle:information+intitle:theory+inauthor:ash+conditional+uncertainty}}</ref> | ||

| − | |||

| 第401行: | 第396行: | ||

<math> H(X|Y) = \mathbb E_Y [H(X|y)] = -\sum_{y \in Y} p(y) \sum_{x \in X} p(x|y) \log p(x|y) = -\sum_{x,y} p(x,y) \log p(x|y).</math> | <math> H(X|Y) = \mathbb E_Y [H(X|y)] = -\sum_{y \in Y} p(y) \sum_{x \in X} p(x|y) \log p(x|y) = -\sum_{x,y} p(x,y) \log p(x|y).</math> | ||

| − | + | :<math> H(X|Y) = \mathbb E_Y [H(X|y)] = -\sum_{y \in Y} p(y) \sum_{x \in X} p(x|y) \log p(x|y) = -\sum_{x,y} p(x,y) \log p(x|y).</math> | |

| 第411行: | 第406行: | ||

Because entropy can be conditioned on a random variable or on that random variable being a certain value, care should be taken not to confuse these two definitions of conditional entropy, the former of which is in more common use. A basic property of this form of conditional entropy is that: | Because entropy can be conditioned on a random variable or on that random variable being a certain value, care should be taken not to confuse these two definitions of conditional entropy, the former of which is in more common use. A basic property of this form of conditional entropy is that: | ||

| − | + | 由于熵能够以随机变量或该随机变量的某个值为条件,所以应注意不要混淆条件熵的这两个定义(前者更为常用)。该类条件熵的一个基本属性为: | |

| 第421行: | 第416行: | ||

<math> H(X|Y) = H(X,Y) - H(Y) .\,</math> | <math> H(X|Y) = H(X,Y) - H(Y) .\,</math> | ||

| − | + | : <math> H(X|Y) = H(X,Y) - H(Y) .\,</math> | |

| 第431行: | 第426行: | ||

===Mutual information (transinformation)=== | ===Mutual information (transinformation)=== | ||

| − | 互信息( | + | 互信息(转移信息) |

''[[Mutual information]]'' measures the amount of information that can be obtained about one random variable by observing another. It is important in communication where it can be used to maximize the amount of information shared between sent and received signals. The mutual information of {{math|''X''}} relative to {{math|''Y''}} is given by: | ''[[Mutual information]]'' measures the amount of information that can be obtained about one random variable by observing another. It is important in communication where it can be used to maximize the amount of information shared between sent and received signals. The mutual information of {{math|''X''}} relative to {{math|''Y''}} is given by: | ||

| 第437行: | 第432行: | ||

Mutual information measures the amount of information that can be obtained about one random variable by observing another. It is important in communication where it can be used to maximize the amount of information shared between sent and received signals. The mutual information of relative to is given by: | Mutual information measures the amount of information that can be obtained about one random variable by observing another. It is important in communication where it can be used to maximize the amount of information shared between sent and received signals. The mutual information of relative to is given by: | ||

| − | + | 互信息度量的是通过观察另一个随机变量可以获得的信息量。在通信中可以用它来最大化发送和接收信号之间共享的信息量,这一点至关重要。{{math|''X''}}相对于{{math|''X''}}的互信息由以下公式给出: | |

| 第447行: | 第442行: | ||

<math>I(X;Y) = \mathbb{E}_{X,Y} [SI(x,y)] = \sum_{x,y} p(x,y) \log \frac{p(x,y)}{p(x)\, p(y)}</math> | <math>I(X;Y) = \mathbb{E}_{X,Y} [SI(x,y)] = \sum_{x,y} p(x,y) \log \frac{p(x,y)}{p(x)\, p(y)}</math> | ||

| − | + | :<math>I(X;Y) = \mathbb{E}_{X,Y} [SI(x,y)] = \sum_{x,y} p(x,y) \log \frac{p(x,y)}{p(x)\, p(y)}</math> | |

where {{math|SI}} (''S''pecific mutual ''I''nformation) is the [[pointwise mutual information]]. | where {{math|SI}} (''S''pecific mutual ''I''nformation) is the [[pointwise mutual information]]. | ||

| 第453行: | 第448行: | ||

where (Specific mutual Information) is the pointwise mutual information. | where (Specific mutual Information) is the pointwise mutual information. | ||

| − | 其中( | + | 其中{{math|SI}} (Specific mutual Information,特定互信息)是点间的互信息。 |

| 第463行: | 第458行: | ||

A basic property of the mutual information is that | A basic property of the mutual information is that | ||

| − | + | 互信息的一个基本属性为: | |

: <math>I(X;Y) = H(X) - H(X|Y).\,</math> | : <math>I(X;Y) = H(X) - H(X|Y).\,</math> | ||

| 第469行: | 第464行: | ||

<math>I(X;Y) = H(X) - H(X|Y).\,</math> | <math>I(X;Y) = H(X) - H(X|Y).\,</math> | ||

| − | + | : <math>I(X;Y) = H(X) - H(X|Y).\,</math> | |

That is, knowing ''Y'', we can save an average of {{math|''I''(''X''; ''Y'')}} bits in encoding ''X'' compared to not knowing ''Y''. | That is, knowing ''Y'', we can save an average of {{math|''I''(''X''; ''Y'')}} bits in encoding ''X'' compared to not knowing ''Y''. | ||

| 第475行: | 第470行: | ||

That is, knowing Y, we can save an average of bits in encoding X compared to not knowing Y. | That is, knowing Y, we can save an average of bits in encoding X compared to not knowing Y. | ||

| − | + | 也就是说,在编码的过程中知道''Y''比不知道''Y''平均节省{{math|''I''(''X''; ''Y'')}}比特。 | |

| 第491行: | 第486行: | ||

<math>I(X;Y) = I(Y;X) = H(X) + H(Y) - H(X,Y).\,</math> | <math>I(X;Y) = I(Y;X) = H(X) + H(Y) - H(X,Y).\,</math> | ||

| − | + | : <math>I(X;Y) = I(Y;X) = H(X) + H(Y) - H(X,Y).\,</math> | |

| 第501行: | 第496行: | ||

Mutual information can be expressed as the average Kullback–Leibler divergence (information gain) between the posterior probability distribution of X given the value of Y and the prior distribution on X: | Mutual information can be expressed as the average Kullback–Leibler divergence (information gain) between the posterior probability distribution of X given the value of Y and the prior distribution on X: | ||

| − | + | 互信息可以表示为在给定''Y''值和''X''的后验分布的情况下,''X''的后验概率之间的平均 Kullback-Leibler 散度(信息增益) : | |

: <math>I(X;Y) = \mathbb E_{p(y)} [D_{\mathrm{KL}}( p(X|Y=y) \| p(X) )].</math> | : <math>I(X;Y) = \mathbb E_{p(y)} [D_{\mathrm{KL}}( p(X|Y=y) \| p(X) )].</math> | ||

| 第507行: | 第502行: | ||

<math>I(X;Y) = \mathbb E_{p(y)} [D_{\mathrm{KL}}( p(X|Y=y) \| p(X) )].</math> | <math>I(X;Y) = \mathbb E_{p(y)} [D_{\mathrm{KL}}( p(X|Y=y) \| p(X) )].</math> | ||

| − | + | : <math>I(X;Y) = \mathbb E_{p(y)} [D_{\mathrm{KL}}( p(X|Y=y) \| p(X) )].</math> | |

In other words, this is a measure of how much, on the average, the probability distribution on ''X'' will change if we are given the value of ''Y''. This is often recalculated as the divergence from the product of the marginal distributions to the actual joint distribution: | In other words, this is a measure of how much, on the average, the probability distribution on ''X'' will change if we are given the value of ''Y''. This is often recalculated as the divergence from the product of the marginal distributions to the actual joint distribution: | ||

| 第513行: | 第508行: | ||

In other words, this is a measure of how much, on the average, the probability distribution on X will change if we are given the value of Y. This is often recalculated as the divergence from the product of the marginal distributions to the actual joint distribution: | In other words, this is a measure of how much, on the average, the probability distribution on X will change if we are given the value of Y. This is often recalculated as the divergence from the product of the marginal distributions to the actual joint distribution: | ||

| − | + | 换句话说这是一种度量方法:若我们给出''Y''的值,得出''X''上的概率分布将会平均变化多少。这通常用于计算边际分布的乘积与实际联合分布的差异: | |

: <math>I(X; Y) = D_{\mathrm{KL}}(p(X,Y) \| p(X)p(Y)).</math> | : <math>I(X; Y) = D_{\mathrm{KL}}(p(X,Y) \| p(X)p(Y)).</math> | ||

| 第519行: | 第514行: | ||

<math>I(X; Y) = D_{\mathrm{KL}}(p(X,Y) \| p(X)p(Y)).</math> | <math>I(X; Y) = D_{\mathrm{KL}}(p(X,Y) \| p(X)p(Y)).</math> | ||

| − | + | : <math>I(X; Y) = D_{\mathrm{KL}}(p(X,Y) \| p(X)p(Y)).</math> | |

| 第529行: | 第524行: | ||

Mutual information is closely related to the log-likelihood ratio test in the context of contingency tables and the multinomial distribution and to Pearson's χ<sup>2</sup> test: mutual information can be considered a statistic for assessing independence between a pair of variables, and has a well-specified asymptotic distribution. | Mutual information is closely related to the log-likelihood ratio test in the context of contingency tables and the multinomial distribution and to Pearson's χ<sup>2</sup> test: mutual information can be considered a statistic for assessing independence between a pair of variables, and has a well-specified asymptotic distribution. | ||

| − | + | 互信息与列联表中的似然比检验和多项分布以及皮尔森卡方检验密切相关: 互信息可以视为评估一对变量之间独立性的统计量,并且具有明确指定的渐近分布。 | |

| 第539行: | 第534行: | ||

===Kullback–Leibler divergence (information gain)=== | ===Kullback–Leibler divergence (information gain)=== | ||

| − | Kullback-Leibler | + | Kullback-Leibler 散度(信息增益) |

The ''[[Kullback–Leibler divergence]]'' (or ''information divergence'', ''information gain'', or ''relative entropy'') is a way of comparing two distributions: a "true" [[probability distribution]] ''p(X)'', and an arbitrary probability distribution ''q(X)''. If we compress data in a manner that assumes ''q(X)'' is the distribution underlying some data, when, in reality, ''p(X)'' is the correct distribution, the Kullback–Leibler divergence is the number of average additional bits per datum necessary for compression. It is thus defined | The ''[[Kullback–Leibler divergence]]'' (or ''information divergence'', ''information gain'', or ''relative entropy'') is a way of comparing two distributions: a "true" [[probability distribution]] ''p(X)'', and an arbitrary probability distribution ''q(X)''. If we compress data in a manner that assumes ''q(X)'' is the distribution underlying some data, when, in reality, ''p(X)'' is the correct distribution, the Kullback–Leibler divergence is the number of average additional bits per datum necessary for compression. It is thus defined | ||

| 第545行: | 第540行: | ||

The Kullback–Leibler divergence (or information divergence, information gain, or relative entropy) is a way of comparing two distributions: a "true" probability distribution p(X), and an arbitrary probability distribution q(X). If we compress data in a manner that assumes q(X) is the distribution underlying some data, when, in reality, p(X) is the correct distribution, the Kullback–Leibler divergence is the number of average additional bits per datum necessary for compression. It is thus defined | The Kullback–Leibler divergence (or information divergence, information gain, or relative entropy) is a way of comparing two distributions: a "true" probability distribution p(X), and an arbitrary probability distribution q(X). If we compress data in a manner that assumes q(X) is the distribution underlying some data, when, in reality, p(X) is the correct distribution, the Kullback–Leibler divergence is the number of average additional bits per datum necessary for compression. It is thus defined | ||

| − | Kullback-Leibler | + | Kullback-Leibler 散度(或信息散度、相对熵、信息增益)是比较两种分布的方法: “真实的”概率分布''p(X)''和任意概率分布''q(X)''。若假设''q(X)''是基于某种方式压缩的数据的分布,而实际上''p(X)''才是真正分布,那么 Kullback-Leibler 散度是每个数据压缩所需的平均额外比特数。因此定义: |

| 第555行: | 第550行: | ||

<math>D_{\mathrm{KL}}(p(X) \| q(X)) = \sum_{x \in X} -p(x) \log {q(x)} \, - \, \sum_{x \in X} -p(x) \log {p(x)} = \sum_{x \in X} p(x) \log \frac{p(x)}{q(x)}.</math> | <math>D_{\mathrm{KL}}(p(X) \| q(X)) = \sum_{x \in X} -p(x) \log {q(x)} \, - \, \sum_{x \in X} -p(x) \log {p(x)} = \sum_{x \in X} p(x) \log \frac{p(x)}{q(x)}.</math> | ||

| − | + | :<math>D_{\mathrm{KL}}(p(X) \| q(X)) = \sum_{x \in X} -p(x) \log {q(x)} \, - \, \sum_{x \in X} -p(x) \log {p(x)} = \sum_{x \in X} p(x) \log \frac{p(x)}{q(x)}.</math> | |

| 第565行: | 第560行: | ||

Although it is sometimes used as a 'distance metric', KL divergence is not a true metric since it is not symmetric and does not satisfy the triangle inequality (making it a semi-quasimetric). | Although it is sometimes used as a 'distance metric', KL divergence is not a true metric since it is not symmetric and does not satisfy the triangle inequality (making it a semi-quasimetric). | ||

| − | + | 尽管有时会将KL散度用作距离量度但它并不是一个真正的指标,因为它是不对称的,同时也不满足三角不等式(KL散度为一个半准度量)。 | |

| 第575行: | 第570行: | ||

Another interpretation of the KL divergence is the "unnecessary surprise" introduced by a prior from the truth: suppose a number X is about to be drawn randomly from a discrete set with probability distribution p(x). If Alice knows the true distribution p(x), while Bob believes (has a prior) that the distribution is q(x), then Bob will be more surprised than Alice, on average, upon seeing the value of X. The KL divergence is the (objective) expected value of Bob's (subjective) surprisal minus Alice's surprisal, measured in bits if the log is in base 2. In this way, the extent to which Bob's prior is "wrong" can be quantified in terms of how "unnecessarily surprised" it is expected to make him. | Another interpretation of the KL divergence is the "unnecessary surprise" introduced by a prior from the truth: suppose a number X is about to be drawn randomly from a discrete set with probability distribution p(x). If Alice knows the true distribution p(x), while Bob believes (has a prior) that the distribution is q(x), then Bob will be more surprised than Alice, on average, upon seeing the value of X. The KL divergence is the (objective) expected value of Bob's (subjective) surprisal minus Alice's surprisal, measured in bits if the log is in base 2. In this way, the extent to which Bob's prior is "wrong" can be quantified in terms of how "unnecessarily surprised" it is expected to make him. | ||

| − | + | KL散度的另一种解释是先验者从事实中引入的“不必要的惊喜”。假设将从概率分布为“ p(x)”的离散集合中随机抽取数字“ X”,如果Alice知道真实的分布“p(x)”,而Bob认为“q(x)”具有先验概率分布,那么Bob将比Alice更多次看到“X”的值,Bob也将具有更多的信息内容。KL散度就是Bob意外惊喜的期望值减去Alice意外惊喜的期望值(如果对数以2为底,则以比特为单位),这样Bob的先验是“错误的”程度可以根据他变得“不必要的惊讶”的期望来进行量化。 | |

| − | |||

| − | |||

| 第585行: | 第578行: | ||

===Other quantities=== | ===Other quantities=== | ||

| − | + | 其他度量 | |

Other important information theoretic quantities include [[Rényi entropy]] (a generalization of entropy), [[differential entropy]] (a generalization of quantities of information to continuous distributions), and the [[conditional mutual information]]. | Other important information theoretic quantities include [[Rényi entropy]] (a generalization of entropy), [[differential entropy]] (a generalization of quantities of information to continuous distributions), and the [[conditional mutual information]]. | ||

| 第591行: | 第584行: | ||

Other important information theoretic quantities include Rényi entropy (a generalization of entropy), differential entropy (a generalization of quantities of information to continuous distributions), and the conditional mutual information. | Other important information theoretic quantities include Rényi entropy (a generalization of entropy), differential entropy (a generalization of quantities of information to continuous distributions), and the conditional mutual information. | ||

| − | + | 信息论中其他重要的量包括Rényi熵(一种熵的推广),微分熵(信息量推广到连续分布),以及条件互信息。 | |

| 第615行: | 第608行: | ||

A picture showing scratches on the readable surface of a CD-R. Music and data CDs are coded using error correcting codes and thus can still be read even if they have minor scratches using [[error detection and correction.]] | A picture showing scratches on the readable surface of a CD-R. Music and data CDs are coded using error correcting codes and thus can still be read even if they have minor scratches using [[error detection and correction.]] | ||

| − | + | [[File:CDSCRATCHES.jpg|thumb|right|在可读CD的表面上显示划痕的图片。音乐和数据CD使用纠错编码进行编码,因此即使它们有轻微的划痕,也可以通过错误检测和纠正来对CD进行读取。]] | |

| 第625行: | 第618行: | ||

Coding theory is one of the most important and direct applications of information theory. It can be subdivided into source coding theory and channel coding theory. Using a statistical description for data, information theory quantifies the number of bits needed to describe the data, which is the information entropy of the source. | Coding theory is one of the most important and direct applications of information theory. It can be subdivided into source coding theory and channel coding theory. Using a statistical description for data, information theory quantifies the number of bits needed to describe the data, which is the information entropy of the source. | ||

| − | + | 编码理论是信息论最重要、最直接的应用之一,可以细分为信源编码理论和信道编码理论。信息理论使用统计数据描述来量化描述数据所需的比特数,这是源的信息熵。 | |

| − | |||

| 第632行: | 第624行: | ||

* Data compression (source coding): There are two formulations for the compression problem: | * Data compression (source coding): There are two formulations for the compression problem: | ||

| − | + | *数据压缩(源编码):压缩问题有两个相关公式; | |

*[[lossless data compression]]: the data must be reconstructed exactly; | *[[lossless data compression]]: the data must be reconstructed exactly; | ||

| − | + | * [[[无损数据压缩]]:数据必须准确重构; | |

*[[lossy data compression]]: allocates bits needed to reconstruct the data, within a specified fidelity level measured by a distortion function. This subset of information theory is called ''[[rate–distortion theory]]''. | *[[lossy data compression]]: allocates bits needed to reconstruct the data, within a specified fidelity level measured by a distortion function. This subset of information theory is called ''[[rate–distortion theory]]''. | ||

| − | + | * [[有损数据压缩]]:由失真函数测得的在指定保真度级别内分配重构数据所需的比特数。信息理论中的这个子集称为率失真理论。 | |

* Error-correcting codes (channel coding): While data compression removes as much redundancy as possible, an error correcting code adds just the right kind of redundancy (i.e., error correction) needed to transmit the data efficiently and faithfully across a noisy channel. | * Error-correcting codes (channel coding): While data compression removes as much redundancy as possible, an error correcting code adds just the right kind of redundancy (i.e., error correction) needed to transmit the data efficiently and faithfully across a noisy channel. | ||

| − | + | *纠错码(信道编码):数据压缩会尽可能多的消除冗余,而纠错码会添加所需的冗余(即纠错),以便在嘈杂的信道上有效且保真地传输数据。 | |

| 第655行: | 第647行: | ||

This division of coding theory into compression and transmission is justified by the information transmission theorems, or source–channel separation theorems that justify the use of bits as the universal currency for information in many contexts. However, these theorems only hold in the situation where one transmitting user wishes to communicate to one receiving user. In scenarios with more than one transmitter (the multiple-access channel), more than one receiver (the broadcast channel) or intermediary "helpers" (the relay channel), or more general networks, compression followed by transmission may no longer be optimal. Network information theory refers to these multi-agent communication models. | This division of coding theory into compression and transmission is justified by the information transmission theorems, or source–channel separation theorems that justify the use of bits as the universal currency for information in many contexts. However, these theorems only hold in the situation where one transmitting user wishes to communicate to one receiving user. In scenarios with more than one transmitter (the multiple-access channel), more than one receiver (the broadcast channel) or intermediary "helpers" (the relay channel), or more general networks, compression followed by transmission may no longer be optimal. Network information theory refers to these multi-agent communication models. | ||

| − | + | 信息传输定理或源-信道分离定理证明编码理论划分为压缩和传输是正确的,这些定理证明了在许多情况下使用比特作为信息的‘’通用货币‘’是合理的,但这只在发送用户与特定接收用户建立通信的情况下才成立。在具有多个发送器(多路访问信道),多个接收器(广播信道)或中转器(中继信道)或多个计算机网络的情况下,压缩后再进行传输可能就不再是最佳选择。[[网络信息论]]是指这些多主体通信模型。 | |

| − | |||

| − | |||

| 第665行: | 第655行: | ||

===Source theory=== | ===Source theory=== | ||

| − | + | 源理论 | |

Any process that generates successive messages can be considered a {{em|[[Communication source|source]]}} of information. A memoryless source is one in which each message is an [[Independent identically distributed random variables|independent identically distributed random variable]], whereas the properties of [[ergodic theory|ergodicity]] and [[stationary process|stationarity]] impose less restrictive constraints. All such sources are [[stochastic process|stochastic]]. These terms are well studied in their own right outside information theory. | Any process that generates successive messages can be considered a {{em|[[Communication source|source]]}} of information. A memoryless source is one in which each message is an [[Independent identically distributed random variables|independent identically distributed random variable]], whereas the properties of [[ergodic theory|ergodicity]] and [[stationary process|stationarity]] impose less restrictive constraints. All such sources are [[stochastic process|stochastic]]. These terms are well studied in their own right outside information theory. | ||

| − | Any process that generates successive messages can be considered a | + | Any process that generates successive messages can be considered a of information. A memoryless source is one in which each message is an independent identically distributed random variable, whereas the properties of ergodicity and stationarity impose less restrictive constraints. All such sources are stochastic. These terms are well studied in their own right outside information theory. |

| − | |||

| − | |||

| − | |||

| + | 生成连续消息的任何过程都可以视为信息的通讯来源。无记忆信源是指每个消息都是独立同分布的随机变量,而遍历理论和平稳过程的性质对信源施加的限制较少。所有这些源都满足随机过程。在信息论领域外,这些术语已经有很全面的相关研究。 | ||

| 第681行: | 第669行: | ||

====Rate====<!-- This section is linked from Channel capacity --> | ====Rate====<!-- This section is linked from Channel capacity --> | ||

| − | 速率 | + | ====速率==== |

| − | |||

Information ''[[Entropy rate|rate]]'' is the average entropy per symbol. For memoryless sources, this is merely the entropy of each symbol, while, in the case of a stationary stochastic process, it is | Information ''[[Entropy rate|rate]]'' is the average entropy per symbol. For memoryless sources, this is merely the entropy of each symbol, while, in the case of a stationary stochastic process, it is | ||

Information rate is the average entropy per symbol. For memoryless sources, this is merely the entropy of each symbol, while, in the case of a stationary stochastic process, it is | Information rate is the average entropy per symbol. For memoryless sources, this is merely the entropy of each symbol, while, in the case of a stationary stochastic process, it is | ||

| − | + | 信息的熵率是每个符号的平均熵。对于无记忆信源,信息的熵率仅表示每个符号的熵,而在平稳随机过程中,它是: | |

| 第697行: | 第684行: | ||

<math>r = \lim_{n \to \infty} H(X_n|X_{n-1},X_{n-2},X_{n-3}, \ldots);</math> | <math>r = \lim_{n \to \infty} H(X_n|X_{n-1},X_{n-2},X_{n-3}, \ldots);</math> | ||

| − | ( | + | :<math>r = \lim_{n \to \infty} H(X_n|X_{n-1},X_{n-2},X_{n-3}, \ldots);</math> |

| 第707行: | 第694行: | ||

that is, the conditional entropy of a symbol given all the previous symbols generated. For the more general case of a process that is not necessarily stationary, the average rate is | that is, the conditional entropy of a symbol given all the previous symbols generated. For the more general case of a process that is not necessarily stationary, the average rate is | ||

| − | + | 也就是说,一个符号的条件熵给出了所有之前生成的符号。对于不一定平稳的过程的更一般情况,平均速率为: | |

| 第717行: | 第704行: | ||

<math>r = \lim_{n \to \infty} \frac{1}{n} H(X_1, X_2, \dots X_n);</math> | <math>r = \lim_{n \to \infty} \frac{1}{n} H(X_1, X_2, \dots X_n);</math> | ||

| − | ( | + | :<math>r = \lim_{n \to \infty} \frac{1}{n} H(X_1, X_2, \dots X_n);</math> |

| 第727行: | 第714行: | ||

that is, the limit of the joint entropy per symbol. For stationary sources, these two expressions give the same result. | that is, the limit of the joint entropy per symbol. For stationary sources, these two expressions give the same result. | ||

| − | + | 也就是每个符号的联合熵的极限。对于固定源,这两个表达式得出的结果相同。<ref>{{cite book | title = Digital Compression for Multimedia: Principles and Standards | author = Jerry D. Gibson | publisher = Morgan Kaufmann | year = 1998 | url = https://books.google.com/books?id=aqQ2Ry6spu0C&pg=PA56&dq=entropy-rate+conditional#PPA57,M1 | isbn = 1-55860-369-7 }}</ref> | |

| + | |||

| 第737行: | 第725行: | ||

It is common in information theory to speak of the "rate" or "entropy" of a language. This is appropriate, for example, when the source of information is English prose. The rate of a source of information is related to its redundancy and how well it can be compressed, the subject of . | It is common in information theory to speak of the "rate" or "entropy" of a language. This is appropriate, for example, when the source of information is English prose. The rate of a source of information is related to its redundancy and how well it can be compressed, the subject of . | ||

| − | + | 在信息论中谈论一种语言的“速率”或“熵”是很常见的,比如当信源是英文散文时就很合适。信息源的速率与其冗余度以及可被压缩程度有关。 | |

| 第761行: | 第749行: | ||

Communications over a channel—such as an ethernet cable—is the primary motivation of information theory. However, such channels often fail to produce exact reconstruction of a signal; noise, periods of silence, and other forms of signal corruption often degrade quality. | Communications over a channel—such as an ethernet cable—is the primary motivation of information theory. However, such channels often fail to produce exact reconstruction of a signal; noise, periods of silence, and other forms of signal corruption often degrade quality. | ||

| − | 通过信道( | + | 通过信道(例如:英特网电缆)进行通信是信息论的主要动机。然而,这样的信道往往不能产生信号的精确重建; 在静默时段内的噪声和其他形式的信号损坏往往会使得信息质量的降低。 |

| 第771行: | 第759行: | ||

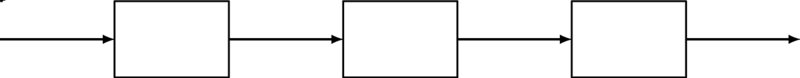

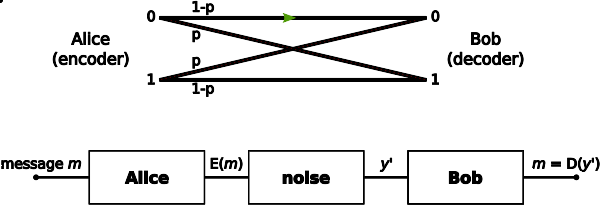

Consider the communications process over a discrete channel. A simple model of the process is shown below: | Consider the communications process over a discrete channel. A simple model of the process is shown below: | ||

| − | + | 考虑离散信道上的通信过程。该过程的简单模型如下: | |

| 第781行: | 第769行: | ||

Channel model | Channel model | ||

| − | + | [[File:Channel model.svg|center|800px|信道模型] | |

| 第791行: | 第779行: | ||

Here X represents the space of messages transmitted, and Y the space of messages received during a unit time over our channel. Let x)}} be the conditional probability distribution function of Y given X. We will consider x)}} to be an inherent fixed property of our communications channel (representing the nature of the noise of our channel). Then the joint distribution of X and Y is completely determined by our channel and by our choice of , the marginal distribution of messages we choose to send over the channel. Under these constraints, we would like to maximize the rate of information, or the signal, we can communicate over the channel. The appropriate measure for this is the mutual information, and this maximum mutual information is called the and is given by: | Here X represents the space of messages transmitted, and Y the space of messages received during a unit time over our channel. Let x)}} be the conditional probability distribution function of Y given X. We will consider x)}} to be an inherent fixed property of our communications channel (representing the nature of the noise of our channel). Then the joint distribution of X and Y is completely determined by our channel and by our choice of , the marginal distribution of messages we choose to send over the channel. Under these constraints, we would like to maximize the rate of information, or the signal, we can communicate over the channel. The appropriate measure for this is the mutual information, and this maximum mutual information is called the and is given by: | ||

| − | 这里 x | + | 这里''X''表示单位时间内通过信道发送的信息空间,''Y''表示单位时间内通过信道接收的信息空间。设{{math|''p''(''y''{{pipe}}''x'')}}是给定''X''的''Y''的条件概率分布函数。将{{math|''p''(''y''{{pipe}}''x'')}}视为通信信道的固定属性(表示信道噪声的性质)。那么''X''和''Y''的联合分布完全取决于所选用的信道和{{math|''f''(''x'')}},以及通过信道发送的信息的边缘分布。在这些约束条件下,我们希望最大化信息速率或信号速率,可以通过信道进行通信。对此的适当度量为互信息,信道容量即为最大互信息,且由下式给出: |

| + | |||

:<math> C = \max_{f} I(X;Y).\! </math> | :<math> C = \max_{f} I(X;Y).\! </math> | ||

| 第797行: | 第786行: | ||

<math> C = \max_{f} I(X;Y).\! </math> | <math> C = \max_{f} I(X;Y).\! </math> | ||

| − | + | :<math> C = \max_{f} I(X;Y).\! </math> | |

This capacity has the following property related to communicating at information rate ''R'' (where ''R'' is usually bits per symbol). For any information rate ''R < C'' and coding error ε > 0, for large enough ''N'', there exists a code of length ''N'' and rate ≥ R and a decoding algorithm, such that the maximal probability of block error is ≤ ε; that is, it is always possible to transmit with arbitrarily small block error. In addition, for any rate ''R > C'', it is impossible to transmit with arbitrarily small block error. | This capacity has the following property related to communicating at information rate ''R'' (where ''R'' is usually bits per symbol). For any information rate ''R < C'' and coding error ε > 0, for large enough ''N'', there exists a code of length ''N'' and rate ≥ R and a decoding algorithm, such that the maximal probability of block error is ≤ ε; that is, it is always possible to transmit with arbitrarily small block error. In addition, for any rate ''R > C'', it is impossible to transmit with arbitrarily small block error. | ||

| 第803行: | 第792行: | ||

This capacity has the following property related to communicating at information rate R (where R is usually bits per symbol). For any information rate R < C and coding error ε > 0, for large enough N, there exists a code of length N and rate ≥ R and a decoding algorithm, such that the maximal probability of block error is ≤ ε; that is, it is always possible to transmit with arbitrarily small block error. In addition, for any rate R > C, it is impossible to transmit with arbitrarily small block error. | This capacity has the following property related to communicating at information rate R (where R is usually bits per symbol). For any information rate R < C and coding error ε > 0, for large enough N, there exists a code of length N and rate ≥ R and a decoding algorithm, such that the maximal probability of block error is ≤ ε; that is, it is always possible to transmit with arbitrarily small block error. In addition, for any rate R > C, it is impossible to transmit with arbitrarily small block error. | ||

| − | + | 信道容量具有以下与以信息速率“R”进行通信有关的属性(其中“R”通常为每个符号的比特数)。对于任意信息速率''R < C''和编码错误ε > 0,对于足够大的''N'',存在长度为''N''和速率大于等于R的代码以及解码算法使得块错误的最大概率小于等于ε;即总是可以在任意小的块错误下进行传输。此外对于任何速率的“ R> C”,不可能以很小的块错误进行发送。 | |

| − | |||

| 第813行: | 第801行: | ||

Channel coding is concerned with finding such nearly optimal codes that can be used to transmit data over a noisy channel with a small coding error at a rate near the channel capacity. | Channel coding is concerned with finding such nearly optimal codes that can be used to transmit data over a noisy channel with a small coding error at a rate near the channel capacity. | ||

| − | + | 信道编码就寻找一种接近最优的编码,它可以用于在噪声信道上以接近信道容量的速率传输数据,且编码错误很小。 | |

| 第823行: | 第811行: | ||

====Capacity of particular channel models==== | ====Capacity of particular channel models==== | ||

| − | + | 特定信道容量模型 | |

* A continuous-time analog communications channel subject to [[Gaussian noise]] — see [[Shannon–Hartley theorem]]. | * A continuous-time analog communications channel subject to [[Gaussian noise]] — see [[Shannon–Hartley theorem]]. | ||

| − | + | *连续时间内受高斯噪声(Gaussian noise)限制的模拟通信信道(详细内容请参见[[Shannon–Hartley定理]])。 | |

* A [[binary symmetric channel]] (BSC) with crossover probability ''p'' is a binary input, binary output channel that flips the input bit with probability ''p''. The BSC has a capacity of {{math|1 − ''H''<sub>b</sub>(''p'')}} bits per channel use, where {{math|''H''<sub>b</sub>}} is the binary entropy function to the base-2 logarithm: | * A [[binary symmetric channel]] (BSC) with crossover probability ''p'' is a binary input, binary output channel that flips the input bit with probability ''p''. The BSC has a capacity of {{math|1 − ''H''<sub>b</sub>(''p'')}} bits per channel use, where {{math|''H''<sub>b</sub>}} is the binary entropy function to the base-2 logarithm: | ||

| − | + | *二进制对称通道([[binary symmetric channel]],BSC)是交叉概率为''p''的二进制输入、二进制输出(以概率''p''翻转输入位)通道。每个通道使用的BSC容量为{{math|1 − ''H''<sub>b</sub>(''p'')}}比特,其中{{math|''H''<sub>b</sub>}}是以2为底的对数的二进制熵函数: | |

| 第841行: | 第829行: | ||

File:Binary symmetric channel.svg | File:Binary symmetric channel.svg | ||

| − | + | ::[[File:Binary symmetric channel.svg]] | |

| 第848行: | 第836行: | ||

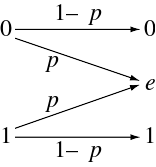

* A [[binary erasure channel]] (BEC) with erasure probability ''p'' is a binary input, ternary output channel. The possible channel outputs are 0, 1, and a third symbol 'e' called an erasure. The erasure represents complete loss of information about an input bit. The capacity of the BEC is {{nowrap|1 − ''p''}} bits per channel use. | * A [[binary erasure channel]] (BEC) with erasure probability ''p'' is a binary input, ternary output channel. The possible channel outputs are 0, 1, and a third symbol 'e' called an erasure. The erasure represents complete loss of information about an input bit. The capacity of the BEC is {{nowrap|1 − ''p''}} bits per channel use. | ||

| − | + | *二进制擦除通道([[binary erasure channel]],BEC)是擦除概率为“ p”的二进制输入、三进制输出通道。可能的通道输出为0、1和擦除符号'e'。擦除表示信息输入位的完全丢失。每个通道使用的BEC容量为{{nowrap|1 − ''p''}}比特。 | |

| 第859行: | 第847行: | ||

File:Binary erasure channel.svg | File:Binary erasure channel.svg | ||

| − | + | ::[[File:Binary erasure channel.svg]] | |

| 第879行: | 第867行: | ||

===Intelligence uses and secrecy applications=== | ===Intelligence uses and secrecy applications=== | ||

| − | + | 情报使用和安保中的应用 | |

Information theoretic concepts apply to cryptography and cryptanalysis. Turing's information unit, the [[Ban (unit)|ban]], was used in the [[Ultra]] project, breaking the German [[Enigma machine]] code and hastening the [[Victory in Europe Day|end of World War II in Europe]]. Shannon himself defined an important concept now called the [[unicity distance]]. Based on the redundancy of the [[plaintext]], it attempts to give a minimum amount of [[ciphertext]] necessary to ensure unique decipherability. | Information theoretic concepts apply to cryptography and cryptanalysis. Turing's information unit, the [[Ban (unit)|ban]], was used in the [[Ultra]] project, breaking the German [[Enigma machine]] code and hastening the [[Victory in Europe Day|end of World War II in Europe]]. Shannon himself defined an important concept now called the [[unicity distance]]. Based on the redundancy of the [[plaintext]], it attempts to give a minimum amount of [[ciphertext]] necessary to ensure unique decipherability. | ||

| 第885行: | 第873行: | ||

Information theoretic concepts apply to cryptography and cryptanalysis. Turing's information unit, the ban, was used in the Ultra project, breaking the German Enigma machine code and hastening the end of World War II in Europe. Shannon himself defined an important concept now called the unicity distance. Based on the redundancy of the plaintext, it attempts to give a minimum amount of ciphertext necessary to ensure unique decipherability. | Information theoretic concepts apply to cryptography and cryptanalysis. Turing's information unit, the ban, was used in the Ultra project, breaking the German Enigma machine code and hastening the end of World War II in Europe. Shannon himself defined an important concept now called the unicity distance. Based on the redundancy of the plaintext, it attempts to give a minimum amount of ciphertext necessary to ensure unique decipherability. | ||

| − | + | 信息论概念应用于密码学和密码分析。在[[Ultra]]的项目中使用了Turing的信息单元[[Ban(unit)| ban]],破解了德国的恩尼格玛密码,加速了二战在欧洲的结束。香农定义了一个重要的概念,现在称为单一性距离([[unicity distance]]),基于明文的冗余性尝试给出具有唯一可解密性所需的最少量的密文。 | |

| − | |||

| 第895行: | 第882行: | ||

Information theory leads us to believe it is much more difficult to keep secrets than it might first appear. A brute force attack can break systems based on asymmetric key algorithms or on most commonly used methods of symmetric key algorithms (sometimes called secret key algorithms), such as block ciphers. The security of all such methods currently comes from the assumption that no known attack can break them in a practical amount of time. | Information theory leads us to believe it is much more difficult to keep secrets than it might first appear. A brute force attack can break systems based on asymmetric key algorithms or on most commonly used methods of symmetric key algorithms (sometimes called secret key algorithms), such as block ciphers. The security of all such methods currently comes from the assumption that no known attack can break them in a practical amount of time. | ||

| − | + | 信息论使我们觉得保守秘密比最初看起来要困难得多。穷举法可以基于非对称密钥算法或最常用的对称密钥算法(也称为秘密密钥算法),如分组密码破坏系统。当前,所有这些方法的安全性都来自一下假设:在已知的时间内没有已知的攻击可以破坏它们。 | |

| − | |||

| 第905行: | 第891行: | ||

Information theoretic security refers to methods such as the one-time pad that are not vulnerable to such brute force attacks. In such cases, the positive conditional mutual information between the plaintext and ciphertext (conditioned on the key) can ensure proper transmission, while the unconditional mutual information between the plaintext and ciphertext remains zero, resulting in absolutely secure communications. In other words, an eavesdropper would not be able to improve his or her guess of the plaintext by gaining knowledge of the ciphertext but not of the key. However, as in any other cryptographic system, care must be used to correctly apply even information-theoretically secure methods; the Venona project was able to crack the one-time pads of the Soviet Union due to their improper reuse of key material. | Information theoretic security refers to methods such as the one-time pad that are not vulnerable to such brute force attacks. In such cases, the positive conditional mutual information between the plaintext and ciphertext (conditioned on the key) can ensure proper transmission, while the unconditional mutual information between the plaintext and ciphertext remains zero, resulting in absolutely secure communications. In other words, an eavesdropper would not be able to improve his or her guess of the plaintext by gaining knowledge of the ciphertext but not of the key. However, as in any other cryptographic system, care must be used to correctly apply even information-theoretically secure methods; the Venona project was able to crack the one-time pads of the Soviet Union due to their improper reuse of key material. | ||

| − | + | 信息理论安全性指的是诸如一次性密钥之类的不易受到这种暴力攻击的方法。在这种情况下,可以确保明文和密文(以密钥为条件)之间的正条件互信息正确的传输,而明文和密文之间的无条件互信息仍为零,从而保证绝对安全的通信。换句话说,窃听者将无法通过获取密文而不是密钥的知识来改善其对纯文本的猜测。但是,就像在其他任何密码系统中一样,必须小心正确的使用信息论中安全的方法; 之所以Venona 项目能够破解苏联的一次性密钥,是因为苏联不当地重复使用关键材料。 | |

| − | |||

| 第915行: | 第900行: | ||

===Pseudorandom number generation=== | ===Pseudorandom number generation=== | ||

| − | + | 伪随机数的生成 | |

[[Pseudorandom number generator]]s are widely available in computer language libraries and application programs. They are, almost universally, unsuited to cryptographic use as they do not evade the deterministic nature of modern computer equipment and software. A class of improved random number generators is termed [[cryptographically secure pseudorandom number generator]]s, but even they require [[random seed]]s external to the software to work as intended. These can be obtained via [[Extractor (mathematics)|extractors]], if done carefully. The measure of sufficient randomness in extractors is [[min-entropy]], a value related to Shannon entropy through [[Rényi entropy]]; Rényi entropy is also used in evaluating randomness in cryptographic systems. Although related, the distinctions among these measures mean that a random variable with high Shannon entropy is not necessarily satisfactory for use in an extractor and so for cryptography uses. | [[Pseudorandom number generator]]s are widely available in computer language libraries and application programs. They are, almost universally, unsuited to cryptographic use as they do not evade the deterministic nature of modern computer equipment and software. A class of improved random number generators is termed [[cryptographically secure pseudorandom number generator]]s, but even they require [[random seed]]s external to the software to work as intended. These can be obtained via [[Extractor (mathematics)|extractors]], if done carefully. The measure of sufficient randomness in extractors is [[min-entropy]], a value related to Shannon entropy through [[Rényi entropy]]; Rényi entropy is also used in evaluating randomness in cryptographic systems. Although related, the distinctions among these measures mean that a random variable with high Shannon entropy is not necessarily satisfactory for use in an extractor and so for cryptography uses. | ||

| 第921行: | 第906行: | ||

Pseudorandom number generators are widely available in computer language libraries and application programs. They are, almost universally, unsuited to cryptographic use as they do not evade the deterministic nature of modern computer equipment and software. A class of improved random number generators is termed cryptographically secure pseudorandom number generators, but even they require random seeds external to the software to work as intended. These can be obtained via extractors, if done carefully. The measure of sufficient randomness in extractors is min-entropy, a value related to Shannon entropy through Rényi entropy; Rényi entropy is also used in evaluating randomness in cryptographic systems. Although related, the distinctions among these measures mean that a random variable with high Shannon entropy is not necessarily satisfactory for use in an extractor and so for cryptography uses. | Pseudorandom number generators are widely available in computer language libraries and application programs. They are, almost universally, unsuited to cryptographic use as they do not evade the deterministic nature of modern computer equipment and software. A class of improved random number generators is termed cryptographically secure pseudorandom number generators, but even they require random seeds external to the software to work as intended. These can be obtained via extractors, if done carefully. The measure of sufficient randomness in extractors is min-entropy, a value related to Shannon entropy through Rényi entropy; Rényi entropy is also used in evaluating randomness in cryptographic systems. Although related, the distinctions among these measures mean that a random variable with high Shannon entropy is not necessarily satisfactory for use in an extractor and so for cryptography uses. | ||

| − | + | 伪随机数生成器在计算机语言库和应用程序中广泛应用。由于它们没有规避现代计算机设备和软件的确定性,因此普遍不适合密码使用。一类改进的随机数生成器称为加密安全的伪随机数生成器,但也需要软件外部的随机种子才能正常工作。如果更进一步开发可以通过提取器来获得。提取器中充分随机性的度量是最小熵,该值与通过[[Rényi熵]]的Shannon熵有关;Rényi熵还用于评估密码系统中的随机性。虽然相关,具有较高Shannon熵的随机变量不一定适合在提取器中使用,因此在密码学用途中并不令人满意。 | |

| − | |||

| − | |||

| 第937行: | 第920行: | ||

One early commercial application of information theory was in the field of seismic oil exploration. Work in this field made it possible to strip off and separate the unwanted noise from the desired seismic signal. Information theory and digital signal processing offer a major improvement of resolution and image clarity over previous analog methods. | One early commercial application of information theory was in the field of seismic oil exploration. Work in this field made it possible to strip off and separate the unwanted noise from the desired seismic signal. Information theory and digital signal processing offer a major improvement of resolution and image clarity over previous analog methods. | ||

| − | + | 信息论的一个早期商业应用是在地震石油勘探领域。在该领域的应用可以从期望的地震信号中剔除和分离不需要的噪声。与以前的模拟方法相比,信息论和数字信号处理大大提高了图像的分辨率和清晰度。<ref>{{cite journal|doi=10.1002/smj.4250020202 | volume=2 | issue=2 | title=The corporation and innovation | year=1981 | journal=Strategic Management Journal | pages=97–118 | last1 = Haggerty | first1 = Patrick E.}}</ref> | |

| − | |||

| 第953行: | 第935行: | ||

Semioticians Doede Nauta and Winfried Nöth both considered Charles Sanders Peirce as having created a theory of information in his works on semiotics. Nauta defined semiotic information theory as the study of "the internal processes of coding, filtering, and information processing." | Semioticians Doede Nauta and Winfried Nöth both considered Charles Sanders Peirce as having created a theory of information in his works on semiotics. Nauta defined semiotic information theory as the study of "the internal processes of coding, filtering, and information processing." | ||

| − | 符号学家 Doede Nauta 和 Winfried | + | 符号学家[[:nl:Doede Nauta|Doede Nauta]]和[[Winfried Nöth]]都认为[[Charles Sanders Peirce]]在他的符号学著作中创造了信息论。<ref name="Nauta 1972">{{cite book |ref=harv |last1=Nauta |first1=Doede |title=The Meaning of Information |date=1972 |publisher=Mouton |location=The Hague |isbn=9789027919960}}</ref>{{rp|171}}<ref name="Nöth 2012">{{cite journal |ref=harv |last1=Nöth |first1=Winfried |title=Charles S. Peirce's theory of information: a theory of the growth of symbols and of knowledge |journal=Cybernetics and Human Knowing |date=January 2012 |volume=19 |issue=1–2 |pages=137–161 |url=https://edisciplinas.usp.br/mod/resource/view.php?id=2311849}}</ref>{{rp|137}} Nauta将符号信息论定义为研究编码、过滤和信息处理的内部过程。<ref name="Nauta 1972"/>{{rp|91}} |

| + | |||

| 第965行: | 第948行: | ||

来自信息论的概念,如冗余和代码控制,已经被符号学家如 Umberto Eco 和 Ferruccio Rossi-Landi 用来解释意识形态作为一种信息传递的形式,主导社会阶层通过使用高度冗余的标志来发出信息,这样在一系列相互竞争的标志中只有一个信息被解码。 | 来自信息论的概念,如冗余和代码控制,已经被符号学家如 Umberto Eco 和 Ferruccio Rossi-Landi 用来解释意识形态作为一种信息传递的形式,主导社会阶层通过使用高度冗余的标志来发出信息,这样在一系列相互竞争的标志中只有一个信息被解码。 | ||

| − | + | 信息论的概念(例如冗余和代码控制)已被符号学家如Umberto Eco和Ferruccio Rossi-Landi用来解释意识形态,将其作为消息传输的一种形式,占统治地位的社会阶层通过使用具有高度冗余性的标志来发出其信息,从而在一系列相互竞争的标志中只有一个信息被解码。 | |

| 第973行: | 第956行: | ||

===Miscellaneous applications=== | ===Miscellaneous applications=== | ||

| − | + | 其他杂项中的应用 | |

| − | |||

Information theory also has applications in [[Gambling and information theory]], [[black hole information paradox|black holes]], and [[bioinformatics]]. | Information theory also has applications in [[Gambling and information theory]], [[black hole information paradox|black holes]], and [[bioinformatics]]. | ||

Information theory also has applications in Gambling and information theory, black holes, and bioinformatics. | Information theory also has applications in Gambling and information theory, black holes, and bioinformatics. | ||

| − | + | 信息论在赌博和信息论、黑洞信息悖论和生物信息学中也有应用。 | |

| 第989行: | 第971行: | ||

==See also== | ==See also== | ||

| − | + | 另请参阅 | |

{{Portal|Mathematics}} | {{Portal|Mathematics}} | ||

| 第1,541行: | 第1,523行: | ||

===MOOC on information theory=== | ===MOOC on information theory=== | ||

| − | + | 信息论大型开放式课程 | |

* Raymond W. Yeung, "[http://www.inc.cuhk.edu.hk/InformationTheory/index.html Information Theory]" ([[The Chinese University of Hong Kong]]) | * Raymond W. Yeung, "[http://www.inc.cuhk.edu.hk/InformationTheory/index.html Information Theory]" ([[The Chinese University of Hong Kong]]) | ||

2020年9月1日 (二) 15:27的版本

Information theory studies the quantification, storage, and communication of information. It was originally proposed by Claude Shannon in 1948 to find fundamental limits on signal processing and communication operations such as data compression, in a landmark paper titled "A Mathematical Theory of Communication". Its impact has been crucial to the success of the Voyager missions to deep space, the invention of the compact disc, the feasibility of mobile phones, the development of the Internet, the study of linguistics and of human perception, the understanding of black holes, and numerous other fields.

Information theory studies the quantification, storage, and communication of information. It was originally proposed by Claude Shannon in 1948 to find fundamental limits on signal processing and communication operations such as data compression, in a landmark paper titled "A Mathematical Theory of Communication". Its impact has been crucial to the success of the Voyager missions to deep space, the invention of the compact disc, the feasibility of mobile phones, the development of the Internet, the study of linguistics and of human perception, the understanding of black holes, and numerous other fields.

信息论研究的是信息的量化、存储与传播。信息论最初是由Claude Shannon在1948年的一篇题为"A Mathematical Theory of Communication"的论文中提出的,其目的是找到信号处理和通信操作(如数据压缩)的基本限制。信息论对于旅行者号深空探测任务的成功、光盘的发明、移动电话的可行性、互联网的发展、语言学和人类感知的研究、对黑洞的理解以及许多其他领域的研究都是至关重要的。

The field is at the intersection of mathematics, statistics, computer science, physics, neurobiology, information engineering, and electrical engineering. The theory has also found applications in other areas, including statistical inference, natural language processing, cryptography, neurobiology,[1] human vision,[2] the evolution[3] and function[4] of molecular codes (bioinformatics), model selection in statistics,[5] thermal physics,[6] quantum computing, linguistics, plagiarism detection,[7] pattern recognition, and anomaly detection.引用错误:没有找到与</ref>对应的<ref>标签 Important sub-fields of information theory include source coding, algorithmic complexity theory, algorithmic information theory, information-theoretic security, Grey system theory and measures of information.

</ref> Important sub-fields of information theory include source coding, algorithmic complexity theory, algorithmic information theory, information-theoretic security, Grey system theory and measures of information.

信息论的重要分支包括信源编码、算法复杂性理论、算法信息论、资讯理论安全性、灰色系统理论和信息度量。

Applications of fundamental topics of information theory include lossless data compression (e.g. ZIP files), lossy data compression (e.g. MP3s and JPEGs), and channel coding (e.g. for DSL). Information theory is used in information retrieval, intelligence gathering, gambling, and even in musical composition.

Applications of fundamental topics of information theory include lossless data compression (e.g. ZIP files), lossy data compression (e.g. MP3s and JPEGs), and channel coding (e.g. for DSL). Information theory is used in information retrieval, intelligence gathering, gambling, and even in musical composition.

信息论基本主题的应用包括无损数据压缩(例如:ZIP压缩文件)、有损数据压缩(例如:Mp3和jpeg格式) ,以及频道编码(例如:DSL)。信息论应用于信息检索、情报收集、赌博,甚至在音乐创作中也有应用。

A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a 模板:Dice (with six equally likely outcomes). Some other important measures in information theory are mutual information, channel capacity, error exponents, and relative entropy.

A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a (with six equally likely outcomes). Some other important measures in information theory are mutual information, channel capacity, error exponents, and relative entropy.

信息论中的一个关键度量是熵。熵量化了一个随机变量的值或者一个随机过程的结果所包含的不确定性。例如,识别一次公平抛硬币的结果(有两个同样可能的结果)所提供的信息(较低的熵)少于指定一卷 a 的结果(有六个同样可能的结果)。信息论中的其他一些重要指标有:互信息、信道容量、误差指数和相对熵。

Overview

Overview

概览

Information theory studies the transmission, processing, extraction, and utilization of information. Abstractly, information can be thought of as the resolution of uncertainty. In the case of communication of information over a noisy channel, this abstract concept was made concrete in 1948 by Claude Shannon in his paper "A Mathematical Theory of Communication", in which "information" is thought of as a set of possible messages, where the goal is to send these messages over a noisy channel, and then to have the receiver reconstruct the message with low probability of error, in spite of the channel noise. Shannon's main result, the noisy-channel coding theorem showed that, in the limit of many channel uses, the rate of information that is asymptotically achievable is equal to the channel capacity, a quantity dependent merely on the statistics of the channel over which the messages are sent.[1]

Information theory studies the transmission, processing, extraction, and utilization of information. Abstractly, information can be thought of as the resolution of uncertainty. In the case of communication of information over a noisy channel, this abstract concept was made concrete in 1948 by Claude Shannon in his paper "A Mathematical Theory of Communication", in which "information" is thought of as a set of possible messages, where the goal is to send these messages over a noisy channel, and then to have the receiver reconstruct the message with low probability of error, in spite of the channel noise. Shannon's main result, the noisy-channel coding theorem showed that, in the limit of many channel uses, the rate of information that is asymptotically achievable is equal to the channel capacity, a quantity dependent merely on the statistics of the channel over which the messages are sent.

信息论主要研究信息的传递、处理、提取和利用。抽象地说,信息可以作为不确定性的解决方案。1948年,Claude Shannon在他的论文"A Mathematical Theory of Communication"中将这个抽象的概念具体化,在这篇论文中“信息”被认为是一组可能的信息,其目标是通过噪声信道发送这些信息,然后让接收器在信道噪声的影响下以较低的错误概率来重构信息。Shannon的主要结果为:噪信道编码定理表明,在许多信道(这个数量仅仅依赖于信息发送所经过的信道的统计信息)使用的限制下,信道容量为渐近可达到的信息传输速率,。

Information theory is closely associated with a collection of pure and applied disciplines that have been investigated and reduced to engineering practice under a variety of rubrics throughout the world over the past half century or more: adaptive systems, anticipatory systems, artificial intelligence, complex systems, complexity science, cybernetics, informatics, machine learning, along with systems sciences of many descriptions. Information theory is a broad and deep mathematical theory, with equally broad and deep applications, amongst which is the vital field of coding theory.

Information theory is closely associated with a collection of pure and applied disciplines that have been investigated and reduced to engineering practice under a variety of rubrics throughout the world over the past half century or more: adaptive systems, anticipatory systems, artificial intelligence, complex systems, complexity science, cybernetics, informatics, machine learning, along with systems sciences of many descriptions. Information theory is a broad and deep mathematical theory, with equally broad and deep applications, amongst which is the vital field of coding theory.

信息论与一系列纯粹的、应用的学科密切相关,在过去半个世纪甚至更久的时间里,在全球范围内已经有各种专栏下被研究和简化为工程实践,比如在自适应系统,预期系统,人工智能,复杂系统,复杂性科学,控制论,信息学,机器学习,以及许多描述的系统科学等学科中的研究与应用。信息论是一个广泛而深入的数学理论,同样也具有广泛而深入的应用,其中编码理论是至关重要的领域。

Coding theory is concerned with finding explicit methods, called codes, for increasing the efficiency and reducing the error rate of data communication over noisy channels to near the channel capacity. These codes can be roughly subdivided into data compression (source coding) and error-correction (channel coding) techniques. In the latter case, it took many years to find the methods Shannon's work proved were possible.

Coding theory is concerned with finding explicit methods, called codes, for increasing the efficiency and reducing the error rate of data communication over noisy channels to near the channel capacity. These codes can be roughly subdivided into data compression (source coding) and error-correction (channel coding) techniques. In the latter case, it took many years to find the methods Shannon's work proved were possible.

编码理论与寻找明确的方法(编码)有关,用于提高效率和将噪声信道上传输的数据错误率降低到接近信道容量。这些编码可大致分为数据压缩编码(信源编码)和纠错(信道编码)技术。在后一种技术中,花了很多年才证明Shannon的工作是可行的。

A third class of information theory codes are cryptographic algorithms (both codes and ciphers). Concepts, methods and results from coding theory and information theory are widely used in cryptography and cryptanalysis. See the article ban (unit) for a historical application.

A third class of information theory codes are cryptographic algorithms (both codes and ciphers). Concepts, methods and results from coding theory and information theory are widely used in cryptography and cryptanalysis. See the article ban (unit) for a historical application.