Effective Information

Effective Information (EI) is a core concept in the theory of Causal Emergence, used to measure the strength of Causal Effects in Markov Dynamics. In this context, causal effect refers to the extent to which different input distributions lead to different output distributions when viewing the dynamics as a black box, and the strength of this connection between the input and output distributions is the causal effect. EI can typically be decomposed into two components: Determinism and Degeneracy. Determinism indicates how well the next state of the system can be predicted from its previous state, while Degeneracy refers to how well one can infer the previous state from the next state. A system with higher Determinism or lower Degeneracy will have higher Effective Information. In this page, all [math]\log[/math] represent logarithmic operations with a base of 2.

Historical Background

The concept of Effective Information (EI) was first introduced by Giulio Tononi in 2003 [1], as a key measure in Integrated Information Theory. A system is said to have a high degree of integration when there is a strong causal connection among its components, and EI is the metric used to quantify this degree of causal connection.

In 2013, Giulio Tononi's student, Erik Hoel, further refined the concept of EI to quantitatively characterize emergence, leading to the development of the theory of Causal Emergence[2]. In this theory, Hoel used Judea Pearl's do operator to modify the general Mutual Information metric [3], which made EI fundamentally different from Mutual Information. While Mutual Information measures correlation, EI—due to the use of the do operator—measures causality. The article also introduced a Normalized Version of EI, referred to as Eff.

Traditionally, EI was primarily applied to discrete-state Markov Chains. To extend this to continuous domains, P. Chvykov and E. Hoel collaborated in 2020 to propose the theory of Causal Geometry [4], expanding EI's definition to function mappings with continuous state variables. By incorporating Information Geometry, they explored a perturbative form of EI and compared it with Fisher Information, proposing the concept of Causal Geometry. However, this method of calculating EI for continuous variables required the assumption of infinitesimal variance for normal distribution variables, which was an overly stringent condition.

In 2022, to address the calculation of EI in general Feedforward Neural Networks, Zhang Jiang and Liu Kaiwei removed the variance constraint from the Causal Geometry approach and explored a more general form of EI, [5][6][7]. Nonetheless, a limitation remained: because the uniform distribution of variables in the real-number domain is strictly defined over an infinite space, the calculation of EI involved a parameter [math]L[/math], representing the range of the uniform distribution. To avoid this issue and enable comparisons of EI at different levels of Granularity, the authors proposed the concept of Dimension-averaged EI. They found that the Measure of Causal Emergence defined by Dimension-averaged EI was solely dependent on the determinant of the Neural Network's Jacobian Matrix and the variance of the random variables in the two compared dimensions, independent of other parameters such as L. Additionally, Dimension-averaged EI could be viewed as a Normalized EI, or Eff.

Essentially, EI is a quantity that depends only on the Dynamics of a Markov Dynamic System—specifically on the Markov State Probability Transition Matrix[8]. In their latest work on Dynamical Reversibility and Causal Emergence, Zhang Jiang and colleagues pointed out that EI is actually a characterization of the reversibility of the underlying Markov Probability Transition Matrix, and they attempted to directly characterize the reversibility of Markov chain dynamics as a replacement for EI[9].

Overview

The EI metric is primarily used to measure the strength of causal effects in Markov dynamics. Unlike general causal inference theories, EI is used in cases where the dynamics (the Markov transition probability matrix) are known and no unknown variables (i.e., Confounders) are present. Its core objective is to measure the strength of causal effects, rather than the existence of causal effects. This means EI is more suitable for scenarios where a causal relationship between variables X and Y is already established.

Formally, EI is a function of the causal mechanism (in a discrete-state Markov Chain, this is the Probability Transition Matrix of the Markov chain) and is independent of other factors. The formal definition of EI is:

[math]\displaystyle{ EI(P)\equiv I(Y;X|do(X\sim U)) }[/math]

where P represents the causal mechanism from X to Y, which is a probability transition matrix, [math]p_{ij}\equiv Pr(Y=j|X=i)[/math]; X is the cause variable, Y is the effect variable, and [math]do(X\sim U)[/math] denotes the do Intervention on X, changing its distribution to a uniform one. Under this intervention, and assuming the causal mechanism P remains unchanged, Y will be indirectly affected by the intervention on X. EI measures the mutual information between X and Y after this intervention.

The introduction of the do operator aims to eliminate the influence of X's distribution on EI, ensuring that the final EI metric is only a function of the causal mechanism f and is independent of X's distribution.

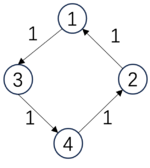

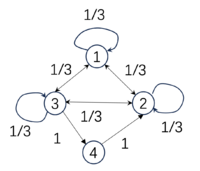

Below are three examples of Markov chains, with their respective EI values included:

| [math]\displaystyle{ P_1=\begin{pmatrix} &0 &0 &1 &0& \\ &1 &0 &0 &0& \\ &0 &0 &0 &1& \\ &0 &1 &0 &0& \\ \end{pmatrix} }[/math], | [math]\displaystyle{ P_2=\begin{pmatrix} &1/3 &1/3 &1/3 &0& \\ &1/3 &1/3 &1/3 &0& \\ &1/3 &1/3 &1/3 &0& \\ &0 &0 &0 &1& \\ \end{pmatrix} }[/math], | [math]\displaystyle{ P_3=\begin{pmatrix} &0 &0 &1 &0& \\ &1 &0 &0 &0& \\ &1 &0 &0 &0& \\ &1 &0 &0 &0& \\ \end{pmatrix} }[/math]. |

| [math]\begin{aligned}&EI(P_1)=2\ bits,\\&Det(P_1)=2\ bits,\\&Deg(P_1)=0\ bits\end{aligned}[/math] | [math]\begin{aligned}&EI(P_2)=0.81\ bits,\\&Det(P_2)=0.81\ bits,\\&Deg(P_2)=0\ bits\end{aligned}[/math] | [math]\begin{aligned}&EI(P_3)=0.81\ bits\\&Det(P_3)=2\ bits,\\&Deg(P_3)=1.19\ bits.\end{aligned}[/math] |

-

(example)

As we can see, the EI of the first matrix [math]P_1[/math] is higher than that of the second [math]P_2[/math] because this probability transition is fully deterministic: starting from a particular state, it transitions to another state with 100% probability. However, not all deterministic matrices correspond to high EI, such as matrix [math]P_3[/math]. Although its transition probabilities are also either 100% or 0, because all of the last three states transition to the first state, we cannot distinguish which state it was in the previous moment. Therefore, its EI is low, which we call Degeneracy. Hence, if a transition matrix has high determinism and low degeneracy, its EI will be high. Additionally, EI can be decomposed as follows:

[math]\displaystyle{ EI=Det-Deg }[/math]

Where Det stands for Determinism, and Deg stands for Degeneracy. EI is the difference between the two. In the table, we also list the values of Det and Deg corresponding to the matrices.

The first transition probability matrix is a Permutation matrix and is reversible; thus, it has the highest determinism, no degeneracy, and therefore the highest EI. The second matrix's first three states transition to each other with a 1/3 probability, resulting in the lowest determinism but also low degeneracy, yielding an EI of 0.81. The third matrix, despite having binary transitions, has high degeneracy because all three states transition to state 1, meaning we cannot infer their previous state. Thus, its EI equals that of the second matrix at 0.81.

Although in the original literature [2], EI was mostly applied to discrete-state Markov chains, Zhang Jiang, Liu Kaiwei, and Yang Mingzhe extended the definition to more general continuous-variable cases[5][6][7]. This extension builds on EI's original definition by intervening on the cause variable X as a uniform distribution over a sufficiently large bounded interval, [math][-\frac{L}{2}, \frac{L}{2}]^n[/math]. The causal mechanism is assumed to be a conditional probability that follows a Gaussian distribution with a mean function [math]f(x)[/math] and covariance matrix [math]\Sigma[/math]. Based on this, the EI between the causal variables is then measured. The causal mechanism here is determined by the mapping [math]f(x)[/math] and the covariance matrix, which together define the conditional probability [math]Pr(y|x)[/math].

More detailed explanations follow.

The Do-Form and Its Explanation

The original definition of effective information (EI) was based on discrete Markov chains. However, to expand its applicability, we explore a more general form of EI here.

Formal Definition

Consider two random variables, [math]X[/math] and [math]Y[/math], representing the Cause Variable and the Effect Variable, respectively. Let their value ranges be [math]\mathcal{X}[/math] and [math]\mathcal{Y}[/math]. The effective information (EI) from [math]X[/math] to [math]Y[/math] is defined as:

[math]\displaystyle{ EI\equiv I(X:Y|do(X\sim U(\mathcal{X})))\equiv I(\tilde{X}:\tilde{Y}) }[/math]

Here, [math]do(X\sim U(\mathcal{X}))[/math] represents applying a do-operator on [math]X[/math], making it following a uniform distribution [math]U(\mathcal{X})[/math] over [math]\mathcal{X}[/math], which corresponds to a Maximum Entropy Distribution. [math]\tilde{X}[/math] and [math]\tilde{Y}[/math] represent the variables after the [math]do[/math]-intervention on [math]X[/math] and [math]Y[/math], respectively, where:

[math]\displaystyle{ Pr(\tilde{X}=x)=\frac{1}{\#(\mathcal{X})}, }[/math]

This means that the main difference between [math]\tilde{X}[/math] after the intervention and [math]X[/math] before the intervention is their distributions: [math]\tilde{X}[/math] follows a uniform distribution over [math]\mathcal{X}[/math], while [math]X[/math] may follow any arbitrary distribution. [math]\#(\mathcal{X})[/math] represents the cardinality of the set [math]\mathcal{X}[/math], or the number of elements in the set if it is finite.

According to Judea Pearl's theory, the do-operator cuts off all causal arrows pointing to variable [math]X[/math], while keeping other factors unchanged, particularly the causal mechanism from [math]X[/math] to [math]Y[/math]. The Causal Mechanism is defined as the conditional probability of [math]Y[/math] taking any value [math]y\in \mathcal{Y}[/math] given [math]X[/math] takes a value [math]x\in \mathcal{X}[/math]:

[math]\displaystyle{ f\equiv Pr(Y=y|X=x) }[/math]

In the intervention, this Causal Mechanism [math]f[/math] remains unchanged. When no other variables are influencing the system, this leads to a change in the distribution of [math]Y[/math], which is indirectly intervened upon and becomes:

[math]\displaystyle{ Pr(\tilde{Y}=y)=\sum_{x\in \mathcal{X}}Pr(X=x) Pr(Y=y|X=x)=\sum_{x\in \mathcal{X}} \frac{Pr(Y=y|X=x)}{\#(\mathcal{X})}. }[/math]

Among them, [math]\tilde{Y}[/math] represents the [math]Y[/math] variable indirectly changed by [math]X[/math]'s do-intervention while maintaining the causal mechanism [math]f[/math] unchanged, and this change is mainly reflected in the change of probability distribution of [math]Y[/math].

Therefore, the effective information (EI) of a causal mechanism [math]f[/math] is the Mutual Information between the intervened cause variable [math]\tilde{X}[/math] and the intervened effect variable [math]\tilde{Y}[/math].

Why Use the Do-Operator?

While EI is essentially a measure of Mutual Information, unlike traditional Information Theory, effective information EI includes a do-operator in its definition, which involves an Intervention Operation on the input variable. Why is this intervention necessary?

According to Judea Pearl’s Ladder of Causality[3], Causal Inference can be divided into three levels: association, Intervention, and Counterfactuals. The higher the level, the more apparent the causal characteristics. Directly estimating Mutual Information from observational data measures the level of association. If we can intervene in the variables, i.e., set a variable to a specific value or make it follow a particular distribution, we move up to the intervention level. By introducing the [math]do[/math] operator in the definition of EI, we allow EI to capture causal features more effectively than Mutual Information alone.

From a practical perspective, incorporating the do-operator in EI’s calculation separates the data from the dynamics, eliminating the effect of the data distribution (i.e., [math]X[/math] distribution) on the EI measurement. In general Causal Graphs, the do-operator cuts off all causal arrows pointing to the intervened variable, preventing Confounding Factors from creating Spurious Associations. Similarly, in EI's definition, the do-operator removes all causal arrows pointing to the cause variable [math]X[/math], including influences from other variables (both observable and unobservable). This ensures that EI captures the intrinsic characteristics of the dynamics itself.

The introduction of the do-operator makes EI distinct from other information metrics. The key difference is that EI is solely a function of the Causal Mechanism, which allows it to more precisely capture the essence of causality compared to other metrics like Transfer Entropy. However, this also means that EI requires the knowledge of or access to the Causal Mechanism, which may be challenging if only observational data is available.

Why Intervene to Achieve a Uniform Distribution?

In Erik Hoel's original definition, the do-operator intervenes on the dependent variable [math]X[/math], transforming it into a Uniform Distribution over its domain [math]\mathcal{X}[/math] (which is also the Maximum Entropy Distribution)[2][10]. So, why should we intervene to achieve a Uniform Distribution? Can other distributions be used?

Firstly, according to the previous section, the essence of the do-operator is to allow the Effective Information (EI) to better describe the nature of the Causal Mechanism [math]f[/math]. Therefore, it is necessary to sever the connection between the dependent variable [math]X[/math] and other variables and change its distribution so that the EI metric becomes independent of the distribution of [math]X[/math].

The reason for intervening to achieve a Uniform Distribution for the input variable is to more accurately characterize the properties of the Causal Mechanism.

This is because, when both [math]\mathcal{X}[/math] and [math]\mathcal{Y}[/math] are finite, countable sets, the causal mechanism [math]f\equiv Pr(Y=y|X=x)[/math] becomes a matrix with [math]\#(\mathcal{X})[/math] rows and [math]\#(\mathcal{Y})[/math] columns. We can expand the definition of EI:

-

[math]\displaystyle{ \begin{aligned} EI &= I(X,Y|do(X)\sim U)= \sum_{x\in\mathcal{X}}\sum_{y\in\mathcal{Y}}Pr(X=x,Y=y)\log \frac{Pr(X=x,Y=y)}{Pr(X=x)Pr(Y=y)}\\ &= \sum_{x\in\mathcal{X}}\sum_{y\in\mathcal{Y}}Pr(X=x)Pr(Y=y|X=x)\log \frac{Pr(Y=y|X=x)}{Pr(Y=y)}\\ &= \sum_{x\in\mathcal{X}}\sum_{y\in\mathcal{Y}}Pr(X=x)Pr(Y=y|X=x)\log Pr(Y=y|X=x)- \sum_{x\in\mathcal{X}}\sum_{y\in\mathcal{Y}}Pr(X=x)Pr(Y=y|X=x)\log Pr(Y=y) \\ &=\frac{1}{\#(\mathcal{X})}\left(-\sum_{x\in\mathcal{X}}H(Pr(Y|X))\right) + H(Pr(Y)) \end{aligned} }[/math]

(1)

It is not difficult to see that the final equation tells us that EI is actually composed of two terms: the first term is the average of the negative entropy of each row of the causal mechanism matrix, and the second term is the entropy of the variable [math]Y[/math]. In the first term, the probability distribution [math]Pr(X=x)[/math] of [math]X[/math] acts as the weight when averaging the entropy of each row. Only when we set this weight to be the same value (i.e., intervene to make [math]X[/math] uniformly distributed) can we treat each row of the causal mechanism matrix equally.

If the distribution is not uniform, some rows will be assigned a larger weight, while others will be given a smaller weight. This weight represents a certain bias, which prevents the EI from reflecting the natural properties of the causal mechanism.

Effective Information as the Distribution Difference

In the literature[11], the author defines effective information in another way. This new form of effective information depends on the state of the outcome variable (Y), that is, the state of [math]\tilde{Y}[/math] after intervening [math]X[/math] to be uniformly distributed is the given value [math]Y_0[/math]. Under this condition, effective information is defined as the KL Divergence between two probability distributions: the prior distribution of the dependent variable [math]\tilde{X}[/math], i.e. the uniform distribution [math]U[/math] on [math]\mathcal{X}[/math], and the posterior distribution of it, i.e., [math]P(\tilde{X}|\tilde{Y}=Y_0,f)[/math], which is the inferred distribution of [math]\tilde{X}[/math] after the event [math]\tilde{Y}=Y_0[/math] is observed. And this event is the effect caused by the intervention of [math]do(X\sim U(\mathcal{X}))[/math] under the causal mechanism [math]f[/math].

So, there will be a difference between the prior probability distribution and the posterior probability distribution, which is the effective information generated by the causal mechanism f, and can be defined as:

[math]\displaystyle{ ei(f,Y_0)=D_{KL}(U||P(\tilde{X}|\tilde{Y}=Y_0,f) }[/math]

Here, [math]\tilde{X}[/math] and [math]\tilde{Y}[/math] respectively represent the cause and effect variables after intervening [math]X[/math] into a uniform distribution (i.e. prior distribution), while keeping the causal mechanism [math]f[/math] unchanged. It is worth noting that in the literature[11], the author did not explicitly provide the form of the KL Divergence. In subsequent literature (Integrated Information Theory 3.0 Version[12]), the author used other symmetry measures related to probability distribution distance, such as the Earth Moving Distance.

In fact, [math]ei(f,Y_0)[/math] is the effective information value under a certain [math]Y_0[/math] value. If we take the average of all [math]Y_0[/math] values, we can obtain the effective information in the usual sense, which is the equation 1. To understand this, we first need to introduce the Bayesian Formula, which is:

[math]\displaystyle{ P(\tilde{X}|\tilde{Y}=Y_0,f)=\frac{P(\tilde{X},\tilde{Y_0})}{P(\tilde{Y_0})}=\frac{P(\tilde{X_0})P(\tilde{Y_0}|\tilde{X})}{P(\tilde{Y_0})}=\frac{P(\tilde{Y_0}|\tilde{X})}{\#(\mathcal{X})\cdot P(\tilde{Y_0})} }[/math]

Here, [math]\tilde{Y_0}\equiv (\tilde{Y}=Y_0)[/math]. Please note that the conditional probability [math]P(\tilde{Y_0}|\tilde{X})[/math] here is actually the causal mechanism [math]f[/math]. Furthermore, by substituting it into the formula [math]ei(f,Y_0)[/math], we can easily obtain:

[math]\displaystyle{ ei(f,Y_0)=D_{KL}(U||P(\tilde{X}|\tilde{Y}=Y_0,f)=-\log \#(\mathcal{X})-\frac{1}{\#(\mathcal{X})}\sum_{\tilde{X}}\log\frac{P(\tilde{Y_0}|\tilde{X})}{P(\tilde{Y_0})} }[/math]

By taking the expected value of [math]\tilde{Y_0}[/math] for all values in the above equation, we can obtain:

[math]\displaystyle{ EI=\frac{1}{\#(\mathcal{X})}\sum_{\tilde{X},\tilde{Y_0}}P(\tilde{Y_0}|\tilde{X})\log\frac{P(\tilde{Y_0}|\tilde{X})}{P(\tilde{Y_0})}=\log \#(\mathcal{X})-\mathbb{E}_{P(\tilde{Y_0}|\tilde{X})}(ei(f,Y_0)) }[/math]

The introduction of [math]ei[/math] helps us to understand how a local causal mechanism changes the distribution of the original variable, or in Tononi's language, it is a mechanism of information generation, as detailed in the article[11] or the Integrated Information Theory.

Effective Information of Markov Chains

Introduction to Markov Chains

In this section, all Markov transition probability matrices are denoted as [math]P[/math]. N is the total number of states.

Erik Hoel and colleagues first proposed the measure of causality, Effective Information (EI), on the Markov Dynamics with discrete states, also known as Markov Chains. Therefore, this section introduces the specific form of EI on Markov Chains.

A Markov Chain refers to a type of Stationary Stochastic Process with discrete states and discrete time. Its dynamics can generally be represented by a Transitional Probability Matrix (TPM), also known as a Probability Transition Matrix or State Transition Matrix.

Specifically, a Markov Chain consists of a set of random variables [math]X_t[/math], which take values in the state space [math]\mathcal{X}=\{1,2,\cdots,N\}[/math], where [math]t[/math] typically represents time. A Transition Probability Matrix is a probability matrix, where the element in the [math]i[/math]-th row and [math]j[/math]-th column, [math]p_{ij}[/math], represents the probability of the system transitioning from state [math]i[/math] at any time [math]t[/math] to state [math]j[/math] at time [math]t+1[/math]. Each row satisfies the normalization condition:

[math]\displaystyle{ \sum_{j=1}^Np_{ij}=1, }[/math]

The Transitional Probability Matrix can be viewed as the Dynamics of the Markov Chain because the probability distribution of the state at any time [math]t+1[/math], [math]Pr(X_t)[/math], can be uniquely determined by the state probability distribution at the previous moment, i.e., [math]Pr(X_t)[/math] and Transitional Probability Matrix, satisfying the relation:

[math]\displaystyle{ Pr(X_{t+1}=j)=\sum_{i=1}^N p_{ij}\cdot Pr(X_t=i), }[/math]

Here [math]i,j\in \mathcal{X}[/math] are all arbitrary states in [math]\mathcal{X}[/math], and [math]N=\#(\mathcal{X})[/math] is the total number of states in [math]\mathcal{X}[/math].

The following table presents three different transition probability matrices and their EI values:

| [math]\displaystyle{ P_1=\begin{pmatrix} &0 &0 &1 &0& \\ &1 &0 &0 &0& \\ &0 &0 &0 &1& \\ &0 &1 &0 &0& \\ \end{pmatrix} }[/math], | [math]\displaystyle{ P_2=\begin{pmatrix} &1/3 &1/3 &1/3 &0& \\ &1/3 &1/3 &1/3 &0& \\ &0 &0 &0 &1& \\ &0 &0 &0 &1& \\ \end{pmatrix} }[/math], | [math]\displaystyle{ P_3=\begin{pmatrix} &1/4 &1/4 &1/4 &1/4& \\ &1/4 &1/4 &1/4 &1/4& \\ &1/4 &1/4 &1/4 &1/4& \\ &1/4 &1/4 &1/4 &1/4& \\ \end{pmatrix} }[/math]. |

| [math]EI(P_1)=2[/math] bits | [math]EI(P_2)=1[/math] bits | [math]EI(P_3)=0[/math] bits |

For these three Markov Chains, the state space is [math]\mathcal{X}=\{1,2,3,4\}[/math], so the size of their TPM is [math]4\times 4[/math].

EI of Markov Chains

In a Markov Chain, the state variable at any time [math]X_t[/math] can be considered as the cause, and the state variable at the next time [math]X_{t+1}[/math] can be considered as the effect. Thus, the Transitional Probability Matrix of a Markov Chain is its Causal Mechanism. Therefore, we can apply the definition of Effective Information to the Markov Chain.

[math]\displaystyle{

\begin{aligned}

EI &= I(X_t,X_{t+1}|do(X_t)\sim U(\mathcal{X}))=I(\tilde{X}_t,\tilde{X}_{t+1}) \\

&= \sum^N_{i=1}\sum^N_{j=1}Pr(\tilde{X}_t=i,\tilde{X}_{t+1}=j)\log \frac{Pr(\tilde{X}_t=i,\tilde{X}_{t+1}=j)}{Pr(\tilde{X}_t=i)Pr(\tilde{X}_{t+1}=j)}\\

&= \sum^N_{i=1}Pr(\tilde{X}_t=i)\sum^N_{j=1}Pr(\tilde{X}_{t+1}=j|\tilde{X}_t=i)\log \frac{Pr(\tilde{X}_{t+1}=j|\tilde{X}_t=i)}{Pr(\tilde{X}_{t+1}=j)}\\

&= \frac{1}{N}\sum^N_{i=1}\sum^N_{j=1}p_{ij}\log\frac{N\cdot p_{ij}}{\sum_{k=1}^N p_{kj}}

\end{aligned}

}[/math]

Here, [math]\displaystyle{ \tilde{X}_t,\tilde{X}_{t+1} }[/math] are the states at times t and t+1 after intervening to make [math]X_t[/math] Uniformly Distributed, and [math]\displaystyle{ p_{ij} }[/math] is the probability of transitioning from state i to state j. From this equation, it is clear that EI is merely a function of the probability transition matrix [math]P[/math].

Vector Form of EI in Markov Chains

We can also represent the Transitional Probability Matrix [math]P[/math] as a concatenation of [math]N[/math] row vectors, i.e.:

[math]\displaystyle{ P=(P_1^T,P_2^T,\cdots,P_N^T)^T }[/math]

Where [math]P_i[/math] is the [math]i[/math]-th row vector of matrix [math]P[/math], and it satisfies the normalization condition for conditional probabilities: [math]||P_i||_1=1[/math], where [math]||\cdot||_1[/math] denotes the 1-norm of a vector. Then, EI can be written as follows:

-

[math]\displaystyle{ \begin{aligned} EI &= \frac{1}{N}\sum^N_{i=1}\sum^N_{j=1}p_{ij}\log\frac{N\cdot p_{ij}}{\sum_{k=1}^N p_{kj}}\\ &=\frac{1}{N}\cdot \sum_{i=1}^N\left(P_i\cdot \log P_i - P_i\cdot\log \bar{P}\right)\\ &=\frac{1}{N}\sum_{i=1}^N D_{KL}(P_i||\bar{P}) \end{aligned} }[/math]

(2)

By averaging the columns of the matrix, we obtain the average transition vector [math]\displaystyle{ \overline{P}=\sum_{k=1}^N P_k/N }[/math]. [math]D_{KL}[/math] is the KL Divergence between two distributions. Therefore, EI is the average KL Divergence between each row transition vector [math]P_i[/math] and the average transition vector [math]\bar{P}[/math].

For the three Transitional Probability Matrices listed above, their respective EI values are: 2 bits, 1 bit, and 0 bits. This shows that if more 0s or 1s appear in the Transitional Probability Matrix (i.e., if more of the row vectors are One-hot Vectors, where one position is 1 and the others are 0), the EI value will be higher. In other words, the more deterministic the jump from one time to the next, the higher the EI value tends to be. However, this observation is not entirely precise, and more exact conclusions are provided in the following sections.

Normalization

Clearly, the magnitude of EI (Effective Information) is related to the size of the state space, which poses challenges when comparing Markov Chains of different scales. To address this issue, we need a Measure of Causal Effect that is as independent of scale effects as possible. Therefore, we normalize EI to derive a metric that is independent of the system size.

According to the work of Erik Hoel and Tononi, the normalization process involves using the entropy under a Uniform Distribution (i.e., Maximum Entropy) as the denominator, [math]\displaystyle{ \log N }[/math], is used as the denominator to normalize EI, where [math]N[/math] is the number of states [2] in the state space [math]\mathcal{X}[/math]. Thus, the normalized EI becomes:

[math]\displaystyle{ Eff=\frac{EI}{\log N} }[/math]

This normalized metric is also referred to as Effectiveness.

However, when dealing with continuous state variables, normalizing EI by using the logarithm of the number of states in the state space may not be suitable, as the state number often depends on the dimensionality and the resolution of real numbers.

Determinism and Degeneracy

Decomposition of EI

From Equation 1, we see that EI can actually be decomposed into two terms:

[math]\displaystyle{ \begin{aligned} EI&=\frac{1}{\#(\mathcal{X})} \left(-\sum_{x\in\mathcal{X}}H(Pr(Y|X))\right) + H\left(Pr(Y)\right)\\ \end{aligned} }[/math]

Similarly, in the context of Markov chains, EI can be decomposed as:

-

[math]\displaystyle{ \begin{aligned} EI &= \frac{1}{N}\cdot \sum_{i=1}^N\left(P_i\cdot \log P_i - P_i\cdot\log \bar{P}\right)\\ &=\underbrace{-\langle H(P_i)\rangle}_{Determinism\ Term}+\underbrace{H(\bar{P})}_{Non-degeneracy\ Term} \end{aligned} }[/math]

(tow_terms)

Where the first term, [math]-\langle H(P_i)\rangle\equiv \frac{1}{N}\sum_{i=1}^N H(P_i)[/math], represents the negative average entropy of each row vector [math]P_i[/math], which measures the Determinism of the Markov transition matrix.

The second term, [math]H(\bar{P})[/math] is the entropy of the average row vector, where [math]\bar{P}\equiv \frac{1}{N}\sum_{i=1}^N P_i [/math] is the average row vector of all N row vectors, and it measures the Non-degeneracy of the Markov transition matrix.

Determinism and Degeneracy

In the above definition, the determinism and non-degeneracy terms are negative. To prevent this, we redefine the Determinism of a Markov chain transition matrix P as:

[math]\displaystyle{ Determinism \equiv \log N - \langle H(P_i)\rangle = \frac{1}{N}\sum_{i=1}^N \sum_{j=1}^N p_{ij}\log \left(N\cdot p_{ij}\right) }[/math]

This term is an average Negative Entropy, where the addition of [math]\log N[/math][2] prevents it from being negative. Determinism quantifies the certainty in predicting the system's next state given its current state. The reason lies in the fact that when a vector is closer to a uniform distribution, its entropy is larger, and when it is closer to a one-hot vector (where one element is 1 and others are 0), its entropy is smaller. The row vectors of the Markov transition matrix indicate the probabilities of transitioning from the current state to various future states. When the average negative entropy of the row vectors is high, it means that one element of the row vector has a probability of 1 while others are 0, indicating that the system will definitely transition to a specific state.

We also define the Degeneracy of a Markov chain transition matrix P as:

[math]\displaystyle{ Degeneracy \equiv \log N - H(\bar{P})=\log N + \sum_{j=1}^N \bar{P}_{\cdot j}\log \bar{P}_{\cdot j}=\sum_{j=1}^N \frac{\sum_{i=1}^Np_{ij}}{N}\log \left(\sum_{i=1}^Np_{ij}\right) }[/math]

This term measures degeneracy. In order to prevent it from being negative, [math]\log N[/math][2] was added. The meaning of degeneracy is: if the current state of the system is known, can it be deduced from the state of the system at the previous moment? If it can be inferred, then the degeneracy of this Markov matrix will be relatively low. If it is difficult to deduce, the Markov matrix is degenerate. Why can degeneracy be described by the negative entropy of the average row vector distribution? First of all, when all the row vectors in P are independent of each other, then their average distribution will be very close to a uniform distribution, i.e [math]\bar{P}\approx (\frac{1}{N},\frac{1}{N},\cdots,\frac{1}{N})[/math], resulting in maximum Shannon Entropy, i.e., [math]\log N[/math]. In this case, the Markov transition matrix is a Invertible Matrix, indicating that we can deduce the previous state from the current state. Therefore, this Markov matrix is non-degenerate, and the computed degeneracy is zero.

Conversely, when all row vectors of P are identical, the average vector is also a one-hot vector with minimum Entropy. In this case, it is challenging to infer the previous state from the current state, leading to a degenerate (or non-reversible) Markov matrix, with a computed degeneracy equal to [math]\log N[/math].

In more general situations, if the row vectors of P resemble a matrix formed by independent one-hot vectors, P becomes less degenerate. On the other hand, if the row vectors are identical and close to a one-hot vector, P becomes more degenerate.

Examples

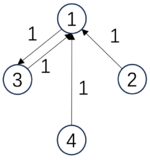

Below, we examine the determinism and degeneracy of three Markov chains.

| [math]\displaystyle{ P_1=\begin{pmatrix} &0 &0 &1 &0& \\ &1 &0 &0 &0& \\ &0 &0 &0 &1& \\ &0 &1 &0 &0& \\ \end{pmatrix} }[/math], | [math]\displaystyle{ P_2=\begin{pmatrix} &1/3 &1/3 &1/3 &0& \\ &1/3 &1/3 &1/3 &0& \\ &1/3 &1/3 &1/3 &0& \\ &0 &0 &0 &1& \\ \end{pmatrix} }[/math], | [math]\displaystyle{ P_3=\begin{pmatrix} &0 &0 &1 &0& \\ &1 &0 &0 &0& \\ &1 &0 &0 &0& \\ &1 &0 &0 &0& \\ \end{pmatrix} }[/math]. |

| [math]\begin{aligned}&Det(P_1)=2\ bits,\\&Deg(P_1)=0\ bits,\\&EI(P_1)=2\ bits\end{aligned}[/math] | [math]\begin{aligned}&Det(P_2)=0.81\ bits,\\&Deg(P_2)=0\ bits,\\&EI(P_2)=0.81\ bits\end{aligned}[/math] | [math]\begin{aligned}&Det(P_3)=2\ bits,\\&Deg(P_3)=1.19\ bits,\\&EI(P_3)=0.81\ bits\end{aligned}[/math] |

The first transition probability matrix is a Permutation Matrix, which is invertible. It has the highest determinism and no degeneracy, leading to the maximum EI. The second matrix has the first three states transitioning to one another with equal probability (1/3), resulting in the lowest determinism but zero degeneracy, with EI being 0.81. The third matrix is deterministic but since three of the states transition to the first state, it's impossible to infer from state 1 which previous state led to it. Therefore, it has high degeneracy, and its EI is also 0.81, the same as the second.

Normalized Determinism and Degeneracy

In Erik Hoel's original paper [2], determinism and degeneracy are presented in a normalized form by dividing them by a scale-dependent quantity. To distinguish between the two, we refer to the normalized quantities as the Determinism Coefficient and the Degeneracy Coefficient.

Specifically, Erik Hoel et al. decomposed the normalized effective information, i.e., Eff, corresponding to the determinism coefficient and the degeneracy coefficient.

[math]\displaystyle{ Eff = Determinism\ Coefficient - Degeneracy\ Coefficient }[/math]

The definitions of these two items are:

[math]\displaystyle{ \begin{aligned} &Determinism\ Coefficient = \frac{1}{N\log N}\sum_{i,j}p_{ij}\log\left(N\cdot {p_{ij}}\right) \\ &Degeneracy\ Coefficient = \frac{1}{N\log N}\sum_{ij}p_{ij}\log{\left(\sum_k p_{k,j}\right)} \end{aligned} }[/math]

In summary, determinism refers to how much confidence we have in predicting future states based on the current state's probability distribution, while degeneracy refers to how much certainty we have in inferring previous states from the current state. Systems with high determinism and low degeneracy exhibit strong causal dynamics.

Properties of the EI Function

From Equation 2, we can see that in the transition probability matrix P, EI is a function of each element, representing the conditional probabilities of transitioning from one state to another. Thus, a natural question arises: What mathematical properties does this function have? Does it have extreme points, and if so, where are they? Is it convex? What are its maximum and minimum values?

Domain

In the case of Markov chains with discrete states and discrete time, the domain of EI is clearly the transition probability matrix P. P is a matrix composed of [math]N\times N[/math] elements, each [math]p_{ij}\in[0,1][/math] representing a probability value. Additionally, each row must satisfy the normalization condition, meaning for any [math]\forall i\in[1,N][/math]:

-

[math]\displaystyle{ ||P_i||_1=\sum_{j=1}^N p_{ij}=1 }[/math]

(3)

Thus, the domain of EI, which is the possible space for P, is not the entire real space [math]\mathcal{R}^{N^2}[/math] of [math]N\times N[/math] dimensions. Due to the normalization condition 3, it is a subspace of the [math]N\times N[/math] dimensional real space. How can we express this subspace?

For any row vector [math]P_i[/math], its value range is a hyper simplex in N-dimensional real space. For example, when [math]N=2[/math], this space is a line segment: [math]p_{i,1}+p_{i,2}=1, \forall i\in\{1,2\}[/math]. When [math]N=3[/math], this space is a plane in three-dimensional space: [math]p_{i,1}+p_{i,2}+p_{i,3}=1, \forall i\in\{1,2,3\}[/math]. These two spaces are illustrated below:

In the general case, we define the value range space of the row vector [math]P_i[/math] in N-dimensional space as [math]\Delta=\{p_{j}|\sum_{j=1}^Np_{j}=1,p_{j}\in[0,1]\}[/math]. The Cartesian product of N such spaces forms the domain of EI:

[math]\displaystyle{ Dom(EI)=\Delta\times \Delta\cdots\times\Delta=\Delta^N }[/math]

First Derivative and Extreme Points

Taking the first derivative of Equation 2 with respect to [math]p_{ij}[/math], and considering the normalization condition [math]\sum_{j=1}^Np_{ij}=1[/math], we get:

-

[math]\displaystyle{ \frac{\partial EI}{\partial p_{ij}}=\log\left(\frac{p_{ij}}{p_{iN}}\right)-\log\left(\frac{\bar{p}_{\cdot j}}{\bar{p}_{\cdot N}}\right), }[/math]

(3)

Here, [math]\displaystyle{ p_{ij} }[/math] denotes the conditional probability of transitioning from state i to state j in matrix P. Since each row of P is subject to the normalization constraint 2, the EI function has [math]\displaystyle{ N(N-1) }[/math] degrees of freedom. We can select [math]\displaystyle{ 1\leq i\leq N, 1\leq j\leq N-1 }[/math], with [math]\displaystyle{ p_{iN} }[/math] representing the conditional probability in the i-th row and N-th column. [math]\displaystyle{ \bar{p}_{\cdot j}, \bar{p}_{\cdot N} }[/math] represent the average conditional probabilities of the j-th and N-th columns, respectively.

It is easy to see that this derivative is defined only when, for the selected [math]i,j\in[1,N][/math], the probabilities [math]p_{ij},p_{iN},\bar{p}_{\cdot j}\equiv \frac{\sum_{k=1}^Np_{kj}}{N},\bar{p}_{\cdot N}\equiv \frac{\sum_{k=1}^Np_{kN}}{N}[/math] are all greater than 0. Only if this condition is satisfied, then EI is derivable in the vicinity of [math]p_{ij}[/math]. Otherwise, if any of these terms are 0, the derivative does not exist.

Setting Equation 3 to 0 yields the extreme points. For any [math]\displaystyle{ 1\leq i\leq N, 1\leq j\leq N-1 }[/math], the following equation holds:

[math]\displaystyle{ p_{ij}=\bar{p}_{\cdot j}=\frac{1}{N}\sum_{k=1}^Np_{kj} }[/math]

It is straightforward to compute that in this case, [math]\displaystyle{ EI=0 }[/math], indicating that EI reaches an extreme point. From the second derivative of EI, we can easily determine that this is a minimum point. In other words, EI has many minimum points, as long as all the row vectors of the transition probability matrix are identical. Regardless of the specific distribution of these row vectors, EI will be zero.

Second Derivative and Convexity

To explore the convexity of the EI function, we can compute its second derivative [math]\displaystyle{ \frac{\partial^2 EI}{\partial p_{ij}\partial p_{st}} }[/math], where [math]\displaystyle{ 1\leq s \leq N, 1\leq t \leq N-1 }[/math]. First, we introduce a function symbol [math]\displaystyle{ \delta_{i,j} }[/math],

[math]\displaystyle{ \delta_{i,j} = \begin{cases} 0 & \text{if } i\ne j,\\ 1 & \text{if } i = j. \end{cases} }[/math]

Then, proceed to derive the second derivative. When [math]\displaystyle{ i=s }[/math],

[math]\displaystyle{ \begin{equation} \begin{aligned} \frac{\partial^2 EI}{\partial p_{ij}\partial p_{it}}&=\frac{\delta_{j,t}}{N}\left(\frac{1}{p_{ij}}-\frac{1}{N\cdot \bar{p}_{\cdot j}}\right)+\frac{1}{N\cdot p_{iN}}-\frac{1}{N^2\cdot \bar{p}_{\cdot N}}\\ &=\delta_{j,t}\frac{\sum_{k=1}^{N-1}p_{k j}-p_{ij}}{N^2\cdot p_{ij}\cdot \bar{p}_{\cdot j}}+\frac{\sum_{k=1}^{N-1}p_{k N}-p_{iN}}{N^2\cdot p_{iN}\cdot \bar{p}_{\cdot N}}\\ &=\delta_{j,t}\frac{\sum_{k\neq i}p_{kj}}{N^2\cdot p_{ij}\cdot \bar{p}_{\cdot j}}+\frac{\sum_{k\neq i}p_{k N}}{N^2\cdot p_{iN}\cdot \bar{p}_{\cdot N}}\gt 0, \end{aligned} \end{equation} }[/math]

When [math]\displaystyle{ i\ne s }[/math],

[math]\displaystyle{ \begin{equation} \frac{\partial^2 EI}{\partial p_{ij}\partial p_{st}}=-\frac{\delta_{j,t}}{N^2\cdot \bar{p}_{\cdot j}}-\frac{1}{N^2\cdot \bar{p}_{\cdot N}}\lt 0. \end{equation} }[/math]

In summary, the second derivative of EI is:

[math]\displaystyle{ \begin{equation} \frac{\partial^2 EI}{\partial p_{ij}\partial p_{st}}=\frac{1}{N}\cdot\left(\frac{\delta_{i,s}\delta_{j,t}}{p_{ij}}+\frac{\delta_{i,s}}{p_{iN}}-\frac{\delta_{j,t}}{N\cdot\bar{p}_{\cdot j}}-\frac{1}{N\cdot \bar{p}_{\cdot N}}\right). \end{equation} }[/math]

Moreover, the second derivative is positive when [math]\displaystyle{ i=s }[/math] and negative when [math]\displaystyle{ i\ne s }[/math]. Therefore, EI is neither convex nor concave.

Minimum Value

From the first derivative of EI, we discussed the extreme value problem, showing that EI reaches a minimum of 0 when all the row vectors of the transition matrix are identical. Is this minimum value the lowest possible value?

We know that based on Equation 2, EI is essentially a form of KL divergence, which is always non-negative. Thus:

[math]\displaystyle{ EI\geq 0 }[/math]

The equality holds when [math]p_{ij}=\bar{p}_{\cdot j}[/math] for all [math]i,j[/math]. Hence, 0 is the minimum value of EI, with the minimum point being [math]p_{ij}=\bar{p}_{\cdot j}=\frac{1}{N}\sum_{k=1}^Np_{kj}[/math]:

[math]\displaystyle{ EI_{min}=0. }[/math]

Maximum Value

From the previous discussion on determinism and degeneracy, we know that EI can be decomposed into two parts:

[math]\displaystyle{ EI = -\langle H(P_i)\rangle + H(\bar{P}), }[/math]

The definition of entropy is: [math]\displaystyle{ H(P_i)=-\sum_{j=1}^Np_{ij}\log p_{ij} }[/math]. Looking separately, the maximum value of the previous equation is 0, that is:

[math]\displaystyle{ - H(P_i)\leq 0, }[/math]

When [math]\displaystyle{ P_i }[/math] is a one-hot vector without uncertainty, the equal sign of this equation holds. This occurs when [math]\displaystyle{ P_i }[/math] is a deterministic one-hot vector. Therefore, when all [math]\displaystyle{ P_i }[/math] are one-hot vectors, we have:

[math]\displaystyle{

-\langle H(P_i)\rangle=0

}[/math]

On the other hand, there is also an inequality that holds for the entropy of the average vector of all row vectors in P,

[math]\displaystyle{ H(\bar{P})\leq \log N, }[/math]

The equal sign holds when the condition [math]\displaystyle{ \bar{P}=\frac{1}{N}\cdot \mathbb{1} }[/math] is satisfied, where [math]\mathbb{1}[/math] represents the vector with all elements being 1.

[math]\displaystyle{ EI\leq 0+\log N=\log N }[/math]

When the equal sign of these two equations holds simultaneously, that is, when [math]\displaystyle{ P_i }[/math] is a one-hot vector and [math]\displaystyle{ \bar{P} }[/math] is uniformly distributed (at this time, it is necessary to require [math]P_i[/math] to be perpendicular to each other, that is, P is a Permutation Matrix) — EI reaches its maximum value of:

[math]\displaystyle{ EI_{max}=\log N }[/math]

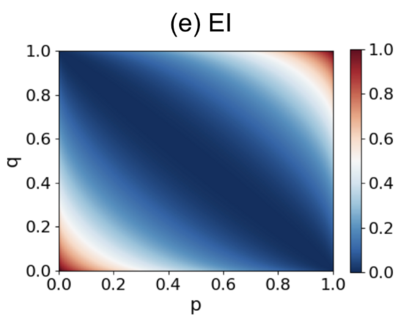

Analytical Solution of the Simplest Markov Chain

We consider the simplest 2*2 Markov chain matrix:

[math]\displaystyle{ P=\begin{pmatrix}p & 1-p \\1-q & q\end{pmatrix}, }[/math]

Here, [math]p[/math] and [math]q[/math] are parameters that take values in the range [math][0,1][/math].

The EI (Effective Information) of this transition probability matrix, which depends on [math]p[/math] and [math]q[/math], can be calculated using the following analytical solution:

[math]\displaystyle{ EI=\frac{1}{2}\left[p\log_2\frac{2p}{1+p-q}+(1-p)\log_2\frac{2(1-p)}{1-p+q}\right. + \left.(1-q)\log_2\frac{2(1-q)}{1+p-q}+q\log_2\frac{2q}{1-p+q}\right] }[/math]

The diagram below shows how EI changes with different values of [math]p[/math] and [math]q[/math].

It is clear from the graph that when p+q=1, meaning that all the row vectors are identical, EI reaches its minimum value of 0. Otherwise, as p and q increase along the direction perpendicular to p+q=1, EI increases, with the maximum value being 1.

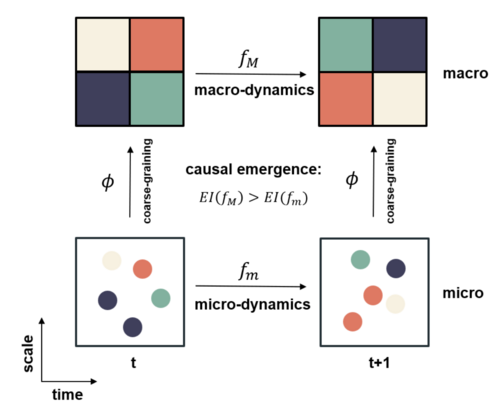

Causal Emergence

With the metric of Effective Information (EI) in place, we can now discuss causal emergence in Markov chains. For a Markov chain, an observer can adopt a multi-scale perspective to distinguish between micro and macro levels. First, the original Markov transition matrix P defines the micro-level dynamics. Second, after coarse-graining the Markov chain that maps microstates into macrostates (typically by grouping microstates together), the observer can obtain a macro-level transition matrix P′, which describes the transition probabilities between macrostates. We can compute EI for both dynamics. If the macro-level EI is greater than the micro-level EI, we say that the system exhibits causal emergence.

A new metric can be defined to directly measure the degree of causal emergence:

[math]\displaystyle{ CE = EI(P') - EI(P) }[/math]

Here, [math]P[/math] is the microstate Markov transition matrix with dimensions [math]N\times N[/math], where N is the number of microstates. [math]P'[/math] is the macro-state transition matrix obtained after the coarse-graining of [math]P[/math], with dimensions [math]M\times M[/math], where [math]M<N[/math] represents the number of macrostates.

The process of coarse-graining a Markov transition matrix typically involves two steps: 1) grouping N microstates into M macrostates, and 2) reducing the Markov transition matrix accordingly. For more details on the specific methods for coarse-graining a Markov chain, refer to the topic of Markov Chain Coarse-graining.

If the computed CE>0, the system is said to exhibit Causal Emergence; otherwise, it does not.

Below, we demonstrate a specific example of causal emergence:

|

[math]\displaystyle{ P_m=\begin{pmatrix} &1/3 &1/3 &1/3 &0& \\ &1/3 &1/3 &1/3 &0& \\ &1/3 &1/3 &1/3 &0& \\ &0 &0 &0 &1& \\ \end{pmatrix} }[/math], |

[math]\displaystyle{ P_M=\begin{pmatrix} &1 &0 & \\ &0 &1 & \\ \end{pmatrix} }[/math]. |

| [math]\begin{aligned}&Det(P_m)=0.81\ bits,\\&Deg(P_m)=0\ bits,\\&EI(P_m)=0.81\ bits\end{aligned}[/math] | [math]\begin{aligned}&Det(P_M)=1\ bits,\\&Deg(P_M)=0\ bits,\\&EI(P_M)=1\ bits\end{aligned}[/math] |

In this example, the microstate transition matrix [math]P_m[/math] is a 4*4 matrix, where the first three states transition to each other with a probability of 1/3. This leads to a transition matrix with relatively low determinism, and thus, the EI is not very high, with a value of 0.81. However, when we coarse-grain this matrix — merging the first three states into one macrostate a, and the last state becomes another macrostate b — all transitions between the original three microstates now become internal transitions between macrostate a and a. Thus, the transition probability matrix becomes [math]P_M[/math], with an EI of 1. In this case, the Causal Emergence can be measured as:

[math]\displaystyle{ CE=EI(P_M)-EI(P_m)=1-0.81=0.19\ bits }[/math]

That is, there is 0.19 bits of causal emergence.

Sometimes, Causal Emergence is calculated using the normalized EI, defined as:

[math]\displaystyle{ ce=Eff(P_M)-Eff(P_m)=1-0.405=0.595 }[/math]

Since normalized EI eliminates the effect of system size, the measure of causal emergence becomes larger.

Python Source Code for Calculating EI

Below is the Python source code for calculating EI for a Markov transition matrix. The input tpm is a Markov transition matrix that satisfies the row normalization condition. The returned values are ei_all, which is the EI, and other parameters such as effectiveness (eff), determinism (det), degeneracy (deg), Determinism Coefficient (det_c), and Degeneracy Coefficient (deg_c).

python:

def tpm_ei(tpm, log_base = 2):

'''

tpm: The input probability transition matrix can be a non square matrix

log_base:Base of a logarithm

ei_all:The value of EI

det:The deterministic part of EI

deg:The degeneracy part of EI

eff:Effectiveness

det_c:Coefficient of certainty

deg_c:Coefficient of degeneracy

'''

# marginal distribution of y given x ~ Unifrom Dist

puy = tpm.mean(axis=0)

m,n = tpm.shape

if m > n:

q = n

else:

q = m

# replace 0 to a positive number to avoid log error

eps = 0.5

tpm_e = np.where(tpm==0, eps, tpm)

puy_e = np.where(puy==0, eps, puy)

# calculate EI of specific x

ei_x = (np.log2(tpm_e / puy_e) / np.log2(log_base) * tpm).sum(axis=1)

# calculate det and deg

det = np.log2(n) + (tpm * np.log2(tpm_e)).sum(axis=1).mean(axis=0)

deg = np.log2(n) + (puy * np.log2(puy_e)).sum()

det = det / np.log2(log_base)

deg = deg / np.log2(log_base)

det_c = det / np.log2(q) * np.log2(log_base)

deg_c = deg / np.log2(q) * np.log2(log_base)

# calculate total EI

ei_all = ei_x.mean()

eff = ei_all / np.log2(q) * np.log2(log_base)

return ei_all,det,deg,eff,det_c,deg_c

tpm_ei(mi_states)

EI for Continuous Variables

In reality, most systems need to be considered in continuous spaces, so it is necessary to extend the concept of EI to continuous systems.

The core idea of this extension is to simplify the causal mechanism in continuous space into a deterministic function mapping [math]f(X)[/math], combined with a noise variable [math]\xi[/math]. In the cases listed below, [math]\xi\sim \mathcal{N}(0,\Sigma)[/math], meaning it follows a Gaussian distribution, allowing us to obtain an analytical expression for EI. For more general cases, there is no literature available for discussion yet.

Random Function Mapping

Initially, Erik Hoel considered this and proposed the framework of Causal Geometry[4]. This framework not only discusses the calculation of EI for random function mappings but also introduces the concepts of intervention noise and Causal Geometry. It defines the local form of EI and draws analogies and comparisons with Information Geometry. Below, we will discuss one-dimensional and multi-dimensional function mappings and the local form of EI.

One-Dimensional Function Mapping

First, let's consider the simplest case:

[math]\displaystyle{ y=f(x)+\xi, \xi\sim\mathcal{N}(0,\sigma^2) }[/math]

Here, [math]x,y\in \mathcal{R}[/math] are both one-dimensional real variables. According to the definition of Effective Information (EI), we need to intervene on variable x so that it follows a uniform distribution over its domain. If the domain of x is a fixed interval, such as [a,b], where a and b are real numbers, the probability density function of x is [math]1/(b-a)[/math]. However, if the domain of x extends over the entire real line, the interval becomes infinite, making the probability density function of x infinitesimally small.

To address this issue, we assume that the domain of x is not the entire real line but rather a sufficiently large region: [math][-L/2,L/2][/math], where L represents the size of the interval. In this case, the uniform distribution's density function over this region is [math]1/L[/math]. We hope that as [math]L\rightarrow +\infty[/math], the EI will converge to a finite value. However, EI is actually a quantity dependent on the size of the domain of x, so EI is a function of the parameter L, which can be seen from the definition of EI:

-

[math]\displaystyle{ \begin{aligned} EI&=I(y;x|do(x\sim U[-L/2,L/2]))\\ &=\int_{-\frac{L}{2}}^{\frac{L}{2}}\int_{f([-\frac{L}{2},\frac{L}{2}])}p(x)p(y|x)\ln\frac{p(y|x)}{p(y)}dydx\\ &=\int_{-\frac{L}{2}}^{\frac{L}{2}}\int_{f([-\frac{L}{2},\frac{L}{2}])}p(x)p(y|x)\ln p(y|x)dydx -\int_{-\frac{L}{2}}^{\frac{L}{2}}\int_{f([-\frac{L}{2},\frac{L}{2}])}p(x)p(y|x)\ln p(y)dydx \end{aligned} }[/math]

(4)

Here, [math]p(y|x)=\frac{1}{\sigma\sqrt{2\pi}}\exp\left(-\frac{(y-f(x))^2}{\sigma^2}\right)[/math] is the conditional probability density of y given x. Since [math]\xi[/math] follows a normal distribution with mean 0 and variance [math]\sigma^2[/math], [math]y=f(x)+\xi[/math] follows a normal distribution with mean [math]f(x)[/math] and variance [math]\sigma^2[/math].

The integration range of y is [math]f([-\frac{L}{L},\frac{L}{2}])[/math], i.e., the range of y is formed by mapping the domain [math][-\frac{L}{2},\frac{L}{2}][/math] of x through the function f.

[math]\displaystyle{ p(y)=\int_{-\frac{L}{2}}^{\frac{L}{2}}p(x_0)p(y|x_0)dx_0=\int_{-\frac{L}{2}}^{\frac{L}{2}}\frac{1}{L}\frac{1}{\sigma\sqrt{2\pi}}\exp\left(-\frac{(y-f(x_0))^2}{\sigma^2}\right)dx_0 }[/math]

The marginal probability density function of y, p(y), can be obtained by integrating the joint probability density function [math]p(x,y)=p(x)p(y|x)[/math] over x. To facilitate the subsequent discussion, we rename x as [math]x_0[/math] to distinguish it from other x variables in equation 4.

Since L is large, the interval [math][-\frac{L}{2},\frac{L}{2}][/math] is large, and hence, we assume that the interval [math]f([-\frac{L}{L},\frac{L}{2}])[/math] is also large. This allows us to approximate the integration limits as infinite, which gives the first term in equation 4:

[math]\displaystyle{ \begin{aligned} \int_{-\frac{L}{2}}^{\frac{L}{2}}\int_{f([-\frac{L}{2},\frac{L}{2}])}p(x)p(y|x)\ln p(y|x)dydx&\approx \int_{-\infty}^{\infty}\int_{-\infty}^{\infty}p(x)p(y|x)\ln p(y|x)dydx\\ &=\int_{-\infty}^{\infty}\int_{-\infty}^{\infty}\frac{1}{L}\frac{1}{\sigma\sqrt{2\pi}}\exp\left(-\frac{(y-f(x))^2}{\sigma^2}\right)\ln\left[\frac{1}{\sigma\sqrt{2\pi}}\exp\left(-\frac{(y-f(x))^2}{\sigma^2}\right)\right]dydx\\ &=\ln(\frac{1}{\sigma\cdot\sqrt{2\pi e}}) \end{aligned} }[/math]

Here, e is the base of the natural logarithm, and the last equality is derived using the Shannon entropy formula for a Gaussian distribution.

However, calculating the second term remains challenging, even with the assumption of infinite integration limits. Therefore, we perform a first-order Taylor expansion on the function [math]f(x_0)[/math]:

[math]\displaystyle{ f(x_0)\approx f(x)+f'(x)(x_0-x) }[/math]

Here, [math]x\in[-\frac{L}{2},\frac{L}{2}][/math] is any point within the domain of x.

Thus, p(y) can be approximated:

[math]\displaystyle{ p(y)=\int_{-\frac{L}{2}}^{\frac{L}{2}}\frac{1}{L}\frac{1}{\sigma\sqrt{2\pi}}\exp\left(-\frac{(y-f(x_0))^2}{\sigma^2}\right)dx_0\approx \int_{-\infty}^{\infty}\frac{1}{L}\frac{1}{\sigma\sqrt{2\pi}}\exp\left(-\frac{(y-f(x)-f'(x)(x_0-x))^2}{\sigma^2}\right)dx_0\approx \frac{1}{L}\cdot\frac{1}{f'(x)} }[/math]

It is important to note that in this step, we not only approximate [math]f(x_0)[/math]as a linear function but also introduce an assumption that the result of p(y) is independent of y and depends on [math]x[/math]. Since the second term of the EI calculation includes an integration over x, this approximation implies that p(y) is approximately different at different values of x.

Thus, the second term in equation 4 can be approximated as:

[math]\displaystyle{ \begin{aligned} \int_{-\frac{L}{2}}^{\frac{L}{2}}\int_{f([-\frac{L}{2},\frac{L}{2}])}p(x)p(y|x)\ln p(y)dydx\approx -\ln L - \frac{1}{L}\int_{-\frac{L}{2}}^{\frac{L}{2}}\ln f'(x)dx \end{aligned} }[/math]

The final EI can be approximately computed using the following equation:

[math]\displaystyle{ EI\approx \ln(\frac{L}{\sqrt{2\pi e}})+\frac{1}{2L}\int_{-\frac{L}{2}}^{\frac{L}{2}}\ln \left(\frac{f'(x)}{\sigma}\right)^2dx }[/math]

This kind of derivation was first seen in Hoel's 2013 paper [1] and was further discussed in detail in the "Neural Information Squeezer" paper [2].

High-Dimensional Case

We can extend the EI (Effective Information) calculation for one-dimensional variables to a more general n-dimensional scenario. Specifically:

-

[math]\displaystyle{ \mathbf{y}=f(\mathbf{x})+\xi, }[/math]

(5)

Let [math]\xi\sim \mathcal{N}(0,\Sigma)[/math], where [math]\displaystyle{ \Sigma }[/math] is the covariance matrix of the Gaussian noise [math]\displaystyle{ \xi }[/math]. First, we intervene on [math]\mathbf{x}[/math] such that it follows a uniform distribution over [math]\displaystyle{ [-L/2,L/2]^n\subset\mathcal{R}^n }[/math], where [math]\displaystyle{ [-L/2,L/2]^n }[/math] represents a hypercube in n-dimensional space. We assume [math]\displaystyle{ \mathbf{y}\in\mathcal{R}^m }[/math], where [math]\displaystyle{ n }[/math] and [math]\displaystyle{ m }[/math] are positive integers. In the presence of observational noise only, the EI can be generalized as follows:

-

[math]\displaystyle{ EI\approx \ln\left(\frac{L^n}{(2\pi e)^{m/2}}\right)+\frac{1}{L^n}\int_{-[\frac{L}{2},\frac{L}{2}]^n}\ln\left|\det\left(\frac{\partial_\mathbf{x} f(\mathbf{x})}{\Sigma^{1/2}}\right)\right| d\mathbf{x}, }[/math]

(6)

Where [math]\displaystyle{ |\cdot| }[/math] denotes the absolute value, and [math]\displaystyle{ \det }[/math] refers to the determinant.

Dimension-Averaged EI

In discrete-state systems, when comparing systems of different scales, we can compute either the direct EI difference or the normalized EI difference. Normalized EI is divided by [math]\log N[/math], where [math]N=\#(\mathcal{X})[/math] represents the number of elements in the discrete state space [math]\mathcal{X}[/math].

However, for continuous variables, if the original EI is used, an unreasonable result may occur. Firstly, as shown in equation 6, the EI formula contains a term [math]\ln L^n[/math]. Since L is a large positive number, EI will be significantly affected by L. Secondly, when calculating normalized EI (Eff), the issue arises that for continuous variables, the number of elements in the state space is infinite. A potential solution is to treat the volume of the space as the number N, and thus normalize it by [math]n \ln L[/math], meaning it is proportional to n and ln L:

[math]\displaystyle{ Eff=\frac{EI}{\ln L^n}\approx 1-\frac{m\ln\left(2\pi e\right)}{2n \ln L}+\frac{1}{n\ln L}\int_{[-\frac{L}{2},\frac{L}{2}]^n}\frac{1}{L^n}\cdot \ln\left|\det\left(\frac{\partial_\mathbf{x} f(\mathbf{x})}{\Sigma^{1/2}}\right)\right| d\mathbf{x}, }[/math]

However, this still contains the L term, affecting Eff significantly. Moreover, when comparing microscopic (n-dimensional) and macroscopic (m-dimensional, where m < n) Eff, the L term cannot be eliminated.

This suggests that the normalization issue in continuous variable systems cannot simply be transferred from the discrete case.

When the Neural Information Squeezer (NIS) framework was proposed [5], the authors invented another way to normalize EI for continuous variables using state space dimensions. This approach solves the EI comparison problem for continuous state variables, introducing the Dimension Averaged Effective Information (dEI):

[math]\displaystyle{ \mathcal{J}\equiv\frac{EI}{n} }[/math]

Where [math]n[/math] is the dimension of the state space. It can be proven that in the discrete state space, Dimension-averaged EI and Eff are actually equivalent. The EI for continuous variables will be further discussed below.

For n-dimensional iterative dynamical systems, [math]\mathbf{y}[/math] and [math]\mathbf{x}[/math] are variables of the same dimension, meaning [math]m=n[/math]. Substituting equation 6 into the dimension-averaged EI gives:

[math]\displaystyle{ \mathcal{J}=\frac{EI}{n}\approx \ln L - \frac{1}{2}\ln (2\pi e)+\frac{1}{n}\int_{[-\frac{L}{2},\frac{L}{2}]^n}\frac{1}{L^n}\cdot \ln\left|\det\left(\frac{\partial_\mathbf{x} f(\mathbf{x})}{\Sigma^{1/2}}\right)\right| d\mathbf{x} }[/math]

Although L has not disappeared, when calculating the dimension-averaged causal emergence—where the n-dimensional state variable [math]\mathbf{x}_t[/math] is projected onto an N-dimensional macroscopic state variable [math]\mathbf{X}_t[/math]—and the corresponding macrodynamics F and noise covariance [math]\Sigma'[/math], the difference between the macrodynamics and microdynamics' dimension-averaged EI is:

[math]\displaystyle{ \Delta \mathcal{J}\equiv \mathcal{J_F}-\mathcal{J_f}=\frac{EI_F}{N}-\frac{EI_f}{n}\approx \frac{1}{N}\int_{-[\frac{L}{2},\frac{L}{2}]^N}\frac{1}{L^N}\cdot \ln \left|\det\left(\frac{\partial_\mathbf{X} F(\mathbf{X})}{\Sigma'^{1/2}}\right)\right| dX - \frac{1}{n}\int_{[-\frac{L}{2},\frac{L}{2}]^n}\frac{1}{L^n}\cdot \ln\left|\det\left(\frac{\partial_\mathbf{x} f(\mathbf{x})}{\Sigma^{1/2}}\right)\right| d\mathbf{x} }[/math]

Note that the integral in the above equation can be written as the expectation under a uniform distribution, i.e. [math]\int_{[-\frac{L}{2},\frac{L}{2}]}\frac{1}{L^n}\cdot=\mathbb{E}_{\mathbf{x}\sim \mathcal{U}[-\frac{L}{2},\frac{L}{2}]^n}\cdot[/math], then the above equation is transformed into:[math]\displaystyle{ \Delta \mathcal{J}\approx \frac{1}{N}\mathbb{E}_{X\sim\mathcal{U}[-\frac{L}{2},\frac{L}{2}]^N}\ln \left|\det\left(\frac{\partial_\mathbf{X} F(\mathbf{X})}{\Sigma'^{1/2}}\right)\right| - \frac{1}{n}\mathbb{E}_{\mathbf{x}\sim\mathcal{U}[-\frac{L}{2},\frac{L}{2}]^n}\ln\left|\det\left(\frac{\partial_\mathbf{x} f(\mathbf{x})}{\Sigma^{1/2}}\right)\right| }[/math]

Here [math]\mathcal{U}([-\frac{L}{2},\frac{L}{2}]^n)[/math] represents the uniform distribution on the cube [math][-\frac{L}{2},\frac{L}{2}]^n[/math]. From this, it can be seen that although L is still implicitly included in the expectation, all terms in the equation that explicitly contain L are eliminated. In actual numerical calculations, the expected calculation can be manifested as taking the average of multiple samples on [math][-\frac{L}{2},\frac{L}{2}]^n[/math], and therefore is also independent of the size of L. This demonstrates the rationality of introducing dimension averaged EI.

Random Iterative Systems

We can extend the above results to linear iterative dynamical systems[7]. For an iterative system of the form:

[math]\displaystyle{ x_{t+1}=Ax_t+\varepsilon_t, }[/math]

Where [math]A\in\mathcal{R}^{n\times n}[/math] is a full-rank n*n matrix representing the dynamics of the linear iterative system, and [math]\varepsilon_t\sim\mathcal{N}(0,\Sigma)[/math] is n-dimensional Gaussian noise with mean zero and covariance matrix [math]\Sigma[/math]. Among them, the covariance matrix [math]\Sigma[/math] is also full rank.

It can be seen that this iterative system can be regarded as a special case of formula 5, where [math]y[/math] corresponds to [math]x_{t+1}[/math] here, and [math]f(x_t)[/math] is [math]A x_t[/math].

To define EI, let the intervention space size be [math]\displaystyle{ L }[/math]. For a single step mapping, we can obtain the average effective information of the dimensions.

[math]\displaystyle{ \mathcal{J}(A,\Sigma)\equiv \frac{EI(A,\Sigma)}{n}=\frac{1}{n}\ln\displaystyle\frac{|\det(A)|L^n}{(2\pi e)^\frac{n}{2}\displaystyle \det(\Sigma)^\frac{1}{2}}=\ln\displaystyle\frac{|\det(A)|^\frac{1}{n}L}{(2\pi e)^\frac{1}{2}\displaystyle \det(\Sigma)^\frac{1}{2n}}. }[/math]

The effective information of a random iterative system can be decomposed into two terms:

[math]\displaystyle{ \mathcal{J}=\mathcal{J}_1-\mathcal{J}_2 }[/math]

where

[math]\displaystyle{ \mathcal{J}_1\equiv-\left\lt H(p(x_{t+1}|x_t))\right\gt =-\ln\left[(2\pi e)^\frac{n}{2}\det(\Sigma)^\frac{1}{2}\right] }[/math]

is the term of Determinism, which describes the predictability of the system's future state based on the current state. The stronger the certainty, the smaller the randomness, and the easier it is to predict the future trend of the system.

and

[math]\displaystyle{ \mathcal{J}_2\equiv-H(\tilde{x}_{t+1}))=-\ln\left(|\det(A)|L^n\right) }[/math]

is the term of Degeneracy, which describes the ability to trace back the previous state from the current state. The weaker the degeneracy, the easier it is to infer the system's past evolutionary path. Among them, [math]\tilde{x}_{t+1}[/math] is a new [math]x_{t+1}[/math] variable obtained after intervention while keeping the causal mechanism unchanged.

The stronger the determinism and the weaker the degeneracy, the greater the effective information, leading to stronger causal effects.

After subtracting the macro effective information from the micro effective information, the dimension averaged causal emergence of the iterative system can be obtained as follows:

[math]\displaystyle{ \Delta\mathcal{J}=\ln\frac{|\det(WAW^{\dagger})|^{1/N}}{|\det(A)|^{1/n}}-\ln\frac{|\det(W\Sigma W^T)|^{\frac{1}{2N}}}{|\det(\Sigma)|^{\frac{1}{2n}}} }[/math]

Among them, [math]W[/math] is a coarse-grained matrix with an order of n * m (m is the dimension of the macroscopic state space), and its function is to map any microscopic state [math]x_t[/math] to a macroscopic state [math]y_t[/math]. [math]W^{\dagger}[/math] is the pseudo inverse operation of W. The first term in the equation is the emergence caused by determinism, abbreviated as Deterministic Emergence, and the second term is the emergence caused by degeneracy, abbreviated as Degenerative Emergence. For more detailed information, please refer to the causal emergence of stochastic iterative systems.

Feedforward Neural Networks

For modeling complex systems, neural networks are often used. Specifically, for feedforward neural networks, Zhang Jiang et al. derived the formula for calculating the EI of such networks. The input of the neural network is [math]\displaystyle{ x(x_1,...,x_n) }[/math], the output is [math]\displaystyle{ y(y_1,...,y_n) }[/math], and it satisfies [math]\displaystyle{ y=f(x) }[/math]. [math]\displaystyle{ f }[/math] is a deterministic mapping implemented by the neural network. However, according to formula 5, noise must be included in the mapping to reflect uncertainty.

Therefore, in neural networks, we assume that the computation from input to output is also uncertain, which also conforms to formula 5:

[math]\displaystyle{ y=f(x)+\xi, }[/math]

Here, [math]\xi\sim \mathcal{N}(0,\Sigma)[/math] is a Gaussian noise, and [math]\Sigma=\mathrm{diag}(\sigma_1,\sigma_2,\cdots,\sigma_n)[/math], where [math]\sigma_i[/math] is the Mean Square Error (MSE) of the i-th dimension. That is to say, we assume that the neural network mapping from x to y actually satisfies a conditional Gaussian distribution with a mean of [math]f(x)[/math] and a covariance of [math]\Sigma[/math], that is:

[math]\displaystyle{ Pr(y|x)\sim \mathcal{N}(f(x),\Sigma) }[/math]

Therefore, applying the general conclusion of high-dimensional mapping, we can provide a general formula for calculating the effective information of neural networks:

[math]\displaystyle{ \begin{gathered}EI(f)=I(do(x\sim \mathcal{U}([-\frac{L}{2},\frac{L}{2}]^n));y)\approx-\frac{n+n\ln(2\pi)+\sum_{i=1}^n\ln\sigma_i^2}2+n\ln(2L)+\mathbb{E}_{x\sim \mathcal{U}([-\frac{L}{2},\frac{L}{2}]^n)}(\ln|\det(\partial_{x}f(x)))|)\end{gathered} }[/math]

Among them, [math]\displaystyle{ \mathcal{U}\left(\left[-L/2, L/2\right]^n\right) }[/math] represents the uniform distribution of [math]\displaystyle{ n }[/math] dimensions over [math]\displaystyle{ \left[-L/2 ,L/2\right]^n }[/math], and [math]\displaystyle{ \det }[/math] represents the determinant. The average EI of dimensions is:

[math]\displaystyle{ \begin{gathered} \mathcal{J}\equiv \frac{EI(f)}{n}\approx -\frac{\ln(2\pi e)}{2}-\frac{\sum_{i=1}^n\ln\sigma_i}{n}+\ln(2L)+\frac{1}{n}\cdot\mathbb{E}_{x\sim \mathcal{U}([-\frac{L}{2},\frac{L}{2}]^n)}(\ln|\det(\partial_{x}f(x)))|) \end{gathered} }[/math]

If the micro dynamics can be fitted with a neural network f and the macro dynamics can be fitted with F, then causal emergence can be calculated by the following equation:[math]\displaystyle{ \begin{gathered} \mathcal{\Delta J}\equiv \frac{EI(F)}{m}-\frac{EI(f)}{n}\approx \frac{\sum_{i=1}^n\ln\sigma_i}{n}-\frac{\sum_{i=1}^m\ln\sigma'_i}{m}+\frac{1}{m}\cdot\mathbb{E}_{X\sim \mathcal{U}([-\frac{L}{2},\frac{L}{2}]^n)}(\ln|\det(\partial_{X}F(X)))|)-\frac{1}{n}\cdot\mathbb{E}_{x\sim \mathcal{U}([-\frac{L}{2},\frac{L}{2}]^m)}(\ln|\det(\partial_{x}f(x)))|) \end{gathered} }[/math]

Among them, [math]m[/math] is the macroscopic state dimension, and [math]\sigma'_i[/math] is the mean squared error (MSE) of the i-th macroscopic dimension, which can be calculated by the gradient of macroscopic state [math]X_i[/math] under the backpropagation algorithm.

Note that the above conclusions all require: [math]\displaystyle{ \partial_{x}f(x) }[/math] is not 0, but for all [math]\displaystyle{ x }[/math], when [math]\displaystyle{ \partial_{x}f(x) }[/math] is 0 everywhere, we obtain: [math]\displaystyle{ \begin{gathered}EI(f)\approx\end{gathered}0 }[/math]. For more general cases, it is necessary to consider the situation where the matrix is not of rank, please refer to the effective information of neural networks.

Source Code for EI in Continuous Systems

The numerical solution under reversible neural networks and the analytical solution for stochastic iterative systems both provide methods for calculating the Effective Information (EI). For general neural networks, they can be considered uncertain function mappings from input X to output Y: y=f(x)+ξ

[math]\displaystyle{ y=f(x)+\xi }[/math]

Where x has a dimension of input_size, y has a dimension of output_size, and [math]\xi[/math] is a Gaussian distribution with a covariance matrix: [math]\Sigma=\mathrm{diag}(\sigma_1,\sigma_2,\cdots,\sigma_n)[/math] Here, [math]\sigma_i[/math] represents the mean squared error (MSE) for the i-th dimension of the neural network. The inverse of this matrix is denoted as sigmas_matrix. The mapping function f is represented by func. The following code can be used to calculate the EI for this neural network. The basic idea of the algorithm is to use the Monte Carlo method to calculate the integral in Equation 6.

- Input Variables:

input_size: Dimension of the neural network's input, output_size: Dimension of the output, sigmas_matrix: Inverse of the covariance matrix of the output, assumed to follow a Gaussian distribution, func: Mapping function, L: Size of the intervention interval, num_samples: Number of samples for the Monte Carlo integration

- Output Variables:

d_EI: Dimension-averaged EI, eff: EI coefficient, EI: Effective Information, term1: Determinism, term2: Degeneracy, [math]\ln L[/math](-np.log(rho))

def approx_ei(input_size, output_size, sigmas_matrix, func, num_samples, L, easy=True, device=None):

rho=1/(2*L)**input_size #the density of X even distribution

#the first term of EI, the entropy of a gaussian distribution

#sigmas_matrix_np=sigmas_matrix.cpu() if use_cuda else sigmas_matrix

dett=1.0

if easy:

dd = torch.diag(sigmas_matrix)

dett = torch.log(dd).sum()

else:

#dett = np.log(np.linalg.det(sigmas_matrix_np))

dett = torch.log(torch.linalg.det(sigmas_matrix))

term1 = - (output_size + output_size * np.log(2*np.pi) + dett)/2

#sampling x on the space [-L,L]^n, n is the number of samples

xx=L*2*(torch.rand(num_samples, input_size, device=sigmas_matrix.device)-1/2)

dets = 0

logdets = 0

#iterate all samples of x

for i in range(xx.size()[0]):

jac=jacobian(func, xx[i,:]) #use pytorch's jacobian function to obtain jacobian matrix

det=torch.abs(torch.det(jac)) #calculate the determinate of the jacobian matrix

dets += det.item()

if det!=0:

logdets+=torch.log(det).item() #log jacobian

else:

#if det==0 then, it becomes a gaussian integration

logdet = -(output_size+output_size*np.log(2*np.pi)+dett)

logdets+=logdet.item()

int_jacobian = logdets / xx.size()[0] #take average of log jacobian

term2 = -np.log(rho) + int_jacobian # derive the 2nd term

if dets==0:

term2 = - term1

EI = max(term1 + term2, 0)

if torch.is_tensor(EI):

EI = EI.item()

eff = -EI / np.log(rho)

d_EI = EI/output_size

return d_EI, eff, EI, term1, term2, -np.log(rho)

For stochastic iterative systems, since an analytical solution is available, the calculations are simpler. They can either be computed directly or used as a comparison for the results obtained from the neural network. The input variables include the parameter matrix and the covariance matrix.

def EI_calculate_SIS(A,Sigma):

n=A.shape[0]

return math.log(abs(sl.det(A))/(np.sqrt(sl.det(Sigma))*math.pow(2*np.pi*np.e,n/2)))

EI and Other Related Topics

EI and Integrated Information Theory

The concept of Effective Information (EI) was first introduced in a paper [1] by Tononi et al. (2003) In this article, the authors defined the indicator of the Integrated Information Capability and established the Integrated Information Theory (IIT), which later evolved into an important branch of consciousness theory. The definition of the indicator of the Integrated Information Capability is based on effective information.

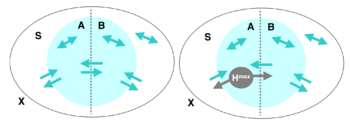

EI and Φ

The integrated information (or the degree of integration) [math]\displaystyle{ \Phi }[/math], can be defined as the minimum value of EI between any two bipartitions of a system. Suppose the system is 𝑋, and 𝑆 is a subset of 𝑋, that is partitioned into two parts, 𝐴 and 𝐵. There are causal interactions between 𝐴, 𝐵, and the rest of 𝑋.

In this scenario, we can measure the strength of these causal interactions. First, we calculate the EI from 𝐴 to 𝐵, i.e., we intervene on 𝐴 such that it follows the maximum entropy distribution, then measure the mutual information between 𝐴 and 𝐵:

[math]\displaystyle{ EI(A\rightarrow B) = I(A^{H^{max}}: B) }[/math]

Here, [math]\displaystyle{ A^{H^{max}} }[/math] denotes the maximum entropy distribution on 𝐴, which is implicitly a uniform distribution. This equation implies a causal mechanism from 𝐴 to 𝐵, denoted as [math]Pr(B|A)[/math], which remains unchanged during the intervention. If different states of 𝐴 cause significantly different changes in 𝐵, the EI will be high; conversely, if changes in 𝐴 have little effect on 𝐵, the EI will be low. This measure is directional, so EI from 𝐴 to 𝐵 may differ from EI from 𝐵 to 𝐴. We can sum these two directions to obtain the total EI for the partition:

[math]\displaystyle{ EI(A\leftrightarrow B) = EI(A\rightarrow B) + EI(B\rightarrow A) }[/math]

Traversing various partitions, if there exists a partition S that makes EI 0, it indicates that S can be seen as two causally independent parts, so the degree of integration should also be 0. From this particular example, we can see that we should focus on the one with the least significant information among all partitions. Of course, different partitions will result in different state spaces for A and B, so a normalization process should be performed by dividing by the smaller of the maximum entropy values of A and B. So, we can have a minimum information partition (MIB). The degree of integration [math]\displaystyle{ \Phi }[/math] is defined as follows:

[math]\displaystyle{ \Phi(S) = EI(MIB(S)) }[/math]

This defines the relationship between Integrated Information Capability and EI.

Distinction

It is important to note that unlike EI calculations for Markov chains, the EI here measures the causal connections between two parts of the system, rather than the strength of causal connections across two different time points in the same system.

EI and Other Causal Metrics

EI is a metric used to measure the strength of causal effects in a causal mechanism. Before the introduction of EI, several causal metrics had already been proposed. So, what is the relationship between EI and these causal measures? As Comolatti and Hoel pointed out in their 2022 paper, many causal metrics, including EI, can be expressed as combinations of two basic elements [13]. These two basic elements are called "Causal Primitives", which represent Sufficiency and Necessity and in causal relationships.

Definition of Causal Primitives

[math]\displaystyle{ \begin{aligned} \text{Sufficiency:}~~~&suff(e,c) = P(e|c) \\ \text{Necessity:}~~~&nec(e,c) = 1 - P(e|C \backslash c) \end{aligned} }[/math]

In this context, [math]\displaystyle{ c }[/math] and [math]\displaystyle{ e }[/math] represent the cause and effect events, respectively. [math]\displaystyle{ C }[/math] denotes the complete set of cause events, and [math]\displaystyle{ C \backslash c }[/math] is the complement of the cause event [math]\displaystyle{ c }[/math]. Events other than [math]\displaystyle{ c }[/math] can also be written as [math]\displaystyle{ \lnot c }[/math]. Sufficiency indicates the probability that the effect occurs when the cause occurs, while Necessity measures the probability that the effect does not occur when the cause is absent. When [math]\displaystyle{ nec = 1 }[/math], the cause or absence of the cause determines the occurrence of the effect.

The necessity of some causal indicators manifests in the following variant forms, which are also defined here:

[math]\displaystyle{ \begin{aligned} \text{Necessity}' \text{:}~~~nec'(e) = 1 - P(e|C) \end{aligned} }[/math]

According to the definition, when [math]\displaystyle{ c }[/math] is a very low probability event, [math]\displaystyle{ nec(e,c) \approx nec'(e) }[/math]. When [math]\displaystyle{ C }[/math] is a continuous state space, the two can be considered equivalent.

Note: The definition of [math]\displaystyle{ nec'(e) }[/math] is different from the definition of [math]\displaystyle{ nec^\dagger(e) = P(e|C) }[/math] in the literature[13]. The relationship between the two is [math]\displaystyle{ net'(e) = 1 - nec^\dagger(e) }[/math].

Causal Primitives, Determinism, and Degeneracy

As previously mentioned, EI (Effective Information) can be decomposed into two terms: determinism and degeneracy, which correspond to the causal primitives of sufficiency and necessity, respectively. [math]\displaystyle{ Determinism\quad Coef= \sum_{e \in E, c \in C}{P(e,c)\cdot \left[1 - \frac{\log{\frac{1}{suff(e,c)}}}{\log{N}}\right]} }[/math]

[math]\displaystyle{ Degeneracy\quad Coef= \sum_{e \in E}{P(e|C)\cdot\left[1 - \frac{\log{\frac{1}{1 - nec'(e)}}}{\log{N}}\right]} }[/math]

There is a monotonic relationship between sufficiency and determinism, as well as between necessity and degeneracy. The higher the sufficiency, the higher the determinism; the higher the necessity, the lower the degeneracy.

Causal Metrics in Terms of Causal Primitives

| Causal indicators | Definition of Probability | Definition of causal metalanguage | References |

|---|---|---|---|

| Effective Information EI | [math]\displaystyle{ \log_2{\frac{P(e|c)}{P(e|C)}} }[/math] | [math]\displaystyle{ \log_2\frac{suff}{1 - nec'} }[/math] | [2] |

| Galton Metric | [math]\displaystyle{ P(c)P(C\backslash c)(P(e|c) - P(e|C\backslash c)) }[/math] | [math]\displaystyle{ P(c)P(C\backslash c)(suff + nec - 1) }[/math] | [14] |

| Suppes Metric | [math]\displaystyle{ P(e|c) - P(e|C) }[/math] | [math]\displaystyle{ suff + nec' - 1 }[/math] | [15] |

| Eells Metric(Equivalent to Judea Pearl's necessary and sufficient probability PNS) | [math]\displaystyle{ P(e|c) - P(e|C\backslash c) }[/math] | [math]\displaystyle{ suff + nec - 1 }[/math] | [3][16] |

| Cheng Metric (Equivalent to Judea Pearl's sufficient probability PS) | [math]\displaystyle{ \frac{P(e|c) - P(e|C\backslash c)}{1 - P(e|C\backslash c)} }[/math] | [math]\displaystyle{ \frac{suff + nec - 1}{nec} }[/math] | [3][17] |

| Lewis Metric (Equivalent to Judea Pearl's necessary probability PN) | [math]\displaystyle{ \frac{P(e|c) - P(e|C\backslash c)}{P(e|c)} }[/math] | [math]\displaystyle{ \frac{suff + nec - 1}{suff} }[/math] | [3][18] |

EI and Dynamical Reversibility

As demonstrated in the example example of the Markov chain, EI increases when the probability transition matrix is a Permutation Matrix.