Differences between revisions of "Artificial intelligence"



Revision as of 22:28, 25 July 2021 (Sunday)

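Artificial intelligence (AI), also called machine intelligence in computer science, is intelligence demonstrated by machines built by humans, in contrast to the natural intelligence displayed by humans and other animals. Leading AI textbooks define the field as the study of intelligent agents: any machine that perceives its environment and takes actions that maximize its chance of successfully achieving its goals.[1][2][3][4] Colloquially, "AI" describes machines that mimic the "cognitive" functions that humans associate with the human mind, such as "learning" and "problem solving".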

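AI applications include advanced web search engines, recommendation systems (used by YouTube, Amazon and Netflix), understanding human speech (such as Siri or Alexa), self-driving cars (such as Tesla), and competing at the highest level in strategic game systems (such as chess and Go).[5] As machines become increasingly capable, tasks considered to require "intelligence" are often removed from the definition of AI, a phenomenon known as the AI effect.[6] For instance, optical character recognition is frequently excluded from AI,[7] having become a routine technology.[8]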

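AI was founded as an academic discipline in 1956, and has since experienced several waves of optimism,[9][10] each followed by setbacks and a loss of funding (known as an "AI winter"),[11][12] and then by new approaches, new results and renewed investment.[10][13] Over its lifetime, AI research has tried and discarded many different approaches, including simulating the brain, modeling human problem solving, formal logic, large databases of knowledge and imitating animal behavior. In the first decades of the 21st century, highly mathematical statistical machine learning has dominated the field, and this technique has proved highly successful, helping to solve many challenging problems throughout industry and academia.[14]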

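The various sub-fields of AI research are centered around particular goals and the use of particular tools. The traditional goals of AI research include reasoning, knowledge representation, planning, learning, natural language processing, perception and the ability to move and manipulate objects.[15] General intelligence (the ability to solve an arbitrary problem) is among the field's long-term goals.[16] To solve these problems, AI researchers use versions of search and mathematical optimization, formal logic, artificial neural networks, and methods based on statistics, probability and economics. AI also draws upon computer science, psychology, linguistics, philosophy, and many other fields.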

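The field was founded on the view that human intelligence "can be so precisely described that a machine can be made to simulate it". This view raises philosophical arguments about the nature of the mind and the ethics of creating artificial beings endowed with human-like intelligence, issues that myth, fiction and philosophy have explored since antiquity.[17] Some people consider that the development of AI will not threaten human existence;[18][19] others believe that AI, unlike previous technological revolutions, will create a risk of mass unemployment.[20]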

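History

Thought-capable artificial beings appeared as storytelling devices in antiquity,[21] and have been common in fiction, as in Mary Shelley's Frankenstein or Karel Čapek's R.U.R. (Rossum's Universal Robots).[22] These characters and their fates raised many of the same issues now discussed in the ethics of artificial intelligence.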

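The study of mechanical or "formal" reasoning began with philosophers and mathematicians in antiquity. The study of mathematical logic led directly to Alan Turing's theory of computation, which suggested that a machine, by shuffling symbols as simple as "0" and "1", could simulate any conceivable act of mathematical deduction. This insight is known as the Church–Turing thesis.[23] Turing proposed that if a human cannot distinguish the responses of a machine from those of a human, the machine can be considered "intelligent" (Turing, Alan (1948), "Machine Intelligence", in Copeland, B. Jack (ed.), The Essential Turing: The ideas that gave birth to the computer age, Oxford: Oxford University Press, p. 412, ISBN 978-0-19-825080-7). The first work now generally recognized as AI was McCulloch and Pitts' 1943 formal design for Turing-complete "artificial neurons".[24]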

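AI research was born at a workshop held at Dartmouth College in 1956,[25] where the term "artificial intelligence" was coined by John McCarthy to distinguish the field from cybernetics and to escape the influence of the cyberneticist Norbert Wiener.[26] Attendees Allen Newell (CMU), Herbert Simon (CMU), John McCarthy (MIT), Marvin Minsky (MIT) and Arthur Samuel (IBM) became the founders and leaders of AI research. They and their students produced programs that the press described as "astonishing": computers were learning strategies (and by 1959 were reportedly performing above the level of an average human), solving word problems in algebra, proving logical theorems and speaking English. By the middle of the 1960s, research was heavily funded by the US Defense Advanced Research Projects Agency, and laboratories had been established around the world. AI's founders were optimistic about the future: Herbert Simon predicted, "machines will be capable, within twenty years, of doing any work a man can do". Marvin Minsky agreed, writing, "within a generation ... the problem of creating 'artificial intelligence' will substantially be solved".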

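They failed to recognize the difficulty of some of the remaining tasks. Progress slowed, and in 1974, in response to the criticism of Sir James Lighthill and pressure from the US Congress to fund more productive projects, both the US and British governments cut off exploratory research in AI. The next few years would later be called an "AI winter",[11] a period when obtaining funding for AI research was difficult.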

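In the early 1980s, AI research was revived by the commercial success of expert systems,[27] a form of AI program that simulates the knowledge and analytical skills of human experts. By 1985, the market for AI had reached over a billion dollars. At the same time, Japan's fifth generation computer project prompted the US and British governments to restore funding for academic research.[10] However, with the collapse of the Lisp machine market in 1987, AI once again fell into disrepute, and a second, longer-lasting hiatus began.[12]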

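AI gradually restored its reputation in the late 1990s and early 21st century by finding specific solutions to specific problems, such as logistics, data mining or medical diagnosis. By 2000, AI solutions were widely used behind the scenes.[14] The narrow focus allowed researchers to produce verifiable results, develop more mathematical methods, and collaborate with other fields (such as statistics, economics and mathematics).[28]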

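Faster computers, algorithmic improvements, and access to large amounts of data enabled advances in machine learning and perception; data-hungry deep learning methods started to dominate accuracy benchmarks around 2012.[29] According to Bloomberg's Jack Clark, 2015 was a landmark year for artificial intelligence, with the number of software projects that use AI within Google increasing from "sporadic usage" in 2012 to more than 2,700 projects. Clark also presents factual data indicating that the improvement of AI since 2012 is supported by lower error rates in image processing tasks.[30] He attributes this to an increase in affordable neural networks, due to a rise in cloud computing infrastructure and to an increase in research tools and datasets.[13] In a 2017 survey, one in five companies reported that they had "incorporated AI in some offerings or processes".[31][32]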

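Goals

The general problem of simulating (or creating) intelligence has been broken down into sub-problems. These consist of particular traits or capabilities that researchers expect an intelligent system to display. The traits described below have received the most attention.[15]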

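Reasoning, problem solving

Early researchers developed algorithms that imitated the step-by-step reasoning that humans use when they solve puzzles or make logical deductions.[33] By the late 1980s and 1990s, AI research had developed methods for dealing with uncertain or incomplete information, employing concepts from probability and economics.[34]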

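These algorithms proved insufficient for solving large reasoning problems because they experienced a "combinatorial explosion": they became exponentially slower as the problems grew larger.[35] In fact, even humans rarely use the step-by-step deduction that early AI research modeled. They solve most of their problems using fast, intuitive judgments.[36]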
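The growth behind a "combinatorial explosion" can be made concrete with a minimal sketch. It assumes a brute-force reasoner that must examine every true/false assignment of its variables; the function name `count_assignments` is hypothetical and chosen only for illustration.

```python
from itertools import product


def count_assignments(num_variables: int) -> int:
    """Count the truth assignments a brute-force search would have to visit."""
    # Each variable is independently False or True, so the candidates are
    # the Cartesian product of (False, True) taken num_variables times.
    return sum(1 for _ in product((False, True), repeat=num_variables))


if __name__ == "__main__":
    # Each extra variable doubles the number of candidates to check.
    for n in (1, 2, 4, 8, 16):
        print(n, count_assignments(n))
```

Adding one variable doubles the work, which is why exhaustive step-by-step reasoning stops being practical long before a problem looks large.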

知识表示

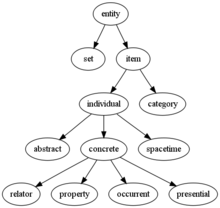

传统的AI研究的重点是知识表示 Knowledge Representation[37]和知识工程 Knowledge Engineering[38]。有些“专家系统”试图将某一小领域的专家所拥有的知识收集起来。此外,一些项目试图将普通人的“常识”收集到一个包含对世界的认知的知识的大数据库中。这些常识包括:对象、属性、类别和对象之间的关系;[39]情景、事件、状态和时间;[40]原因和结果;[41]关于知识的知识(我们知道别人知道什么);和许多其他研究较少的领域。“存在的东西”的表示是本体,本体是被正式描述的对象、关系、概念和属性的集合,这样的形式可以让软件智能体能够理解它。本体的语义描述了逻辑概念、角色和个体,通常在Web本体语言中以类、属性和个体的形式实现。[42]最常见的本体称为上本体 Upper Ontology,它试图为所有其他知识提供一个基础,[43]它充当涵盖有关特定知识领域(兴趣领域或关注领域)的特定知识的领域本体之间的中介。这种形式化的知识表示可以用于基于内容的索引和检索,[44]场景解释,[45]临床决策,[46]知识发现(从大型数据库中挖掘“有趣的”和可操作的推论)[47]等领域。[48]

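Among the most difficult problems in knowledge representation are:

Default reasoning and the qualification problem: Many of the things people know take the form of working assumptions. When birds are mentioned, people typically picture a fist-sized animal that sings and flies, yet not all birds have these properties. John McCarthy identified this in 1969[49][50] as the qualification problem: for any commonsense rule that AI researchers care to represent, there tend to be a huge number of exceptions. Almost nothing is simply true or false in the way that abstract logic requires. AI research has explored a number of solutions to this problem.[51][52][53]

Breadth of commonsense knowledge: The number of atomic facts that the average person knows is very large. Research projects that attempt to build a complete knowledge base of commonsense knowledge (for example, Cyc) require enormous amounts of laborious ontological engineering: they must be built, by hand, one complicated concept at a time.[54][55][56][57]

Sub-symbolic form of some commonsense knowledge: Much of what people know cannot be described as "facts" or "statements" that they could express verbally. For example, a chess master will avoid a particular position because the move "feels too aggressive",[58] and an art critic can take one look at a statue and realize that it is a fake.[59] These are non-conscious and sub-symbolic intuitions in the human brain. Knowledge like this informs and provides a context for symbolic, conscious knowledge.[60][61] As with the related problem of sub-symbolic reasoning, it is hoped that situated AI, computational intelligence, or statistical AI will provide ways to represent this kind of knowledge.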
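The default reasoning problem from the list above can be illustrated with a minimal sketch. It assumes a toy knowledge base in which a default rule ("birds fly") is overridden by explicitly listed exceptions; the tables `DEFAULTS`, `EXCEPTIONS` and `KINDS` and the function `infer` are hypothetical and do not correspond to any particular system cited in the article.

```python
# Hypothetical toy knowledge base: default properties per category,
# plus species-level exceptions that override the defaults.
DEFAULTS = {"bird": {"can_fly": True}}
EXCEPTIONS = {"penguin": {"can_fly": False}, "ostrich": {"can_fly": False}}
KINDS = {"penguin": "bird", "ostrich": "bird", "sparrow": "bird"}


def infer(species: str, prop: str):
    """Return the most specific known value of prop, or None if unknown."""
    # A listed exception always wins over the category default.
    if prop in EXCEPTIONS.get(species, {}):
        return EXCEPTIONS[species][prop]
    # Otherwise fall back to the default of the species' category, if any.
    kind = KINDS.get(species)
    return DEFAULTS.get(kind, {}).get(prop)


if __name__ == "__main__":
    print(infer("sparrow", "can_fly"))   # default applies
    print(infer("penguin", "can_fly"))   # exception overrides the default
```

The sketch also shows why the qualification problem is hard: every new exception (an injured sparrow, a bird in a cage) needs another hand-written entry, and the list never ends.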

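Planning

Intelligent agents must be able to set goals and achieve them.[62][63][64][65][66] They need a way to envision the future, that is, a representation of the state of the world, and must be able to predict how their actions will change it, so that they can choose the option that maximizes utility (or "value").[67]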
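The choice rule described above can be sketched as expected-utility maximization. This is a minimal illustration, not a planner: the world model `MODEL`, the utility table `UTILITY`, and the function names are all hypothetical, and the probabilities are invented for the example.

```python
from typing import Callable, Dict, Iterable, List, Tuple

Outcome = Tuple[float, str]  # (probability, resulting state)


def expected_utility(outcomes: Iterable[Outcome],
                     utility: Callable[[str], float]) -> float:
    """Probability-weighted average utility of an action's possible outcomes."""
    return sum(p * utility(state) for p, state in outcomes)


def choose_action(model: Dict[str, List[Outcome]],
                  utility: Callable[[str], float]) -> str:
    """Pick the action whose predicted outcomes have the highest expected utility."""
    return max(model, key=lambda action: expected_utility(model[action], utility))


# Hypothetical world model: what each action is predicted to lead to.
MODEL = {
    "take_umbrella": [(1.0, "dry_but_encumbered")],
    "leave_umbrella": [(0.7, "dry_and_free"), (0.3, "wet")],
}
# Hypothetical utilities ("values") of the resulting states.
UTILITY = {"dry_but_encumbered": 8.0, "dry_and_free": 10.0, "wet": 0.0}

if __name__ == "__main__":
    print(choose_action(MODEL, UTILITY.get))
```

Here the model plays the role of the agent's representation of the world, and the utility table encodes its goals; changing either one changes the chosen action.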

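In classical planning problems, the agent can assume that it is the only system acting in the world, allowing the agent to be certain of the consequences of its actions.[68] However, if the agent is not the only actor, it must be able to reason under uncertainty. This calls for an agent that can not only assess its environment and make predictions but also evaluate its predictions and adapt based on its assessment.

Multi-agent planning uses the cooperation and competition of many agents to achieve a given goal. Emergent behavior such as this is used by evolutionary algorithms and swarm intelligence.

Learning

Machine learning (ML), a fundamental concept of AI research since the field's inception, is the study of computer algorithms that improve automatically through experience.[69][70]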

Unsupervised learning is the ability to find patterns in a stream of input, without requiring a human to label the inputs first. Supervised learning includes both classification and numerical regression, which requires a human to label the input data first. Classification is used to determine what category something belongs in, and occurs after a program sees a number of examples of things from several categories. Regression is the attempt to produce a function that describes the relationship between inputs and outputs and predicts how the outputs should change as the inputs change.[70] Both classifiers and regression learners can be viewed as "function approximators" trying to learn an unknown (possibly implicit) function; for example, a spam classifier can be viewed as learning a function that maps from the text of an email to one of two categories, "spam" or "not spam". Computational learning theory can assess learners by computational complexity, by sample complexity (how much data is required), or by other notions of optimization.[71] In reinforcement learning[72] the agent is rewarded for good responses and punished for bad ones. The agent uses this sequence of rewards and punishments to form a strategy for operating in its problem space.
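Regression as "function approximation" can be shown with a minimal sketch. It assumes the simplest case, a straight line fitted to labeled (input, output) pairs by ordinary least squares; the function names `fit_line` and `predict` are hypothetical.

```python
from typing import Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Fit y = slope * x + intercept to labeled examples by least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of the inputs, and how inputs and outputs vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model: Tuple[float, float], x: float) -> float:
    """Apply the learned function to a new, unlabeled input."""
    slope, intercept = model
    return slope * x + intercept


if __name__ == "__main__":
    model = fit_line([0, 1, 2, 3], [1, 3, 5, 7])  # labels supplied by a human
    print(model, predict(model, 4))
```

The labeled pairs are the supervision; the learned slope and intercept are the approximated function, which can then be queried for inputs that were never labeled.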

Natural language processing

Natural language processing[74] (NLP) gives machines the ability to read and understand human language. A sufficiently powerful natural language processing system would enable natural-language user interfaces and the acquisition of knowledge directly from human-written sources, such as newswire texts. Some straightforward applications of natural language processing include information retrieval, text mining and question answering.

AI is heavily used in robotics.[78] Advanced robotic arms and other industrial robots, widely used in modern factories, can learn from experience how to move efficiently despite the presence of friction and gear slippage. A modern mobile robot,[79] when given a small, static, and visible environment, can easily determine its location and map its environment; dynamic environments, such as the interior of a patient's breathing body during endoscopy, pose a greater challenge. Motion planning is the process of breaking down a movement task into "primitives" such as individual joint movements. Such movement often involves compliant motion, a process where movement requires maintaining physical contact with an object. Moravec's paradox[80][81] generalizes that low-level sensorimotor skills that humans take for granted are, counterintuitively, difficult to program into a robot; the paradox is named after Hans Moravec, who stated in 1988 that "it is comparatively easy to make computers exhibit adult level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility".[82][83] This is attributed to the fact that physical dexterity has been a direct target of natural selection for millions of years, whereas skill at checkers is a luxury that survival-oriented natural selection has not favored.[77]

Social intelligence

Moravec's paradox can be extended to many forms of social intelligence.[84][85] Distributed multi-agent coordination of autonomous vehicles remains a difficult problem.[86] Affective computing is an interdisciplinary umbrella that comprises systems which recognize, interpret, process, or simulate human affects. Moderate successes related to affective computing include textual sentiment analysis and, more recently, multimodal affect analysis (see multimodal sentiment analysis), wherein AI classifies the affects displayed by a videotaped subject.[87]

In the long run, social skills and an understanding of human emotion and game theory would be valuable to a social agent. Being able to predict the actions of others by understanding their motives and emotional states would allow an agent to make better decisions. Some computer systems mimic human emotion and expressions to appear more sensitive to the emotional dynamics of human interaction, or to otherwise facilitate human–computer interaction.[92] Similarly, some virtual assistants are programmed to speak conversationally or even to banter humorously; this tends to give naïve users an unrealistic conception of how intelligent existing computer agents actually are.[93]

General intelligence

Historically, projects such as the Cyc knowledge base (1984–) and the massive Japanese Fifth Generation Computer Systems initiative (1982–1992) attempted to cover the breadth of human cognition. These early projects failed to escape the limitations of non-quantitative symbolic logic models and, in retrospect, greatly underestimated the difficulty of cross-domain AI. Nowadays, the vast majority of AI researchers work instead on tractable "narrow AI" applications (such as medical diagnosis or automobile navigation).[95] Many researchers predict that such "narrow AI" work in different individual domains will eventually be incorporated into a machine with artificial general intelligence (AGI), combining most of the narrow skills mentioned in this article and at some point even exceeding human ability in most or all these areas.[16][96] Many advances have general, cross-domain significance. One high-profile example is that DeepMind in the 2010s developed a "generalized artificial intelligence" that could learn many diverse Atari games on its own, and later developed a variant of the system which succeeds at sequential learning.[97][98][99] Besides transfer learning,[100] hypothetical AGI breakthroughs could include the development of reflective architectures that can engage in decision-theoretic metareasoning, and figuring out how to "slurp up" a comprehensive knowledge base from the entire unstructured Web. Some argue that some kind of (currently undiscovered) conceptually straightforward, but mathematically difficult, "Master Algorithm" could lead to AGI. Finally, a few "emergent" approaches look to simulating human intelligence extremely closely, and believe that anthropomorphic features like an artificial brain or simulated child development may someday reach a critical point where general intelligence emerges.[101][102]

Many of the problems in this article may also require general intelligence, if machines are to solve the problems as well as people do. For example, even specific straightforward tasks, like machine translation, require that a machine read and write in both languages (NLP), follow the author's argument (reason), know what is being talked about (knowledge), and faithfully reproduce the author's original intent (social intelligence). A problem like machine translation is considered "AI-complete", because all of these problems need to be solved simultaneously in order to reach human-level machine performance.

Approaches

There is no established unifying theory or paradigm that guides AI research. Researchers disagree about many issues.[108] A few of the most long-standing questions that have remained unanswered are these: should artificial intelligence simulate natural intelligence by studying psychology or neurobiology? Or is human biology as irrelevant to AI research as bird biology is to aeronautical engineering?[109]

Can intelligent behavior be described using simple, elegant principles (such as logic or optimization)? Or does it necessarily require solving a large number of completely unrelated problems?[111]

Cybernetics and brain simulation

In the 1940s and 1950s, a number of researchers explored the connection between neurobiology, information theory, and cybernetics. Some of them built machines that used electronic networks to exhibit rudimentary intelligence, such as W. Grey Walter's turtles and the Johns Hopkins Beast. Many of these researchers gathered for meetings of the Teleological Society at Princeton University and the Ratio Club in England.[112] By 1960, this approach was largely abandoned, although elements of it would be revived in the 1980s.

Symbolic

When access to digital computers became possible in the mid-1950s, AI research began to explore the possibility that human intelligence could be reduced to symbol manipulation. The research was centered in three institutions: Carnegie Mellon University, Stanford and MIT, and as described below, each one developed its own style of research. John Haugeland named these symbolic approaches to AI "good old fashioned AI" or "GOFAI".[113] During the 1960s, symbolic approaches had achieved great success at simulating high-level "thinking" in small demonstration programs. Approaches based on cybernetics or artificial neural networks were abandoned or pushed into the background.[114]

Researchers in the 1960s and the 1970s were convinced that symbolic approaches would eventually succeed in creating a machine with artificial general intelligence, and considered this the goal of their field.

Cognitive simulation

Economist Herbert Simon and Allen Newell studied human problem-solving skills and attempted to formalize them, and their work laid the foundations of the field of artificial intelligence, as well as cognitive science, operations research and management science. Their research team used the results of psychological experiments to develop programs that simulated the techniques that people used to solve problems. This tradition, centered at Carnegie Mellon University, would eventually culminate in the development of the Soar architecture in the middle 1980s.[116][117]

Logic-based

Unlike Simon and Newell, John McCarthy felt that machines did not need to simulate human thought, but should instead try to find the essence of abstract reasoning and problem-solving, regardless of whether people used the same algorithms.[109] His laboratory at Stanford (SAIL) focused on using formal logic to solve a wide variety of problems, including knowledge representation, planning and learning.[118] Logic was also the focus of the work at the University of Edinburgh and elsewhere in Europe which led to the development of the programming language Prolog and the science of logic programming.[119]

Anti-logic or "scruffy"

Researchers at MIT (such as Marvin Minsky and Seymour Papert)[120] found that solving difficult problems in vision and natural language processing required ad hoc solutions; they argued that there was no simple and general principle (like logic) that would capture all the aspects of intelligent behavior. Roger Schank described their "anti-logic" approaches as "scruffy" (as opposed to the "neat" paradigms at CMU and Stanford).[111] Commonsense knowledge bases (such as Doug Lenat's Cyc) are an example of "scruffy" AI, since they must be built by hand, one complicated concept at a time.[121]

Knowledge-based

When computers with large memories became available around 1970, researchers from all three traditions began to build knowledge into AI applications.[122] This "knowledge revolution" led to the development and deployment of expert systems (introduced by Edward Feigenbaum), the first truly successful form of AI software.[27] A key component of the system architecture for all expert systems is the knowledge base, which stores facts and rules.[123] The knowledge revolution was also driven by the realization that enormous amounts of knowledge would be required by many simple AI applications.

Sub-symbolic

By the 1980s, progress in symbolic AI seemed to stall and many believed that symbolic systems would never be able to imitate all the processes of human cognition, especially perception, robotics, learning and pattern recognition. A number of researchers began to look into "sub-symbolic" approaches to specific AI problems.[125] Sub-symbolic methods manage to approach intelligence without specific representations of knowledge.

Embodied intelligence

Embodied intelligence includes embodied, situated, behavior-based, and nouvelle AI. Researchers from the related field of robotics, such as Rodney Brooks, rejected symbolic AI and focused on the basic engineering problems that would allow robots to move and survive.[126] Their work revived the non-symbolic point of view of the early cybernetics researchers of the 1950s and reintroduced the use of control theory in AI. This coincided with the development of the embodied mind thesis in the related field of cognitive science: the idea that aspects of the body (such as movement, perception and visualization) are required for higher intelligence.

Within developmental robotics, developmental learning approaches are elaborated upon to allow robots to accumulate repertoires of novel skills through autonomous self-exploration, social interaction with human teachers, and the use of guidance mechanisms (active learning, maturation, motor synergies, etc.).

Computational intelligence and soft computing

Interest in neural networks and "connectionism" was revived by David Rumelhart and others in the middle of the 1980s.[127] Artificial neural networks are an example of soft computing: they are solutions to problems which cannot be solved with complete logical certainty, and where an approximate solution is often sufficient. Other soft computing approaches to AI include fuzzy systems, Grey system theory, evolutionary computation and many statistical tools. The application of soft computing to AI is studied collectively by the emerging discipline of computational intelligence.[128]

Statistical learning

Much of traditional GOFAI got bogged down on ad hoc patches to symbolic computation that worked on their own toy models but failed to generalize to real-world results. However, around the 1990s, AI researchers adopted sophisticated mathematical tools, such as hidden Markov models (HMM), information theory, and normative Bayesian decision theory to compare or to unify competing architectures. The shared mathematical language permitted a high level of collaboration with more established fields (like mathematics, economics or operations research). Compared with GOFAI, new "statistical learning" techniques such as HMM and neural networks were gaining higher levels of accuracy in many practical domains such as data mining, without necessarily acquiring a semantic understanding of the datasets. The increased successes with real-world data led to increasing emphasis on comparing different approaches against shared test data to see which approach performed best in a broader context than that provided by idiosyncratic toy models; AI research was becoming more scientific. Nowadays results of experiments are often rigorously measurable, and are sometimes (with difficulty) reproducible.[28][129] Different statistical learning techniques have different limitations; for example, basic HMM cannot model the infinite possible combinations of natural language. Critics note that the shift from GOFAI to statistical learning is often also a shift away from explainable AI. In AGI research, some scholars caution against over-reliance on statistical learning, and argue that continuing research into GOFAI will still be necessary to attain general intelligence.

Integrating the approaches

- Intelligent agent paradigm: An intelligent agent is a system that perceives its environment and takes actions that maximize its chances of success. The simplest intelligent agents are programs that solve specific problems. More complicated agents include human beings and organizations of human beings (such as firms). The paradigm allows researchers to directly compare or even combine different approaches to isolated problems, by asking which agent is best at maximizing a given "goal function". An agent that solves a specific problem can use any approach that works: some agents are symbolic and logical, some are sub-symbolic artificial neural networks and others may use new approaches. The paradigm also gives researchers a common language to communicate with other fields (such as decision theory and economics) that also use concepts of abstract agents. Building a complete agent requires researchers to address realistic problems of integration; for example, because sensory systems give uncertain information about the environment, planning systems must be able to function in the presence of uncertainty. The intelligent agent paradigm became widely accepted during the 1990s.[131]

- Agent architectures and cognitive architectures: Researchers have designed systems to build intelligent systems out of interacting intelligent agents in a multi-agent system.[132] A hierarchical control system provides a bridge between sub-symbolic AI at its lowest, reactive levels and traditional symbolic AI at its highest levels, where relaxed time constraints permit planning and world modeling.[133] Some cognitive architectures are custom-built to solve a narrow problem; others, such as Soar, are designed to mimic human cognition and to provide insight into general intelligence. Modern extensions of Soar are hybrid intelligent systems that include both symbolic and sub-symbolic components.[134][135][136]
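The idea of comparing agents by "which agent is best at maximizing a given goal function" can be sketched in a few lines. This is a toy illustration under invented assumptions: the one-dimensional world, the constant `TARGET`, and the two agents are hypothetical, and an agent is modeled simply as a function from a percept (its position) to an action (a step).

```python
from typing import Callable, Dict

TARGET = 5  # hypothetical goal position on a number line

Agent = Callable[[int], int]  # percept (position) -> action (step of -1, 0 or +1)


def greedy_agent(position: int) -> int:
    """Step toward the target, or stay put once it is reached."""
    if position < TARGET:
        return 1
    if position > TARGET:
        return -1
    return 0


def lazy_agent(position: int) -> int:
    """Never moves, whatever it perceives."""
    return 0


def run(agent: Agent, start: int, steps: int) -> int:
    """Perceive-act loop: feed the current position to the agent, apply its action."""
    position = start
    for _ in range(steps):
        position += agent(position)
    return position


def goal_function(position: int) -> int:
    """Higher is better: zero at the target, negative elsewhere."""
    return -abs(TARGET - position)


def best_agent(agents: Dict[str, Agent], start: int, steps: int) -> str:
    """Name of the agent whose final state scores highest on the goal function."""
    return max(agents, key=lambda name: goal_function(run(agents[name], start, steps)))


if __name__ == "__main__":
    print(best_agent({"greedy": greedy_agent, "lazy": lazy_agent}, start=0, steps=10))
```

The comparison never looks inside the agents, which is the point of the paradigm: a symbolic planner and a neural network could be scored against each other in exactly the same way.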

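Tools

AI has developed many tools to solve the most difficult problems in computer science. A few of the most commonly used of these methods are discussed below.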

Search and optimization

Many problems in AI can be solved in theory by intelligently searching through many possible solutions:[140] Reasoning can be reduced to performing a search. For example, logical proof can be viewed as searching for a path that leads from premises to conclusions, where each step is the application of an inference rule.[141] Planning algorithms search through trees of goals and subgoals, attempting to find a path to a target goal, a process called means-ends analysis.[142] Robotics algorithms for moving limbs and grasping objects use local searches in configuration space.[79] Many learning algorithms use search algorithms based on optimization.
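The view of proof as path-finding can be sketched with a plain breadth-first search. This is a minimal illustration under simplifying assumptions: statements are single letters, each hypothetical "inference rule" in `RULES` derives new statements from one statement, and `breadth_first_path` is an illustrative name rather than any library's API.

```python
from collections import deque
from typing import Callable, Dict, Hashable, Iterable, List, Optional


def breadth_first_path(start: Hashable, goal: Hashable,
                       neighbors: Callable[[Hashable], Iterable[Hashable]]
                       ) -> Optional[List[Hashable]]:
    """Return a shortest path from start to goal, or None if none exists."""
    frontier = deque([start])
    parent = {start: None}  # also serves as the set of visited states
    while frontier:
        state = frontier.popleft()
        if state == goal:
            # Walk the parent links back to the start to recover the path.
            path = []
            while state is not None:
                path.append(state)
                state = parent[state]
            return path[::-1]
        for nxt in neighbors(state):
            if nxt not in parent:
                parent[nxt] = state
                frontier.append(nxt)
    return None


# Hypothetical inference rules: from statement A we may derive B or C, and so on.
RULES: Dict[str, List[str]] = {"A": ["B", "C"], "B": ["D"], "C": ["D"], "D": ["E"]}


def apply_rules(statement: str) -> List[str]:
    """Statements derivable in one inference step."""
    return RULES.get(statement, [])


if __name__ == "__main__":
    print(breadth_first_path("A", "E", apply_rules))  # premise A, conclusion E
```

Each edge followed is one application of a rule, so the returned path reads as a proof from the premise to the conclusion.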

Simple exhaustive searches[143] are rarely sufficient for most real-world problems: the search space (the number of places to search) quickly grows to astronomical numbers. The result is a search that is too slow or never completes. The solution, for many problems, is to use "heuristics" or "rules of thumb" that prioritize choices in favor of those that are more likely to reach a goal and to do so in fewer steps. In some search methodologies heuristics can also serve to entirely eliminate some choices that are unlikely to lead to a goal (called "pruning the search tree"). Heuristics supply the program with a "best guess" for the path on which the solution lies.[144] Heuristics limit the search for solutions to a smaller sample size.
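One widely used way to prioritize choices with a heuristic is A* search, sketched below. The article does not name a specific algorithm, so A* is a swapped-in example; the grid world, the Manhattan-distance heuristic, and the function name `a_star` are assumptions made for illustration.

```python
import heapq
from typing import Optional, Set, Tuple

Cell = Tuple[int, int]


def a_star(start: Cell, goal: Cell, walls: Set[Cell],
           width: int, height: int) -> Optional[int]:
    """Length of a shortest 4-connected path on a grid, or None if unreachable."""

    def heuristic(cell: Cell) -> int:
        # Manhattan distance: a "best guess" that never overestimates here.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    # Entries are (estimated total cost, cost so far, cell).
    frontier = [(heuristic(start), 0, start)]
    best_cost = {start: 0}
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell == goal:
            return cost
        if cost > best_cost[cell]:
            continue  # stale queue entry; a cheaper route was found later
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            inside = 0 <= nxt[0] < width and 0 <= nxt[1] < height
            if not inside or nxt in walls:
                continue
            new_cost = cost + 1
            if new_cost < best_cost.get(nxt, float("inf")):
                best_cost[nxt] = new_cost
                heapq.heappush(frontier, (new_cost + heuristic(nxt), new_cost, nxt))
    return None


if __name__ == "__main__":
    print(a_star((0, 0), (2, 2), {(1, 0), (1, 1)}, width=3, height=3))
```

The priority queue always expands the cell with the lowest estimated total cost, so cells that lead away from the goal are explored late or not at all.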

A very different kind of search came to prominence in the 1990s, based on the mathematical theory of optimization. For many problems, it is possible to begin the search with some form of a guess and then refine the guess incrementally until no more refinements can be made. These algorithms can be visualized as blind hill climbing: we begin the search at a random point on the landscape, and then, by jumps or steps, we keep moving our guess uphill, until we reach the top. Other optimization algorithms are simulated annealing, beam search and random optimization.[145]
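Blind hill climbing can be sketched on a one-dimensional integer landscape. This is a minimal illustration; the function names and the two example landscapes are hypothetical.

```python
from typing import Callable


def hill_climb(score: Callable[[int], float], start: int,
               step: int = 1, max_iters: int = 1000) -> int:
    """Move to the better neighbor until neither neighbor improves the score."""
    current = start
    for _ in range(max_iters):
        best = max((current - step, current + step), key=score)
        if score(best) <= score(current):
            return current  # no uphill move left: we are on a peak
        current = best
    return current


def landscape(x: int) -> float:
    """A single hill with its top at x = 7."""
    return -(x - 7) ** 2


def two_peaks(x: int) -> float:
    """A low hill at x = 2 and a higher one at x = 10."""
    return max(-(x - 2) ** 2 + 3, -(x - 10) ** 2 + 10)


if __name__ == "__main__":
    print(hill_climb(landscape, start=0))
    print(hill_climb(two_peaks, start=0))
```

On the second landscape the climber that starts at 0 stops on the lower hill, because it only ever looks one step ahead; this is one reason variants such as simulated annealing add randomness.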

[Figure: a particle swarm seeking the global minimum]

Evolutionary computation uses a form of optimization search. For example, an evolutionary algorithm may begin with a population of organisms (the guesses) and then allow them to mutate and recombine, selecting only the fittest to survive each generation (refining the guesses). Classic evolutionary algorithms include genetic algorithms, gene expression programming, and genetic programming.[146] Alternatively, distributed search processes can coordinate via swarm intelligence algorithms. Two popular swarm algorithms used in search are particle swarm optimization (inspired by bird flocking) and ant colony optimization (inspired by ant trails).[147][148]
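The mutate, recombine and select loop can be sketched as a small genetic algorithm over bit strings. This is a minimal sketch under stated assumptions: the population must hold at least four individuals of length two or more, the best individual is always carried over unchanged (elitism, a common but optional design choice), and the function name `evolve` is hypothetical.

```python
import random
from typing import Callable, List, Sequence


def evolve(population: Sequence[Sequence[int]],
           fitness: Callable[[List[int]], float],
           generations: int,
           rng: random.Random,
           mutation_rate: float = 0.05) -> List[int]:
    """Evolve bit strings and return the fittest individual found."""
    current = [list(individual) for individual in population]  # work on copies
    for _ in range(generations):
        current.sort(key=fitness, reverse=True)
        survivors = current[: len(current) // 2]       # selection: fitter half
        children = [survivors[0][:]]                    # elitism: keep the best
        while len(children) < len(current):
            mother, father = rng.sample(survivors, 2)
            cut = rng.randrange(1, len(mother))
            child = mother[:cut] + father[cut:]         # recombination
            child = [1 - gene if rng.random() < mutation_rate else gene
                     for gene in child]                 # mutation: flip some bits
            children.append(child)
        current = children
    return max(current, key=fitness)


if __name__ == "__main__":
    rng = random.Random(0)
    initial = [[rng.randint(0, 1) for _ in range(12)] for _ in range(8)]
    best = evolve(initial, fitness=sum, generations=30, rng=rng)
    print(best, sum(best))
```

Here fitness is simply the number of ones in the string. Because the best individual survives every generation, the best fitness can never decrease, although reaching the optimum is not guaranteed.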

Logic

Logic[150] is used for knowledge representation and problem solving, but it can be applied to other problems as well. For example, the satplan algorithm uses logic for planning[151] and inductive logic programming is a method for learning.[152]

Several different forms of logic are used in AI research. Propositional logic[153] involves truth functions such as "or" and "not". First-order logic[154] adds quantifiers and predicates, and can express facts about objects, their properties, and their relations with each other. Fuzzy set theory assigns a "degree of truth" (between 0 and 1) to vague statements such as "Alice is old" (or rich, or tall, or hungry) that are too linguistically imprecise to be completely true or false. Fuzzy logic is successfully used in control systems to allow experts to contribute vague rules such as "if you are close to the destination station and moving fast, increase the train's brake pressure"; these vague rules can then be numerically refined within the system. Fuzzy logic fails to scale well in knowledge bases; many AI researchers question the validity of chaining fuzzy-logic inferences.[155][156]

AI研究中使用了多种不同形式的逻辑。命题逻辑[153]包含诸如“或”和“否”这样的真值函数。一阶逻辑[154]增加了量词和谓词,可以表达关于对象、对象属性和对象之间的关系。模糊集合论给诸如“爱丽丝老了”(或是富有的、高的、饥饿的)这样模糊的表述赋予了一个“真实程度”(介于0到1之间),这些表述在语言上很模糊,不能完全判定为正确或错误。模糊逻辑在控制系统中得到了成功应用,使专家能够制定模糊规则,比如“如果你正以较快的速度接近终点站,那么就增加列车的制动压力”;这些模糊的规则可以在系统内用数值细化。但是,模糊逻辑无助于扩展知识库,许多AI研究者质疑把模糊逻辑和推理结合起来的有效性。模板:Efn[155][157]
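The train-braking rule can be made concrete with a small fuzzy-logic sketch. The membership functions and their thresholds (500 m, 20 to 80 km/h) are hypothetical values chosen for illustration, and the min/max/complement operators are one common choice of fuzzy connectives rather than the only one.

```python
def fuzzy_and(a, b):
    return min(a, b)          # a common choice for fuzzy conjunction

def fuzzy_or(a, b):
    return max(a, b)          # a common choice for fuzzy disjunction

def fuzzy_not(a):
    return 1.0 - a

def close_to_station(distance_m):
    """Degree of truth of 'close': 1.0 at the platform, 0.0 at 500 m or more (assumed)."""
    return max(0.0, min(1.0, 1.0 - distance_m / 500.0))

def moving_fast(speed_kmh):
    """Degree of truth of 'fast': 0.0 at 20 km/h or less, 1.0 at 80 km/h or more (assumed)."""
    return max(0.0, min(1.0, (speed_kmh - 20.0) / 60.0))

def brake_pressure(distance_m, speed_kmh, max_pressure=1.0):
    """Rule: IF close to the station AND moving fast THEN increase brake pressure."""
    rule_strength = fuzzy_and(close_to_station(distance_m), moving_fast(speed_kmh))
    return rule_strength * max_pressure

pressure = brake_pressure(distance_m=100, speed_kmh=80)   # close (0.8) and fast (1.0)
```

The rule fires to the degree that both conditions hold, so the output varies smoothly between no braking and full braking instead of switching at a hard threshold.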

Default logics, non-monotonic logics and circumscription[158] are forms of logic designed to help with default reasoning and the qualification problem. Several extensions of logic have been designed to handle specific domains of knowledge, such as: description logics;[39] situation calculus, event calculus and fluent calculus (for representing events and time);[40] causal calculus;[41] belief calculus (belief revision);[159] and modal logics.[160] Logics to model contradictory or inconsistent statements arising in multi-agent systems have also been designed, such as paraconsistent logics.

缺省逻辑 Default Logics、非单调逻辑 Non-monotonic Logics、限制逻辑 Circumscription和模态逻辑 Modal Logics[158],都用逻辑形式来解决缺省推理和限定问题。一些逻辑扩展被用于处理特定的知识领域,例如:描述逻辑 Description Logics[39] 、情景演算、事件演算、流态演算 Fluent Calculus(用于表示事件和时间)[40]、因果演算[41]、信念演算(信念修正)[161]、和模态逻辑[160]。人们也设计了对多主体系统中出现的矛盾或不一致陈述进行建模的逻辑,如次协调逻辑。

Probabilistic methods for uncertain reasoning

Many problems in AI (in reasoning, planning, learning, perception, and robotics) require the agent to operate with incomplete or uncertain information. AI researchers have devised a number of powerful tools to solve these problems using methods from probability theory and economics.[162]

AI中的许多问题(在推理、规划、学习、感知和机器人技术方面)要求主体在信息不完整或不确定的情况下进行操作。AI研究人员从概率论和经济学的角度设计了许多强大的工具来解决这些问题。

Bayesian networks[163] are a very general tool that can be used for various problems: reasoning (using the Bayesian inference algorithm),[164] learning (using the expectation-maximization algorithm),[165] planning (using decision networks)[166] and perception (using dynamic Bayesian networks).[167] Probabilistic algorithms can also be used for filtering, prediction, smoothing and finding explanations for streams of data, helping perception systems to analyze processes that occur over time (e.g., hidden Markov models or Kalman filters).[167] Compared with symbolic logic, formal Bayesian inference is computationally expensive. For inference to be tractable, most observations must be conditionally independent of one another. Complicated graphs with diamonds or other "loops" (undirected cycles) can require a sophisticated method such as Markov chain Monte Carlo, which spreads an ensemble of random walkers throughout the Bayesian network and attempts to converge to an assessment of the conditional probabilities. Bayesian networks are used on Xbox Live to rate and match players; wins and losses are "evidence" of how good a player is[citation needed]. AdSense uses a Bayesian network with over 300 million edges to learn which ads to serve.

贝叶斯网络 Bayesian Networks 是一个非常通用的工具,可用于各种问题: 推理(使用贝叶斯推断算法) ,学习(使用期望最大化算法) ,规划(使用决策网络)和感知(使用动态贝叶斯网络)。概率算法也可以用于滤波、预测、平滑和解释数据流,帮助传感系统分析随时间发生的过程(例如,隐马尔可夫模型或卡尔曼滤波器 Kalman Filters)。与符号逻辑相比,形式化的贝叶斯推断逻辑运算量很大。为了使推理易于处理,大多数观察值必须彼此条件独立。含有菱形或其他“圈”(无向循环)的复杂图形可能需要比如马尔科夫-蒙特卡罗图的复杂方法,这种方法将一组随机行走遍布整个贝叶斯网络,并试图收敛到对条件概率的评估。贝叶斯网络在 Xbox Live 上被用来评估和匹配玩家:胜率是证明一个玩家有多有优秀的“证据”。AdSense使用一个有超过3亿条边的贝叶斯网络来学习如何推送广告的。
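The simplest case of Bayesian inference, a single hidden variable updated by observations, can be sketched as follows. The scenario (a player who is either "strong" or "weak") and all probabilities are hypothetical, and this is not a description of any production rating system; it only shows how conditionally independent evidence is folded in one observation at a time.

```python
def posterior(prior, likelihood_if_true, likelihood_if_false):
    """Bayes' rule for a binary hypothesis H given one piece of evidence E."""
    evidence = likelihood_if_true * prior + likelihood_if_false * (1.0 - prior)
    return likelihood_if_true * prior / evidence

# Assumed numbers: a strong player wins 80% of games, a weak player 40%.
P_WIN_IF_STRONG = 0.8
P_WIN_IF_WEAK = 0.4

belief = 0.5                                              # prior: no idea whether the player is strong
after_one_win = posterior(belief, P_WIN_IF_STRONG, P_WIN_IF_WEAK)
# Treating games as conditionally independent given skill, the old posterior
# simply becomes the new prior for the next observation.
after_two_wins = posterior(after_one_win, P_WIN_IF_STRONG, P_WIN_IF_WEAK)
```

With these numbers the belief that the player is strong rises from 1/2 to 2/3 after one win and to 4/5 after two.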

A key concept from the science of economics is "utility": a measure of how valuable something is to an intelligent agent. Precise mathematical tools have been developed that analyze how an agent can make choices and plan, using decision theory, decision analysis,[168] and information value theory.[67] These tools include models such as Markov decision processes,[169] dynamic decision networks,[167] game theory and mechanism design.[170]

经济学中的一个关键概念是“效用” :这是一种衡量某物对于一个智能主体的价值的方法。人们运用决策理论、决策分析和信息价值理论开发出了精确的数学工具来分析智能主体应该如何选择和计划。这些工具包括马尔可夫决策过程、动态决策网络、博弈论和机制设计等模型。
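A minimal sketch of utility-based choice: each action leads to outcomes with probabilities and utilities, and the agent picks the action with the highest expected utility. The action names and numbers are invented for illustration.

```python
def expected_utility(outcomes):
    """outcomes: list of (probability, utility) pairs for one action."""
    return sum(p * u for p, u in outcomes)

def best_action(actions):
    """Return the name of the action whose expected utility is highest."""
    return max(actions, key=lambda name: expected_utility(actions[name]))

# Hypothetical decision problem.
actions = {
    "safe":  [(1.0, 5.0)],                 # certain payoff of 5
    "risky": [(0.5, 12.0), (0.5, 0.0)],    # coin flip between 12 and 0
}
choice = best_action(actions)
```

Here the risky action has expected utility 6 and is preferred over the certain 5. Models such as Markov decision processes extend the same idea to sequences of decisions.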

Classifiers and statistical learning methods

The simplest AI applications can be divided into two types: classifiers ("if shiny then diamond") and controllers ("if shiny then pick up"). Controllers do, however, also classify conditions before inferring actions, and therefore classification forms a central part of many AI systems. Classifiers are functions that use pattern matching to determine a closest match. They can be tuned according to examples, making them very attractive for use in AI. These examples are known as observations or patterns. In supervised learning, each pattern belongs to a certain predefined class. A class can be seen as a decision that has to be made. All the observations combined with their class labels are known as a data set. When a new observation is received, that observation is classified based on previous experience.[171]

最简单的AI应用程序可以分为两类:分类器 Classifiers(“若闪光,则为钻石”)和控制器 Controllers(“若闪光,则捡起来”)。然而,控制器在推断动作前也会对条件进行分类,因此分类构成了许多AI系统的核心部分。分类器是一组使用模式匹配来判断最接近匹配的函数。它们可以根据样例进行调优,因此在AI应用中很有吸引力。这些样例被称为“观察”或“模式”。在监督学习中,每个模式都属于某个预定义的类别。可以把一个类看作是一个必须做出的决定。所有的样例和它们对应的类别标签合称为数据集。当接收到一个新样例时,分类器会根据以前的经验对它进行分类。[171]

--Thingamabob(讨论) 分类器(“ if shiny then diamond”)和控制器(“ if shiny then pick up”) 一句不能准确翻译

--Ricky(讨论)已解决。这里主要是突出分类器的工作是下判断,而控制器的工作是做动作。

A classifier can be trained in various ways; there are many statistical and machine learning approaches. The decision tree[172] is perhaps the most widely used machine learning algorithm. Other widely used classifiers are the neural network,[173] the k-nearest neighbor algorithm, kernel methods such as the support vector machine (SVM), the Gaussian mixture model,[174] and the extremely popular naive Bayes classifier.[175] Classifier performance depends greatly on the characteristics of the data to be classified, such as the dataset size, distribution of samples across classes, the dimensionality, and the level of noise. Model-based classifiers perform well if the assumed model is an extremely good fit for the actual data. Otherwise, if no matching model is available, and if accuracy (rather than speed or scalability) is the sole concern, conventional wisdom is that discriminative classifiers (especially SVM) tend to be more accurate than model-based classifiers such as "naive Bayes" on most practical data sets.[176]

分类器可以通过多种方式进行训练,比如许多统计学和机器学习方法。决策树可能是应用最广泛的机器学习算法。其他使用广泛的分类器还有神经网络[173]、K最近邻算法[177]、核方法(比如支持向量机)[178]、高斯混合模型 Gaussian Mixture Model[174],以及非常流行的朴素贝叶斯分类器 Naive Bayes Classifier[175]。分类器的分类效果在很大程度上取决于待分类数据的特征,如数据集的大小、样本跨类别的分布、维数和噪声水平。如果假设的模型很符合实际数据,则基于这种模型的分类器就能给出很好的结果。否则,传统观点认为,如果没有匹配模型可用,而且只关心准确性(而不是速度或可扩展性),在大多数实际数据集上判别式分类器(尤其是支持向量机)往往比基于模型的分类器(如“朴素贝叶斯”)更准确。[176]
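As a small illustration of classifying a new observation "based on previous experience", the sketch below implements a k-nearest-neighbor classifier over a labeled data set. The two features (shininess and hardness), the labels, and every data point are hypothetical; they merely echo the "if shiny then diamond" example.

```python
import math
from collections import Counter

def knn_classify(dataset, query, k=3):
    """Label a query point by majority vote among its k closest labeled observations."""
    neighbors = sorted(dataset, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Hypothetical data set: (shininess, hardness) -> class label.
dataset = [
    ((0.90, 0.80), "diamond"),
    ((0.80, 0.90), "diamond"),
    ((0.95, 0.70), "diamond"),
    ((0.20, 0.10), "pebble"),
    ((0.10, 0.30), "pebble"),
    ((0.30, 0.20), "pebble"),
]

label_shiny = knn_classify(dataset, (0.85, 0.85))
label_dull = knn_classify(dataset, (0.15, 0.20))
```

No explicit model is fitted here: the stored observations themselves act as the classifier, which is why performance depends so strongly on data set size, noise, and dimensionality.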

Artificial neural networks

A neural network is an interconnected group of nodes, akin to the vast network of neurons in the human brain.

Neural networks were inspired by the architecture of neurons in the human brain. A simple "neuron" N accepts input from other neurons, each of which, when activated (or "fired"), casts a weighted "vote" for or against whether neuron N should itself activate. Learning requires an algorithm to adjust these weights based on the training data; one simple algorithm (dubbed "fire together, wire together") is to increase the weight between two connected neurons when the activation of one triggers the successful activation of another. The neural network forms "concepts" that are distributed among a subnetwork of shared neurons that tend to fire together; a concept meaning "leg" might be coupled with a subnetwork meaning "foot" that includes the sound for "foot". Neurons have a continuous spectrum of activation; in addition, neurons can process inputs in a nonlinear way rather than weighing straightforward votes. Modern neural networks can learn both continuous functions and, surprisingly, digital logical operations. Neural networks' early successes included predicting the stock market and (in 1995) a mostly self-driving car. In the 2010s, advances in neural networks using deep learning thrust AI into widespread public consciousness and contributed to an enormous upshift in corporate AI spending; for example, AI-related M&A in 2017 was over 25 times as large as in 2015.[179][180]

神经网络的诞生受到人脑神经元结构的启发。一个简单的“神经元”N 接受来自其他神经元的输入,每个神经元在被激活(或者说“放电”)时,都会对N是否应该被激活投出一张带权重的“票”。学习的过程需要一个根据训练数据调整这些权重的算法:一个被称为“相互放电,彼此联系”的简单算法会在一个神经元的激活触发另一个神经元的成功激活时,增加这两个相连神经元之间的权重。神经网络会形成分布在共享神经元子网络中的“概念”,这些神经元往往一起放电;“腿”的概念可能和“脚”概念的子网络相结合,后者包括“脚”的发音。神经元有一个连续的激活频谱;此外,神经元还可以用非线性的方式处理输入,而不是简单地加权投票。现代神经网络既可以学习连续函数,也可以学习数字逻辑运算。神经网络早期的成功包括预测股票市场和(1995年)基本实现自动驾驶的汽车。2010年代,神经网络使用深度学习取得巨大进步,也因此将AI推向了公众视野,并促使企业对AI的投资急速增加;例如2017年与AI相关的并购交易规模是2015年的25倍多。[181][182]

The study of non-learning artificial neural networks[173] began in the decade before the field of AI research was founded, in the work of Walter Pitts and Warren McCulloch. Frank Rosenblatt invented the perceptron, a learning network with a single layer, similar to the old concept of linear regression. Early pioneers also include Alexey Grigorevich Ivakhnenko, Teuvo Kohonen, Stephen Grossberg, Kunihiko Fukushima, Christoph von der Malsburg, David Willshaw, Shun-Ichi Amari, Bernard Widrow, John Hopfield, Eduardo R. Caianiello, and others[citation needed].

对非学习型人工神经网络[173]的研究始于AI研究领域成立之前的十年,见于沃尔特·皮茨和沃伦·麦卡洛克的工作。弗兰克·罗森布拉特发明了感知机 Perceptron,这是一个单层的学习网络,类似于旧有的线性回归概念。早期的先驱者还包括 Alexey Grigorevich Ivakhnenko,Teuvo Kohonen,Stephen Grossberg,Kunihiko Fukushima,Christoph von der Malsburg,David Willshaw,Shun-Ichi Amari,Bernard Widrow,John Hopfield,Eduardo R. Caianiello 等人。
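The single-layer perceptron mentioned above can be sketched with the classic error-driven update rule. The training data (a logical AND gate), the learning rate of 1, and the 20-epoch budget are assumptions chosen so the example stays tiny; the point is that a single layer is enough to learn a digital logical operation that is linearly separable.

```python
def predict(weights, bias, x):
    """Threshold unit: fire (1) if the weighted sum of inputs exceeds zero."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0

def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron rule: nudge the weights toward the target whenever the output is wrong."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias

# Hypothetical training set: the truth table of logical AND.
AND_GATE = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_GATE)
```

A function that is not linearly separable, such as XOR, cannot be learned by this single unit, which is one reason multi-layer networks matter.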

The main categories of networks are acyclic or feedforward neural networks (where the signal passes in only one direction) and recurrent neural networks (which allow feedback and short-term memories of previous input events). Among the most popular feedforward networks are perceptrons, multi-layer perceptrons and radial basis networks.[183] Neural networks can be applied to the problem of intelligent control (for robotics) or learning, using such techniques as Hebbian learning ("fire together, wire together"), GMDH or competitive learning.[184]

网络主要分为非循环或前馈神经网络 Acyclic or Feedforward Neural Networks(信号只向一个方向传递)和循环神经网络 Recurrent Neural Network (允许反馈和对以前的输入事件进行短期记忆)。其中最常用的前馈网络.[183]有感知机、多层感知机 Multi-layer Perceptrons 和径向基网络 Radial Basis Networks。使用赫布型学习 Hebbian Learning (“相互放电,共同链接”) ,GMDH 或竞争学习等技术的神经网络可以被应用于智能控制(机器人)或学习问题。[184]

Today, neural networks are often trained by the backpropagation algorithm, which had been around since 1970 as the reverse mode of automatic differentiation published by Seppo Linnainmaa,[185][186] and was introduced to neural networks by Paul Werbos.[187][188][189]

当下神经网络常用反向传播算法来训练。该算法自1970年起就已存在,即 Seppo Linnainmaa 发表的自动微分的反向模式[185][186],后由保罗·韦伯斯(Paul Werbos)引入神经网络。[187][188][189]

Hierarchical temporal memory is an approach that models some of the structural and algorithmic properties of the neocortex.[190]

层次化暂时性记忆是一种模拟大脑新皮层结构和算法特性的方法。[190]

To summarize, most neural networks use some form of gradient descent on a hand-created neural topology. However, some research groups, such as Uber, argue that simple neuroevolution to mutate new neural network topologies and weights may be competitive with sophisticated gradient descent approaches[citation needed]. One advantage of neuroevolution is that it may be less prone to get caught in "dead ends".[191]

总之,大多数神经网络都会在人工神经拓扑结构上使用某种形式的梯度下降法 Gradient Descent。然而,一些研究组,比如 Uber的,认为通过简单的神经进化改变新神经网络拓扑结构和神经元间的权重可能比复杂的梯度下降法更适用[citation needed]。神经进化的一个优势是,它不容易陷入“死胡同”。[192]

Deep feedforward neural networks

Deep learning is any artificial neural network that can learn a long chain of causal links. For example, a feedforward network with six hidden layers can learn a seven-link causal chain (six hidden layers + output layer) and has a "credit assignment path" (CAP) depth of seven[citation needed]. Many deep learning systems need to be able to learn chains ten or more causal links in length.[193] Deep learning has transformed many important subfields of artificial intelligence, including computer vision, speech recognition, natural language processing and others.[194][195][193]

深度学习是任何可以学习长因果链的人工神经网络。例如,一个具有六个隐藏层的前馈网络可以学习有七个链接的因果链(六个隐藏层 + 一个输出层) ,并且具深度为7的“信用分配路径 Credit Assignment Path,CAP ”。许多深度学习系统需要学习长度在十及以上的因果链。[194][195][193]

--Thingamabob(讨论)Credit Assignment Path未找到标准翻译

--Ricky(讨论)中文的翻译一般来说就是“信用分配路径”,但其实这里的credit指的是贡献、声誉等。整个CAP要解决的是整条链路种每个神经元对最终结果的贡献是多少。

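According to one overview,[196] the expression "Deep Learning" was introduced to the machine learning community by Rina Dechter in 1986[197] and gained traction after Igor Aizenberg and colleagues introduced it to artificial neural networks in 2000.[198] The first functional Deep Learning networks were published by Alexey Grigorevich Ivakhnenko and V. G. Lapa in 1965.[199]

These networks were trained one layer at a time. Ivakhnenko's 1971 paper describes the learning of a deep feedforward multilayer perceptron with eight layers, already much deeper than many later networks.[200] In 2006, a publication by Geoffrey Hinton and Ruslan Salakhutdinov introduced another way of pre-training many-layered feedforward neural networks (FNNs) one layer at a time, treating each layer in turn as an unsupervised restricted Boltzmann machine and then using supervised backpropagation for fine-tuning. Like shallow artificial neural networks, deep neural networks can model complex non-linear relationships. Over the last few years, advances in both machine learning algorithms and computer hardware have led to more efficient methods for training deep neural networks that contain many layers of non-linear hidden units and a very large output layer.[201]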

Deep learning often uses convolutional neural networks (CNNs), whose origins can be traced back to the Neocognitron introduced by Kunihiko Fukushima in 1980.[202] In 1989, Yann LeCun and colleagues applied backpropagation to such an architecture. In the early 2000s, in an industrial application, CNNs already processed an estimated 10% to 20% of all the checks written in the US.[203]

深度学习通常使用'卷积神经网络 ConvolutionalNeural Networks CNNs ,其起源可以追溯到1980年由福岛邦彦引进的新认知机。1989年扬·勒丘恩(Yann LeCun)和他的同事将反向传播算法应用于这样的架构。在21世纪初,在一项工业应用中,CNNs已经处理了美国大约10% 到20%的签发支票。

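Since 2011, fast implementations of CNNs on GPUs have won many visual pattern recognition competitions.[193]

In 2016, Deepmind's "AlphaGo Lee", which used CNNs with 12 convolutional layers together with reinforcement learning, beat a top Go champion.[204]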
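The core operation inside a convolutional layer can be sketched in plain Python: a small kernel slides over the image and produces one weighted sum per position. This is a bare illustration under simplifying assumptions (single channel, no padding, stride 1, no learned weights); the toy image and edge-detecting kernel are invented for the example.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (lists of rows) and return the map of weighted sums.

    As in most CNN libraries, the kernel is not flipped, so strictly speaking
    this is a cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# Toy image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
edge_kernel = [[-1, 1]]                 # responds where brightness increases left to right
feature_map = conv2d_valid(image, edge_kernel)
```

The feature map is non-zero only at the column where the dark-to-bright edge sits. In a trained CNN the kernel values are learned by backpropagation instead of being written by hand.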

Deep recurrent neural networks

Early on, deep learning was also applied to sequence learning with recurrent neural networks (RNNs)[205] which are in theory Turing complete[206] and can run arbitrary programs to process arbitrary sequences of inputs. The depth of an RNN is unlimited and depends on the length of its input sequence; thus, an RNN is an example of deep learning.[193] RNNs can be trained by gradient descent[207][208][209] but suffer from the vanishing gradient problem.[194][210] In 1992, it was shown that unsupervised pre-training of a stack of recurrent neural networks can speed up subsequent supervised learning of deep sequential problems.[211]

早期,深度学习也被用于循环神经网络 Recurrent Neural Networks,RNNs 的序列学习[212]。循环神经网络在理论上是图灵完备的,可以运行任意程序来处理任意的输入序列。一个循环神经网络的深度是无限制的,取决于其输入序列的长度;因此,循环神经网络是一个深度学习的例子[193]。循环神经网络可以用梯度下降法训练[213][214][215],但却存在梯度消失问题[194][210]。1992年的一项研究表明,对一组堆叠的循环神经网络进行无监督预训练,可以加速后续的深度序列问题的监督式学习。[211]
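The vanishing gradient problem can be illustrated with a deliberately simplified scalar model: when an error signal is propagated back through many time steps, it is multiplied at each step by the recurrent weight times the slope of the activation function. The specific weight (0.9) and slope (0.25, the maximum slope of the logistic sigmoid) are assumed values for the sketch.

```python
def gradient_through_time(recurrent_weight, activation_slope, steps):
    """Magnitude of a gradient after being backpropagated through `steps` time steps."""
    grad = 1.0
    for _ in range(steps):
        grad *= recurrent_weight * activation_slope   # one chain-rule factor per time step
    return grad

short_range = gradient_through_time(0.9, 0.25, steps=1)
long_range = gradient_through_time(0.9, 0.25, steps=50)
```

After 50 steps the factor has shrunk far below any useful scale, so inputs from the distant past contribute almost nothing to the weight update. Gated architectures such as LSTM were designed to ease this.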

Numerous researchers now use variants of a deep learning recurrent NN called the long short-term memory (LSTM) network published by Hochreiter & Schmidhuber in 1997.[216] LSTM is often trained by Connectionist Temporal Classification (CTC).[217] At Google, Microsoft and Baidu this approach has revolutionized speech recognition. For example, in 2015 Google's speech recognition experienced a dramatic performance jump of 49% through a trained LSTM, which is now available through Google Voice to billions of smartphone users. Google also used LSTM to improve machine translation, language modeling and multilingual language processing. LSTM combined with CNNs also improved automatic image captioning and many other applications.

While projects such as AlphaZero have succeeded in generating their own knowledge from scratch, many other machine learning projects require large training datasets.[218][219] Researcher Andrew Ng has suggested, as a "highly imperfect rule of thumb", that "almost anything a typical human can do with less than one second of mental thought, we can probably now or in the near future automate using AI."[220] Moravec's paradox suggests that AI lags humans at many tasks that the human brain has specifically evolved to perform well.[77]

AI和电或蒸汽机一样,是一种通用技术。AI 擅长什么样的任务,这个问题尚未达成共识[221]。虽然像 AlphaZero 这样的项目已经能做到从零开始产生知识,但是许多其他的机器学习项目仍需要大量的训练数据集[222][223]。研究人员吴恩达认为,作为一个“极不完美的经验法则”,“几乎任何普通人只需要不到一秒钟的思考就能做到的事情,我们现在或者在不久的将来都可以使用AI做到。”莫拉维克悖论表明,AI在执行许多人类大脑专门进化出来的、能够很好完成的任务时表现不如人类。[224]

Games provide a well-publicized benchmark for assessing rates of progress. AlphaGo around 2016 brought the era of classical board-game benchmarks to a close. Games of imperfect knowledge provide new challenges to AI in the area of game theory.[225][226] E-sports such as StarCraft continue to provide additional public benchmarks.[227][228] There are many competitions and prizes, such as the Imagenet Challenge, to promote research in artificial intelligence. The most common areas of competition include general machine intelligence, conversational behavior, data-mining, robotic cars, and robot soccer as well as conventional games.[229]

游戏是评估进步速度的一个广泛认可的基准。2016年前后,AlphaGo 为传统棋类基准的时代拉下了终幕。不过,不完全知识的游戏给AI在博弈论领域提出了新的挑战。[230][231] 星际争霸等电子竞技现在仍然是一项公众基准。[232][233] 现在有许多比赛和奖项(如 Imagenet 挑战赛)来促进AI研究。最常见的比赛内容包括通用机器智能、对话行为、数据挖掘、机器人汽车、机器人足球以及传统游戏。[234]

The "imitation game" (an interpretation of the 1950 Turing test that assesses whether a computer can imitate a human) is nowadays considered too exploitable to be a meaningful benchmark.[235] A derivative of the Turing test is the Completely Automated Public Turing test to tell Computers and Humans Apart (CAPTCHA). As the name implies, this helps to determine that a user is an actual person and not a computer posing as a human. In contrast to the standard Turing test, CAPTCHA is administered by a machine and targeted to a human as opposed to being administered by a human and targeted to a machine. A computer asks a user to complete a simple test then generates a grade for that test. Computers are unable to solve the problem, so correct solutions are deemed to be the result of a person taking the test. A common type of CAPTCHA is the test that requires the typing of distorted letters, numbers or symbols that appear in an image undecipherable by a computer.

“模仿游戏”(对1950年图灵测试的一种解释,用来评估计算机是否可以模仿人类)如今被认为过于灵活,所以不能成为有一项意义的基准[236]。图灵测试衍生出了验证码 Completely Automated Public Turing test to tell Computers and Humans Apart,CAPTCHA(即全自动区分计算机和人类的图灵测试),顾名思义,这有助于确定用户是一个真实的人,而不是一台伪装成人的计算机。与标准的图灵测试不同,CAPTCHA 是由机器控制,面向人测试,而不是由人控制的,面向机器测试的。计算机要求用户完成一个简单的测试,然后给测试评出一个等级。计算机无法解决这个问题,所以一般认为只有人参加测试才能得出正确答案。验证码的一个常见类型是要求输入一幅计算机无法破译的图中扭曲的字母,数字或符号测试。模板:Sfn

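Proposed "universal intelligence" tests aim to compare how well machines, humans, and even non-human animals perform on problem sets that are as generic as possible. At an extreme, the test suite can contain every possible problem, weighted by Kolmogorov complexity; however, such problem sets tend to be dominated by undemanding pattern-matching exercises in which a tuned AI can easily exceed human performance.[237][238]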

Applications

[[File:Automated online assistant.png|thumb|An automated online assistant providing customer service on a web page – one of many very primitive applications of artificial intelligence]]

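AI is relevant to any intellectual task. Modern artificial intelligence techniques are pervasive[239] and are too numerous to list here. Frequently, when a technique reaches mainstream use, it is no longer considered artificial intelligence; this phenomenon is described as the AI effect.

High-profile examples of AI include autonomous vehicles (such as drones and self-driving cars), medical diagnosis, creating art (such as poetry), proving mathematical theorems, playing games (such as chess or Go), search engines (such as Google Search), online assistants (such as Siri), image recognition, spam filtering, predicting flight delays,[240] prediction of judicial decisions,[241] targeting online advertisements,[242][243] and energy storage.[244]

With social media sites overtaking television as a source of news for young people, and news organizations increasingly reliant on social media platforms for distribution,[245] major publishers now use AI technology to post stories more efficiently and generate higher volumes of traffic.[246]

AI can also produce deepfakes, a content-altering technology. ZDNet reports, "It presents something that did not actually occur." Although 88% of Americans believe deepfakes can cause more harm than good, only 47% of them believe they themselves could be targeted. The election-year boom has also opened public discourse to the threat of falsified videos of politicians.[247]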

Healthcare

[[File:Laproscopic Surgery Robot.jpg|thumb| A patient-side surgical arm of Da Vinci Surgical System]]

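In healthcare, AI is often used for classification, whether to automate the initial evaluation of a CT scan or EKG or to identify high-risk patients in population health surveys. The breadth of applications is rapidly increasing.

As an example, research suggests that AI could save $16 billion on costly dosage issues. In 2016, a groundbreaking study in California reported that a mathematical formula developed with the help of AI determined the accurate dose of immunosuppressant drugs to give to organ patients.[248]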

--Thingamabob(讨论) 例如研究结果表明,AI在高成本的剂量问题上可以节省160亿美元。 为省译

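AI is also assisting doctors. According to Bloomberg Technology, Microsoft has developed AI to help doctors find the right treatments for cancer.[249] A great deal of research and drug development relates to cancer; specifically, there are more than 800 medicines and vaccines to treat it. This is a burden for doctors, because there are too many options to choose from, which makes it harder to select the right drugs for a patient. Microsoft is working on a machine called "Hanover". Its goal is to memorize all the papers relevant to cancer and help predict which combinations of drugs will be most effective for each patient. One project currently under way targets myeloid leukemia, a fatal cancer whose treatment has not improved in decades. Another study reportedly found that AI was as good as trained doctors at identifying skin cancers.[250] Another study uses AI to monitor multiple high-risk patients by asking each patient numerous questions generated from data on doctor-patient interactions.[251] One study used transfer learning: the machine performed a diagnosis comparable to that of a well-trained ophthalmologist and could decide within 30 seconds whether the patient should be referred for treatment, with more than 95% accuracy.[252]

According to CNN, a recent study by surgeons at the Children's National Medical Center in Washington successfully demonstrated surgery with an autonomous robot. The team supervised the robot while it performed soft-tissue surgery, stitching together a pig's bowel during open surgery, and claimed it did so better than a human surgeon.[253] IBM has created its own AI computer, IBM Watson, which has beaten human intelligence at some levels. Watson has struggled to achieve success and adoption in healthcare.[254]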

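Automotive

Advances in AI have contributed to the growth of the automotive industry through the creation and evolution of self-driving vehicles. More than 30 companies currently use AI to develop self-driving cars, including Tesla, Google, and Apple.[255]

Many components contribute to the functioning of self-driving cars. These vehicles incorporate systems such as braking, lane changing, collision prevention, navigation and mapping. Together, these systems and high-performance computers are integrated into one complex vehicle.[256]

Recent developments in autonomous automobiles have made self-driving trucks possible, though they are still in the testing phase. The UK government has passed legislation to begin testing self-driving truck platoons in 2018.[257] A self-driving truck platoon is a fleet of self-driving trucks following the lead of one non-self-driving truck, so the platoons are not yet entirely autonomous. Meanwhile, Daimler, a German automobile corporation, is testing the Freightliner Inspiration, a semi-autonomous truck that will be used only on highways.[258]

One main factor that influences the performance of a driverless automobile is mapping. In general, the vehicle is pre-programmed with a map of the area being driven. This map includes approximate data on street light and curb heights so that the vehicle can be aware of its surroundings. However, Google has been working on an algorithm intended to eliminate the need for pre-programmed maps and instead create a device able to adjust to a variety of new surroundings.[259] Some self-driving cars are not equipped with steering wheels or brake pedals, so there has also been research on algorithms that maintain a safe environment for passengers through awareness of speed and driving conditions.[260]

Another factor that influences the ability of a driverless automobile is passenger safety. Engineers must program a driverless automobile to handle high-risk situations, such as a head-on collision with pedestrians. The car's main goal should be to make a decision that avoids hitting the pedestrians and protects the passengers in the car. But the car may sometimes have to make a decision that puts someone in danger. In other words, the car would need to decide whether to save the pedestrians or the passengers.[261] The programming of the car in these situations is crucial to a successful driverless automobile.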

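Finance and economics

Financial institutions have long used artificial neural network systems to detect charges or claims outside the norm, flagging these for human investigation. The use of AI in banking can be traced back to 1987, when Security Pacific National Bank in the US set up a fraud prevention task force to counter the unauthorized use of debit cards.[262] Programs such as Kasisto and Moneystream are applying AI in financial services.

Banks today use AI systems to organize operations, maintain bookkeeping, invest in stocks, and manage properties. AI can react to sudden changes and to events that occur when business is not taking place.[263] In August 2001, robots beat humans in a simulated financial trading competition.[264] AI has also reduced fraud and financial crime by monitoring users' behavioral patterns for abnormal changes or anomalies.[265][266][267]

AI is increasingly being used by corporations. Jack Ma has controversially predicted that AI CEOs are 30 years away.[268][269]

The use of AI machines in the market, in applications such as online trading and decision making, has changed major economic theories.[270] For example, AI-based buying and selling platforms have changed the law of supply and demand, because individualized demand and supply curves can now be estimated easily, which enables individualized pricing. Furthermore, AI reduces information asymmetry in the market, making markets more efficient while reducing the volume of trades. AI also limits the consequences of behavior in the markets, again improving efficiency. Other theories affected by AI include rational choice, rational expectations, game theory, the Lewis turning point, portfolio optimization and counterfactual thinking. In August 2019, the AICPA introduced an AI training course for accounting professionals.[271]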

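Cybersecurity

The cybersecurity arena faces significant challenges from large-scale hacking attacks of various kinds that harm many organizations and cause billions of dollars in business damage. Security companies have begun to use AI and natural language processing (NLP), for example in SIEM (Security Information and Event Management) solutions. The more advanced of these solutions use AI and NLP to sort the data in networks into high-risk and low-risk information. This enables security teams to focus on the attacks that could do real harm to the organization, so that it does not become a victim of denial-of-service (DoS) attacks, malware and other attacks.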

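Government

AI in government consists of applications and regulation. AI paired with facial recognition systems may be used for mass surveillance. This is already the case in some parts of China.[272][273] An AI also competed in the 2018 Tama City mayoral election.

In 2019, Bengaluru, the tech city of India, was set to deploy AI-managed traffic signal systems across 387 traffic signals in the city. The system will use cameras to ascertain traffic density and, from that, calculate the time needed to clear the traffic volume, which determines the signal duration for vehicular traffic across streets.[274]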

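Law-related professions

AI is becoming a mainstay of law-related professions. In some circumstances, this analytics technology uses algorithms and machine learning to do work that was previously done by entry-level lawyers.

The Electronic Discovery (eDiscovery) industry has focused on machine learning (predictive coding / technology-assisted review), which is a subset of AI. Natural language processing (NLP) and automated speech recognition (ASR) are also in vogue in the industry.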

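Video games

In video games, AI is routinely used to generate dynamic, purposeful behavior in non-player characters (NPCs). In addition, simple AI techniques are routinely used for pathfinding. Some researchers consider NPC AI in games to be a "solved problem" for most production tasks. Games with more atypical AI include the AI director of Left 4 Dead (2008) and the neuroevolutionary training of platoons in Supreme Commander 2 (2010).[275][276]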

--Thingamabob(讨论) neuroevolutionary training of platoons 未找到标准翻译

--Ricky(讨论)platoons是指野战排,一个军事编制单位。neuroevolutionary training是指结合神经网络和演化计算的训练方式。这个词还不是一个很专业术语,没有标准翻译。

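Military

The United States and other nations are developing AI applications for a range of military functions.[277] The main military applications of AI and machine learning are to enhance C2, communications, sensors, integration and interoperability. AI research is underway in the fields of intelligence collection and analysis, logistics, cyber operations, information operations, command and control, and in a variety of semiautonomous and autonomous vehicles.[277] AI technologies enable coordination of sensors and effectors, threat detection and identification, marking of enemy positions, target acquisition, and coordination and deconfliction of distributed joint fires between networked combat vehicles and tanks, including inside manned and unmanned teams (MUM-T).[278] AI has been incorporated into military operations in Iraq and Syria.[277]

Worldwide annual military spending on robotics rose from US$5.1 billion in 2010 to US$7.5 billion in 2015.[279][280] Military drones capable of autonomous action are widely considered a useful asset.[281] Many AI researchers seek to distance themselves from military applications of AI.[282]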

Hospitality

In the hospitality industry, Artificial Intelligence based solutions are used to reduce staff load and increase efficiency[283] by cutting repetitive tasks frequency, trends analysis, guest interaction, and customer needs prediction.[284] Hotel services backed by Artificial Intelligence are represented in the form of a chatbot,[285] application, virtual voice assistant and service robots.

在待客行业,基于AI的解决方案通过减少重复性任务的频率、分析趋势、与客户活动和预测客户需求来减少员工负担和提高效率[286][287]。使用AI的酒店服务以聊天机器人、应用程序、虚拟语音助手和服务机器人的形式呈现[288]。

Audit

For financial statements audit, AI makes continuous audit possible. AI tools could analyze many sets of different information immediately. The potential benefit would be the overall audit risk will be reduced, the level of assurance will be increased and the time duration of audit will be reduced.[289]

在财务报表审计这方面,AI 使得持续审计成为可能。AI工具可以迅速分析多组不同的信息。AI应用带来的可能的好处包括减少总体审计风险,提高审计水平,缩短审计时间。[290]

Advertising

It is possible to use AI to predict or generalize the behavior of customers from their digital footprints in order to target them with personalized promotions or build customer personas automatically.[291] A documented case reports that online gambling companies were using AI to improve customer targeting.[292]

AI通过客户的数字足迹预测或归纳客户的行为,投放定制广告或者自动构建顾客画像[291]。有记录报告称,线上赌博公司正在使用AI来改进客户定位功能。[293]

Moreover, the application of Personality computing AI models can help reducing the cost of advertising campaigns by adding psychological targeting to more traditional sociodemographic or behavioral targeting.[294]

此外,个性计算AI模型通过结合心理定位和传统的社会人口学或行为定位方法,帮助降低了广告投放的成本。[294]

Art

Artificial Intelligence has inspired numerous creative applications including its usage to produce visual art. The exhibition "Thinking Machines: Art and Design in the Computer Age, 1959–1989" at MoMA[295] provides a good overview of the historical applications of AI for art, architecture, and design. Recent exhibitions showcasing the usage of AI to produce art include the Google-sponsored benefit and auction at the Gray Area Foundation in San Francisco, where artists experimented with the DeepDream algorithm[296] and the exhibition "Unhuman: Art in the Age of AI," which took place in Los Angeles and Frankfurt in the fall of 2017.[297][298] In the spring of 2018, the Association of Computing Machinery dedicated a special magazine issue to the subject of computers and art highlighting the role of machine learning in the arts.[299] The Austrian Ars Electronica and Museum of Applied Arts, Vienna opened exhibitions on AI in 2019.[300][301] The Ars Electronica's 2019 festival "Out of the box" extensively thematized the role of arts for a sustainable societal transformation with AI.[302]

AI催生了许多在如视觉艺术等领域的创造性应用。在纽约现代艺术博物馆举办的“思考机器: 计算机时代的艺术与设计,1959-1989”展览概述了艺术、建筑和设计的历史中AI的应用[295]。最近的展览展示了AI在艺术创作中的应用[296],包括谷歌赞助的旧金山灰色地带基金会(Gray Area Foundation)的慈善拍卖会,艺术家们在拍卖会中尝试了 DeepDream 算法,以及2017年秋天在洛杉矶和法兰克福举办的“非人类: AI时代的艺术”展览.[297][298]。2018年春天,计算机协会发行了一期主题为计算机和艺术的特刊,着重展示了机器学习在艺术中的作用。奥地利电子艺术博物馆和维也纳应用艺术博物馆于2019年开设了AI展览[300][301]。2019年的电子艺术节 “Out of the box”将AI艺术在可持续社会转型中的作用变成了一个主题[302]。

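Philosophy and ethics

There are three philosophical questions related to AI:

- Is artificial general intelligence possible? Can a machine solve any problem that a human being can solve using intelligence? Or are there hard limits to what a machine can accomplish?

- Are intelligent machines dangerous? How can we ensure that machines behave ethically and that they are used ethically?

- Can a machine have a mind, consciousness and mental states in exactly the same sense that human beings do? Can a machine be sentient, and thus deserve certain rights? Can a machine intentionally cause harm?

The limits of artificial intelligence

Can a machine be intelligent? Can it "think"?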

- Alan Turing's "polite convention"

- We need not decide if a machine can "think"; we need only decide if a machine can act as intelligently as a human being. This approach to the philosophical problems associated with artificial intelligence forms the basis of the Turing test.[303]

阿兰·图灵的“礼貌惯例”:我们不需要决定一台机器是否可以“思考”;我们只需要决定一台机器是否可以像人一样聪明地行动。这个对AI相关哲学问题的回应成为了图灵测试的基础。

--Thingamabob(讨论)polite convention未找到标准翻译

- The Dartmouth proposal

- "Every aspect of learning or any other feature of intelligence can be so precisely described that a machine can be made to simulate it." This conjecture was printed in the proposal for the Dartmouth Conference of 1956.[304]

达特茅斯提案:达特茅斯会议提出: “可以通过准确地描述学习的每个方面或智能的任何特征,使得一台机器模拟学习和智能。”这个猜想被写在了1956年达特茅斯学院会议的提案中。

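- Newell and Simon's physical symbol system hypothesis

- "A physical symbol system has the necessary and sufficient means of general intelligent action." Newell and Simon argue that intelligence consists of formal operations on symbols.[305] Hubert Dreyfus argued that, on the contrary, human expertise depends on unconscious instinct rather than conscious symbol manipulation, and on having a "feel" for the situation rather than explicit symbolic knowledge. (See Dreyfus' critique of AI.)[306][307]

- Gödelian arguments

- Gödel himself,[308] John Lucas (in 1961) and Roger Penrose (in a more detailed argument from 1989 onwards) made highly technical arguments that human mathematicians can see the truth of their own "Gödel statements" and therefore have computational abilities beyond those of mechanical Turing machines.[309] However, some people do not agree with the Gödelian arguments.[310][311][312]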

- The artificial brain argument

- The brain can be simulated by machines and because brains are intelligent, simulated brains must also be intelligent; thus machines can be intelligent. Hans Moravec, Ray Kurzweil and others have argued that it is technologically feasible to copy the brain directly into hardware and software and that such a simulation will be essentially identical to the original.[101]

人工大脑的观点: 因为大脑可以被机器模拟,且大脑是智能的,模拟的大脑也必须是智能的;因此机器可以是智能的。汉斯·莫拉维克、雷·库兹韦尔和其他人认为,技术层面直接将大脑复制到硬件和软件上是可行的,而且这些拷贝在本质上和原来的大脑是没有区别的。

- The AI effect

- Machines are already intelligent, but observers have failed to recognize it. When Deep Blue beat Garry Kasparov in chess, the machine was acting intelligently. However, onlookers commonly discount the behavior of an artificial intelligence program by arguing that it is not "real" intelligence after all; thus "real" intelligence is whatever intelligent behavior people can do that machines still cannot. This is known as the AI Effect: "AI is whatever hasn't been done yet."

AI效应: 机器本来就是智能的,但是观察者却没有意识到这一点。当深蓝在国际象棋比赛中击败加里 · 卡斯帕罗夫时,机器就在做出智能行为。然而,旁观者通常对AI程序的行为不屑一顾,认为它根本不是“真正的”智能; 因此,“真正的”智能就是人任何类能够做到但机器仍然做不到的智能行为。这就是众所周知的AI效应: “AI就是一切尚未完成的事情"。

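Potential harm

Widespread use of AI could be dangerous or lead to unintended consequences. Scientists from the Future of Life Institute, among others, have described some short-term research goals: to see how AI influences the economy, to examine the laws and ethics involved with AI, and to work out how to minimize AI security risks. In the long term, the scientists have proposed to continue optimizing function while minimizing the possible security risks that come with new technologies.[313]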

The potential negative effects of AI and automation were a major issue for Andrew Yang's 2020 presidential campaign in the United States.[314] Irakli Beridze, Head of the Centre for Artificial Intelligence and Robotics at UNICRI, United Nations, has expressed that "I think the dangerous applications for AI, from my point of view, would be criminals or large terrorist organizations using it to disrupt large processes or simply do pure harm. [Terrorists could cause harm] via digital warfare, or it could be a combination of robotics, drones, with AI and other things as well that could be really dangerous. And, of course, other risks come from things like job losses. If we have massive numbers of people losing jobs and don't find a solution, it will be extremely dangerous. Things like lethal autonomous weapons systems should be properly governed — otherwise there's massive potential of misuse."[315]

AI和自动化潜在的负面影响在安德鲁·杨2020年竞选美国总统的过程中体现了出来[316] 。联合国人工智能与机器人中心主任伊拉克利·贝瑞德兹表示: ”我认为AI危害会体现在犯罪分子或大型恐怖组织利用AI破坏大型流程或通过数字战争造成损失,也可能是机器人、无人机、AI以及其他可能非常危险的东西的结合。当然,其还有失业等风险。如果大量的人失去工作,而且没有解决方案,这将是极其危险的。另外,致命的自动化武器系统之类的东西应该得到合适的控制,否则就可能会被大量滥用。”[317]

Existential risk

Physicist Stephen Hawking, Microsoft founder Bill Gates, and SpaceX founder Elon Musk have expressed concerns about the possibility that AI could evolve to the point that humans could not control it, with Hawking theorizing that this could "spell the end of the human race".[318][319][320]

In his book Superintelligence, philosopher Nick Bostrom provides an argument that artificial intelligence will pose a threat to humankind. He argues that sufficiently intelligent AI, if it chooses actions based on achieving some goal, will exhibit convergent behavior such as acquiring resources or protecting itself from being shut down. If this AI's goals do not fully reflect humanity's (one example is an AI told to compute as many digits of pi as possible), it might harm humanity in order to acquire more resources or prevent itself from being shut down, ultimately to better achieve its goal. Bostrom also emphasizes the difficulty of fully conveying humanity's values to an advanced AI. He uses the hypothetical example of giving an AI the goal to make humans smile to illustrate a misguided attempt. If the AI in that scenario were to become superintelligent, Bostrom argues, it may resort to methods that most humans would find horrifying, such as inserting "electrodes into the facial muscles of humans to cause constant, beaming grins" because that would be an efficient way to achieve its goal of making humans smile.[323] In his book Human Compatible, AI researcher Stuart J. Russell echoes some of Bostrom's concerns while also proposing an approach to developing provably beneficial machines focused on uncertainty and deference to humans,[324]:173 possibly involving inverse reinforcement learning.[324]:191–193

Concern over risk from artificial intelligence has led to some high-profile donations and investments. A group of prominent tech titans including Peter Thiel, Amazon Web Services and Musk have committed $1 billion to OpenAI, a nonprofit company aimed at championing responsible AI development.[326] The opinion of experts within the field of artificial intelligence is mixed, with sizable fractions both concerned and unconcerned by the prospect of AI that surpasses human capabilities.[327] Other technology industry leaders believe that AI is helpful in its current form and will continue to assist humans. Oracle CEO Mark Hurd has stated that AI will actually create more jobs rather than fewer, because humans will be needed to manage AI systems.[333] Facebook CEO Mark Zuckerberg believes AI will "unlock a huge amount of positive things," such as curing disease and increasing the safety of autonomous cars.[328] In January 2015, Musk donated $10 million to the Future of Life Institute to fund research on understanding AI decision making. The goal of the institute is to "grow wisdom with which we manage" the growing power of technology. Musk also funds companies developing artificial intelligence such as DeepMind and Vicarious to "just keep an eye on what's going on with artificial intelligence.[329] I think there is potentially a dangerous outcome there."[330][331]

For the danger of uncontrolled advanced AI to be realized, the hypothetical AI would have to overpower or out-think all of humanity, which a minority of experts argue is a possibility far enough in the future to not be worth researching.[338][339] Other counterarguments revolve around humans being either intrinsically or convergently valuable from the perspective of an artificial intelligence.[340]


Devaluation of humanity

Joseph Weizenbaum wrote that AI applications cannot, by definition, successfully simulate genuine human empathy and that the use of AI technology in fields such as customer service or psychotherapy[341] was deeply misguided. Weizenbaum was also bothered that AI researchers (and some philosophers) were willing to view the human mind as nothing more than a computer program (a position now known as computationalism). To Weizenbaum these points suggest that AI research devalues human life.[342]

Social justice

One concern is that AI programs may be programmed to be biased against certain groups, such as women and minorities, because most of the developers are wealthy Caucasian men.[344] Support for artificial intelligence is higher among men (with 47% approving) than women (35% approving).

Algorithms already have a host of applications in today's legal system, assisting officials ranging from judges to parole officers and public defenders in gauging the predicted likelihood of recidivism of defendants.[346] COMPAS (an acronym for Correctional Offender Management Profiling for Alternative Sanctions) counts among the most widely utilized commercially available solutions.[346] It has been suggested that COMPAS assigns an exceptionally elevated risk of recidivism to black defendants while, conversely, ascribing a low risk estimate to white defendants significantly more often than statistically expected.[346]

Decrease in demand for human labor

The relationship between automation and employment is complicated. While automation eliminates old jobs, it also creates new jobs through micro-economic and macro-economic effects.[347] Unlike previous waves of automation, many middle-class jobs may be eliminated by artificial intelligence; The Economist states that "the worry that AI could do to white-collar jobs what steam power did to blue-collar ones during the Industrial Revolution" is "worth taking seriously".[348] Subjective estimates of the risk vary widely; for example, Michael Osborne and Carl Benedikt Frey estimate 47% of U.S. jobs are at "high risk" of potential automation, while an OECD report classifies only 9% of U.S. jobs as "high risk".[349][350][351] Jobs at extreme risk range from paralegals to fast food cooks, while job demand is likely to increase for care-related professions ranging from personal healthcare to the clergy.[352] Author Martin Ford and others go further and argue that many jobs are routine, repetitive and (to an AI) predictable; Ford warns that these jobs may be automated in the next couple of decades, and that many of the new jobs may not be "accessible to people with average capability", even with retraining. Economists point out that in the past technology has tended to increase rather than reduce total employment, but acknowledge that "we're in uncharted territory" with AI.[20]

Autonomous weapons

Currently, more than 50 countries are researching battlefield robots, including the United States, China, Russia, and the United Kingdom. Many people concerned about risk from superintelligent AI also want to limit the use of artificial soldiers and drones.[359]

Ethical machines

Machines with intelligence have the potential to use their intelligence to prevent harm and minimize risks; they may have the ability to use ethical reasoning to better choose their actions in the world. As such, there is a need for policy making that devises policies for, and regulates, artificial intelligence and robotics.[361] Research in this area includes machine ethics, artificial moral agents, and friendly AI; the building of a human rights framework is also under discussion.[362]

Artificial moral agents

Wendell Wallach introduced the concept of artificial moral agents (AMA) in his book Moral Machines.[365] For Wallach, AMAs have become a part of the research landscape of artificial intelligence as guided by its two central questions, which he identifies as "Does Humanity Want Computers Making Moral Decisions"[366] and "Can (Ro)bots Really Be Moral".[367] For Wallach, the question centers less on whether machines can demonstrate the equivalent of moral behavior than on the constraints which society may place on the development of AMAs.[368]

Machine ethics

The field of machine ethics is concerned with giving machines ethical principles, or a procedure for discovering a way to resolve the ethical dilemmas they might encounter, enabling them to function in an ethically responsible manner through their own ethical decision making.[370] The field was delineated in the AAAI Fall 2005 Symposium on Machine Ethics: "Past research concerning the relationship between technology and ethics has largely focused on responsible and irresponsible use of technology by human beings, with a few people being interested in how human beings ought to treat machines. In all cases, only human beings have engaged in ethical reasoning. The time has come for adding an ethical dimension to at least some machines. Recognition of the ethical ramifications of behavior involving machines, as well as recent and potential developments in machine autonomy, necessitate this. In contrast to computer hacking, software property issues, privacy issues and other topics normally ascribed to computer ethics, machine ethics is concerned with the behavior of machines towards human users and other machines. Research in machine ethics is key to alleviating concerns with autonomous systems—it could be argued that the notion of autonomous machines without such a dimension is at the root of all fear concerning machine intelligence. Further, investigation of machine ethics could enable the discovery of problems with current ethical theories, advancing our thinking about Ethics."[371] Machine ethics is sometimes referred to as machine morality, computational ethics or computational morality. A variety of perspectives on this nascent field can be found in the collected edition "Machine Ethics"[370] that stems from the AAAI Fall 2005 Symposium on Machine Ethics.[371]

Malevolent and friendly AI

Political scientist Charles T. Rubin believes that AI can be neither designed nor guaranteed to be benevolent.[372] He argues that "any sufficiently advanced benevolence may be indistinguishable from malevolence." Humans should not assume machines or robots would treat us favorably because there is no a priori reason to believe that they would be sympathetic to our system of morality, which has evolved along with our particular biology (which AIs would not share). Hyper-intelligent software may not necessarily decide to support the continued existence of humanity and would be extremely difficult to stop. This topic has also recently begun to be discussed in academic publications as a real source of risks to civilization, humans, and planet Earth.

One proposal to deal with this is to ensure that the first generally intelligent AI is "Friendly AI" and will be able to control subsequently developed AIs. Some question whether this kind of check could actually remain in place.